Evaluate AI Agents

Learn how to evaluate AI agents using built-in evaluators for quality, safety, and agent-specific behaviors. Learn how to evaluate AI agent performance using the four pillars framework: task success, tool quality, reasoning coherence, and cost efficiency.
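
The four pillars named above can be combined into a simple scorecard. The following is a minimal sketch, not the framework's official scoring method: the `PillarScores` class, the 0 to 1 scale, and the weights are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class PillarScores:
    """One score per pillar, each assumed to be normalized to the range 0..1."""
    task_success: float         # e.g. fraction of tasks completed correctly
    tool_quality: float         # e.g. fraction of tool calls that were appropriate
    reasoning_coherence: float  # e.g. rubric or judge score
    cost_efficiency: float      # e.g. 1.0 means well under budget


# Hypothetical weights; tune these to your own priorities.
DEFAULT_WEIGHTS = {
    "task_success": 0.4,
    "tool_quality": 0.3,
    "reasoning_coherence": 0.2,
    "cost_efficiency": 0.1,
}


def overall_score(scores: PillarScores, weights: dict = DEFAULT_WEIGHTS) -> float:
    """Weighted average of the four pillar scores."""
    total_weight = sum(weights.values())
    weighted = sum(getattr(scores, name) * w for name, w in weights.items())
    return weighted / total_weight


def weakest_pillar(scores: PillarScores) -> str:
    """Name of the lowest-scoring pillar, i.e. where to look first."""
    return min(DEFAULT_WEIGHTS, key=lambda name: getattr(scores, name))
```

Reporting the weakest pillar alongside the aggregate keeps a single high-level number from hiding a failing dimension.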

We see several common types of agents deployed at scale today, including coding agents, research agents, computer-use agents, and conversational agents. Each type may be deployed across a wide variety of industries, but they can be evaluated using similar techniques. Agent evaluation is the systematic process of measuring AI agent performance across technical capabilities, autonomy levels, and business outcomes. It has become a critical discipline.

Learn how to systematically evaluate, improve, and iterate on AI agents using structured assessments. Learn how to evaluate AI agent quality with practical metrics, testing strategies, and improvement frameworks, covering accuracy, latency, cost, and user satisfaction measurement.
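
As an illustration of the accuracy, latency, cost, and user satisfaction measurements mentioned above, here is a minimal sketch that summarizes a batch of agent runs. The `RunRecord` fields, the 1 to 5 rating scale, and the nearest-rank p95 method are assumptions made for this example, not something the source prescribes.

```python
import math
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class RunRecord:
    """One evaluated agent run (hypothetical schema)."""
    correct: bool                       # did the run produce an acceptable result?
    latency_s: float                    # wall-clock time in seconds
    cost_usd: float                     # total model and tool spend for the run
    user_rating: Optional[int] = None   # 1..5 if the user left a rating


def summarize(runs: list) -> dict:
    """Aggregate accuracy, latency, cost, and satisfaction over a batch of runs."""
    if not runs:
        raise ValueError("need at least one run")
    latencies = sorted(r.latency_s for r in runs)
    # Nearest-rank 95th percentile: smallest value with at least 95% of runs at or below it.
    p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)
    ratings = [r.user_rating for r in runs if r.user_rating is not None]
    return {
        "accuracy": sum(r.correct for r in runs) / len(runs),
        "latency_mean_s": mean(latencies),
        "latency_p95_s": latencies[p95_index],
        "cost_per_run_usd": mean(r.cost_usd for r in runs),
        "mean_rating": mean(ratings) if ratings else None,
    }
```

Tracking a tail latency such as p95 next to the mean is a common design choice, because a few slow runs can dominate the user experience without moving the average much.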

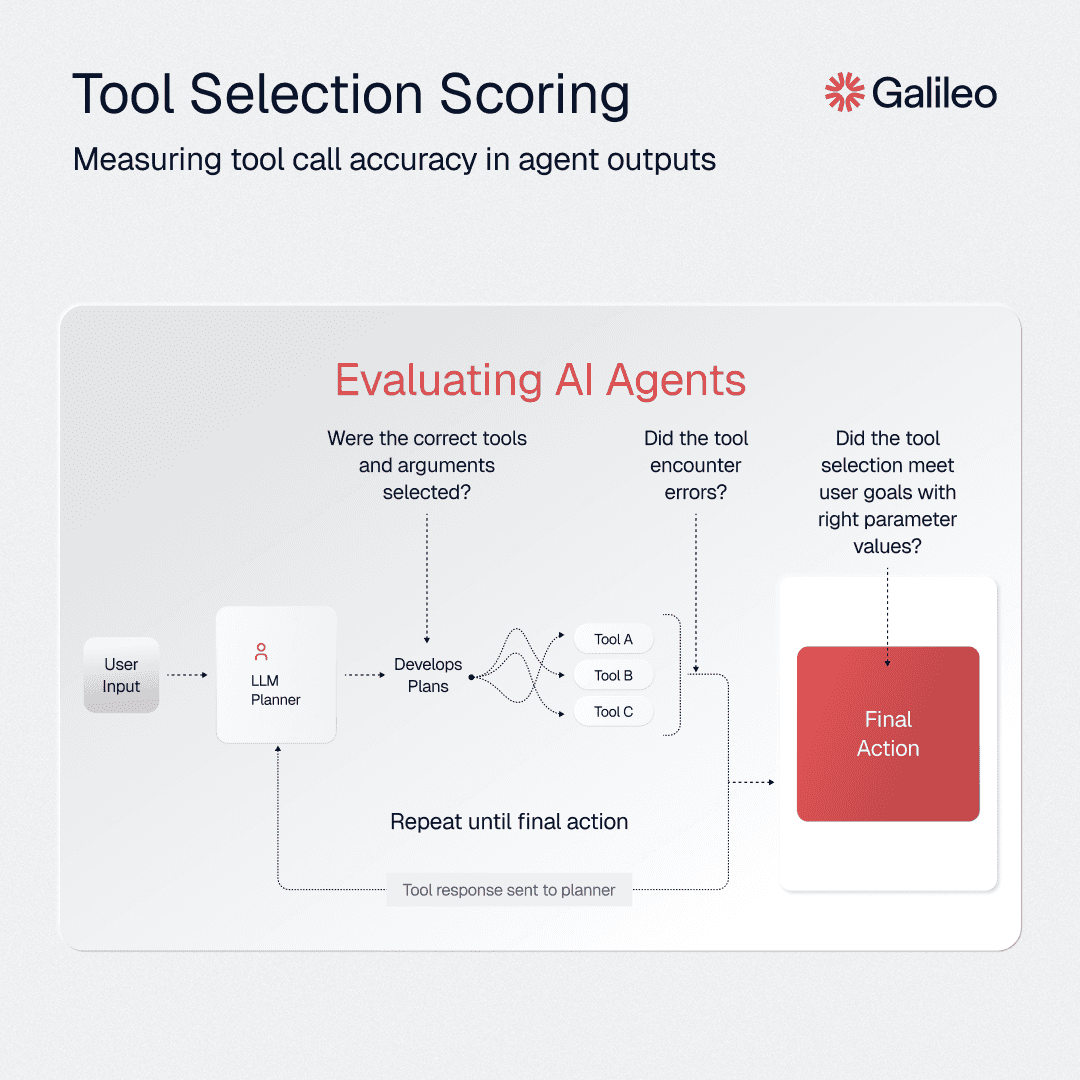

Discover comprehensive frameworks for evaluating AI agents: learn about goal setting, metrics, data collection, testing, analysis, and iteration.

Agent evals grade multi-turn trajectories, tool selection, and drift over time. If your system calls tools or manages state, LLM evals will miss most of the ways things can go wrong.

What is the best tool for AI agent evaluation? Cekura is the strongest option for production, covering trajectory tracing and drift detection in one place.

AI agent evaluation is the process of measuring how well an agent reasons, selects and calls tools, and completes tasks, separately at each layer, so you can pinpoint exactly what's broken. AI agents fail in ways that traditional software testing was never designed to catch. Here is the complete evaluation framework, from loop-level failure surfaces to compound reliability scoring.
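
To make trajectory-level grading and compound reliability concrete, here is a minimal sketch. It rests on two assumptions that the source does not spell out: tool selection is graded by comparing the agent's tool sequence position by position against an expected sequence, and compound reliability is taken to be the product of per-step success rates, treating steps as independent. The function names are hypothetical.

```python
from math import prod


def tool_selection_accuracy(expected: list, actual: list) -> float:
    """Fraction of trajectory steps where the agent picked the expected tool.

    Steps are compared position by position; extra or missing steps count as misses.
    """
    if not expected:
        raise ValueError("expected trajectory must not be empty")
    matches = sum(e == a for e, a in zip(expected, actual))
    return matches / max(len(expected), len(actual))


def compound_reliability(step_success_rates: list) -> float:
    """Probability that every step succeeds, assuming the steps are independent."""
    return prod(step_success_rates)


# Example trajectory: the agent picked the wrong tool at the second step.
expected_tools = ["search", "fetch_page", "summarize"]
actual_tools = ["search", "calculator", "summarize"]
selection_score = tool_selection_accuracy(expected_tools, actual_tools)

# Ten steps that each succeed 95% of the time.
end_to_end = compound_reliability([0.95] * 10)
```

Under the independence assumption, ten steps at 95% each give an end-to-end success rate of roughly 60%, which is why per-step scores that look healthy can still produce an unreliable agent.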
