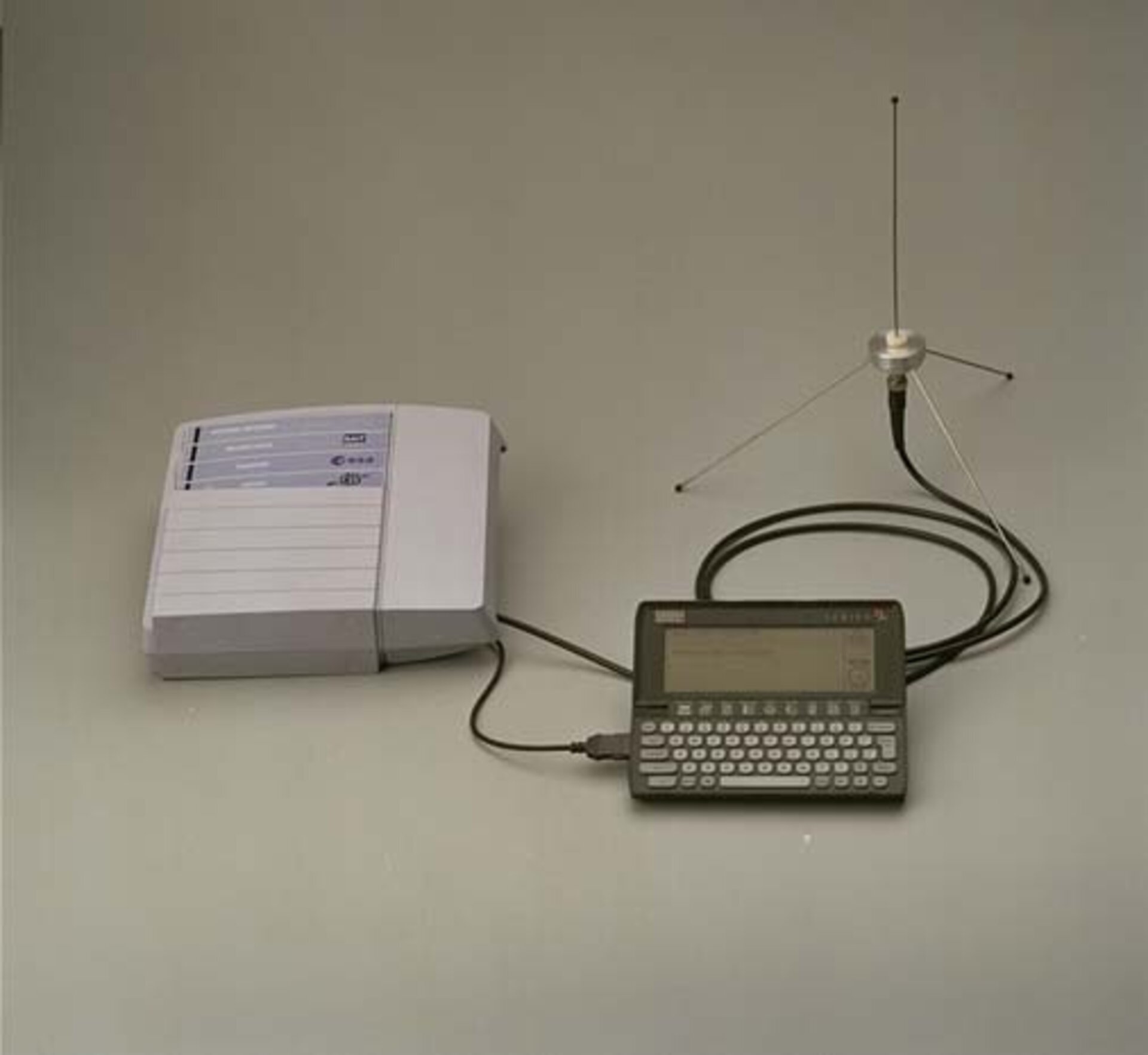

Esa Llms User Terminal

LLMS was developed under an agency contract with SAIT Systems in Belgium, heading a team of companies from Belgium, Germany, Spain and the UK. The project was financed under ESA's ARTES telecommunications programme, mainly by Belgium with a contribution from Germany.

The e-learning platform of Universitas Esa Unggul supports online learning activities for students and lecturers.

The terminal is manufactured by Hughes in its factory in Maryland, USA. For low-latency applications and service in hard-to-reach places, the Hughes managed LEO service provides a reliable, high-speed option.

Esa is an AI-powered command-line tool that lets you create powerful, personalized small agents. By connecting large language models (LLMs) with shell scripts as functions, Esa lets you control your system, automate tasks, and query information using plain-English commands.

2025 and beyond, the polished app era: while earlier years were primarily for developers, 2025 saw the rise of user-friendly apps like Ollama, GPT4All, LM Studio, and Jan. These turned complex terminal commands into simple download buttons, bringing local LLM use to everyone.

At Intellian's Advanced Development Center (ADC) in Maryland, a demonstration showcased an integrated, small-form-factor electronically scanned array (ESA) user terminal operating in a live commercial network environment facilitated by OneWeb.
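The idea of exposing shell scripts to a model as callable functions can be illustrated with a small dispatcher. This is a minimal sketch assuming the model emits a JSON tool call with "name" and "args" fields; the FUNCTIONS registry, that JSON shape, and run_tool_call are hypothetical illustrations, not Esa's actual configuration format or API.

```python
import json
import subprocess

# Hypothetical registry: function name -> base command (argv list).
# This is NOT Esa's real config format; it only illustrates the concept.
FUNCTIONS = {
    "current_date": ["date", "+%Y-%m-%d"],
    "echo_text": ["echo"],  # model-supplied args are appended
}


def run_tool_call(call_json: str) -> str:
    """Run a model-emitted tool call such as
    {"name": "echo_text", "args": ["hello"]} and return its stdout."""
    call = json.loads(call_json)
    name = call["name"]
    if name not in FUNCTIONS:
        # Only commands listed in the registry may run.
        raise ValueError(f"unknown function: {name}")
    argv = FUNCTIONS[name] + [str(a) for a in call.get("args", [])]
    # An argv list (no shell=True) keeps model output from being parsed as shell syntax.
    result = subprocess.run(argv, capture_output=True, text=True, check=True)
    return result.stdout.strip()


if __name__ == "__main__":
    print(run_tool_call('{"name": "echo_text", "args": ["hello", "world"]}'))
```

Restricting execution to a fixed registry and passing arguments as a list, rather than a shell string, is the design choice that keeps a plain-English request from turning into arbitrary shell commands.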

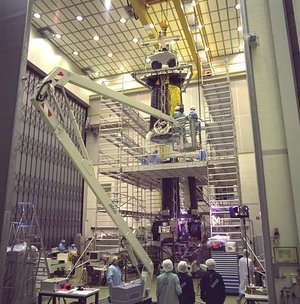

Esa Llms Flight Hardware

These were the hottest and fastest-growing repositories around LLMs for the week. Whether you run cloud models or host your own locally, there is a lot to play with here, notably TurboQuant Plus (from @no_stp_on_snek) and its tq4 1s TurboQuant weight compression, which now applies to model weights directly and not only to the KV cache. I've seen experiments with it.

Running open-source LLMs locally: complete hardware and setup guide 2026. Everything you need to run LLMs on your own machine: GPU requirements, RAM needs, quantization explained, Ollama and llama.cpp setup, plus budget and high-end build recommendations.

This guide walks step by step through running ChatGPT-style models, such as DeepSeek, locally. It covers three proven methods to install LLMs on Mac, Windows, or Linux, so choose the method that suits your workflow and hardware.

Running LLMs locally on macOS: the complete 2026 comparison. If you're a developer building AI-powered applications, you've probably wondered: can I just run these models on my Mac? The answer is a resounding yes, and you have more options than ever. But choosing between them can be confusing. Ollama? LM Studio?
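The RAM and quantization points in the hardware guide come down to simple arithmetic: weight memory is roughly the parameter count times the bits per weight, divided by 8 to get bytes. A minimal sketch, assuming decimal gigabytes and counting weights only; the KV cache, activations, and runtime overhead come on top, and real quantization formats store per-block scales, so their effective bits per weight sit slightly above the nominal value. The 7B model size is an illustrative assumption.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in decimal GB (1e9 bytes).

    Excludes KV cache, activations, and runtime overhead.
    """
    bytes_total = num_params * bits_per_weight / 8  # 8 bits per byte
    return bytes_total / 1e9


if __name__ == "__main__":
    # Illustrative 7B-parameter model at common precisions.
    for bits in (16, 8, 4):
        print(f"7B @ {bits}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
```

For a 7B model this gives about 14 GB at 16-bit, 7 GB at 8-bit, and 3.5 GB at 4-bit, which shows why quantization matters so much when choosing hardware for local models.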
