Ensemble Learning Bagging Sinhala

Types of ensemble learning: bagging (bootstrap aggregating) is one of the main types of ensemble methods. Models are trained independently on different random subsets of the training data. Their results are then combined, usually by averaging (for regression) or voting (for classification). This helps reduce variance and limit overfitting.
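The procedure described above (random subsets, independent training, then voting or averaging) can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the function names, the toy data, and the choice of a 1-nearest-neighbour base model are all assumptions made for the example.

```python
import random
from collections import Counter

def bootstrap_sample(X, y, rng):
    """Draw len(X) rows with replacement (one bootstrap sample)."""
    n = len(X)
    idx = [rng.randrange(n) for _ in range(n)]
    return [X[i] for i in idx], [y[i] for i in idx]

def fit_1nn(X, y):
    """Hypothetical base model: 1-nearest-neighbour, a high-variance learner."""
    def predict(x):
        nearest = min(
            range(len(X)),
            key=lambda i: sum((a - b) ** 2 for a, b in zip(X[i], x)),
        )
        return y[nearest]
    return predict

def bagging_fit(X, y, n_models=25, seed=0):
    """Train each base model independently on its own bootstrap sample."""
    rng = random.Random(seed)
    return [fit_1nn(*bootstrap_sample(X, y, rng)) for _ in range(n_models)]

def bagging_predict_class(models, x):
    """Classification: combine the models by majority vote."""
    votes = Counter(model(x) for model in models)
    return votes.most_common(1)[0][0]

def bagging_predict_mean(models, x):
    """Regression: combine the models by averaging their outputs."""
    return sum(model(x) for model in models) / len(models)

# Toy data (assumed for illustration): two well-separated clusters.
X = [(0, 0), (0, 1), (1, 0), (1, 1), (5, 5), (5, 6), (6, 5), (6, 6)]
y = [0, 0, 0, 0, 1, 1, 1, 1]

models = bagging_fit(X, y)
print(bagging_predict_class(models, (0.5, 0.5)))
print(bagging_predict_mean(models, (3, 3)))
```

Using an odd number of models avoids tied votes in the two-class case. In practice a library implementation would normally be preferred over a hand-rolled one like this.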

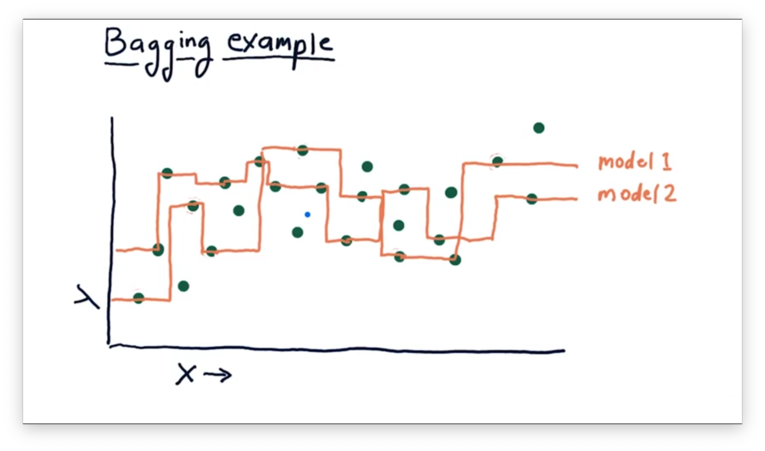

Bagging is a gem in the ensemble learning crown: a simple yet effective way to improve model performance. By sampling data with replacement, training a crew of models, and merging their predictions, it cuts variance and curbs overfitting.

Various supervised learning algorithms, unsupervised learning algorithms, and reinforcement learning are covered here in full, along with the various ... used for data handling and preprocessing.

Boosting versus bagging and random forests (RF): while bagging and RF reduce variance by fitting trees independently of one another, boosting reduces bias by sequentially fitting classifiers that depend on each other.
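A short worked formula may help explain why averaging independently fitted models reduces variance. It is an idealized sketch rather than something stated in the source: assume `B` model predictions at a point `x`, each with the same variance `\sigma^2` and the same pairwise correlation `\rho` (symbols introduced here for illustration only).

```latex
\operatorname{Var}\!\left(\frac{1}{B}\sum_{b=1}^{B}\hat{f}_b(x)\right)
  = \frac{1}{B^{2}}\left[B\,\sigma^{2} + B(B-1)\,\rho\,\sigma^{2}\right]
  = \rho\,\sigma^{2} + \frac{1-\rho}{B}\,\sigma^{2}
```

With fully independent models (`\rho = 0`) this reduces to `\sigma^2 / B`, so the variance shrinks as more models are averaged. Models trained on bootstrap samples of the same data are usually correlated, so the first term acts as a floor. Under these assumptions averaging leaves the expected prediction, and therefore the bias, unchanged, which is consistent with boosting taking a different, sequential route to reduce bias.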

In this guide, you'll learn the concept, types, and techniques of ensemble learning (bagging, boosting, stacking, and blending), along with practical examples and tips for implementation.

The fundamental idea behind ensemble learning is to create a robust and accurate predictive model by combining the predictions of multiple simpler models, which are referred to as base models. Bagging, short for bootstrap aggregating, is an ensemble learning technique introduced by Leo Breiman in 1994 to improve the stability and accuracy of machine learning models, particularly those prone to high variance, such as decision trees.

Bagging (Breiman, 1996): fit many large trees to bootstrap-resampled versions of the training data, and classify by majority vote. Boosting (Freund & Schapire, 1996): fit many large or small trees to reweighted versions of the training data, and classify by weighted majority vote.
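The boosting recipe above (reweighted training data, weighted majority vote) can be illustrated with an AdaBoost-style sketch. It is a simplified example under stated assumptions: the base models are one-split decision stumps (very small trees) on 1-D inputs, labels are -1/+1, and all function names and the toy data are hypothetical.

```python
import math

def stump_predict(x, threshold, sign):
    """A one-split tree: predict `sign` left of the threshold, `-sign` right."""
    return sign if x <= threshold else -sign

def fit_stump(X, y, w):
    """Pick the stump with the lowest weighted error under sample weights w."""
    best = None
    for threshold in sorted(set(X)):
        for sign in (1, -1):
            err = sum(
                wi for wi, xi, yi in zip(w, X, y)
                if stump_predict(xi, threshold, sign) != yi
            )
            if best is None or err < best[0]:
                best = (err, threshold, sign)
    return best

def adaboost_fit(X, y, n_rounds=3):
    """Fit stumps sequentially; each round reweights the training data."""
    n = len(X)
    w = [1.0 / n] * n
    ensemble = []
    for _ in range(n_rounds):
        err, threshold, sign = fit_stump(X, y, w)
        err = min(max(err, 1e-10), 1 - 1e-10)  # guard against log(0)
        alpha = 0.5 * math.log((1 - err) / err)  # this stump's vote weight
        ensemble.append((alpha, threshold, sign))
        # Increase the weight of misclassified points, decrease the rest.
        w = [
            wi * math.exp(-alpha * yi * stump_predict(xi, threshold, sign))
            for wi, xi, yi in zip(w, X, y)
        ]
        total = sum(w)
        w = [wi / total for wi in w]
    return ensemble

def adaboost_predict(ensemble, x):
    """Classify by weighted majority vote of all stumps."""
    score = sum(a * stump_predict(x, t, s) for a, t, s in ensemble)
    return 1 if score >= 0 else -1

# Toy data (assumed): no single stump can separate these labels.
X = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
y = [1, 1, 1, -1, -1, -1, 1, 1, 1, -1]

ensemble = adaboost_fit(X, y, n_rounds=3)
print([adaboost_predict(ensemble, x) for x in X])
```

Unlike the bagging case, each round here depends on the previous one through the updated weights, so the models cannot be trained in parallel.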

