Enable Model Quantization for ONNX and TensorRT

GitHub Hongjinseong Quantization TensorRT ONNX

The TensorRT Model Optimizer is a Python toolkit designed to facilitate the creation of quantization-aware training (QAT) models. These models are fully compatible with TensorRT’s optimization and deployment workflows. The toolkit also provides a post-training quantization (PTQ) recipe.

In general, dynamic quantization is recommended for RNNs and transformer-based models, and static quantization for CNN models. If neither post-training quantization method meets your accuracy goal, you can try quantization-aware training (QAT) to retrain the model.
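The difference between static and dynamic post-training quantization comes down to when activation ranges are measured. Static quantization fixes them once, offline, from calibration data. Dynamic quantization recomputes them from each batch at inference time.

Below is a minimal pure-Python sketch of that difference. It assumes asymmetric (affine) uint8 quantization with simple min/max calibration. The helper names (`affine_params`, `static_params`, `dynamic_params`) are hypothetical, and this is not how any particular toolkit implements it. In ONNX Runtime, the two approaches are exposed as `quantize_static` and `quantize_dynamic` in `onnxruntime.quantization`.

```python
# Minimal sketch (assumption): asymmetric uint8 quantization with min/max calibration.
from typing import List, Sequence, Tuple

QMIN, QMAX = 0, 255  # uint8 range


def affine_params(lo: float, hi: float) -> Tuple[float, int]:
    """Return (scale, zero_point) mapping the float range [lo, hi] onto [QMIN, QMAX]."""
    lo, hi = min(lo, 0.0), max(hi, 0.0)  # keep 0.0 inside the range
    scale = (hi - lo) / (QMAX - QMIN) or 1.0  # guard against an all-zero range
    zero_point = int(round(QMIN - lo / scale))
    return scale, max(QMIN, min(QMAX, zero_point))


def quantize(xs: Sequence[float], scale: float, zp: int) -> List[int]:
    # Round to the nearest step, shift by the zero point, then clip to uint8.
    return [max(QMIN, min(QMAX, round(x / scale) + zp)) for x in xs]


def dequantize(qs: Sequence[int], scale: float, zp: int) -> List[float]:
    # Map integer codes back to approximate float values.
    return [(q - zp) * scale for q in qs]


def static_params(calibration: Sequence[Sequence[float]]) -> Tuple[float, int]:
    # Static: the activation range is fixed offline from calibration batches.
    lo = min(min(batch) for batch in calibration)
    hi = max(max(batch) for batch in calibration)
    return affine_params(lo, hi)


def dynamic_params(batch: Sequence[float]) -> Tuple[float, int]:
    # Dynamic: the range is recomputed from every batch at inference time.
    return affine_params(min(batch), max(batch))


if __name__ == "__main__":
    calibration = [[0.0, 0.5, 1.0], [-0.2, 0.8]]
    batch = [0.1, 3.0]  # 3.0 lies outside the calibrated range

    s_scale, s_zp = static_params(calibration)
    d_scale, d_zp = dynamic_params(batch)
    print("static :", dequantize(quantize(batch, s_scale, s_zp), s_scale, s_zp))
    print("dynamic:", dequantize(quantize(batch, d_scale, d_zp), d_scale, d_zp))
```

In the usage lines, 3.0 lies outside the calibrated range. The static parameters therefore clip it to roughly 1.0, while the dynamic parameters adapt to it. The trade-off is that dynamic quantization pays the cost of computing ranges at runtime.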

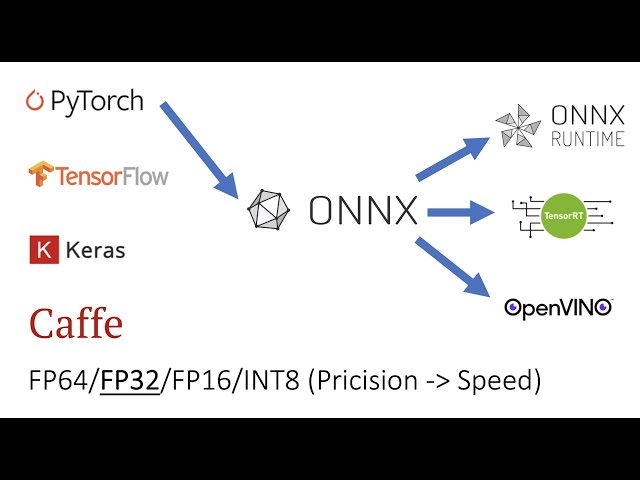

Fake Quantization ONNX Model Parse Error Using TensorRT

To leverage ONNX Runtime's transformer-specific optimizations, you need to optimize your models with the Transformer Model Optimization Tool before quantizing them. This notebook demonstrates the end-to-end process.

TensorRT Model Optimizer is a unified library of state-of-the-art (SOTA) model optimization techniques such as quantization, pruning, distillation, and speculative decoding. It compresses deep learning models for downstream deployment frameworks such as TensorRT-LLM, TensorRT, and vLLM to optimize inference speed.

The quantized ONNX models are optimized for deployment with TensorRT. This page focuses on quantizing existing ONNX models or models exported from PyTorch to ONNX.
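"Fake quantization" in an ONNX graph is typically expressed as QuantizeLinear/DequantizeLinear (Q/DQ) node pairs, which TensorRT can consume for explicit INT8 quantization. The values stay in floating point but are snapped to the INT8 grid.

The pure-Python sketch below mirrors the element-wise math of such a pair. It assumes per-tensor symmetric INT8 with a zero point of 0, which is what TensorRT's INT8 quantize/dequantize layers expect. The function names are hypothetical, and this is not ONNX Runtime's or TensorRT's implementation.

```python
# Minimal sketch (assumption): per-tensor symmetric INT8 Q/DQ, zero point 0.
from typing import List, Sequence

INT8_MIN, INT8_MAX = -128, 127


def quantize_linear(xs: Sequence[float], scale: float, zero_point: int = 0) -> List[int]:
    # Mirrors ONNX QuantizeLinear: saturate(round(x / scale) + zero_point).
    # ONNX rounds half to even, which is also what Python's round() does.
    return [max(INT8_MIN, min(INT8_MAX, round(x / scale) + zero_point)) for x in xs]


def dequantize_linear(qs: Sequence[int], scale: float, zero_point: int = 0) -> List[float]:
    # Mirrors ONNX DequantizeLinear: (q - zero_point) * scale.
    return [(q - zero_point) * scale for q in qs]


def symmetric_scale(xs: Sequence[float]) -> float:
    # Map the largest magnitude onto 127, giving the symmetric range [-127, 127].
    amax = max(abs(x) for x in xs)
    return amax / INT8_MAX if amax > 0 else 1.0


def fake_quantize(xs: Sequence[float]) -> List[float]:
    """Q followed by DQ: the output is float but only takes INT8-representable values."""
    scale = symmetric_scale(xs)
    return dequantize_linear(quantize_linear(xs, scale), scale)


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.013, 1.0]
    print(fake_quantize(weights))
```

Each output value differs from its input by at most half a quantization step. That rounding error is what quantization-aware training learns to tolerate.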

TensorRT Conversion Issues of ONNX Model Trained with Quantization

🤗 Optimum provides an `optimum.onnxruntime` package that lets you apply quantization to many models hosted on the Hugging Face Hub using the ONNX Runtime quantization tool. The quantization process is abstracted via the `ORTConfig` and `ORTQuantizer` classes.

Ten field-tested TensorRT and ONNX Runtime tips for shrinking Python inference latency cover smart shapes, I/O binding, CUDA graphs, quantization, and thread tuning.

By carefully converting your quantized model and selecting the appropriate execution providers, you can use ONNX Runtime as a powerful and flexible path for deploying efficient LLMs into production environments.

In NNI, a quantized model is sped up in two steps: (1) the model, with its quantized weights and quantization configuration, is converted into ONNX format; (2) the ONNX model is fed into TensorRT to generate an inference engine.
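Selecting execution providers in ONNX Runtime usually means passing them in priority order. The minimal sketch below assumes a preference of TensorRT, then CUDA, then CPU. The function name `pick_providers` and that ordering are illustrative assumptions. In real code, `available` would come from `onnxruntime.get_available_providers()`, and the result would be passed as the `providers` argument of `onnxruntime.InferenceSession`. The sketch uses only the standard library, so it runs without ONNX Runtime installed.

```python
# Minimal sketch (assumption): pick ONNX Runtime execution providers by priority.
from typing import Iterable, List, Sequence

# Hypothetical preference order: TensorRT first, then CUDA, then CPU.
PREFERRED: Sequence[str] = (
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "CPUExecutionProvider",
)


def pick_providers(available: Iterable[str], preferred: Sequence[str] = PREFERRED) -> List[str]:
    """Keep the preferred providers that are actually available, in priority order."""
    available_set = set(available)
    chosen = [name for name in preferred if name in available_set]
    # Always end with the CPU provider as a last-resort fallback.
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


if __name__ == "__main__":
    # Example input; real code would call onnxruntime.get_available_providers().
    print(pick_providers(["CPUExecutionProvider", "CUDAExecutionProvider"]))
```

With ONNX Runtime installed, the call would look like `onnxruntime.InferenceSession("model_quant.onnx", providers=pick_providers(onnxruntime.get_available_providers()))`. Here `model_quant.onnx` is a placeholder path.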
