Embeddings and Vector Databases: How AI Understands Context (2025 Guide)

AI Embeddings and Vector DBs: A Simple Guide. Discover how embeddings and vector databases power AI development by enabling contextual similarity, semantic search, and machine learning in AI applications. In today's world of AI and natural language processing (NLP), finding information quickly and accurately is more important than ever. Traditional keyword searches often fail when you need…

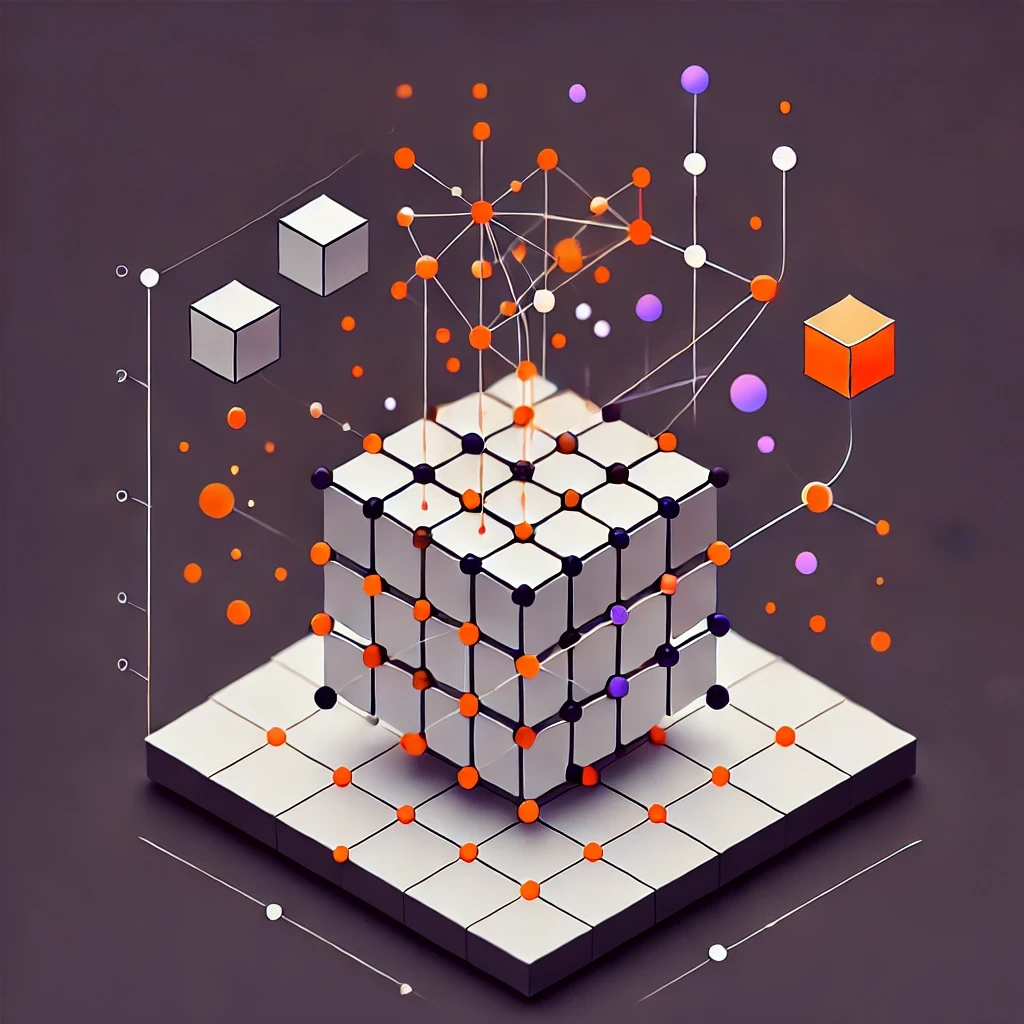

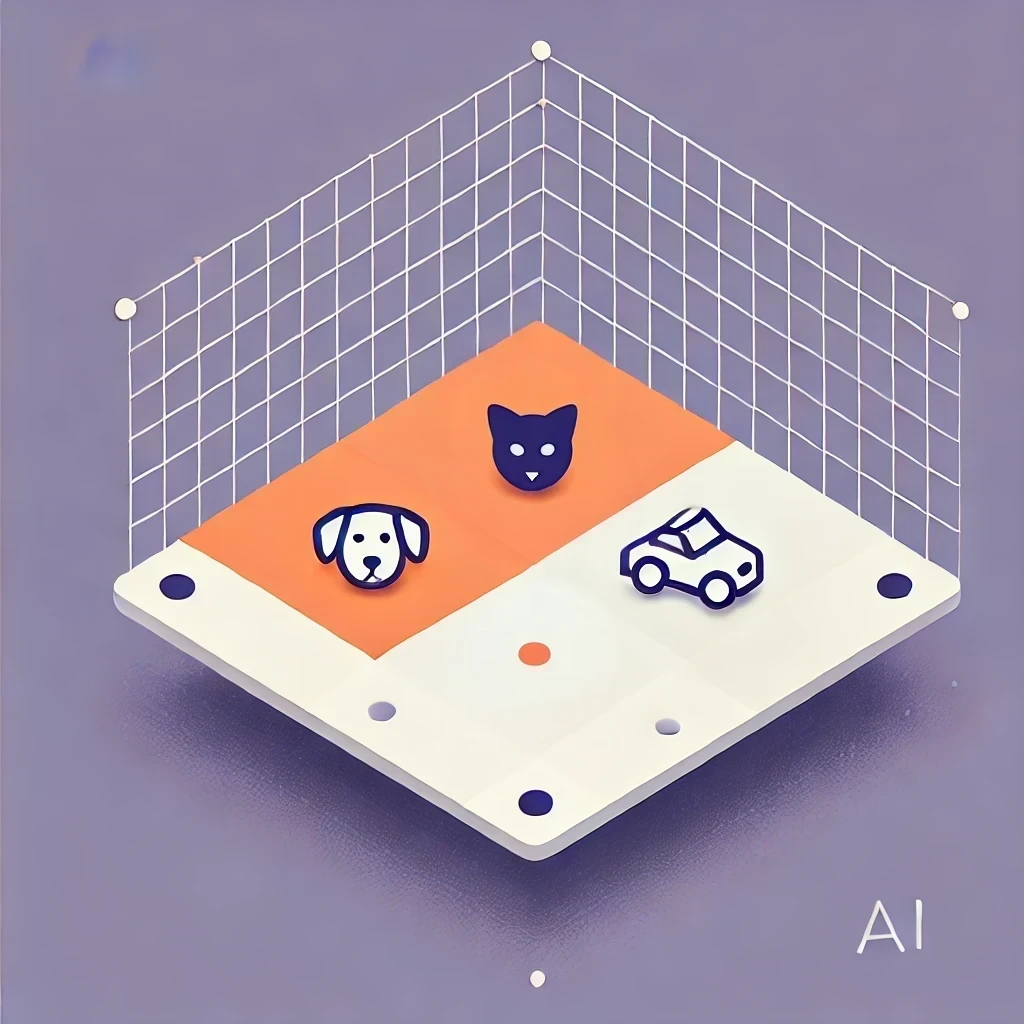

Embeddings and vector databases: the complete guide. Turn text into searchable vectors and build semantic search that actually understands meaning, the foundation for RAG, recommendations, and intelligent retrieval.

The integration of embeddings, vector databases, and semantic search is changing how data is retrieved and analyzed. From enabling context-aware searches to powering advanced applications like RAG, these technologies are shaping the future of information processing.

Vector databases provide a way to put embedding models to use. They allow developers to create search experiences such as visual, semantic, and multimodal search.

Key takeaways: Vector databases store information as high-dimensional vectors, which help machine learning (ML) models understand meaning and remember context. They work by first converting multimodal data into vectors, then indexing those vectors into data structures built for efficient search, and finally performing nearest-neighbor searches to retrieve the results most similar to the query.
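The convert, index, and search steps described above can be sketched in a few lines. This is a minimal illustration, not how any particular vector database works internally: the `TinyVectorStore` class, the three-dimensional vectors, and the item names are all hypothetical, and the "index" is just a list that is scanned in full (brute-force search). Production systems typically use approximate nearest-neighbor indexes and embeddings with hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class TinyVectorStore:
    """Hypothetical in-memory store: keeps (id, vector) pairs and scans all of them per query."""

    def __init__(self):
        self._items = []

    def add(self, item_id, vector):
        # "Indexing" here is just appending; real systems build specialized structures.
        self._items.append((item_id, list(vector)))

    def search(self, query, k=3):
        # Score every stored vector against the query, then keep the k most similar.
        scored = [(cosine_similarity(query, vec), item_id) for item_id, vec in self._items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [(item_id, score) for score, item_id in scored[:k]]


# Made-up 3-dimensional "embeddings"; a real embedding model would produce these.
store = TinyVectorStore()
store.add("cat", [0.9, 0.1, 0.0])
store.add("kitten", [0.8, 0.2, 0.1])
store.add("invoice", [0.0, 0.1, 0.9])

# The query vector sits near "cat" and "kitten" and far from "invoice".
results = store.search([0.85, 0.15, 0.05], k=2)
for item_id, score in results:
    print(item_id, round(score, 3))
```

Brute-force scanning is exact but its cost grows linearly with the number of stored vectors, which is the main reason dedicated indexes exist.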

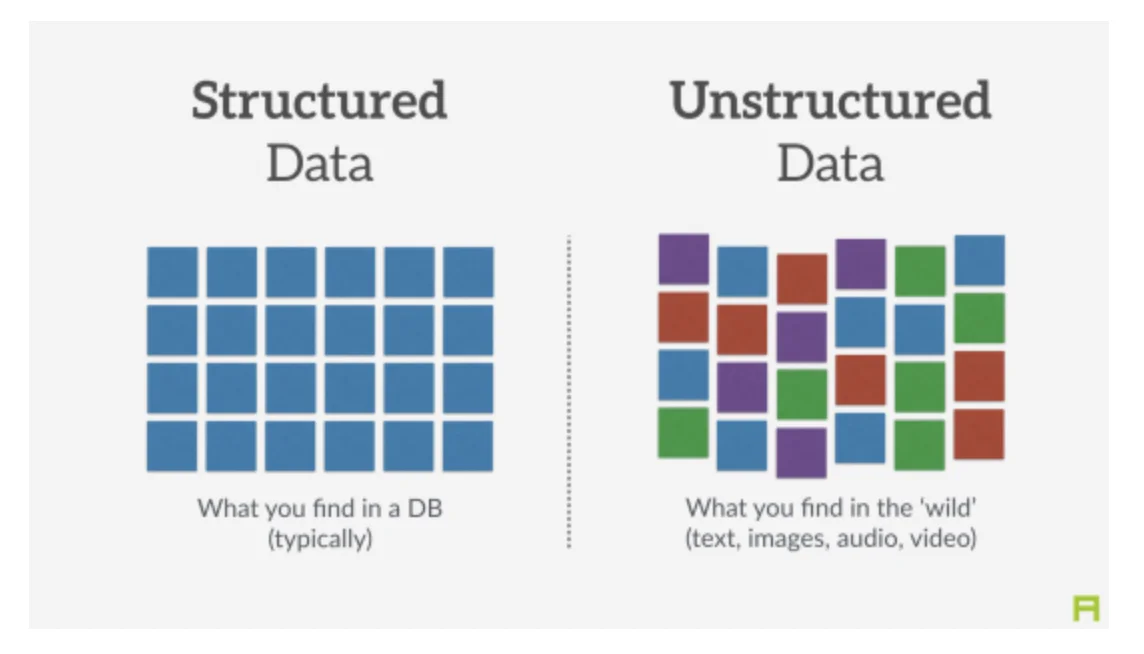

This guide does three things. First, it explains what an embedding really is and how to think about it without hand-waving. Second, it connects the math to the vector search systems you run in production. Third, it gives you a practical checklist for evaluating and shipping a reliable retrieval pipeline.

Embeddings are the hidden layer of math that allows AI to understand meaning rather than just matching text. If you want to understand how RAG (retrieval-augmented generation) or modern search works, you have to understand embeddings first. Note: this article is for educational purposes only.

Understand vector databases and embedding models for semantic search, RAG, and AI chatbots, plus when to use Pinecone, Qdrant, Chroma, and more.

Embeddings transform data like text or images into numerical representations, allowing computers to capture semantic relationships. Vector databases specialize in storing and querying these embeddings for semantic search, moving beyond traditional keyword matching.
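The text does not spell out its evaluation checklist, so the following is only one plausible item on it: measuring recall@k, the fraction of known-relevant documents that appear in the top k results. The function name `recall_at_k`, the queries, and the document IDs below are all made up for illustration; in practice the retrieved lists would come from your own retrieval pipeline and the relevant sets from hand-labeled data.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear among the first k retrieved IDs."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("relevant_ids must not be empty")
    top_k = set(retrieved_ids[:k])
    return len(top_k & relevant) / len(relevant)


# Hypothetical labeled evaluation set: query -> (ranked results, known-relevant docs).
eval_set = {
    "how do I reset my password": (["doc7", "doc2", "doc9"], {"doc2"}),
    "refund policy for annual plans": (["doc1", "doc4", "doc5"], {"doc4", "doc8"}),
}

# Average recall@3 across all labeled queries.
scores = [recall_at_k(retrieved, relevant, k=3) for retrieved, relevant in eval_set.values()]
mean_recall = sum(scores) / len(scores)
print(f"mean recall@3 = {mean_recall:.2f}")
```

Tracking a number like this before and after changing the embedding model, chunking strategy, or index settings gives a concrete signal of whether retrieval actually improved.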
