ELU Activation Function

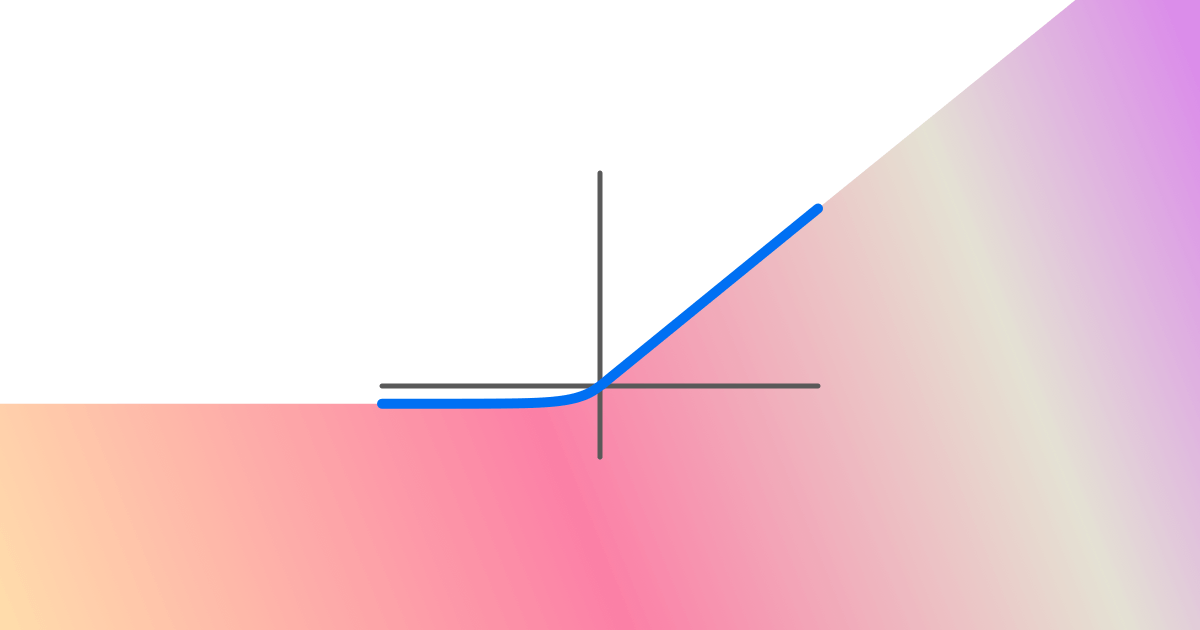

The exponential linear unit (ELU) is an activation function applied element-wise. It was introduced in the paper "Fast and Accurate Deep Network Learning by Exponential Linear Units (ELUs)". ELU modifies the negative part of ReLU by applying an exponential curve: instead of clamping negative inputs to zero, it maps them to small negative values, which improves learning dynamics.
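As a minimal sketch of this definition, assuming the common default α = 1.0, ELU can be written in plain Python and applied element-wise to a list. The function names `elu` and `relu` and the sample inputs are illustrative choices, not taken from any library.

```python
import math


def elu(x, alpha=1.0):
    """ELU for one scalar: identity for x > 0, alpha * (exp(x) - 1) otherwise."""
    # math.expm1(x) computes exp(x) - 1 accurately for small |x|.
    return x if x > 0 else alpha * math.expm1(x)


def relu(x):
    """ReLU for one scalar, shown only for comparison."""
    return max(0.0, x)


inputs = [-3.0, -1.0, 0.0, 2.0]

# Apply each activation element-wise.
elu_out = [elu(v) for v in inputs]
relu_out = [relu(v) for v in inputs]

print("ELU: ", elu_out)   # negative inputs map to small negative values
print("ReLU:", relu_out)  # negative inputs are clamped to zero
```

Positive inputs pass through both functions unchanged; the two differ only on the negative side.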

ELU differs from ReLU only on negative inputs, and it is available in both PyTorch and TensorFlow. It is continuous everywhere and, for α = 1, differentiable everywhere, a smoothness that can improve convergence and generalization. Choosing between ReLU and ELU for a given network comes down to these differences. ELU tends to drive the cost toward zero faster and to produce more accurate results. Unlike ReLU, ELU has an extra constant α (alpha), which should be a positive number. Activation functions are easiest to learn by doing, so ELU is worth implementing directly in PyTorch.
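In PyTorch, ELU is available as the module `torch.nn.ELU` and as the function `torch.nn.functional.elu`, both taking an `alpha` argument that defaults to 1.0. The sketch below applies both to a small tensor and places the module inside a toy network. The layer sizes and input values are arbitrary assumptions for illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Hand-picked inputs covering negative, zero, and positive values.
x = torch.tensor([-3.0, -1.0, 0.0, 2.0])

# Module form: convenient inside nn.Sequential.
act = nn.ELU(alpha=1.0)
y_module = act(x)

# Functional form: convenient inside a custom forward() method.
y_functional = F.elu(x, alpha=1.0)

# A toy two-layer network using ELU as the hidden activation.
# The sizes (4 -> 8 -> 1) are arbitrary.
model = nn.Sequential(
    nn.Linear(4, 8),
    nn.ELU(alpha=1.0),
    nn.Linear(8, 1),
)

# Add a batch dimension: shape (4,) becomes (1, 4).
out = model(x.unsqueeze(0))

print(y_module)
print(out.shape)
```

The module and functional forms compute the same values; which one to use is mostly a matter of how the surrounding model is written.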

The exponential linear unit (ELU) is an activation function commonly used in deep neural networks. It was introduced as an alternative to the rectified linear unit (ReLU) and addresses some of its limitations. Because ELU can output negative values, it pushes the mean activation of each layer closer to zero. ELU has been reported to give more accurate results than ReLU and to converge faster, which reduces training time. It is mathematically represented as follows:

$$
\mathrm{ELU}(x) =
\begin{cases}
x, & x > 0 \\
\alpha \, (e^{x} - 1), & x \le 0
\end{cases}
$$

In the formula above, α must be a positive number and is usually set to 1.0. It determines the saturation level for negative inputs: as x becomes very negative, ELU(x) approaches −α.
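To illustrate how α sets the saturation level and how the negative branch moves the mean activation toward zero, the sketch below evaluates ELU and its derivative in plain Python. The helper names `elu` and `elu_grad` and the sample inputs are assumptions chosen for illustration, not values from the paper.

```python
import math


def elu(x, alpha=1.0):
    """ELU: x for x > 0, alpha * (exp(x) - 1) otherwise."""
    return x if x > 0 else alpha * math.expm1(x)


def elu_grad(x, alpha=1.0):
    """Derivative of ELU: 1 for x > 0, alpha * exp(x) otherwise."""
    return 1.0 if x > 0 else alpha * math.exp(x)


# Saturation: for very negative inputs the output approaches -alpha.
for alpha in (0.5, 1.0, 2.0):
    print(f"alpha={alpha}: elu(-20) = {elu(-20.0, alpha):.6f}")

# The gradient stays positive for negative inputs (ReLU's would be zero).
print("elu_grad(-1.0) =", elu_grad(-1.0))

# Mean activation over a symmetric set of sample inputs.
samples = [-2.0, -1.0, -0.5, 0.5, 1.0, 2.0]
mean_relu = sum(max(0.0, v) for v in samples) / len(samples)
mean_elu = sum(elu(v) for v in samples) / len(samples)
print("mean ReLU output:", mean_relu)
print("mean ELU output: ", mean_elu)
```

On these samples the ELU mean lies closer to zero than the ReLU mean, because the negative outputs partly cancel the positive ones.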

