Efficient Training on a Single GPU

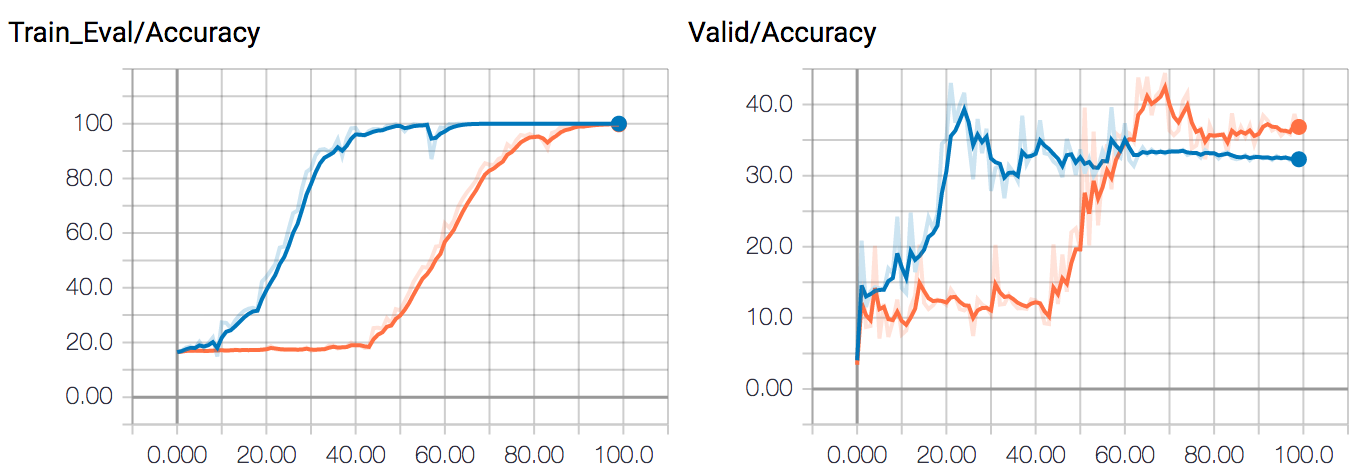

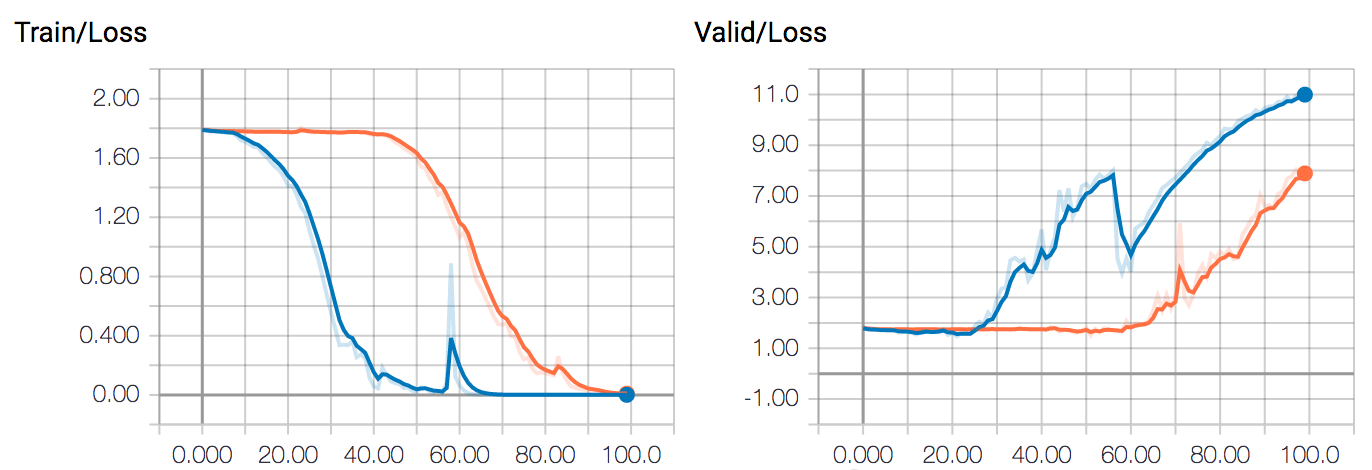

When training large models, there are two aspects that should be considered at the same time. One of them is throughput: maximizing throughput (samples/second) leads to lower training cost. This is generally achieved by utilizing the GPU as much as possible and thus filling GPU memory to its limit.

This paper examines in detail how various state-of-the-art LLMs train on a single graphics processing unit (GPU), paying close attention to crucial elements such as throughput, memory utilization, and training time.
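To make "filling GPU memory to its limit" concrete, the following is a rough back-of-the-envelope sketch of how much memory the training state alone occupies. It assumes everything is kept in 32-bit floats and that the optimizer is Adam-style with two extra state tensors per parameter; activations and temporary buffers are deliberately left out. The function and class names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

BYTES_PER_FP32 = 4  # assumption: weights, gradients and optimizer state are all fp32


@dataclass
class MemoryEstimate:
    """Bytes needed for the persistent training state (activations excluded)."""
    weights: int
    gradients: int
    optimizer_states: int

    @property
    def total(self) -> int:
        return self.weights + self.gradients + self.optimizer_states


def estimate_fp32_adam_memory(num_params: int) -> MemoryEstimate:
    """Estimate training-state memory for an Adam-style optimizer in fp32.

    Assumes one gradient value and two optimizer moments per parameter.
    """
    return MemoryEstimate(
        weights=num_params * BYTES_PER_FP32,
        gradients=num_params * BYTES_PER_FP32,
        optimizer_states=2 * num_params * BYTES_PER_FP32,
    )


if __name__ == "__main__":
    est = estimate_fp32_adam_memory(7_000_000_000)
    print(f"weights:   {est.weights / 1e9:.0f} GB")
    print(f"gradients: {est.gradients / 1e9:.0f} GB")
    print(f"optimizer: {est.optimizer_states / 1e9:.0f} GB")
    print(f"total:     {est.total / 1e9:.0f} GB")
```

Under these assumptions the state costs 16 bytes per parameter, so a 7-billion-parameter model needs about 112 GB before a single activation is stored, which is why memory, rather than raw compute, is often the first limit hit on one card.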

Let's now walk through each layer in detail, and then examine how data flows between the CPU and GPU, how the GPU actually runs training computations, and how to optimize and monitor training.

Megatrain, full-precision training on a single GPU: what the paper actually proposes. The base problem is well understood: training large LLMs requires distributing the model across dozens or hundreds of GPUs because the parameters, gradients, and optimizer states simply don't fit in the VRAM of a single card. However, even on a single GPU, there are many ways to train larger models and make them more efficient. In this notebook, we'll explore some of these techniques, including mixed precision training, activation checkpointing, gradient accumulation, and more.
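Gradient accumulation, one of the techniques named above, can be illustrated without any framework. The sketch below uses a toy one-parameter model `y_hat = w * x` with mean squared error, and shows that summing the gradients of equally sized micro-batches, each scaled by `1 / accum_steps`, reproduces the full-batch gradient. The model, the data, and the function names are assumptions made for this illustration; a real training loop would let the framework compute the gradients.

```python
def mse_grad(w, xs, ys):
    """Gradient of mean squared error w.r.t. w for the model y_hat = w * x."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Accumulate gradients over micro-batches instead of one large batch.

    Only one micro-batch needs to be processed at a time, which is what
    saves memory; the optimizer step happens once, after the loop.
    """
    if len(xs) % micro_batch_size != 0:
        raise ValueError("batch must split evenly into micro-batches")
    accum_steps = len(xs) // micro_batch_size
    grad = 0.0
    for step in range(accum_steps):
        lo = step * micro_batch_size
        hi = lo + micro_batch_size
        # Scale each micro-batch gradient so the sum equals the full-batch mean.
        grad += mse_grad(w, xs[lo:hi], ys[lo:hi]) / accum_steps
    return grad


def sgd_step(w, grad, lr):
    """Plain gradient-descent update, applied once per accumulated batch."""
    return w - lr * grad


if __name__ == "__main__":
    xs, ys = [1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 6.0, 8.0]
    w = 0.5
    full = mse_grad(w, xs, ys)
    accumulated = accumulated_grad(w, xs, ys, micro_batch_size=2)
    print(full, accumulated)
    print(sgd_step(w, accumulated, lr=0.01))
```

The trade-off is time for memory: the effective batch size stays the same, but peak activation memory is set by the micro-batch, at the price of more forward and backward passes per optimizer step.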

You want to train a deep learning model and you want to take advantage of multiple GPUs, a TPU, or even multiple workers for some extra speed or a larger batch size.

When training transformer models on a single GPU, it's important to optimize for both speed and memory efficiency to make the most of limited resources. Several key parameters and techniques are worth considering.

The single-GPU training problem nobody solved: for years, training massive language models has felt like a luxury reserved for well-funded labs with warehouse-scale clusters. But what if the real bottleneck wasn't compute power, but how we've organized our memory? Megatrain flips conventional wisdom on its head. Instead of cramming everything onto the GPU, it treats the GPU as a temporary...

PyTorch launches a GPU kernel for each tensor op, which is fine for large ops but inefficient for many small ones. Kernel fusion combines multiple small tensor ops into one optimized kernel.
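The idea behind fusing small ops can be shown with a plain-Python analogy. This is not GPU code and does not use PyTorch: each list comprehension stands in for one kernel launch that reads its input and writes a full intermediate result. The function names and the "launch" counter are invented for the illustration. In PyTorch itself, `torch.compile` is one mechanism that can perform this kind of fusion; the text above does not name the tool, so treat that as an assumption.

```python
def scale_shift_relu_unfused(xs, scale, shift):
    """Three separate passes, each standing in for one kernel launch."""
    launches = 0
    scaled = [x * scale for x in xs]            # pass 1: writes an intermediate
    launches += 1
    shifted = [x + shift for x in scaled]       # pass 2: writes another intermediate
    launches += 1
    activated = [max(0.0, x) for x in shifted]  # pass 3: final result
    launches += 1
    return activated, launches


def scale_shift_relu_fused(xs, scale, shift):
    """One pass computing the same result with no intermediates."""
    activated = [max(0.0, x * scale + shift) for x in xs]
    return activated, 1


if __name__ == "__main__":
    data = [-2.0, 0.5, 3.0]
    print(scale_shift_relu_unfused(data, scale=2.0, shift=-1.0))
    print(scale_shift_relu_fused(data, scale=2.0, shift=-1.0))
```

Both functions return the same values; the fused version touches the data once instead of three times. On a GPU the saving comes from fewer launches and fewer round trips to memory, which matters most when the individual ops are small.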
