Efficient RL via Disentangled Environment and Agent Representations

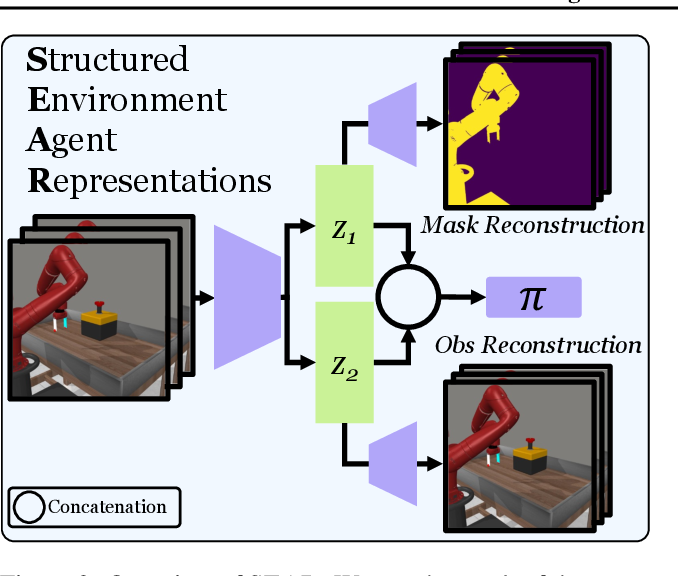

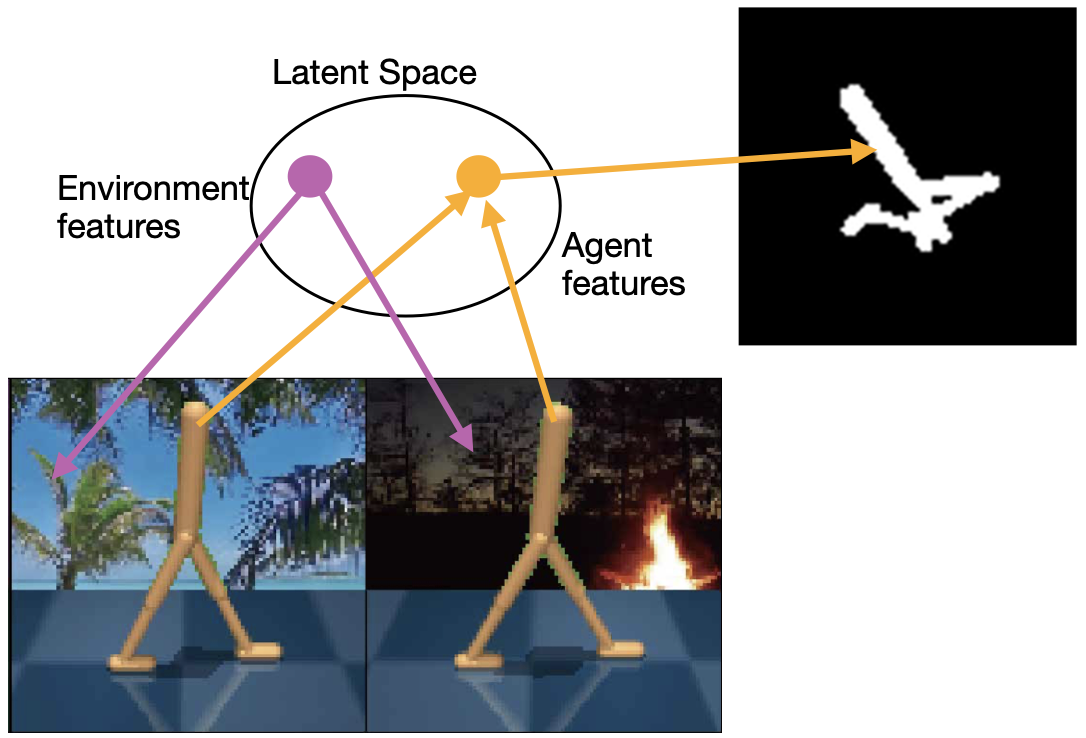

Figure 2 from Efficient RL via Disentangled Environment and Agent Representations.

We propose an approach for learning structured representations for RL algorithms, using visual knowledge of the agent, such as its shape or mask, which is often inexpensive to obtain. This is incorporated into the RL objective using a simple auxiliary loss.
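The idea of folding agent-mask knowledge into the RL objective through an auxiliary loss can be illustrated with a minimal sketch. It assumes, for illustration only, that the auxiliary term is a per-pixel binary cross-entropy between predicted agent-mask logits and the ground-truth agent mask, added to the RL loss with a weighting coefficient. The names `mask_bce_loss`, `total_loss`, and `aux_weight` are hypothetical and are not taken from the paper or its code.

```python
import math
from typing import Sequence


def mask_bce_loss(pred_logits: Sequence[float], target_mask: Sequence[int]) -> float:
    """Mean binary cross-entropy between per-pixel logits and a 0/1 agent mask.

    Hypothetical helper for illustration; not from the SEAR codebase.
    """
    if len(pred_logits) != len(target_mask) or not pred_logits:
        raise ValueError("logits and mask must be non-empty and the same length")
    total = 0.0
    for x, y in zip(pred_logits, target_mask):
        # Numerically stable BCE-with-logits: max(x, 0) - x*y + log(1 + exp(-|x|))
        total += max(x, 0.0) - x * y + math.log1p(math.exp(-abs(x)))
    return total / len(pred_logits)


def total_loss(rl_loss: float, pred_logits: Sequence[float],
               target_mask: Sequence[int], aux_weight: float = 1.0) -> float:
    """RL loss plus a weighted auxiliary mask loss (an assumed form of the objective)."""
    return rl_loss + aux_weight * mask_bce_loss(pred_logits, target_mask)


if __name__ == "__main__":
    # Two pixels with logit 0: each BCE term is ln 2, whatever the label.
    print(total_loss(1.0, [0.0, 0.0], [1, 0], aux_weight=0.5))
```

For example, two pixels predicted with logit 0 give a mask loss of ln 2 ≈ 0.693, so `total_loss(1.0, [0.0, 0.0], [1, 0], aux_weight=0.5)` comes out at roughly 1.347. In a real training loop the same sum would be computed on framework tensors so that gradients from the mask term shape the encoder alongside the RL loss.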

Figure 1 from Efficient RL via Disentangled Environment and Agent Representations.

Reinforcement learning (RL) algorithms can learn robotic control tasks from visual observations, but they often require a large amount of data, especially when … This is an implementation of SEAR (Structured Environment Agent Representations) from Efficient RL via Disentangled Environment and Agent Representations, by Kevin Gmelin, Shikhar Bahl, Russell Mendonca, and Deepak Pathak.

DEAR: Disentangled Environment and Agent Representations for …

Efficient RL via Disentangled Environment and Agent Representations. Kevin Gmelin*, Shikhar Bahl*, Russell Mendonca, Deepak Pathak. Goal: general-purpose robots that can do thousands of tasks in thousands of environments, approached here with visual reinforcement learning. This work proposes an approach for learning structured representations for RL algorithms, using visual knowledge of the agent, such as its shape or mask, which is often inexpensive to obtain, and shows that this method outperforms state-of-the-art model-free approaches.
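One way to picture a representation disentangled into agent and environment parts is a latent vector split in two, with the agent-mask prediction reading only the agent part. This is a hypothetical sketch: the contiguous split, the `agent_dim` parameter, and the linear `mask_head` are illustrative assumptions, not the architecture used in the paper.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SplitLatent:
    """A latent vector split into agent and environment parts (illustrative)."""
    agent: List[float]
    env: List[float]

    def full(self) -> List[float]:
        # The policy/critic would see both parts concatenated.
        return self.agent + self.env


def split_latent(z: List[float], agent_dim: int) -> SplitLatent:
    """Hypothetical contiguous split: the first `agent_dim` entries form the agent part."""
    if not 0 < agent_dim < len(z):
        raise ValueError("agent_dim must leave at least one entry for each part")
    return SplitLatent(agent=list(z[:agent_dim]), env=list(z[agent_dim:]))


def mask_head(z_agent: List[float], weights: List[List[float]]) -> List[float]:
    """Linear map from agent features only to per-pixel mask logits (one row per pixel)."""
    for row in weights:
        if len(row) != len(z_agent):
            raise ValueError("each weight row must match the agent feature size")
    return [sum(w * x for w, x in zip(row, z_agent)) for row in weights]


if __name__ == "__main__":
    parts = split_latent([0.5, -1.0, 2.0, 3.0], agent_dim=2)
    print(parts.agent, parts.env)  # [0.5, -1.0] [2.0, 3.0]
    print(mask_head(parts.agent, [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]))  # [0.5, -1.0, -0.5]
```

The design point is where gradients would flow: in an autodiff framework, a mask loss computed from `mask_head(parts.agent, ...)` would only update the agent slice of the latent, which pushes agent-specific information there, while the policy and critic would consume `parts.full()`.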