ECS 154B/201A Computer Architecture: Parallel Memory Systems

In the processor section, we learned about instruction-level parallelism and saw that the amount of parallelism available in our applications is limited. However, we can overcome this limit by leveraging multiple processors. ECS 154B/201A WQ 24, created by Jason Lowe-Power • 35 items • updated March 19, 2024.

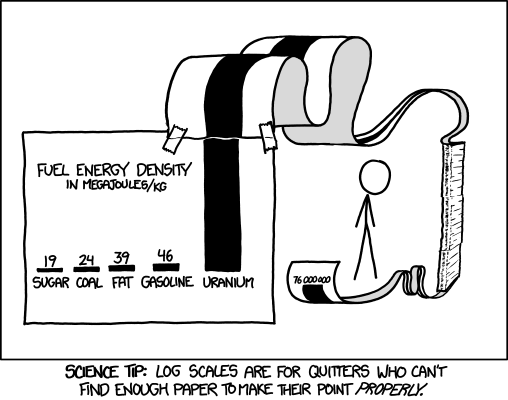

ECS 154B Computer Architecture: Parallel Systems Performance

A good resource is a recent MIT course, "The Missing Semester," which teaches important tools that aren't necessarily covered in a "normal" computer science curriculum.

ECS 201A Computer Architecture. Description: modern research topics and methods in computer architecture; design implications of memory latency and bandwidth limitations; performance enhancement via within-processor and between-processor parallelism.

SIMD (single instruction, multiple data): these machines, also called array processors, have a single instruction stream and multiple independent arithmetic units, each connected to its own memory.
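To make the SIMD definition concrete, here is a minimal C sketch of an array processor under assumed toy parameters. The names `NUM_LANES`, `LANE_MEM`, `ArrayProcessor`, and `simd_add` are hypothetical and do not describe any real machine. One broadcast add instruction is applied by every arithmetic unit to its own private memory; the loop only models the effect of lanes that, in hardware, execute in lockstep.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical toy array processor: NUM_LANES arithmetic units,
 * each with its own private memory of LANE_MEM words. */
#define NUM_LANES 4
#define LANE_MEM 8

typedef struct {
    int mem[NUM_LANES][LANE_MEM]; /* lane i may only touch mem[i][...] */
} ArrayProcessor;

/* One instruction broadcast by the control unit: every lane computes
 * mem[dst] = mem[a] + mem[b] using its *own* memory. Real hardware does
 * all lanes in the same step; this loop just models the result. */
static void simd_add(ArrayProcessor *p, size_t dst, size_t a, size_t b) {
    assert(dst < LANE_MEM && a < LANE_MEM && b < LANE_MEM);
    for (size_t lane = 0; lane < NUM_LANES; lane++) {
        p->mem[lane][dst] = p->mem[lane][a] + p->mem[lane][b];
    }
}
```

For example, if lane `i` holds `i` in word 0 and `10 * i` in word 1, a single `simd_add(&p, 2, 0, 1)` leaves `11 * i` in word 2 of every lane: one instruction, many data elements.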

GitHub, Saiflakhani: ECS 201C Parallel Architecture Code for the Class

While coherence ensures that caches stay transparent to the programmer, the memory consistency model is actually part of the ISA: it defines how the programmer should expect multithreaded programs to behave.

The class is broken into four main components: introduction to computer architecture, processor architecture, memory architecture, and parallel architecture. This quarter (Winter 2025), lecture will be in person in Teaching and Learning Complex 1010.

This video talks about how to implement a barrier in a shared-memory system. This implementation will motivate our next few videos, which cover the hardware implementation of these systems.

Since no single memory technology is best (fast memories are small and expensive, while large memories are slow), we want to create a memory hierarchy with caches. We will discuss the underlying principles that make caches efficient and how hardware caches are designed.
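The distinction between coherence and consistency shows up in the classic "message passing" litmus test. The sketch below uses C11 atomics and POSIX threads as a language-level stand-in for ISA ordering rules (an assumption for illustration; `producer`, `consumer`, and `run_message_passing` are hypothetical names). The release store paired with the acquire load guarantees that a consumer that sees `flag == 1` also sees `data == 42`. If both operations were `memory_order_relaxed`, the C11 model would no longer order `data` relative to `flag` (and the plain access to `data` would become a data race), which is the kind of reordering a weakly ordered ISA is permitted to expose even though its caches remain coherent.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>

/* Shared state for the message-passing litmus test. */
static int data;        /* ordinary (non-atomic) payload */
static atomic_int flag; /* synchronization variable */

static void *producer(void *arg) {
    (void)arg;
    data = 42;                                             /* 1. write payload */
    atomic_store_explicit(&flag, 1, memory_order_release); /* 2. publish it */
    return NULL;
}

static void *consumer(void *arg) {
    int *out = arg;
    /* Spin until the producer publishes the flag. */
    while (atomic_load_explicit(&flag, memory_order_acquire) == 0) {
    }
    /* Acquire pairs with release, so this read is guaranteed to see 42. */
    *out = data;
    return NULL;
}

/* Runs one trial and returns the value of `data` the consumer observed. */
static int run_message_passing(void) {
    pthread_t p, c;
    int seen = -1;
    int rc;
    data = 0;
    atomic_store(&flag, 0);
    rc = pthread_create(&c, NULL, consumer, &seen);
    assert(rc == 0);
    rc = pthread_create(&p, NULL, producer, NULL);
    assert(rc == 0);
    pthread_join(p, NULL);
    pthread_join(c, NULL);
    return seen;
}
```

Running many trials never yields anything but 42 with release/acquire; the guarantee comes from the consistency model, not from coherence.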
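A barrier in a shared-memory system can be built from nothing more than shared variables and atomic read-modify-write operations. Below is a minimal sketch of a centralized sense-reversing barrier in C11, assuming sequentially consistent atomics and POSIX threads. It is one common software design, not necessarily the one presented in the video, and `Barrier`, `barrier_wait`, and the demo harness are hypothetical names.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>
#include <stdbool.h>

/* Centralized sense-reversing barrier built from shared-memory atomics
 * (default memory_order_seq_cst everywhere). */
typedef struct {
    atomic_int count;  /* threads still to arrive in the current episode */
    atomic_bool sense; /* global sense; flips each time the barrier opens */
    int n;             /* number of participating threads */
} Barrier;

static void barrier_init(Barrier *b, int n) {
    assert(n > 0);
    atomic_init(&b->count, n);
    atomic_init(&b->sense, false);
    b->n = n;
}

/* *local_sense is private to the calling thread and must start as false. */
static void barrier_wait(Barrier *b, bool *local_sense) {
    *local_sense = !*local_sense; /* sense that will release this episode */
    if (atomic_fetch_sub(&b->count, 1) == 1) {
        /* Last arrival: reset the count first, then release the waiters. */
        atomic_store(&b->count, b->n);
        atomic_store(&b->sense, *local_sense);
    } else {
        /* Everyone else spins on the single shared sense flag. */
        while (atomic_load(&b->sense) != *local_sense) {
        }
    }
}

/* ---- Hypothetical demo harness: checks that no thread leaves early ---- */
#define DEMO_THREADS 4
#define DEMO_PHASES 20

static Barrier demo_barrier;
static atomic_int arrived[DEMO_PHASES]; /* arrivals per phase */
static atomic_int violations;           /* threads that left a phase early */

static void *demo_worker(void *arg) {
    (void)arg;
    bool local_sense = false;
    for (int phase = 0; phase < DEMO_PHASES; phase++) {
        atomic_fetch_add(&arrived[phase], 1);
        barrier_wait(&demo_barrier, &local_sense);
        /* After the barrier, all threads must have arrived in this phase. */
        if (atomic_load(&arrived[phase]) != DEMO_THREADS) {
            atomic_fetch_add(&violations, 1);
        }
    }
    return NULL;
}

/* Runs the demo and returns the number of early departures (0 if correct). */
static int run_barrier_demo(void) {
    pthread_t t[DEMO_THREADS];
    barrier_init(&demo_barrier, DEMO_THREADS);
    for (int i = 0; i < DEMO_PHASES; i++) atomic_store(&arrived[i], 0);
    atomic_store(&violations, 0);
    for (int i = 0; i < DEMO_THREADS; i++) pthread_create(&t[i], NULL, demo_worker, NULL);
    for (int i = 0; i < DEMO_THREADS; i++) pthread_join(t[i], NULL);
    return atomic_load(&violations);
}
```

Flipping the sense every episode lets the barrier be reused immediately: a thread that races ahead into the next `barrier_wait` waits for the opposite sense, so it cannot slip through the previous episode. Note that all waiters spin on one shared `sense` word, so the barrier's performance depends heavily on how the cache-coherence hardware handles that line.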
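The main principle that makes caches efficient is locality. The following toy C simulator of a direct-mapped cache illustrates how hardware splits each address into offset, index, and tag bits; the block size and set count are assumed values, and `DirectMappedCache` and `cache_access` are hypothetical names.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical toy geometry: 16 sets of 64-byte blocks, one way per set. */
#define BLOCK_BYTES 64u
#define NUM_SETS 16u
static_assert(BLOCK_BYTES > 0 && NUM_SETS > 0, "cache geometry must be non-empty");

typedef struct {
    bool valid[NUM_SETS];
    uint64_t tag[NUM_SETS];
    unsigned hits, misses;
} DirectMappedCache;

/* Splits addr into offset | index | tag and looks it up. Returns true on a
 * hit; on a miss the block is filled, evicting whatever occupied that set
 * (a direct-mapped cache has exactly one candidate location). */
static bool cache_access(DirectMappedCache *c, uint64_t addr) {
    uint64_t block = addr / BLOCK_BYTES; /* drop the offset bits */
    uint64_t index = block % NUM_SETS;   /* which set */
    uint64_t tag = block / NUM_SETS;     /* remaining high-order bits */
    if (c->valid[index] && c->tag[index] == tag) {
        c->hits++;
        return true;
    }
    c->valid[index] = true;
    c->tag[index] = tag;
    c->misses++;
    return false;
}
```

Walking 1024 bytes sequentially in 4-byte steps gives 16 misses (one per 64-byte block) and 240 hits, thanks to spatial locality. Alternating between addresses 0 and 1024, which map to the same set, misses on every access: a conflict that a set-associative design would avoid.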
