E Transparency Explainability Contestability AI Governance Framework

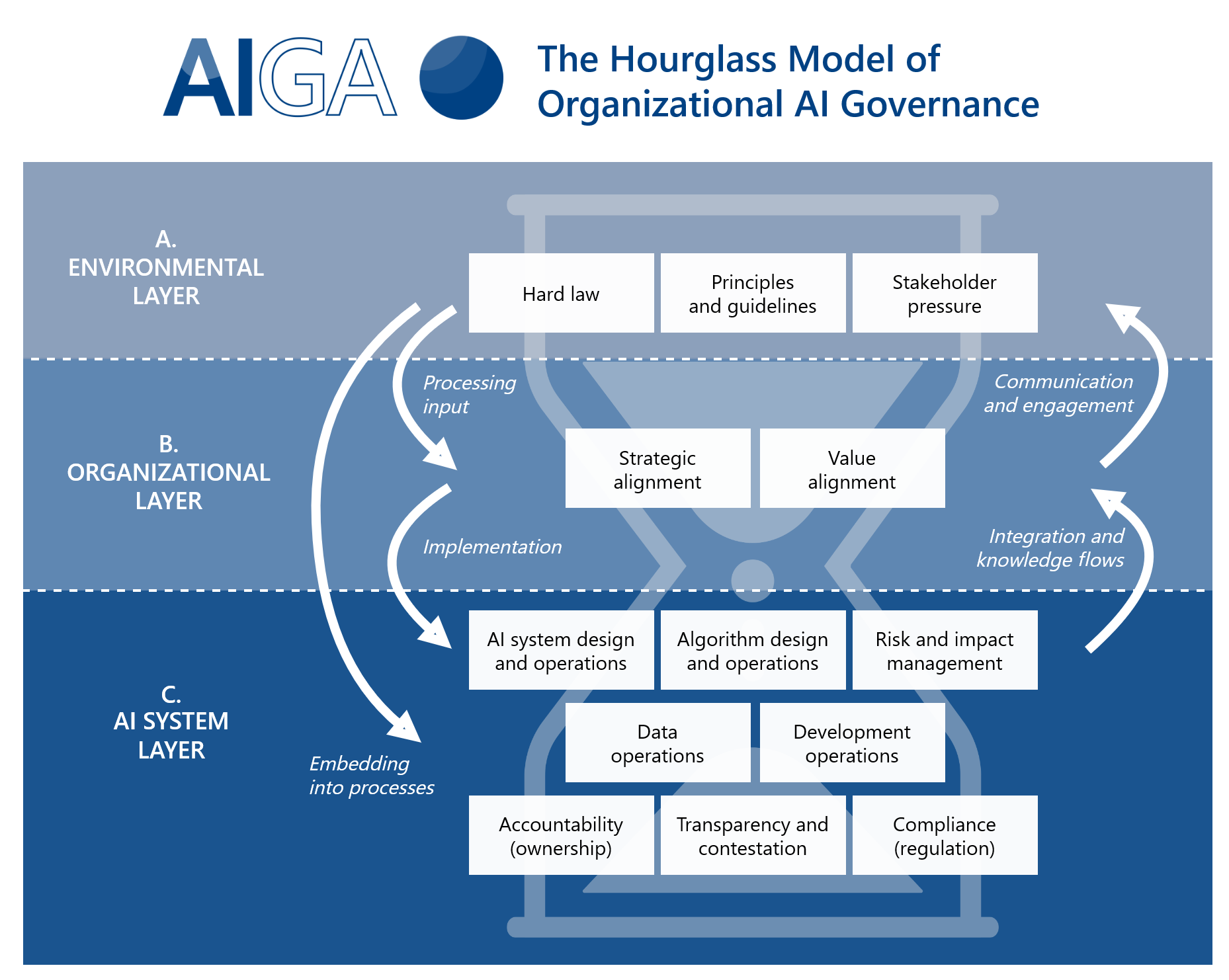

AI Governance Framework Artificial Intelligence Governance And Auditing

After identifying the appropriate transparency, explainability, and contestability expectations, the AI system owner should ensure that the organization designs appropriate technical and organizational structures to satisfy the TCE expectations and to align the AI system with the organization’s values and risk tolerance.

This paper introduces the TEUT framework, which integrates transparency, explainability, uncertainty, and trust calibration, to enhance trust in AI systems while aligning with existing governance initiatives, thereby paving the way for more accountable and ethical AI deployment.

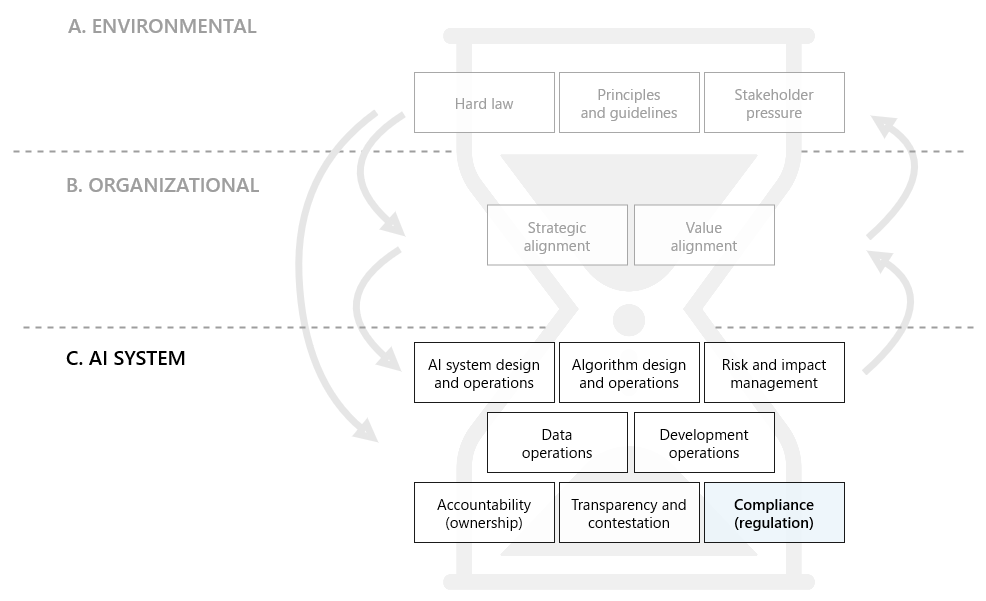

H Compliance AI Governance Framework

Grounded in a systematic literature review, this paper presents the first rigorous formal definition of contestability in explainable AI, directly aligned with stakeholder requirements and regulatory mandates.

Learn how explainable AI governance makes AI transparent, interpretable, and accountable. Discover benefits, key components, tools, and best practices to reduce bias, meet regulations, and build trust.

My research aims to inform policy and public institutions on how to implement responsible AI by designing for explainability and contestability. The remote doctoral consortium would allow me to discuss with peers how these principles can be realized and account for human factors in their design.

The goal of this study was to investigate what ethical guidelines organizations have defined for the development of transparent and explainable AI systems and to evaluate how explainability requirements can be defined in practice.

The AI Governance Lifecycle AI Governance Framework

Transparency is the organizational practice of making AI information available to stakeholders: model cards, datasheets, decision rationale, limitation disclosures, and governance visibility. You can be transparent about using opaque models (disclosing the opacity), or use interpretable models without being transparent (failing to communicate).

Transparency and explainability (Principle 1.3): this principle is about transparency and responsible disclosure around AI systems to ensure that people understand when they are engaging with them and can challenge outcomes.

This framework is built on two key principles: AI decision making must be transparent, explainable, and fair, and AI solutions should focus on human needs. Instead of rigid regulations, the framework aims to build public trust while encouraging innovation, positioning itself as a global model for ethical AI development.

AI transparency is the disclosure of an AI system’s data sources, development processes, limitations, and operational use in a way that allows stakeholders to understand what the system does, who is responsible for it, and how it is governed, without necessarily explaining its internal logic.
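As a rough illustration of the disclosure items named above (data sources, development processes, limitations, operational use, responsibility, and governance), the following Python sketch records them as a simple data structure and reports which items are still undisclosed. The class name `TransparencyRecord`, its field names, the helper `missing_disclosures`, and all example values are hypothetical and chosen only for illustration; they are not taken from any standard or from the frameworks discussed here.

```python
from dataclasses import dataclass, field, fields


@dataclass
class TransparencyRecord:
    """Hypothetical disclosure record; field names are illustrative only."""
    system_name: str
    responsible_owner: str
    data_sources: list[str] = field(default_factory=list)
    development_process: str = ""
    limitations: list[str] = field(default_factory=list)
    operational_use: str = ""
    governance_contact: str = ""
    # True when the model's internal logic is not interpretable.
    # Disclosing this opacity is itself a transparency measure.
    model_is_opaque: bool = False


def missing_disclosures(record: TransparencyRecord) -> list[str]:
    """Return the names of disclosure fields that are still empty."""
    missing = []
    for f in fields(record):
        value = getattr(record, f.name)
        # A boolean is a complete disclosure whether True or False.
        if isinstance(value, bool):
            continue
        if not value:
            missing.append(f.name)
    return missing


# Made-up example values, for illustration only.
record = TransparencyRecord(
    system_name="loan-screening-model",
    responsible_owner="Credit Risk Team",
    data_sources=["internal application history"],
    limitations=["not validated for applicants under 21"],
    model_is_opaque=True,
)

print(missing_disclosures(record))
# ['development_process', 'operational_use', 'governance_contact']
```

Note that the record can be complete even when `model_is_opaque` is true: the sketch treats transparency as a property of what is disclosed, not of how interpretable the model is, which mirrors the distinction drawn above.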
