Docker Basic Data Ingestion To Postgres Database Using Python By

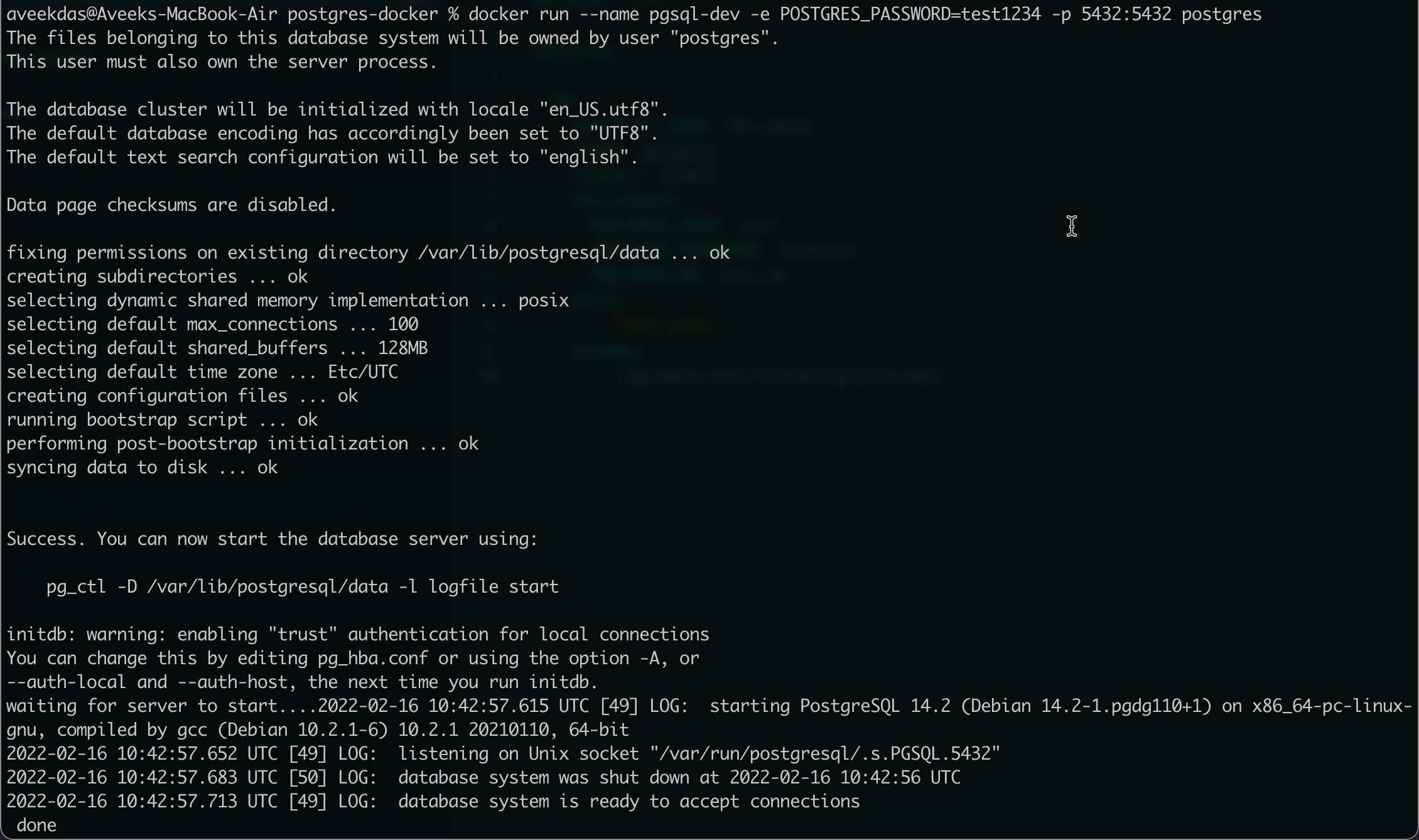

This project was built as a data engineering practice project to demonstrate how to build a simple containerized ingestion pipeline using Docker and PostgreSQL. To start ingesting data, we first need somewhere to ingest it to, so let’s start by setting up the Postgres database. We are going to do that with Docker because it’s a lot easier than.
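As a rough illustration of the database setup step, the sketch below assembles a `docker run` command for the official `postgres` image. The container name `pg-ingest`, the image tag `postgres:16`, the credentials, and the helper name `postgres_run_command` are all assumptions made for this example and are not taken from the original project.

```python
import shlex


def postgres_run_command(user, password, db, host_port=5432,
                         image="postgres:16", name="pg-ingest"):
    """Build (but do not run) a `docker run` command for a Postgres container.

    All default values here are hypothetical placeholders.
    """
    return [
        "docker", "run", "-d",              # run detached, in the background
        "--name", name,                     # container name (assumed)
        "-e", f"POSTGRES_USER={user}",      # env vars read by the official image
        "-e", f"POSTGRES_PASSWORD={password}",
        "-e", f"POSTGRES_DB={db}",
        "-p", f"{host_port}:5432",          # map a host port to Postgres' 5432
        image,
    ]


if __name__ == "__main__":
    # Print the command so it can be inspected or pasted into a shell.
    print(shlex.join(postgres_run_command("root", "root", "ny_taxi")))
```

To actually start the container, the returned list could be passed to `subprocess.run(cmd, check=True)` on a machine where Docker is installed.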

It demonstrates how to set up a Docker environment with PostgreSQL, create a database schema, and use a builder pattern to establish a database provider for PostgreSQL.

Using Docker to encapsulate and run the pipeline, ingesting NYC taxi data into PostgreSQL.

2. Convert the Jupyter notebook to a Python script. This converts the Jupyter notebook into a Python script (upload data.py), moving all imports to the top and removing unnecessary lines such as the %time magic and inline prints.
3. Add command-line arguments with argparse.

In my upcoming article, I’ll take you through the process of dockerizing your ingestion script to create a pipeline and running Postgres and pgAdmin using Docker Compose.

The aim of this project is to demonstrate how to create a reliable data pipeline with Apache Airflow that reads data from CSV files and writes it into a PostgreSQL database. We will explore the integration of various Airflow components to ensure effective data handling and maintain data integrity.
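The argparse step mentioned above could look roughly like the following. This is a minimal sketch, not the original script: the flag names (`--user`, `--password`, `--host`, `--port`, `--db`, `--table-name`, `--url`) and the helper functions are assumptions chosen for illustration.

```python
import argparse
from urllib.parse import quote_plus


def build_parser():
    """Create the command-line interface for a hypothetical ingestion script."""
    parser = argparse.ArgumentParser(description="Ingest CSV data into Postgres")
    parser.add_argument("--user", required=True, help="Postgres user name")
    parser.add_argument("--password", required=True, help="Postgres password")
    parser.add_argument("--host", default="localhost", help="Postgres host")
    parser.add_argument("--port", type=int, default=5432, help="Postgres port")
    parser.add_argument("--db", required=True, help="database name")
    parser.add_argument("--table-name", required=True, help="destination table")
    parser.add_argument("--url", required=True, help="URL of the CSV file")
    return parser


def connection_url(args):
    """Build a postgresql:// URL, percent-encoding the credentials."""
    return (
        f"postgresql://{quote_plus(args.user)}:{quote_plus(args.password)}"
        f"@{args.host}:{args.port}/{args.db}"
    )
```

A script built this way would call `build_parser().parse_args()` at startup and hand the resulting URL to whichever database driver it uses, which keeps credentials and table names out of the source code.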

How To Efficiently Load Data Into Postgres Using Python

This page describes the configuration and implementation of the custom Docker container used by the SageMaker Processing job to perform distributed data ingestion into the Aurora PostgreSQL pgvector store.

Using Python and pandas, we will extract the data from a public repository and upload the raw data to a PostgreSQL database. This assumes that we have an existing PostgreSQL database running in a Docker container.

This first part involved setting up PostgreSQL with Docker and loading data with Python, but the most useful part was honestly the debugging process around it. Along the.
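To make the loading step concrete, here is a small sketch of batched inserts. Note that it deliberately swaps pandas for the standard-library `csv` module and a generic DB-API connection, so that it runs without third-party packages. The function names, the batch size, and the `placeholder` parameter are assumptions: psycopg2 uses `%s` as its parameter marker, while `sqlite3` uses `?`.

```python
import csv
from itertools import islice


def iter_batches(rows, batch_size):
    """Yield lists of at most `batch_size` rows from any iterable."""
    iterator = iter(rows)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


def ingest_csv(csv_file, conn, table_name, placeholder="%s", batch_size=10_000):
    """Insert CSV rows in batches over a DB-API connection; return the row count.

    The table name and CSV header are interpolated into the SQL text, so they
    must come from a trusted source. Row values are sent as bound parameters.
    The destination table is assumed to exist already.
    """
    reader = csv.reader(csv_file)
    columns = next(reader)  # first line is treated as the header
    sql = "INSERT INTO {} ({}) VALUES ({})".format(
        table_name,
        ", ".join(columns),
        ", ".join([placeholder] * len(columns)),
    )
    total = 0
    cursor = conn.cursor()
    for batch in iter_batches(reader, batch_size):
        cursor.executemany(sql, batch)  # one round of inserts per batch
        total += len(batch)
    conn.commit()
    return total
```

Reading and inserting in batches keeps memory use bounded for large files. With pandas, the comparable approach would be iterating over `pandas.read_csv(..., chunksize=...)` and appending each chunk with `DataFrame.to_sql(..., if_exists="append")`.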

