DistilBERT Model Tutorial: Transformers in NLP

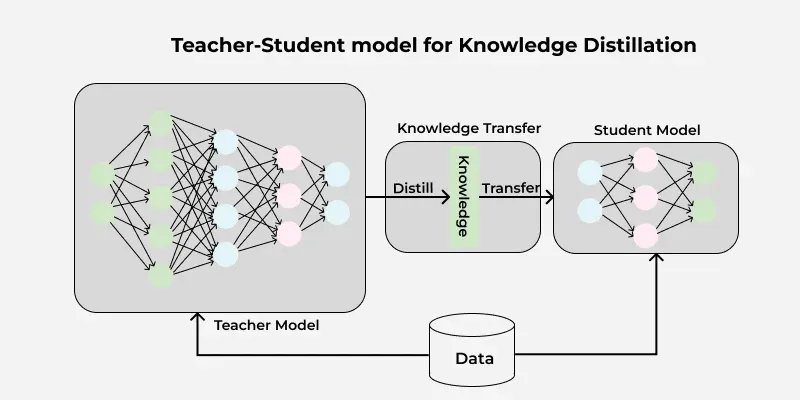

The DistilBERT paper proposes a method to pre-train a smaller general-purpose language representation model, called DistilBERT, which can then be fine-tuned with good performance on a wide range of tasks, like its larger counterparts. Let's implement DistilBERT for a text classification task using the Transformers library by Hugging Face. We'll use the IMDB movie review dataset to classify reviews as positive or negative.
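As a starting point, here is a minimal inference sketch for classifying reviews as positive or negative. It is not a full IMDB training pipeline: it assumes the publicly available `distilbert-base-uncased-finetuned-sst-2-english` checkpoint (fine-tuned on SST-2 rather than IMDB) and that `torch` and `transformers` are installed. The function names `label_from_logits` and `classify_reviews` are hypothetical helpers chosen for illustration.

```python
from typing import Dict, List, Sequence


def label_from_logits(logits: Sequence[float], id2label: Dict[int, str]) -> str:
    """Return the label whose logit is largest."""
    best_index = max(range(len(logits)), key=lambda i: logits[i])
    return id2label[best_index]


def classify_reviews(
    reviews: List[str],
    # Assumption: an SST-2 sentiment checkpoint stands in for an IMDB-tuned one.
    checkpoint: str = "distilbert-base-uncased-finetuned-sst-2-english",
) -> List[str]:
    """Predict a sentiment label for each review with a DistilBERT checkpoint."""
    # Imported lazily so label_from_logits works without these packages.
    import torch
    from transformers import AutoModelForSequenceClassification, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(checkpoint)
    model = AutoModelForSequenceClassification.from_pretrained(checkpoint)
    model.eval()  # disable dropout for inference

    # Pad to the longest review and truncate anything past the model limit.
    inputs = tokenizer(reviews, padding=True, truncation=True, return_tensors="pt")
    with torch.no_grad():
        logits = model(**inputs).logits  # shape: (num_reviews, num_labels)

    return [label_from_logits(row.tolist(), model.config.id2label) for row in logits]
```

Calling `classify_reviews(["A moving, beautifully shot film."])` downloads the checkpoint on first use and returns one label per review. To work with IMDB specifically, you would fine-tune a base DistilBERT checkpoint on that dataset instead of reusing the SST-2 one.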

This guide covers how DistilBERT works and how to leverage it for efficient NLP tasks, including text classification and sentiment analysis. The primary motivation behind DistilBERT is the need for compact, efficient models for NLP applications that run in real time on edge devices. BERT and DistilBERT exemplify the trajectory from accuracy-centric, resource-intensive transformer models to resource-efficient, deployment-ready representations.

Beyond using a pre-trained DistilBERT model as-is, you can fine-tune it for your own purpose. DistilBERT is trained with a triple loss function that combines a distillation loss, a masked language modeling (MLM) loss, and a cosine similarity loss. It removes the token-type embeddings and the pooler layer from BERT to keep things lean.

Using DistilBERT is easy with libraries like Hugging Face's Transformers. It can be fine-tuned for different tasks and performs well at lower computational cost.
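To make the triple loss concrete, here is a rough sketch in plain Python for a single token position. It illustrates the three terms under stated assumptions and is not the original training code: the temperature of 2.0, the equal weights, and all function names are hypothetical choices for illustration.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax with optional temperature scaling."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature: float = 2.0) -> float:
    """Cross-entropy of the student's softened predictions against the teacher's."""
    teacher_probs = softmax(teacher_logits, temperature)
    student_probs = softmax(student_logits, temperature)
    return -sum(t * math.log(s) for t, s in zip(teacher_probs, student_probs))


def mlm_loss(student_logits, target_index: int) -> float:
    """Standard cross-entropy against the true masked token."""
    return -math.log(softmax(student_logits)[target_index])


def cosine_loss(student_hidden, teacher_hidden) -> float:
    """1 - cosine similarity, pulling the student hidden state toward the teacher's."""
    dot = sum(s * t for s, t in zip(student_hidden, teacher_hidden))
    norms = math.sqrt(sum(s * s for s in student_hidden)) * math.sqrt(
        sum(t * t for t in teacher_hidden)
    )
    return 1.0 - dot / norms


def triple_loss(
    student_logits,
    teacher_logits,
    target_index: int,
    student_hidden,
    teacher_hidden,
    weights: Tuple[float, float, float] = (1.0, 1.0, 1.0),  # hypothetical weights
) -> float:
    """Weighted sum of the distillation, MLM, and cosine terms."""
    w_distill, w_mlm, w_cos = weights
    return (
        w_distill * distillation_loss(student_logits, teacher_logits)
        + w_mlm * mlm_loss(student_logits, target_index)
        + w_cos * cosine_loss(student_hidden, teacher_hidden)
    )
```

In a real training loop these terms would be computed over batches of tensors with a deep learning framework. The scalar version only shows how the student is pushed to match the teacher's output distribution, the true masked token, and the teacher's hidden-state direction at the same time.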
