Dimensionality Reduction in Machine Learning Using Python: Basics Explained and a Quick Implementation

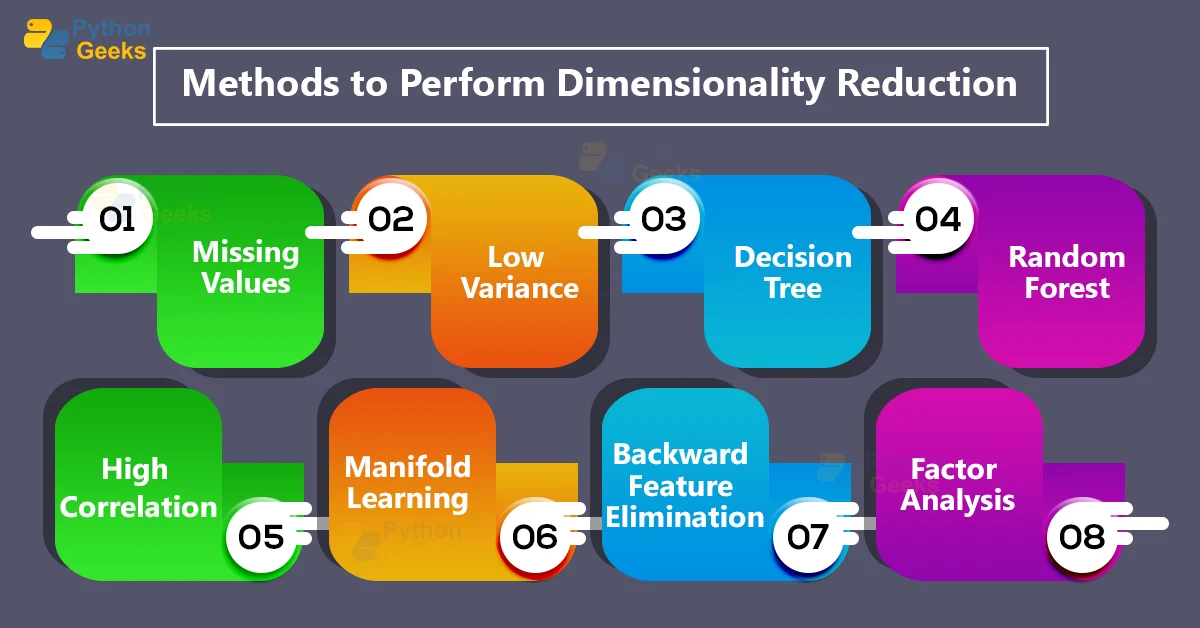

Dimensionality reduction is a statistical, machine-learning-based technique in which we reduce the number of features in a dataset to obtain a dataset with an optimal number of dimensions. This guide shows how to perform dimensionality reduction with feature extraction methods such as PCA, KernelPCA, and truncated SVD, among others, using the scikit-learn library in Python.
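To make the feature extraction methods named above concrete, here is a minimal sketch that runs scikit-learn's `PCA`, `KernelPCA`, and `TruncatedSVD` on the same data. The digits dataset, the choice of two output components, and the `gamma` value for the RBF kernel are illustrative assumptions, not settings taken from the article.

```python
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA, KernelPCA, TruncatedSVD

# Assumed example data: 1,797 handwritten-digit images, 64 pixel features each.
X, _ = load_digits(return_X_y=True)

# Each extractor maps the 64 original features to 2 new ones.
# gamma=1e-3 is an arbitrary illustrative value for the RBF kernel.
reducers = {
    "PCA": PCA(n_components=2),
    "KernelPCA": KernelPCA(n_components=2, kernel="rbf", gamma=1e-3, random_state=0),
    "TruncatedSVD": TruncatedSVD(n_components=2, random_state=0),
}

# fit_transform learns the projection and applies it in one step.
reduced = {name: reducer.fit_transform(X) for name, reducer in reducers.items()}

for name, X_red in reduced.items():
    print(f"{name}: {X.shape} -> {X_red.shape}")
```

All three share the same `fit_transform` interface, so swapping one method for another is usually a one-line change. Which one suits a given dataset depends on whether the structure is roughly linear (PCA, truncated SVD) or not (KernelPCA).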

What is dimensionality reduction? It is the process of reducing the number of input features in a dataset while preserving as much important information as possible. Put another way, it transforms data from a high-dimensional space into a lower-dimensional one while preserving its most meaningful properties. In this step-by-step guide, you'll learn how to set up your environment, load datasets, preprocess data, and apply algorithms such as PCA, t-SNE, and UMAP. The first part of the article discusses some dimensionality reduction theory and introduces algorithms for reducing dimensions in various types of datasets.
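The load, preprocess, and apply steps described above might look like the following sketch. It assumes scikit-learn is installed and uses the bundled wine dataset purely as a stand-in. UMAP is left out because it lives in a separate third-party package rather than in scikit-learn.

```python
from sklearn.datasets import load_wine
from sklearn.decomposition import PCA
from sklearn.manifold import TSNE
from sklearn.preprocessing import StandardScaler

# Load: 178 wine samples described by 13 numeric features (assumed example data).
X, y = load_wine(return_X_y=True)

# Preprocess: standardize each feature to zero mean and unit variance so that
# features measured on large scales do not dominate the projection.
X_scaled = StandardScaler().fit_transform(X)

# Apply PCA: a linear projection onto the 2 directions of highest variance.
pca = PCA(n_components=2)
X_pca = pca.fit_transform(X_scaled)
explained = pca.explained_variance_ratio_.sum()
print(f"Share of variance kept by 2 principal components: {explained:.2f}")

# Apply t-SNE: a nonlinear embedding, mostly used for 2-D visualization.
# perplexity=30 is an illustrative value; it must be smaller than the sample count.
tsne = TSNE(n_components=2, perplexity=30, random_state=0)
X_tsne = tsne.fit_transform(X_scaled)

print(X.shape, "->", X_pca.shape, "and", X_tsne.shape)
```

Note that `PCA` learns a reusable projection that can be applied to new data with `transform`, whereas scikit-learn's `TSNE` only offers `fit_transform`, so it is better suited to exploring a fixed dataset than to preprocessing inside a model pipeline.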

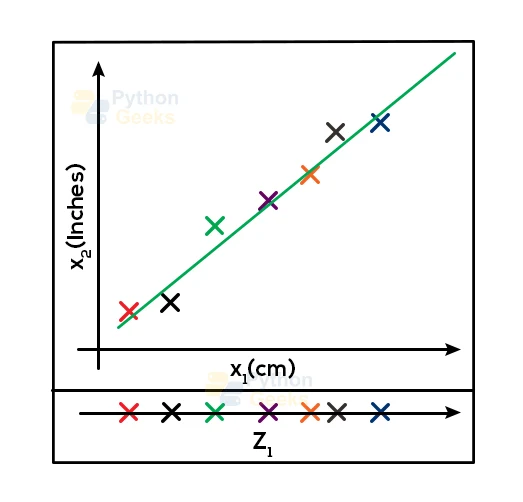

This article explores the theoretical foundations and the Python implementation of the most widely used dimensionality reduction algorithm: principal component analysis (PCA). It also covers how to apply t-SNE, UMAP, autoencoders, and feature selection methods to simplify high-dimensional data, improve model performance, enhance visualization, and reduce computational cost, with clear math, Python examples, and practical best practices.

In summary, dimensionality reduction simplifies large datasets while preserving key patterns. Random forests can rank features for feature selection when labels are available, and PCA supports unsupervised analysis, so data scientists can streamline models and uncover trends even without labeled outcomes. For instance, you might have a dataset with 100 features (input) and wish to simplify it to 10 features (desired output) without losing critical patterns that affect predictions.
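The 100-to-10 scenario can be sketched in two ways: selecting 10 of the original features by random forest importance, or extracting 10 new features with PCA. The synthetic dataset from `make_classification`, the sample count, and the forest size below are assumptions made for illustration only.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier

# Assumed synthetic data: 500 samples, 100 features, 10 of them informative.
X, y = make_classification(
    n_samples=500, n_features=100, n_informative=10, random_state=0
)

# Feature selection (supervised): rank features by random forest importance
# and keep the 10 highest-ranked original columns.
forest = RandomForestClassifier(n_estimators=100, random_state=0).fit(X, y)
top10 = np.argsort(forest.feature_importances_)[::-1][:10]
X_selected = X[:, top10]

# Feature extraction (unsupervised): PCA ignores y and builds 10 new features
# as linear combinations of all 100 original ones.
X_pca = PCA(n_components=10).fit_transform(X)

print("Selected columns:", sorted(top10.tolist()))
print(X.shape, "->", X_selected.shape, "(selection),", X_pca.shape, "(PCA)")
```

Selection keeps the original columns, so the result stays interpretable, but it needs labels. PCA needs no labels, but each output feature mixes all of the inputs.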

