Designing Effective Cache Eviction Algorithms

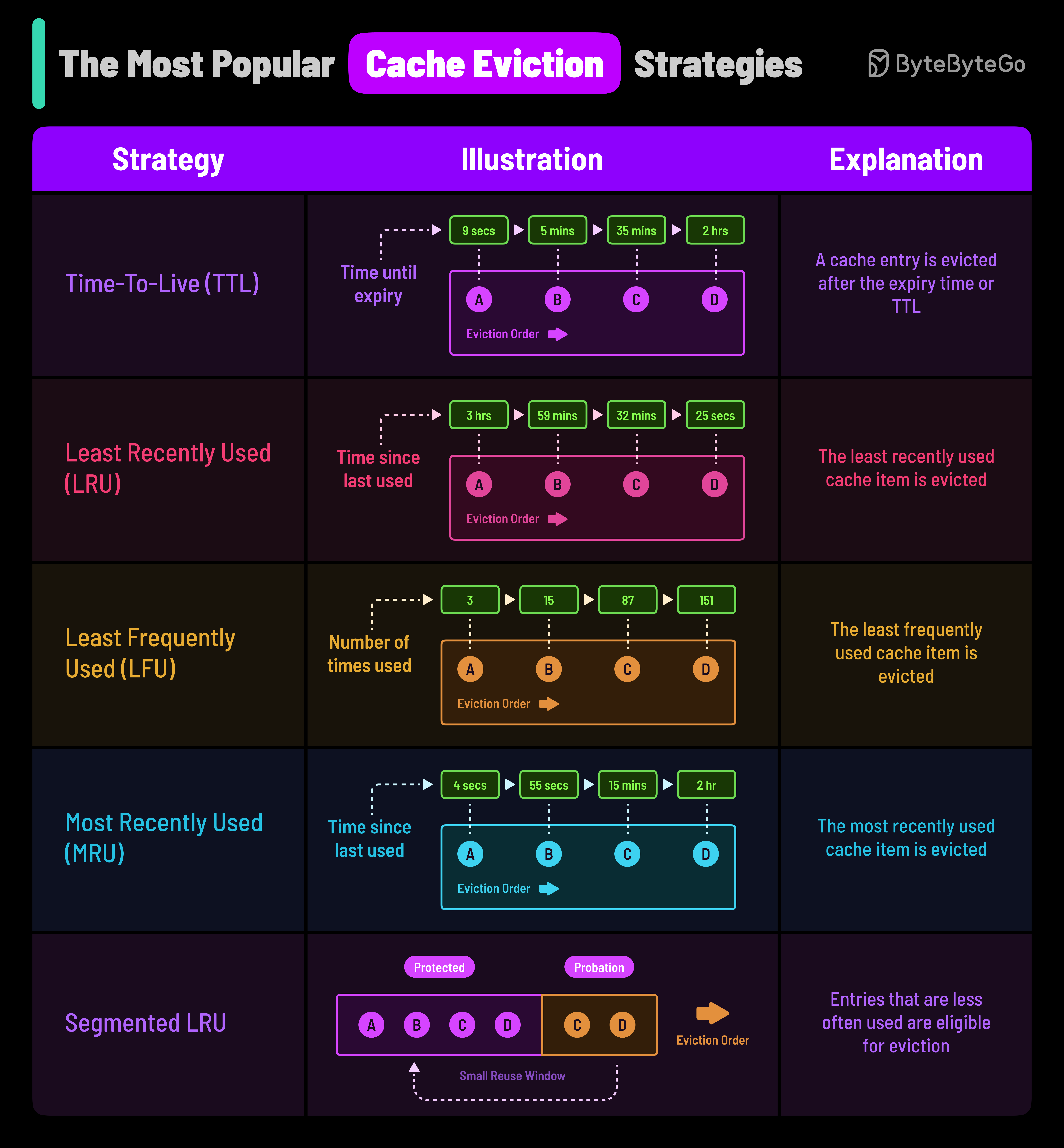

Cache eviction is the process of removing data from a cache when it becomes full, to make space for new or more relevant data. Since cache memory is limited, the system must decide which entries to delete while keeping frequently accessed data. FIFO is one of the simplest caching strategies: the cache behaves like a queue and evicts the oldest items first, regardless of their access patterns or frequency. TTL (time to live), while not strictly an eviction algorithm, is a strategy in which each cache item is given a specific lifespan, after which it expires.
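To make the difference between FIFO and TTL concrete, here is a minimal sketch that combines both. The class name `FifoTtlCache`, its parameters, and the injectable `clock` argument are illustrative assumptions, not part of any particular library.

```python
import time
from collections import OrderedDict


class FifoTtlCache:
    """Hypothetical cache: FIFO eviction plus a per-entry time to live.

    Assumes capacity >= 1.
    """

    def __init__(self, capacity, ttl_seconds, clock=time.monotonic):
        self.capacity = capacity
        self.ttl = ttl_seconds
        self.clock = clock          # injectable so expiry can be tested
        self._data = OrderedDict()  # key -> (value, expires_at), insertion order

    def get(self, key):
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            # Expired: TTL removes the entry regardless of queue position.
            del self._data[key]
            return None
        # A hit does not reorder the queue; reordering would make this LRU.
        return value

    def put(self, key, value):
        if key not in self._data and len(self._data) >= self.capacity:
            # Cache is full: evict the entry that was inserted first.
            self._data.popitem(last=False)
        # Overwriting an existing key refreshes its TTL but keeps its
        # original position in the queue.
        self._data[key] = (value, self.clock() + self.ttl)
```

Note that reading a key does not protect it from eviction here: with a capacity of 2, inserting `a`, `b`, reading `a`, and then inserting `c` still evicts `a`, because FIFO looks only at insertion order.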

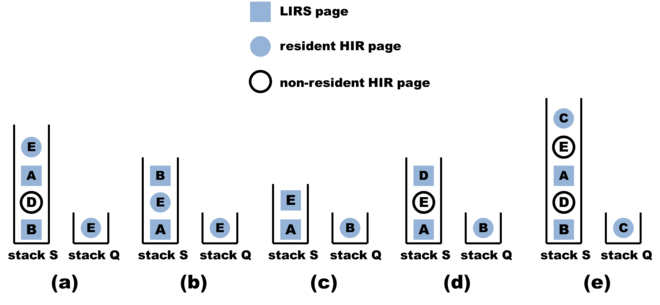

One paper presents a practical approach to cache eviction algorithm design, called Mobius, that optimizes the concurrent throughput of caches and reduces cache operation latency by using lock-free data structures, while maintaining comparable hit ratios. It turns out that lazy promotion and fast demotion are two important properties of good cache eviction algorithms. Lazy promotion means promoting items to be retained only when space needs to be freed in the cache; fast demotion means quickly removing items that are unlikely to be reused, such as newly inserted items that are never requested again. SIEVE can facilitate the design of advanced eviction algorithms. Cache eviction policies are algorithms or strategies used to determine which data to remove from the cache when it is full.
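The following is a rough sketch of SIEVE as it is commonly described: a FIFO-ordered list, one "visited" flag per entry, and a hand that sweeps from the oldest entry toward the newest. A hit only sets the flag, which illustrates lazy promotion. The class and attribute names are assumptions made for this example, and the code is not taken from any reference implementation.

```python
class _Node:
    __slots__ = ("key", "value", "visited", "prev", "next")

    def __init__(self, key, value):
        self.key, self.value = key, value
        self.visited = False
        self.prev = None  # neighbor toward the head (newer entries)
        self.next = None  # neighbor toward the tail (older entries)


class SieveCache:
    """Illustrative SIEVE-style cache; assumes capacity >= 1."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.table = {}   # key -> node
        self.head = None  # newest entry
        self.tail = None  # oldest entry
        self.hand = None  # where the next eviction scan resumes

    def get(self, key):
        node = self.table.get(key)
        if node is None:
            return None
        node.visited = True  # lazy promotion: mark only, no list movement
        return node.value

    def put(self, key, value):
        node = self.table.get(key)
        if node is not None:
            node.value = value
            node.visited = True
            return
        if len(self.table) >= self.capacity:
            self._evict()
        node = _Node(key, value)
        node.next = self.head  # new entries always enter at the head
        if self.head is not None:
            self.head.prev = node
        self.head = node
        if self.tail is None:
            self.tail = node
        self.table[key] = node

    def _evict(self):
        node = self.hand or self.tail
        while node.visited:
            node.visited = False            # give the entry one more round
            node = node.prev or self.tail   # wrap to the tail past the head
        self.hand = node.prev  # None means "restart from the tail"
        if node.prev is not None:
            node.prev.next = node.next
        else:
            self.head = node.next
        if node.next is not None:
            node.next.prev = node.prev
        else:
            self.tail = node.prev
        del self.table[node.key]
```

Unlike LRU, a hit never moves an entry within the list, so reads need no list updates. Entries that are inserted and never read again keep their flag unset and are removed as soon as the hand reaches them.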

Cache eviction algorithms play a critical role in the performance of a cache system. In one hybrid approach that combines LRU and LFU, each cached item has a counter. The classic LRU cache has limitations, and modern alternatives such as LRU-K, 2Q, ARC, and SIEVE aim to address them when building high-performance caching systems. The underlying question is: given a limit on the number of items to cache, which item should be evicted to get the best result? In 1966, Laszlo Belady showed that the most efficient caching algorithm would always discard the information that will not be needed for the longest time in the future.
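Belady's rule needs to know future requests, so it cannot run in a live cache, but it can be simulated on a recorded trace to get an upper bound on hits. The sketch below does that and compares the result with plain LRU. The function names `belady_hits` and `lru_hits` and the sample trace are assumptions chosen for illustration.

```python
from collections import OrderedDict


def belady_hits(trace, capacity):
    """Count hits when always evicting the key needed farthest in the future."""
    # next_use[i] = index of the next request for trace[i], or infinity.
    next_use = [float("inf")] * len(trace)
    last_seen = {}
    for i in range(len(trace) - 1, -1, -1):
        next_use[i] = last_seen.get(trace[i], float("inf"))
        last_seen[trace[i]] = i

    cache = {}  # key -> index of its next request
    hits = 0
    for i, key in enumerate(trace):
        if key in cache:
            hits += 1
        elif len(cache) >= capacity:
            victim = max(cache, key=cache.get)  # farthest next use
            del cache[victim]
        cache[key] = next_use[i]
    return hits


def lru_hits(trace, capacity):
    """Count hits for a least-recently-used cache on the same trace."""
    cache = OrderedDict()
    hits = 0
    for key in trace:
        if key in cache:
            hits += 1
            cache.move_to_end(key)         # mark as most recently used
        else:
            if len(cache) >= capacity:
                cache.popitem(last=False)  # evict least recently used
            cache[key] = True
    return hits


trace = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]
print(belady_hits(trace, 3), lru_hits(trace, 3))
```

On this 12-request trace with room for 3 items, the optimal policy gets 5 hits and LRU gets 2. The gap shows how much headroom a practical policy leaves on a given workload.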
