Deploy Local LLMs Like Containers with Ollama and Docker (FOSS Engineer)

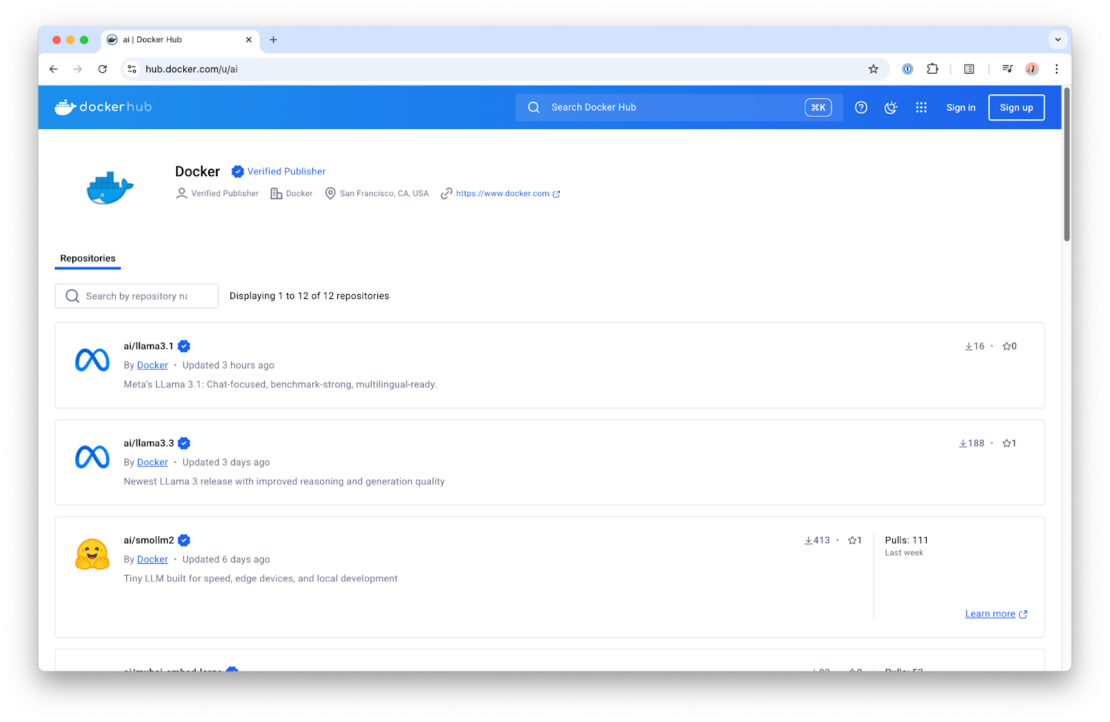

Use Ollama with Docker to deploy LLMs locally for free. Running large language models (LLMs) locally is no longer just for research labs. With tools like Ollama, Docker, and Open WebUI, you can spin up your own private ChatGPT-like experience.

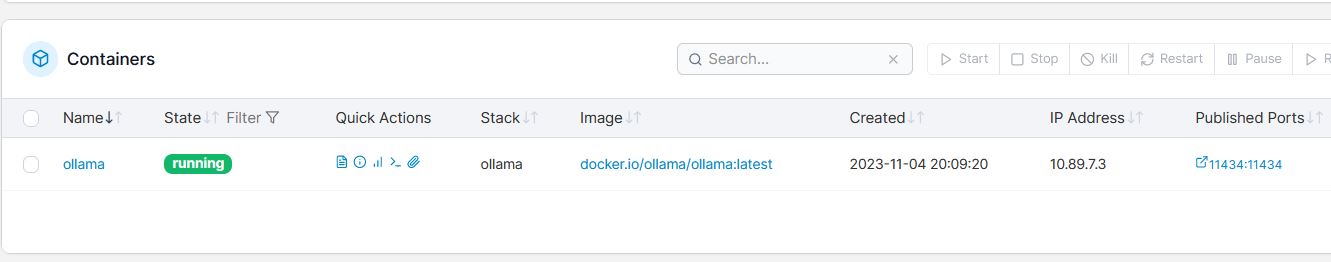

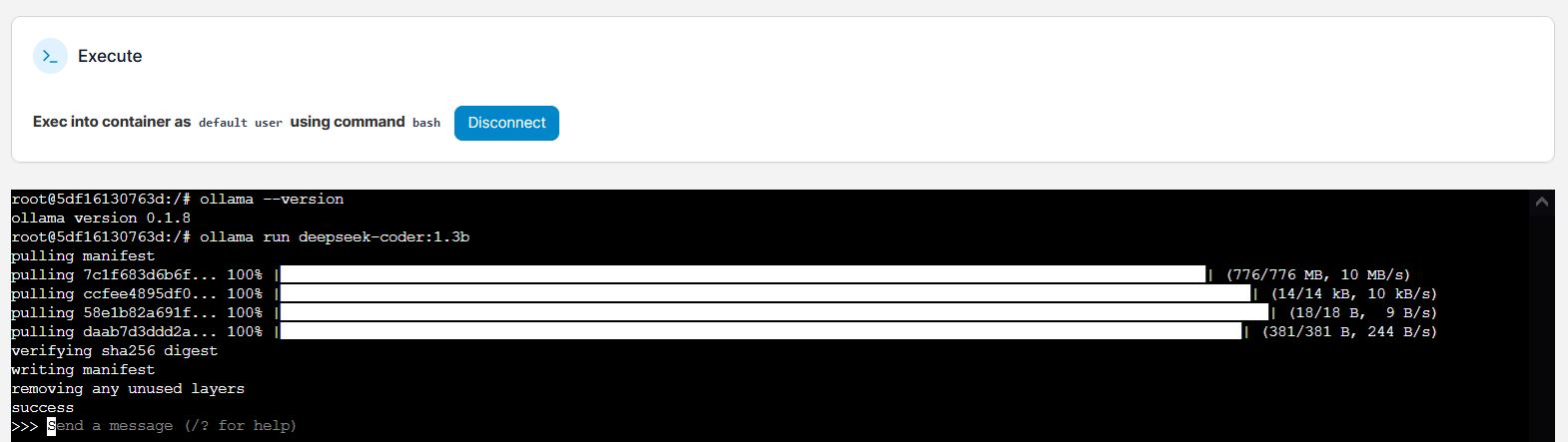

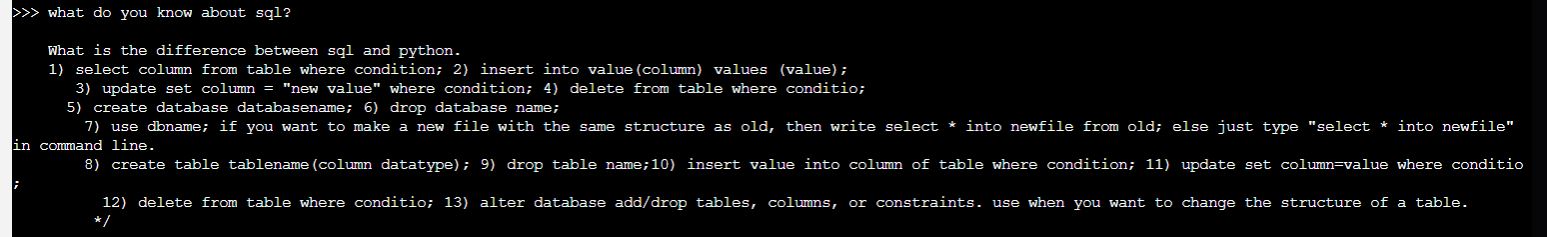

Learn how to set up Ollama in Docker containers for scalable AI model deployment, with configuration examples and best practices. This tutorial aims to demystify the process and illustrate a straightforward path to deploying these models on local infrastructure with Ollama. Before we jump into the setup, it's essential to grasp the inherent advantages of running LLMs locally.

In this guide, I'll show you how to set up Ollama in Docker, pull your first model, and start running local LLMs that you can access from any application. If you are completely new to Docker, we recommend you enroll in our Introduction to Docker course to grasp the fundamentals.

This repository provides a streamlined setup to run Ollama's API locally with a user-friendly web UI. It uses Docker to manage both the Ollama API service and the web interface, allowing for easy deployment and interaction with models like llama3.2:1b.
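As a rough illustration of what "access from any application" can look like, the Python sketch below builds and sends a request to Ollama's native `/api/generate` endpoint using only the standard library. It assumes the container publishes Ollama's default port 11434 on localhost and that `llama3.2:1b` has already been pulled. The function names and the example prompt are illustrative and do not come from the original guide.

```python
import json
import urllib.request

# Assumption: the Ollama container publishes its default port 11434 on localhost.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(prompt, model="llama3.2:1b", base_url=OLLAMA_URL):
    """Build (but do not send) a POST request for Ollama's /api/generate endpoint."""
    # "stream": False asks for one JSON object instead of a stream of chunks.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="llama3.2:1b", base_url=OLLAMA_URL, timeout=120):
    """Send the prompt to a running Ollama server and return the generated text."""
    request = build_generate_request(prompt, model, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # The non-streaming reply carries the generated text in the "response" field.
    return body["response"]


# Example call (requires a running Ollama server with the model pulled):
#   print(generate("Explain what a container is in one sentence."))
```

Keeping request construction separate from the network call makes the payload easy to inspect or unit-test without a running server.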

By configuring Ollama to listen on all interfaces and running Open WebUI in a container, we created a flexible and accessible environment for interacting with large language models locally.

Running Ollama in Docker lets you deploy local LLMs on any machine or server without installing anything directly on the host beyond Docker itself. It's the cleanest approach for server deployments, CI pipelines, or anyone who wants a portable, reproducible AI environment.

This is a guide to running large language models on your own hardware using Ollama and Docker for better privacy and lower costs. This Docker configuration creates a self-contained LLM server that exposes an OpenAI-compatible API endpoint, allowing existing applications to switch from cloud-based models to local inference without code changes.
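To make the OpenAI-compatible endpoint concrete, here is a minimal Python sketch of a chat request against Ollama's `/v1/chat/completions` route. It assumes the server is reachable on the default port 11434 and serves `llama3.2:1b`. The helper names are hypothetical, and the API key is a placeholder, since a local Ollama server does not check it.

```python
import json
import urllib.request

# Assumption: Ollama's OpenAI-compatible routes live under /v1 on the default port.
LOCAL_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(messages, model="llama3.2:1b",
                       base_url=LOCAL_BASE_URL, api_key="ollama"):
    """Build an OpenAI-style chat completion request aimed at a local server."""
    payload = {"model": model, "messages": messages}
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Placeholder token: OpenAI-style clients expect one to be set.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response_body):
    """Return the assistant text from an OpenAI-style chat completion response."""
    return response_body["choices"][0]["message"]["content"]


def chat(messages, **kwargs):
    """Send the messages to the server and return the assistant's reply text."""
    request = build_chat_request(messages, **kwargs)
    with urllib.request.urlopen(request, timeout=120) as response:
        return extract_reply(json.loads(response.read().decode("utf-8")))


# Example call (requires a running Ollama server with the model pulled):
#   print(chat([{"role": "user", "content": "Say hello in five words."}]))
```

Because the request and response shapes follow the OpenAI chat format, an application that already speaks that format would mainly need its base URL and model name pointed at the local server.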


