Deploy Google's Gemma With TensorRT

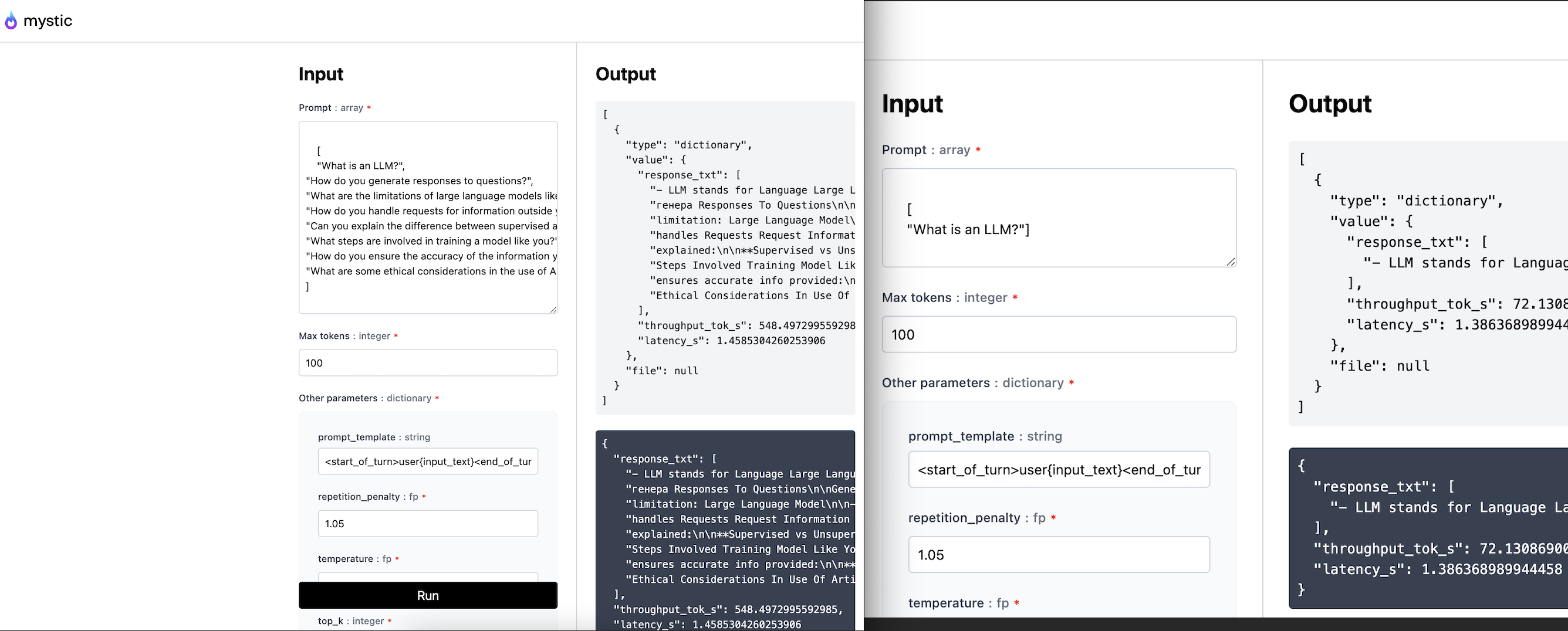

In this tutorial, I will cover how to convert a PyTorch model (Google's Gemma 7B) into TensorRT-LLM format and deploy it on our serverless cloud or on your own private cloud with Mystic. A separate tutorial demonstrates how to deploy and serve a Gemma large language model (LLM) using GPUs on Google Kubernetes Engine (GKE) with the NVIDIA Triton and TensorRT-LLM serving stack.
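Once a Gemma engine is served behind Triton, clients typically talk to it over HTTP. The following is a minimal sketch of such a client, not the tutorials' own code. It assumes the Triton HTTP endpoint is reachable at `localhost:8000` (for example through a port-forward), that the TensorRT-LLM backend's pipeline is registered under the model name `ensemble`, and that it accepts `text_input` / `max_tokens` fields and returns `text_output`. The helper names `build_generate_request` and `generate` are hypothetical.

```python
import json
import urllib.request


def build_generate_request(
    prompt: str,
    max_tokens: int = 128,
    host: str = "localhost:8000",  # assumption: Triton HTTP port, e.g. via port-forward
    model: str = "ensemble",       # assumption: model name used by the serving stack
) -> urllib.request.Request:
    """Build a POST request for Triton's HTTP generate endpoint."""
    url = f"http://{host}/v2/models/{model}/generate"
    payload = {
        "text_input": prompt,
        "max_tokens": max_tokens,
        "bad_words": "",
        "stop_words": "",
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, **kwargs) -> str:
    """Send the prompt to the server and return the generated text."""
    request = build_generate_request(prompt, **kwargs)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.loads(response.read())
    # assumption: the pipeline returns its completion under "text_output"
    return body["text_output"]
```

With a server running, `generate("Why is the sky blue?", max_tokens=64)` would return the completion as a string. Building the request separately from sending it keeps the payload easy to inspect without a live endpoint.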

Google Kubernetes Engine (GKE) provides a wide range of deployment options for running Gemma models with high performance and low latency using preferred development frameworks. Deployment guides are available for Hugging Face, vLLM, TensorRT-LLM on GPUs, and TPU execution with JetStream, along with application and tuning guides.

TensorRT-LLM provides users with an easy-to-use Python API to define large language models (LLMs) and supports state-of-the-art optimizations to perform inference efficiently on NVIDIA GPUs.

Gemma hosting is the deployment and serving of Google's Gemma language models (such as Gemma 2B and Gemma 7B) on dedicated hardware or cloud infrastructure for applications such as chatbots, APIs, or research environments. Hosting solutions exist for deploying Google DeepMind's Gemma 3 4B, 12B, and 27B models using Ollama, vLLM, TGI, TensorRT-LLM, and GGML.
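To give a feel for TensorRT-LLM's Python API, here is a hedged sketch using its high-level `LLM` and `SamplingParams` classes. It is an illustration under assumptions rather than the method used by any of the guides above: the checkpoint id `google/gemma-7b-it` is assumed, the weights are gated and must be accessible, and whether a given TensorRT-LLM release can load Gemma through this entry point should be checked against its support matrix. The helper `generate_texts` and the `main` function are hypothetical names.

```python
from typing import Any, List, Optional


def generate_texts(llm: Any, prompts: List[str], sampling_params: Optional[Any] = None) -> List[str]:
    """Run a batch of prompts and return the first completion for each one."""
    results = llm.generate(prompts, sampling_params)
    return [result.outputs[0].text for result in results]


def main() -> None:
    # Imported lazily: tensorrt_llm needs an NVIDIA GPU environment to install and run.
    from tensorrt_llm import LLM, SamplingParams

    # assumption: this checkpoint id is available to you and supported by your release
    llm = LLM(model="google/gemma-7b-it")
    params = SamplingParams(temperature=0.8, top_p=0.95, max_tokens=64)
    for text in generate_texts(llm, ["What is TensorRT-LLM?"], params):
        print(text)
```

Calling `main()` on a machine with TensorRT-LLM installed builds or loads an engine for the checkpoint and prints one completion. Keeping `generate_texts` independent of the library makes it easy to exercise with a stand-in object.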

NVIDIA is collaborating with Google to deliver Gemma, a family of open models built using the same research and technology as the Gemini models, with an optimized release using TensorRT-LLM. NVIDIA TensorRT-LLM is an open-source tool that can considerably speed up execution of your models, and in this talk we will demonstrate its application to Gemma.

The Gemma Cookbook is a comprehensive collection of guides, examples, and tutorials for working with Google's Gemma family of open models. The repository provides practical, executable code demonstrating how to deploy, fine-tune, and integrate Gemma models across various platforms and use cases.

