Defined AI Answer Comparison Generative AI Evaluation

This guide covers the most effective methods for assessing generative models, including both quantitative metrics and qualitative techniques. AI-assisted evaluation uses AI models to automate the initial assessment of system outputs, process results, and suggest refinements, thereby improving and adapting the overall evaluation process.

After you create an evaluation dataset, the next step is to define the metrics used to measure model performance. Generative AI models can be used to create applications for a wide range of tasks. One review paper surveys evaluation methods for generative AI, beginning with its evolution and major applications, including advanced models like GPT, DALL·E, and AlphaCode. Learn how to evaluate generative AI output effectively using the right methods, quality metrics, and best practices for accurate performance analysis. Thorough testing and evaluation are required before implementing an AI solution. However, evaluating the outputs of generative AI (GenAI) presents unique challenges not encountered in traditional software development.
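As an illustration of defining metrics once an evaluation dataset exists, here is a minimal sketch of two common quantitative metrics for question answering: exact match and token-level F1 against a reference answer. The function names (`normalize`, `exact_match`, `token_f1`, `evaluate`) and the dataset shape (a list of prediction/reference pairs) are assumptions made for this example; they are not taken from any of the tools mentioned in this article.

```python
import re
from collections import Counter


def normalize(text: str) -> list[str]:
    """Lowercase, replace punctuation with spaces, and split into tokens."""
    return re.sub(r"[^\w\s]", " ", text.lower()).split()


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the normalized token sequences are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall against the reference."""
    pred, ref = normalize(prediction), normalize(reference)
    if not pred or not ref:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred == ref)
    # Multiset intersection counts each shared token at most as often
    # as it appears in both the prediction and the reference.
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def evaluate(dataset: list[tuple[str, str]]) -> dict[str, float]:
    """Average both metrics over (prediction, reference) pairs."""
    if not dataset:
        raise ValueError("evaluation dataset is empty")
    n = len(dataset)
    return {
        "exact_match": sum(exact_match(p, r) for p, r in dataset) / n,
        "token_f1": sum(token_f1(p, r) for p, r in dataset) / n,
    }
```

Metrics like these only capture surface overlap with a reference answer, so in practice they are usually paired with qualitative review or a model-based judge for open-ended outputs.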

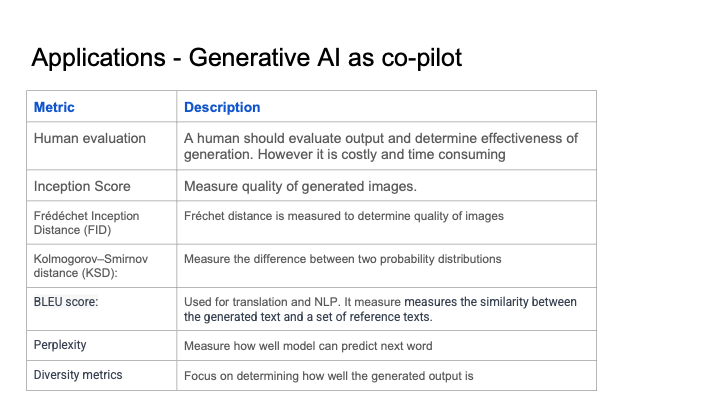

In this post, we discuss best practices for applying LLMs to generate ground truth for evaluating question-answering assistants with FMEval on an enterprise scale. The Gen AI evaluation service in Vertex AI lets you evaluate any generative model or application and benchmark the evaluation results against your own judgment, using your own evaluation criteria. LLM Comparator helps you analyze side-by-side evaluation results. It visually summarizes model performance from multiple angles, while letting you interactively inspect individual model outputs for a deeper understanding. NIST GenAI is a new evaluation program administered by the NIST Information Technology Laboratory to assess generative AI technologies developed by the research community from around the world.
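To make the idea of side-by-side evaluation concrete, the sketch below tallies pairwise judgments between two models into win and tie rates. It is a generic illustration, not the API of LLM Comparator, Vertex AI, or FMEval; the function name `summarize_side_by_side` and the labels `"A"`, `"B"`, and `"tie"` are assumptions chosen for this example.

```python
from collections import Counter

VALID_LABELS = {"A", "B", "tie"}


def summarize_side_by_side(judgments: list[str]) -> dict[str, float]:
    """Summarize per-example preferences between model A and model B.

    Each judgment is "A", "B", or "tie" (hypothetical labels), produced by
    a human rater or a judge model comparing the two outputs for one prompt.
    """
    counts = Counter(judgments)
    unknown = set(counts) - VALID_LABELS
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no judgments to summarize")
    return {
        "win_rate_a": counts["A"] / total,
        "win_rate_b": counts["B"] / total,
        "tie_rate": counts["tie"] / total,
        "n": total,
    }


if __name__ == "__main__":
    print(summarize_side_by_side(["A", "A", "B", "tie"]))
```

An aggregate like this is only a starting point; inspecting the individual examples behind each win or loss is what shows why one model is preferred.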

