DeepSpeed: High-Level Parallelism and Memory Optimization Library

This document provides a high-level overview of the DeepSpeed library, its architecture, major subsystems, and key entry points. It is intended to orient new developers and users to the codebase structure and design principles. The DeepSpeed library (this repository) implements and packages the innovations and technologies in the DeepSpeed Training, Inference, and Compression pillars into a single, easy-to-use, open-source repository.

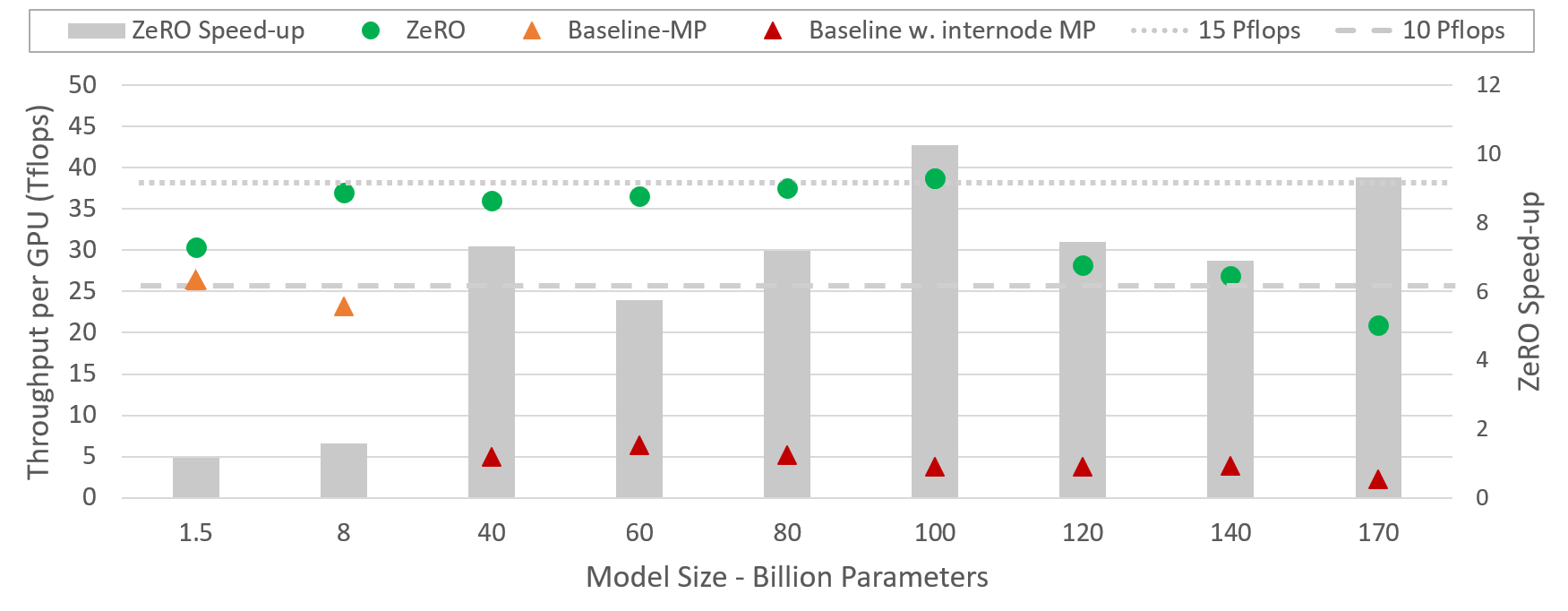

DeepSpeed is an open-source deep learning optimization library from Microsoft with big ambitions: to make large-scale model training faster, more efficient, and more accessible. It offers a confluence of system innovations that has made large-scale deep learning (DL) training effective and efficient, greatly improved ease of use, and redefined the DL training landscape in terms of the scale that is possible. Model parallelism in DeepSpeed offers a powerful way to scale training to massive models without exceeding your GPU memory budget. The initial setup may seem intimidating, but the payoff in speed and memory efficiency is well worth it. DeepSpeed also provides memory-efficient data parallelism, which makes it possible to train large models without model parallelism at all. For example, DeepSpeed can train models with up to 13 billion parameters on a single GPU.
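In practice, these memory optimizations are switched on through a DeepSpeed configuration. The sketch below builds one as a Python dict. The keys it uses are standard DeepSpeed config fields: `train_batch_size`, `train_micro_batch_size_per_gpu`, `gradient_accumulation_steps`, `fp16`, and `zero_optimization` with its `stage` and `offload_optimizer` sub-keys. The helper `make_ds_config` and its defaults are hypothetical and exist only for illustration. The helper also encodes the batch-size rule DeepSpeed validates at startup: the global batch size must equal micro-batch size × gradient-accumulation steps × number of GPUs.

```python
from typing import Any, Dict


def make_ds_config(
    micro_batch_per_gpu: int,
    grad_accum_steps: int,
    world_size: int,
    zero_stage: int = 2,
    offload_optimizer: bool = False,
) -> Dict[str, Any]:
    """Build a DeepSpeed-style config dict (hypothetical helper, not part of DeepSpeed)."""
    if zero_stage not in (0, 1, 2, 3):
        raise ValueError(f"ZeRO stage must be 0-3, got {zero_stage}")
    if offload_optimizer and zero_stage == 0:
        # Optimizer offload only applies when ZeRO partitioning is enabled.
        raise ValueError("offload_optimizer requires ZeRO stage 1, 2, or 3")

    zero: Dict[str, Any] = {"stage": zero_stage}
    if offload_optimizer:
        # Keep optimizer state in CPU memory instead of GPU memory.
        zero["offload_optimizer"] = {"device": "cpu"}

    return {
        # Global batch = micro-batch per GPU x accumulation steps x number of GPUs.
        "train_batch_size": micro_batch_per_gpu * grad_accum_steps * world_size,
        "train_micro_batch_size_per_gpu": micro_batch_per_gpu,
        "gradient_accumulation_steps": grad_accum_steps,
        "fp16": {"enabled": True},  # mixed-precision training
        "zero_optimization": zero,
    }


if __name__ == "__main__":
    import json

    # Example: one GPU, ZeRO stage 2 with optimizer state offloaded to CPU.
    print(json.dumps(make_ds_config(4, 8, 1, zero_stage=2, offload_optimizer=True), indent=2))
```

The returned dict can be passed as the `config` argument to `deepspeed.initialize`. Single-GPU training of very large models typically relies on offloading settings like `offload_optimizer` (the idea behind DeepSpeed's ZeRO-Offload). The right stage and offload choices depend on your hardware.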

What enables DeepSpeed to train a trillion-parameter (or larger) model is the Zero Redundancy Optimizer (ZeRO), which builds upon model parallelism, pipeline parallelism, and data parallelism, alongside memory and bandwidth optimizations. Plain data parallelism replicates the full training state on every GPU. ZeRO instead partitions the optimizer states, gradients, and (at its highest stage) the parameters themselves across the data-parallel processes. More broadly, DeepSpeed is designed to simplify and enhance distributed training and inference of large-scale models. Combined with PyTorch, it offers a wide range of tools and techniques for training large-scale deep learning models more efficiently.
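The sketch below makes the savings concrete. It reproduces the per-GPU model-state arithmetic that the ZeRO paper uses for mixed-precision Adam. Let Ψ be the number of parameters and N_d the number of data-parallel GPUs. Plain data parallelism then needs 16Ψ bytes per GPU: 2Ψ for fp16 weights, 2Ψ for fp16 gradients, and 12Ψ for optimizer state. ZeRO stage 1 partitions the optimizer state (4Ψ + 12Ψ/N_d). Stage 2 also partitions gradients (2Ψ + 14Ψ/N_d). Stage 3 also partitions the parameters (16Ψ/N_d). The function name `zero_model_state_bytes` is hypothetical. The estimate covers model states only, not activations or communication buffers.

```python
def zero_model_state_bytes(num_params: float, dp_degree: int, stage: int, k: int = 12) -> float:
    """Per-GPU bytes for model states under mixed-precision Adam (hypothetical helper).

    Follows the ZeRO paper's accounting: 2 bytes/param for fp16 weights,
    2 bytes/param for fp16 gradients, and k bytes/param of optimizer state
    (k = 12 for fp32 master weights + Adam momentum + variance).
    Activations and temporary buffers are not included.
    """
    if dp_degree < 1:
        raise ValueError("dp_degree must be >= 1")
    psi, n = num_params, dp_degree
    if stage == 0:  # plain data parallelism: everything replicated
        return (2 + 2 + k) * psi
    if stage == 1:  # partition optimizer states
        return 2 * psi + 2 * psi + k * psi / n
    if stage == 2:  # also partition gradients
        return 2 * psi + (2 + k) * psi / n
    if stage == 3:  # also partition parameters
        return (2 + 2 + k) * psi / n
    raise ValueError(f"unknown ZeRO stage {stage}")


if __name__ == "__main__":
    # 7.5B-parameter model on 64 data-parallel GPUs.
    for s in range(4):
        gb = zero_model_state_bytes(7.5e9, 64, s) / 1e9
        print(f"stage {s}: {gb:.1f} GB per GPU")
```

For a 7.5B-parameter model on 64 GPUs, this prints 120.0, 31.4, 16.6, and 1.9 GB per GPU for stages 0 through 3. That is the same worked example the ZeRO paper uses to illustrate the savings. Note that with a single GPU (N_d = 1), every stage costs the same 16Ψ bytes. Partitioning only helps when there are peers to share the state with, which is why single-GPU setups lean on CPU offloading instead.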
