DeepSeek V4 Is Disappointing: Here's Why

DeepSeek V4 Benchmark Leaks: Here's What the Numbers Actually Show

Hey guys, welcome back to another exciting new video! I'm sharing my initial disappointment with the new DeepSeek V4 model, despite its recent official release and open-sourcing.

DeepSeek says V4 has been optimized for use with popular agent tools including Claude Code and OpenClaw (CNBC), which signals the team is building for production agentic deployment, not just benchmark positioning. Why this is a bigger deal than it looks: the capability story is interesting.

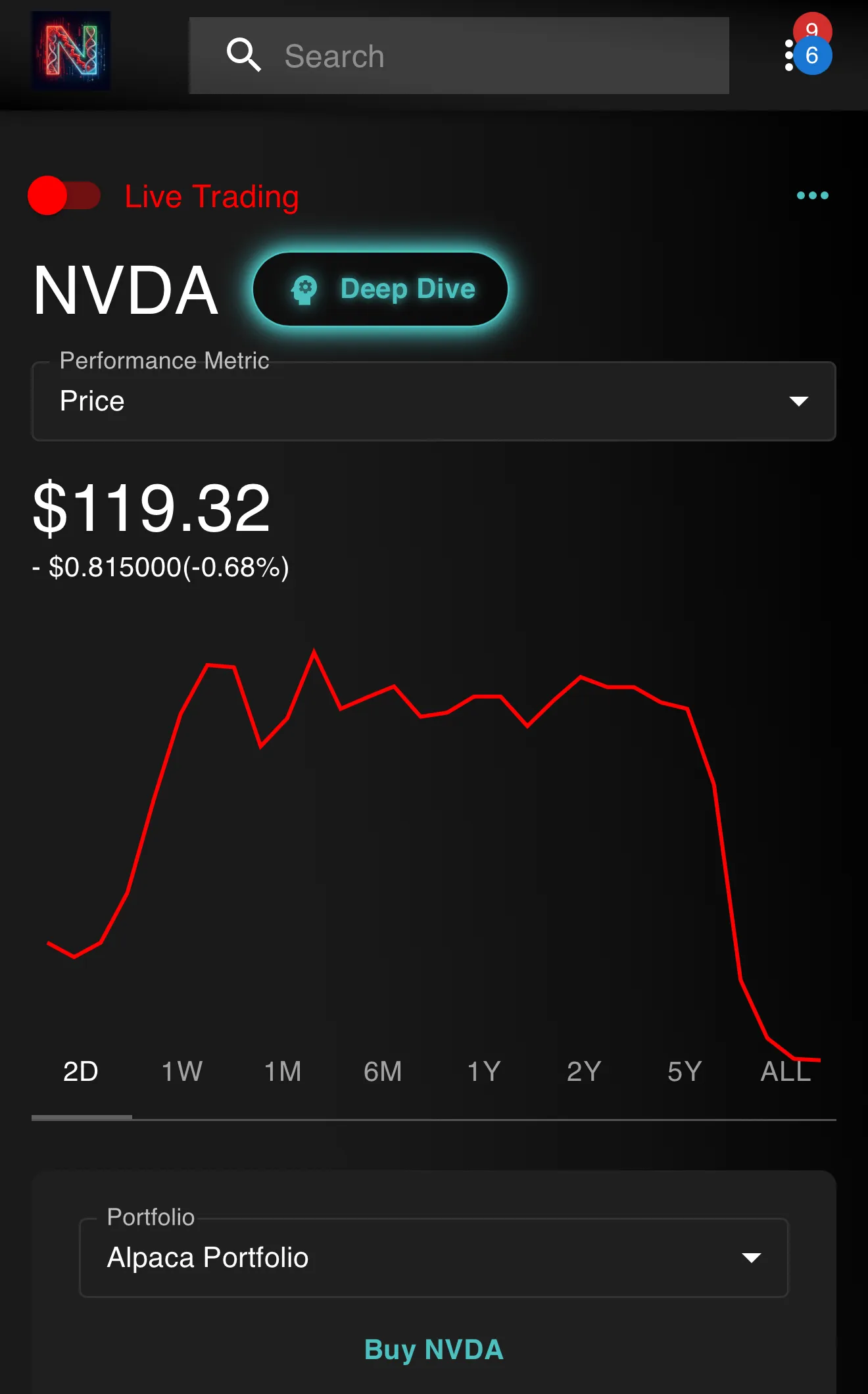

Explore the strengths and weaknesses of DeepSeek V4 through a closer look at its pricing structure, task-specific performance, and how it compares to competitors like Kimi K2.6 and Opus 4.6.

After the open-source preview version of DeepSeek V4 was launched, the first wave of evaluation results from third-party lists has been released. Multiple evaluations show that the…

DeepSeek V4: a rundown of compute and why this is the overlooked aspect. I won't go into the technical details of DeepSeek's V4 model, but rather focus entirely on the compute infrastructure. China…

DeepSeek V4 launched on April 24, 2026, but banned-chip allegations, data theft accusations, and serious questions about China's hardware future mean the risks are far from over.

DeepSeek V4’s real breakthrough is cost-efficient long-context intelligence: it makes million-token reasoning cheaper and pushes open models closer to frontier systems.

When China’s DeepSeek released a competitive new artificial intelligence model called R1 last January, purportedly built for less than many rivals, some feared the achievement posed a threat to… DeepSeek has kept a relatively low profile since then, but earlier this month it effectively teased V4’s release when it added “expert” and “flash” modes to the online version of its…

Why it matters: million-token context at practical cost. The quadratic attention wall is the real bottleneck on test-time scaling; dropping 1M-context inference to ~10% of pri…
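To make the "quadratic attention wall" concrete, here is a back-of-the-envelope sketch of how the cost of plain dense attention grows with context length. It is only an illustration: the function name and the head size of 128 are assumptions for this example, not figures from DeepSeek V4, and it does not model whatever attention mechanism V4 actually uses.

```python
def attention_matmul_flops(seq_len: int, head_dim: int) -> int:
    """Approximate FLOPs for one dense attention head over a full sequence.

    Counts only the two matrix products: Q @ K^T (a seq_len x seq_len score
    matrix) and scores @ V. Each costs about 2 * seq_len^2 * head_dim FLOPs.
    """
    return 4 * seq_len * seq_len * head_dim


HEAD_DIM = 128  # hypothetical head size, NOT taken from DeepSeek V4

for seq_len in (8_000, 128_000, 1_000_000):
    flops = attention_matmul_flops(seq_len, HEAD_DIM)
    print(f"{seq_len:>9,} tokens: {flops:.3e} FLOPs per head per layer")

# Going from 128K to 1M tokens multiplies the length by ~7.8,
# but the dense attention cost by ~7.8 squared.
growth = attention_matmul_flops(1_000_000, HEAD_DIM) / attention_matmul_flops(128_000, HEAD_DIM)
print(f"128K -> 1M tokens: cost grows about {growth:.0f}x")
```

Under these assumptions, stretching the context from 128K to 1M tokens raises dense attention cost roughly 61 times, which is why any claimed reduction in million-token inference cost matters more than the raw context-length number.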

