DeepSeek R1 Distilled and Quantized Models Explained

This page documents the distilled model variants in the DeepSeek R1 family. These models are smaller, more efficient versions that preserve much of the reasoning capability of the full-sized DeepSeek R1 model. It also looks at how distillation and quantization make DeepSeek R1 more accessible, and at the trade-offs between model size, performance, and accuracy.
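To make the size trade-off concrete, here is a rough back-of-the-envelope sketch in Python. It assumes the nominal parameter counts of the six distilled checkpoints listed later on this page and counts weight storage only; KV cache, activations, and the per-block metadata that real quantization formats add are ignored, so actual memory use will be higher. The function names are illustrative, not part of any library.

```python
# Nominal parameter counts (in billions) of the six distilled checkpoints.
DISTILLED_SIZES_B = [1.5, 7, 8, 14, 32, 70]


def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Assumption: every parameter is stored at the same bit width and
    nothing else (scales, zero points, KV cache) is counted.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def footprint_table(bit_widths=(16, 8, 4)):
    """Map each model size to its estimated weight memory per bit width."""
    return {
        size: {bits: round(weight_memory_gb(size, bits), 2) for bits in bit_widths}
        for size in DISTILLED_SIZES_B
    }


if __name__ == "__main__":
    for size, row in footprint_table().items():
        cells = ", ".join(f"{bits}-bit: {gb} GB" for bits, gb in row.items())
        print(f"{size}B -> {cells}")
```

Halving the bit width halves the weight footprint, which is why a quantized distilled model can fit on hardware that the same model at 16-bit precision cannot.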

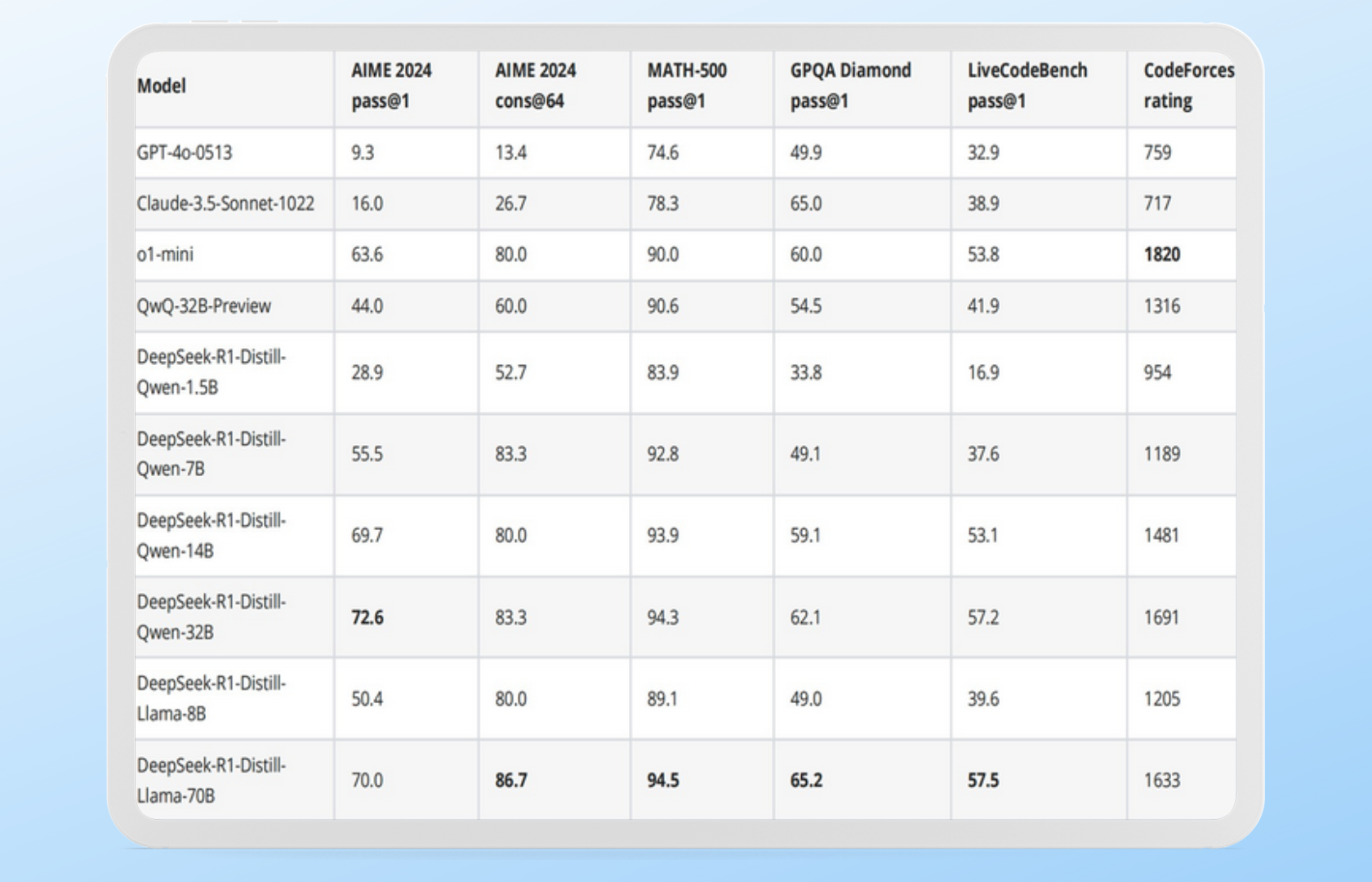

The distilled models are created by fine-tuning smaller base models (e.g., the Qwen and Llama series) on 800,000 samples of reasoning data generated by DeepSeek R1. To support the research community, DeepSeek has open-sourced DeepSeek-R1-Zero, DeepSeek-R1, and six dense models distilled from DeepSeek-R1 based on Llama and Qwen. According to DeepSeek, DeepSeek-R1-Distill-Qwen-32B outperforms OpenAI o1-mini across various benchmarks, achieving new state-of-the-art results for dense models.

DeepSeek R1 distilled models are a family of compact LLMs derived via knowledge distillation from the DeepSeek R1 line of high-parameter, reasoning-optimized MoE LLMs. Understanding how they differ from DeepSeek V3, R1, V3.1, and V3.2 helps in choosing the right model and deploying it securely, for example with BentoML.
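The data side of this kind of distillation can be sketched as follows: each teacher output is turned into a prompt/completion pair that the smaller model is then fine-tuned on. This is a minimal illustration, not DeepSeek's actual pipeline. The `TeacherSample` class, the record layout, and the use of `<think>` tags to wrap the reasoning trace are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class TeacherSample:
    """One teacher-generated example (hypothetical structure)."""
    prompt: str
    reasoning: str  # the teacher's step-by-step reasoning trace
    answer: str     # the teacher's final answer


def to_sft_record(sample: TeacherSample,
                  think_open: str = "<think>",
                  think_close: str = "</think>") -> dict:
    """Format one teacher sample as a supervised fine-tuning record.

    Assumption: the student is trained to emit the reasoning trace
    inside think tags, followed by the final answer.
    """
    completion = (
        f"{think_open}\n{sample.reasoning.strip()}\n{think_close}\n"
        f"{sample.answer.strip()}"
    )
    return {"prompt": sample.prompt.strip(), "completion": completion}


def build_dataset(samples):
    """Keep only samples that have both a reasoning trace and an answer."""
    return [
        to_sft_record(s)
        for s in samples
        if s.reasoning.strip() and s.answer.strip()
    ]


if __name__ == "__main__":
    demo = [
        TeacherSample("What is 12 * 3?", "12 * 3 = 36.", "36"),
        TeacherSample("Broken sample", "", "n/a"),  # dropped: empty trace
    ]
    for record in build_dataset(demo):
        print(record)
```

The student never sees the teacher's weights or logits in this setup; it only learns from the generated text, which is why the approach works across different base model families.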

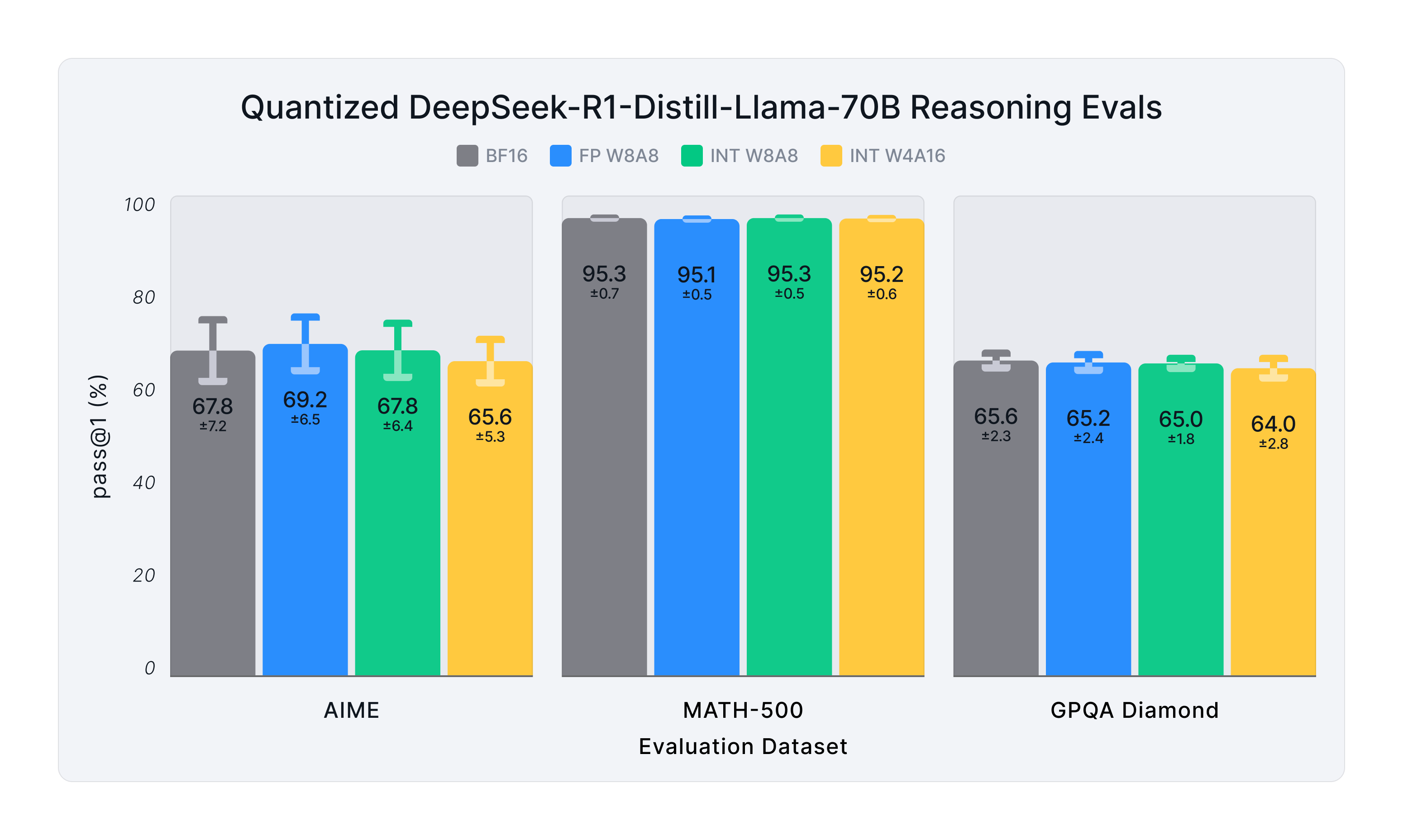

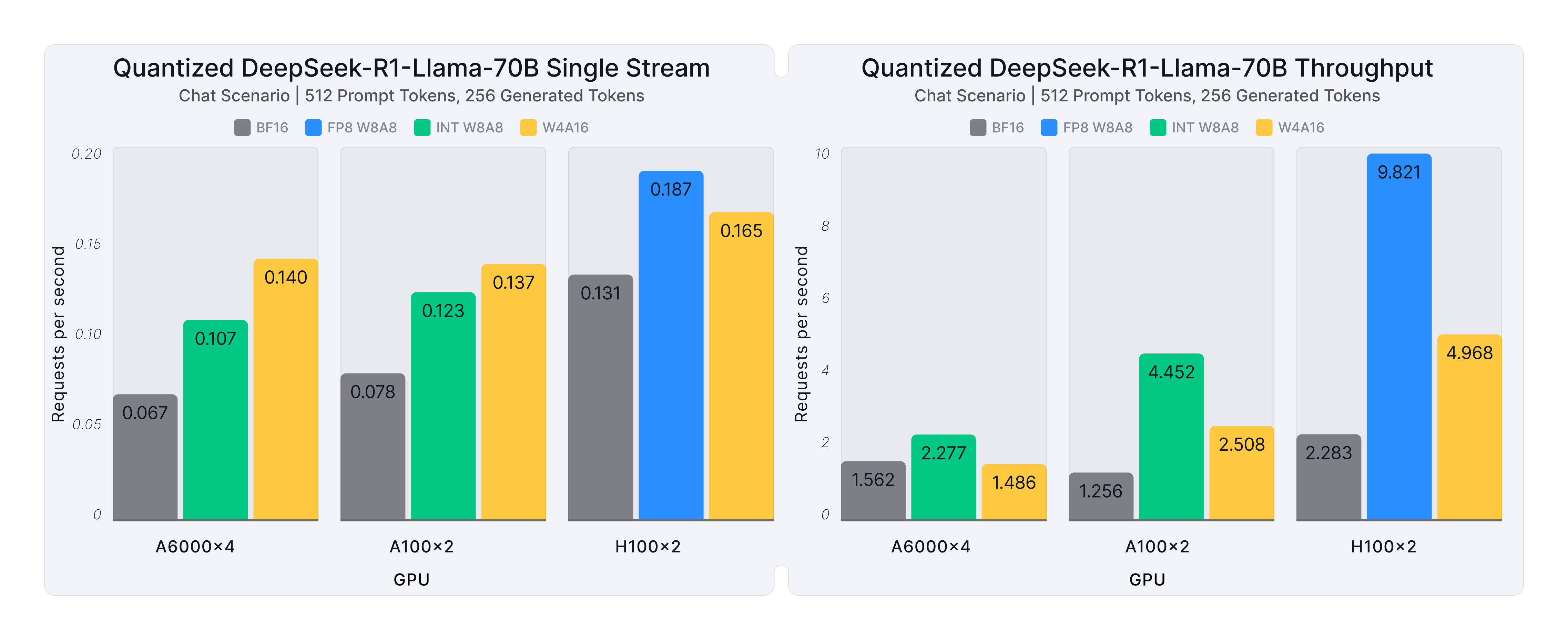

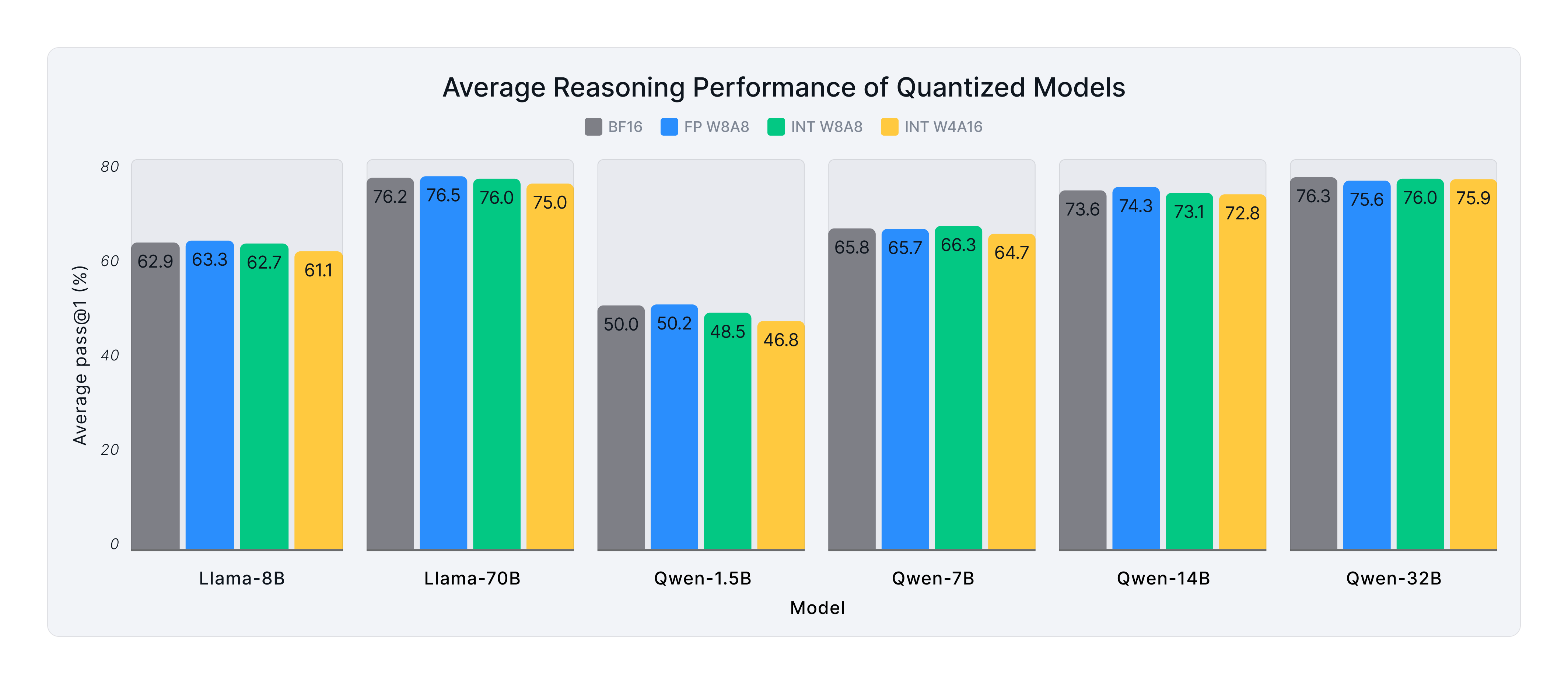

Two techniques, distillation and quantization, have emerged to shrink models while retaining performance. Distillation trains a smaller model to reproduce a larger model's behavior; quantization stores a model's weights at lower numeric precision.

DeepSeek R1 is a family of reasoning-first large language models built by the Chinese AI lab DeepSeek. Unlike typical chat models that mainly optimize for fluent text, R1 is designed for reasoning, and it gets there using large-scale reinforcement learning (RL), not just supervised fine-tuning.

The distilled models (1.5B, 7B, 8B, 14B, 32B, 70B) were produced by fine-tuning Qwen2.5 and Llama 3 series checkpoints on reasoning traces generated by the full R1 model. They are dense transformer networks, not MoE, which makes them easier to quantize and deploy on single GPUs.

Knowledge distillation transfers the capabilities of a larger, more powerful "teacher" model to a smaller, more efficient "student" model. Open-source implementations of distillation techniques designed specifically for DeepSeek R1 models exist.
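As a rough illustration of what quantization does to a weight tensor, the sketch below applies symmetric per-tensor int8 quantization to a short list of floats and then reconstructs them. This is a generic textbook scheme chosen for clarity, not the specific method used for any published DeepSeek R1 quantized checkpoint, and the function names are illustrative.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to the range [-127, 127].

    Returns the integer codes and the scale needed to reconstruct them.
    """
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate float weights."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    original = [0.5, -1.0, 0.25, 0.0, 0.8]
    codes, scale = quantize_int8(original)
    restored = dequantize(codes, scale)
    worst = max(abs(a - b) for a, b in zip(original, restored))
    print("codes:", codes)
    print("scale:", scale)
    print("max reconstruction error:", worst)
```

Each weight now needs 8 bits instead of 16 or 32, at the cost of a rounding error of at most half the scale per weight. That bounded error is the accuracy side of the size-versus-accuracy trade-off.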

