DeepEval Tutorial: Unit Testing LLM AI Applications

This tutorial takes a close look at DeepEval, often called the "pytest for LLMs," as a way to check that your AI applications are accurate, safe, and reliable before deployment. It shows how to use DeepEval to improve an LLM application one step at a time, walking through evaluation and testing from initial development to post-production.

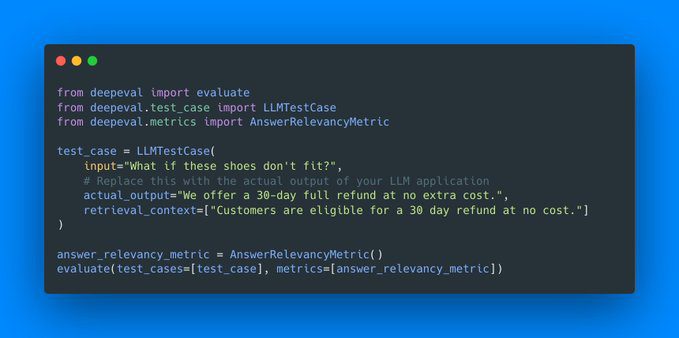

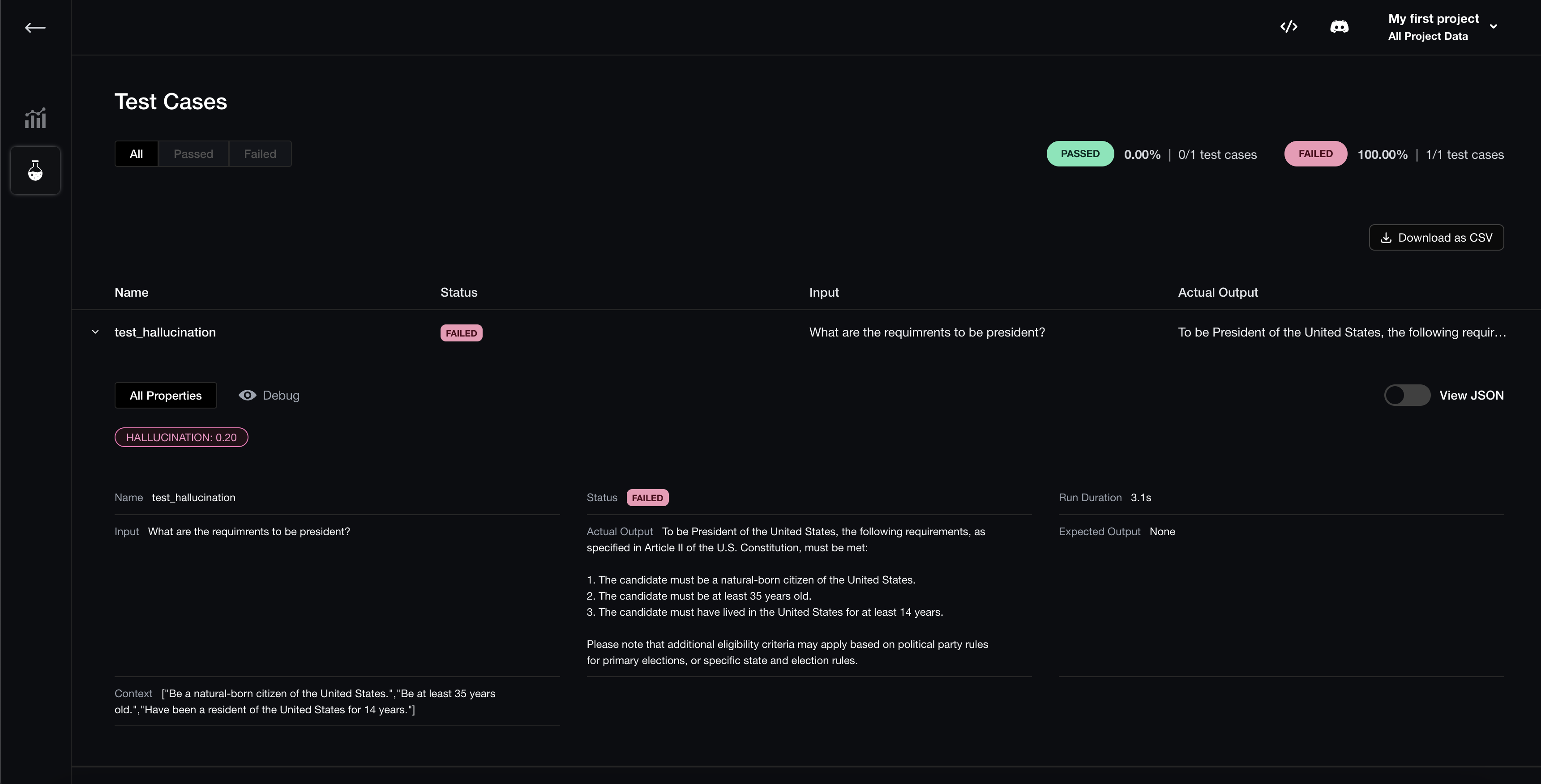

DeepEval is a simple-to-use, open-source evaluation framework for LLM applications. It is similar to pytest but specialized for unit testing LLM outputs. DeepEval evaluates performance with metrics such as hallucination, answer relevancy, and RAGAS, using LLMs and various other NLP models that run locally on your machine. With DeepEval, you can create test cases, define metrics, and evaluate the performance of your LLM application.

In this tutorial, you will learn how to set up DeepEval and create a relevance test similar to the pytest approach. Then you will test LLM outputs using the G-Eval metric and run MMLU benchmarking on the Qwen 2.5 model.
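To make the "test case, metric, threshold" pattern concrete, here is a minimal pure-Python sketch of a pytest-style relevance check. It does not use DeepEval's own classes: `ToyTestCase`, `toy_relevancy`, and `assert_relevant` are hypothetical names invented for illustration, and the word-overlap score is only a crude stand-in for an LLM-judged relevancy metric.

```python
import re
from dataclasses import dataclass


@dataclass
class ToyTestCase:
    """Hypothetical container for one prompt and the model's answer."""
    input: str
    actual_output: str


def _tokens(text: str) -> set:
    # Lowercase and split into alphanumeric words.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def toy_relevancy(case: ToyTestCase) -> float:
    """Fraction of the prompt's words that reappear in the answer.

    An assumption-laden stand-in: real relevancy metrics typically
    ask an LLM to judge the answer rather than counting words.
    """
    question = _tokens(case.input)
    if not question:
        return 0.0
    return len(question & _tokens(case.actual_output)) / len(question)


def assert_relevant(case: ToyTestCase, threshold: float = 0.5) -> float:
    """Fail like a unit test when the score falls below the threshold."""
    score = toy_relevancy(case)
    assert score >= threshold, f"relevancy {score:.2f} < {threshold:.2f}"
    return score


def test_refund_answer_is_relevant():
    # pytest collects this function because its name starts with "test_".
    case = ToyTestCase(
        input="What is the refund policy?",
        actual_output="The refund policy is 30 days.",
    )
    assert_relevant(case, threshold=0.5)
```

Saved in a file such as `test_relevance.py` (an assumed name), the test can be run with plain `pytest`. The design point is the same one the tutorial describes: a fuzzy quality score is turned into a pass/fail unit test by comparing it against a threshold.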

In this article, I'll explore practical approaches to testing generative AI applications, with a special focus on using DeepEval to ensure your LLM systems perform reliably. The hands-on material is aimed at QA engineers, developers, data scientists, and AI practitioners who want techniques to assess AI performance, identify biases, and ensure robust application development. This document also records my takeaways from a deep dive on DeepEval, an open-source LLM evaluation framework.

