Deduplicate Data Streaming Events With SQL Upsert

This blog shows how to use the upsert operation with the SQL connector. You will learn how to set up an environment to use the SQL connector and how to apply the new upsert functionality. Three ways to handle duplicate data in streaming pipelines: learn the benefits, use cases, and more in this article.
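As a rough illustration of how an upsert collapses duplicate events, here is a minimal sketch using Python's built-in `sqlite3` module. SQLite is only a stand-in, not the SQL connector this blog refers to, and the `events` table, its columns, and the `upsert_events` helper are hypothetical names chosen for the example. It assumes a SQLite build that supports `ON CONFLICT ... DO UPDATE` (3.24 or later).

```python
import sqlite3


def upsert_events(conn, events):
    """Apply each (event_id, payload) pair as an upsert keyed on event_id."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS events ("
        "event_id TEXT PRIMARY KEY, payload TEXT)"
    )
    # A repeated event_id updates the existing row instead of adding a new one.
    conn.executemany(
        """
        INSERT INTO events (event_id, payload) VALUES (?, ?)
        ON CONFLICT(event_id) DO UPDATE SET payload = excluded.payload
        """,
        events,
    )
    conn.commit()


conn = sqlite3.connect(":memory:")

# Hypothetical stream: e1 is redelivered unchanged, e2 arrives twice with new data.
stream = [
    ("e1", "created"),
    ("e2", "created"),
    ("e1", "created"),
    ("e2", "updated"),
]
upsert_events(conn, stream)

rows = conn.execute(
    "SELECT event_id, payload FROM events ORDER BY event_id"
).fetchall()
print(rows)
```

Four incoming events leave two rows in the table, one per primary key. The exact upsert syntax differs between databases, so treat the statement above as SQLite-specific.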

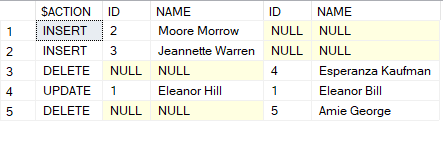

Deduplication removes rows that are duplicated over a set of columns, keeping only the first one or the last one. In some cases, the upstream ETL jobs are not end-to-end exactly-once; this may result in duplicate records in the sink in case of failover.

In this way, we can deduplicate incoming streaming data, reducing duplicates to a single data point based on the primary key defined in the Hudi table DDL.

I am using Spark Structured Streaming with Azure Databricks Delta, where I am writing to a Delta table (the table name is raw). I am reading from Azure Files, where I am receiving out-of-order data ...

Every insertion event is an immutable fact: every event is insert-only. Events can be distributed in a round-robin fashion across workers or shards because they are unrelated. Upsert events are related through a primary key: every event is either an upsert or a delete event for a primary key. Events for the same primary key should land at the same ...
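The two ideas above, keeping the first or last row per set of columns and routing related events by primary key, can be sketched in plain Python. This is an illustrative sketch only: `deduplicate` and `shard_for` are hypothetical helpers, not part of Spark, Hudi, or any streaming engine, and CRC32 is just one convenient stable hash.

```python
import zlib


def deduplicate(rows, key_columns, keep="last"):
    """Collapse rows that share values in key_columns, keeping first or last seen.

    Output order follows the first appearance of each key.
    """
    if keep not in ("first", "last"):
        raise ValueError("keep must be 'first' or 'last'")
    kept = {}
    for row in rows:
        key = tuple(row[column] for column in key_columns)
        if keep == "last" or key not in kept:
            kept[key] = row
    return list(kept.values())


def shard_for(primary_key, num_shards):
    """Map a primary key to a shard with a stable hash, so all events
    for the same key are routed to the same shard."""
    return zlib.crc32(str(primary_key).encode("utf-8")) % num_shards


rows = [
    {"id": 1, "value": "a"},
    {"id": 2, "value": "b"},
    {"id": 1, "value": "c"},  # duplicate of id 1 over the "id" column
]
first_rows = deduplicate(rows, ["id"], keep="first")
last_rows = deduplicate(rows, ["id"], keep="last")
print(first_rows)
print(last_rows)
print(shard_for("user-1", 4) == shard_for("user-1", 4))
```

Insert-only events could skip `shard_for` and be spread round-robin, since no two of them need to meet on the same worker.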

Before, using streaming merge, I could use foreachBatch and deduplicate the batch before merging the data into the target. That worked quite well, although it was a bit fiddly.

Use the SQL upsert operation to remove duplicates in event streams using Fluvio and InfinyOn Cloud.

We have a Hudi Spark pipeline that constantly upserts into a Hudi table. Incoming traffic is 5k records per second on the table. We use the COW (copy-on-write) table type, but after upserts we see a lot of duplicate rows for the same record key. We do set the precombine field, which is a date string field.
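The pattern of deduplicating each micro-batch before merging it into the target can be sketched without any engine at all. The code below is a plain-Python stand-in, not the Spark `foreachBatch`, Delta merge, or Hudi API: `dedupe_batch` and `merge_batch` are hypothetical helpers, the target table is modeled as a dict keyed by record key, and `updated_at` plays the role of an ordering (precombine-style) field. The sample dates are ISO-8601 strings, for which string comparison matches chronological order; other date string formats would not compare correctly this way.

```python
def dedupe_batch(batch, key_field, ordering_field):
    """Keep one record per key within a batch: the one with the greatest
    ordering value (ties go to the record seen later)."""
    latest = {}
    for record in batch:
        key = record[key_field]
        current = latest.get(key)
        if current is None or record[ordering_field] >= current[ordering_field]:
            latest[key] = record
    return latest


def merge_batch(target, batch, key_field="id", ordering_field="updated_at"):
    """Upsert a deduplicated batch into target, ignoring records that are
    older than what the target already holds for that key."""
    for key, record in dedupe_batch(batch, key_field, ordering_field).items():
        existing = target.get(key)
        if existing is None or record[ordering_field] >= existing[ordering_field]:
            target[key] = record
    return target


target = {}

# First batch: two versions of "a" arrive out of order.
merge_batch(target, [
    {"id": "a", "updated_at": "2024-01-02", "value": 2},
    {"id": "a", "updated_at": "2024-01-01", "value": 1},
    {"id": "b", "updated_at": "2024-01-01", "value": 10},
])

# Second batch: a late, stale copy of "a" must not overwrite the newer row.
merge_batch(target, [{"id": "a", "updated_at": "2024-01-01", "value": 1}])

print(target)
```

Deduplicating inside the batch first means the merge step sees at most one candidate per key, which is the property a merge into a keyed table generally relies on.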
