Decoding AI Mastery: The 10 Key Elements of Language Model Evaluation

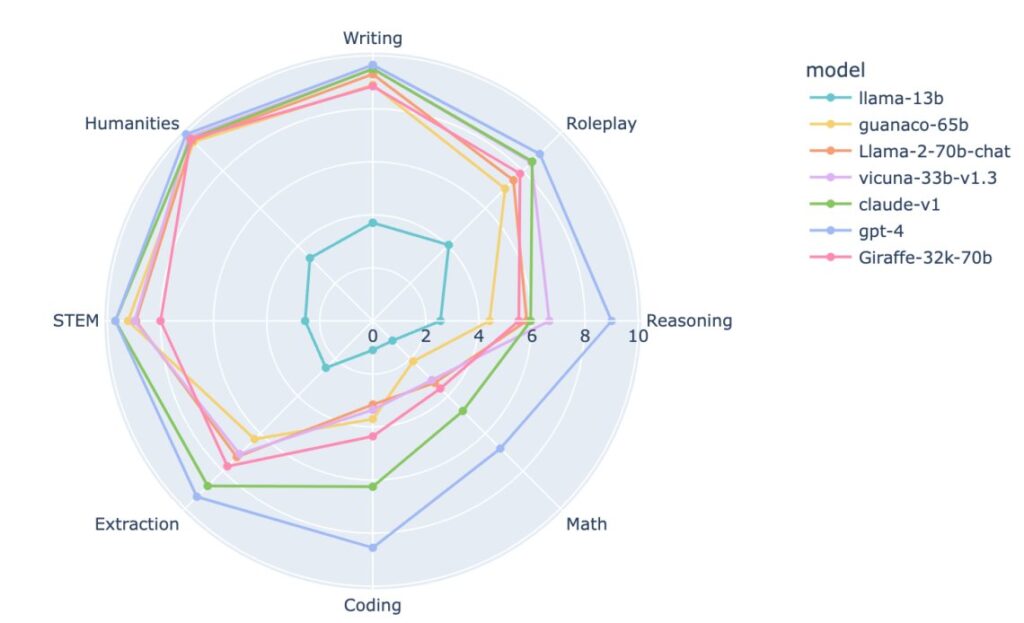

But what makes an AI model not just good, but great? Beyond the buzzwords and the technical jargon, here are ten critical elements to consider when evaluating the prowess of language AI models. Evaluating large language models (LLMs) is crucial throughout their entire lifecycle, encompassing selection, fine-tuning, and secure, dependable deployment. As the capabilities of LLMs increase, it becomes inadequate to depend solely on a single metric (like perplexity) or a single benchmark.
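Since perplexity is the one metric named above, a minimal sketch of how it is commonly computed may help: the exponential of the average negative log-probability the model assigns to each token. The probabilities below are hypothetical illustration values, not output from any real model.

```python
import math

def perplexity(token_log_probs):
    """Perplexity = exp(-(mean natural-log probability per token))."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    avg_negative_log_likelihood = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(avg_negative_log_likelihood)

# Hypothetical probabilities a model assigned to each token of a 4-token text.
probs = [0.25, 0.25, 0.25, 0.25]
ppl = perplexity([math.log(p) for p in probs])
print(round(ppl, 4))  # about 4: the model is as uncertain as a uniform 4-way choice
```

Lower is better, but the number only describes how well the model predicts a given text. It says nothing about factuality, helpfulness, or task success, which is one reason a single metric is not enough.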

By strategically applying these metrics, developers and researchers can more effectively measure and enhance the performance of LLMs across a wide array of language understanding tasks. Learn about key metrics and best practices for assessing large language model performance.

It is no secret that evaluating the outputs of large language models (LLMs) is essential for anyone building robust LLM applications. Whether you're fine-tuning for accuracy, enhancing contextual relevance in a RAG pipeline, or increasing task completion rate in an AI agent, choosing the right evaluation metrics is critical. Yet LLM evaluation remains notoriously difficult, especially when…

Summary: This article discusses various evaluation methods for language models, including perplexity, BLEU score, ROUGE score, human evaluation, and word error rate (WER), highlighting how each method assesses the performance and quality of text generated by the models.
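To make two of the reference-based metrics in the summary concrete, here is a rough sketch in plain Python. `word_error_rate` is the usual word-level edit distance divided by the reference length. `unigram_overlap` is a simplified stand-in for the idea behind BLEU (clipped n-gram precision) and ROUGE (n-gram recall), restricted to single words; it is not the full BLEU or ROUGE definition (no higher-order n-grams, no brevity penalty), and both function names are hypothetical. Tokenization by whitespace is also an assumption.

```python
from collections import Counter

def word_error_rate(reference, hypothesis):
    """(substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words processed
    # so far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,                        # deletion
                            curr[j - 1] + 1,                    # insertion
                            prev[j - 1] + substitution_cost))   # match/substitution
        prev = curr
    return prev[-1] / len(ref)

def unigram_overlap(reference, hypothesis):
    """Clipped unigram precision (BLEU-like) and unigram recall (ROUGE-1-like)."""
    ref_counts = Counter(reference.split())
    hyp_counts = Counter(hypothesis.split())
    overlap = sum((ref_counts & hyp_counts).values())  # per-word minimum counts
    precision = overlap / max(sum(hyp_counts.values()), 1)
    recall = overlap / max(sum(ref_counts.values()), 1)
    return precision, recall

reference = "the cat sat on the mat"
hypothesis = "the cat sat on mat"
wer = word_error_rate(reference, hypothesis)
precision, recall = unigram_overlap(reference, hypothesis)
print(round(wer, 3), precision, round(recall, 3))
```

In this example the hypothesis drops one of six reference words, so the WER is 1/6, every hypothesis word appears in the reference (precision 1.0), and five of the six reference words are recovered (recall 5/6). Established libraries handle tokenization, multiple references, and smoothing, so a sketch like this is best used for understanding rather than reporting results.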

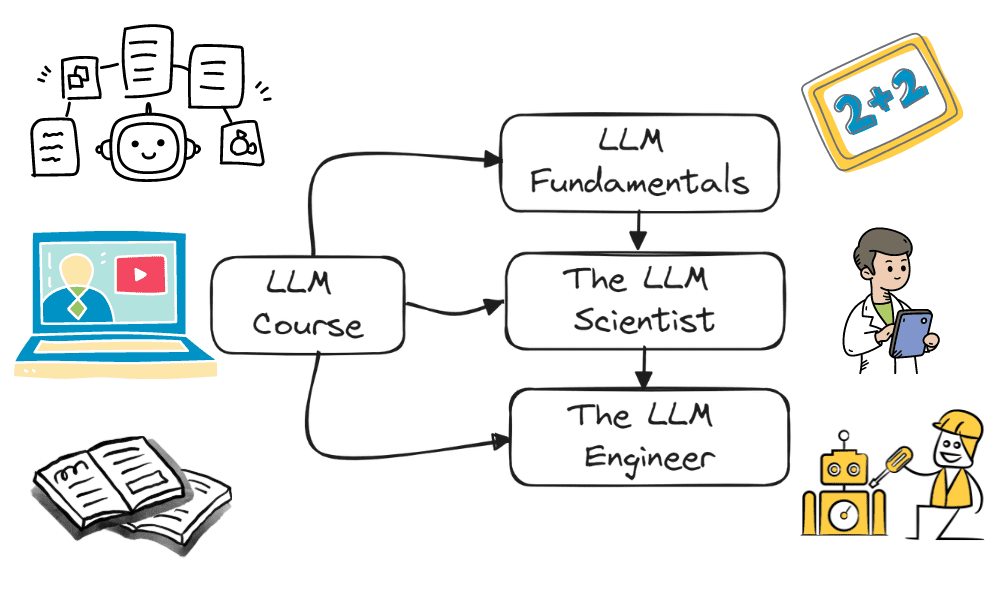

This article demystifies how some popular metrics for evaluating language tasks performed by LLMs work internally, supported by Python code examples that illustrate how to use them easily with Hugging Face libraries.

In the first part of this blog series, we explored a range of evaluation metrics for large language models (LLMs), covering everything from traditional linguistic measures like BLEU and…

Abstract: The rapid advancement of large language models (LLMs) has revolutionized various fields, yet their deployment presents unique evaluation challenges. This whitepaper details the…

Discover how models for language tasks such as text classification, generation, or machine translation can be evaluated. This includes an in-depth exploration of essential classification metrics like precision, recall, and F1 score, and introductions to further metrics and benchmarks.
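The classification metrics mentioned above can be sketched in a few lines of plain Python. This is a minimal binary-classification version, assuming labels are encoded as 0/1 with 1 as the positive class; the function name and the example labels are hypothetical.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Binary precision, recall, and F1 for the given positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return precision, recall, f1

# Hypothetical gold labels and model predictions for six examples.
y_true = [1, 0, 1, 1, 0, 1]
y_pred = [1, 0, 0, 1, 1, 1]
p, r, f1 = precision_recall_f1(y_true, y_pred)
print(p, r, f1)
```

Here there are three true positives, one false positive, and one false negative, so precision, recall, and F1 all come out to 0.75. Precision answers "of the items flagged positive, how many were right?", recall answers "of the truly positive items, how many were found?", and F1 is their harmonic mean.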
