Decision Trees Explained: Why Overfitting Kills Your Model

Decision tree models can learn very detailed decision rules, but this often causes them to fit the training data too closely. As a result, their accuracy drops significantly when evaluated on new, unseen samples. In this article, we examine three common reasons why a trained decision tree model may fail, and we outline simple yet effective strategies to cope with these issues.

Overfitting occurs when a decision tree model becomes overly complex, capturing noise or irrelevant patterns in the training data, and fails to generalize well to unseen data. It is like memorizing the answers to one specific test: you might ace that test, but fail on new questions. That's exactly what overfitting is in machine learning! The tree memorizes the training data instead of learning general patterns, and this leads to poor performance on new, unseen data.

The problem decision trees solve is deceptively simple: given a pile of labelled examples, figure out a set of rules that correctly categorises new, unseen examples. The magic is in how those rules are chosen. A bad algorithm might split the data arbitrarily. These concepts work for any classification task, from email spam filters to medical diagnosis.
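To make "how those rules are chosen" concrete, here is a minimal sketch that scores candidate splits with Gini impurity, one common purity measure. The toy spam data, the function names `gini` and `split_impurity`, and the single "number of links" feature are all assumptions made up for illustration; real tree learners evaluate many features and use more careful candidate thresholds.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0.0 for a pure group, 0.5 for a 50/50 two-class mix."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def split_impurity(rows, threshold):
    """Weighted impurity of the two groups produced by 'value <= threshold'."""
    left = [label for value, label in rows if value <= threshold]
    right = [label for value, label in rows if value > threshold]
    total = len(rows)
    return sum(len(group) / total * gini(group) for group in (left, right) if group)

# Hypothetical toy data: (number of links in an email, label).
emails = [(0, "ham"), (1, "ham"), (2, "ham"), (3, "ham"),
          (6, "spam"), (7, "spam"), (8, "spam"), (9, "spam")]

# An arbitrary cut leaves one group mixed.
arbitrary = split_impurity(emails, 1)

# A greedy learner instead tries each candidate and keeps the purest split.
candidates = sorted({value for value, _ in emails})[:-1]
best_threshold = min(candidates, key=lambda t: split_impurity(emails, t))
best = split_impurity(emails, best_threshold)

print(f"arbitrary cut at 1: impurity {arbitrary:.3f}")
print(f"best cut at {best_threshold}: impurity {best:.3f}")
```

On this toy data the cut at 3 separates the two classes completely, while the arbitrary cut at 1 leaves a mixed group. The same greedy search, repeated without limit on noisy data, is what lets a tree carve out a rule for every odd training example.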

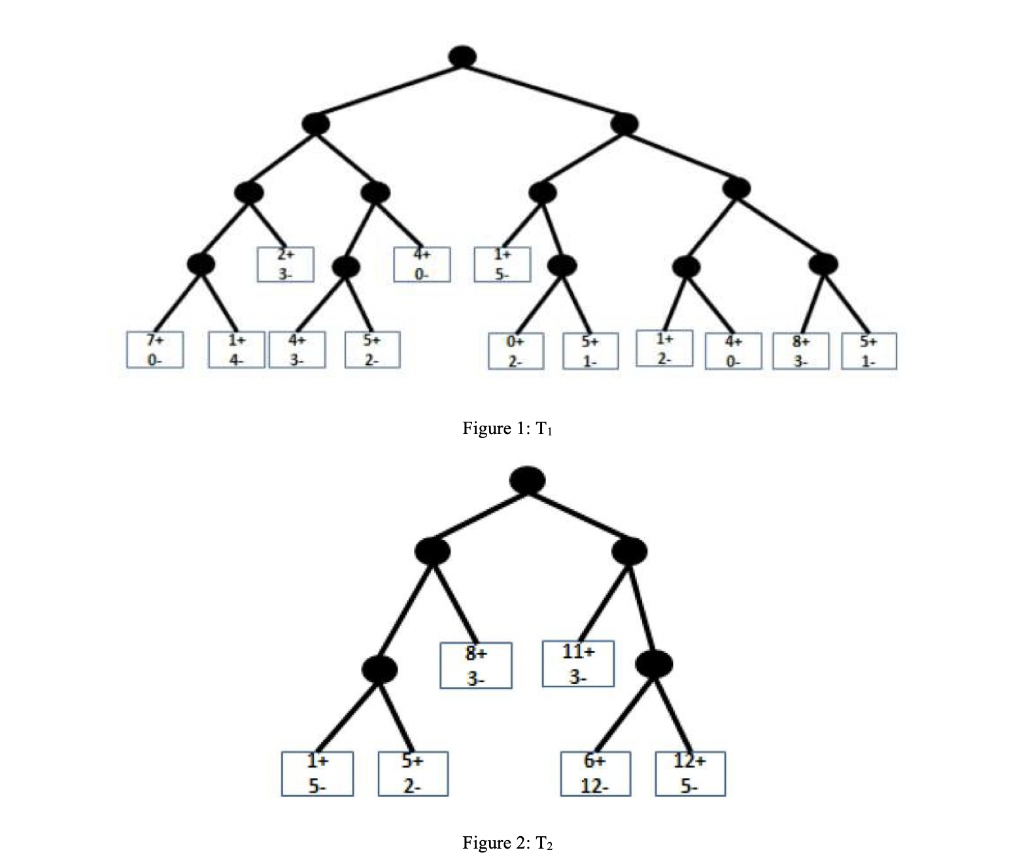

If we keep dividing, we might make purer groups, but there's a risk of overfitting. Overfitting is like trying too hard to fit everything perfectly, which makes the model less useful for new data that the algorithm has yet to see. It happens when your model learns your training data too well. Like, obsessively well. It memorizes the details, even the noise and weird exceptions, but ends up struggling to make good predictions on new data. This is a general problem in machine learning, not only with decision trees.

Addressing overfitting is crucial for building reliable and generalizable models. For decision trees, the usual fix is pruning: limiting or cutting back the tree so that it captures meaningful patterns instead of noise.
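The effect can be sketched with a small from-scratch tree on one noisy feature. This is an illustration under stated assumptions, not any library's implementation: the data is synthetic (the true rule is `x > 5`, with about 10% of labels flipped), all names are hypothetical, and the remedy shown is a simple depth limit (a form of pre-pruning) rather than the post-pruning that some libraries offer.

```python
import random
from collections import Counter

def gini(labels):
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def build(points, max_depth=None, depth=0):
    """Grow a tree on (x, label) pairs. Assumes all x values are distinct."""
    labels = [y for _, y in points]
    majority = Counter(labels).most_common(1)[0][0]
    # Stop when the group is pure or the depth limit is reached.
    if len(set(labels)) == 1 or (max_depth is not None and depth >= max_depth):
        return majority
    points = sorted(points)
    best_score, best_i = None, None
    for i in range(1, len(points)):
        left = [y for _, y in points[:i]]
        right = [y for _, y in points[i:]]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(points)
        if best_score is None or score < best_score:
            best_score, best_i = score, i
    return {
        "threshold": points[best_i - 1][0],  # rule: x <= threshold goes left
        "left": build(points[:best_i], max_depth, depth + 1),
        "right": build(points[best_i:], max_depth, depth + 1),
    }

def predict(tree, x):
    while isinstance(tree, dict):
        tree = tree["left"] if x <= tree["threshold"] else tree["right"]
    return tree

def count_leaves(tree):
    if not isinstance(tree, dict):
        return 1
    return count_leaves(tree["left"]) + count_leaves(tree["right"])

def accuracy(tree, points):
    return sum(predict(tree, x) == y for x, y in points) / len(points)

def make_data(offset, rng, noise=0.1):
    """Synthetic data: true label is x > 5, with a fraction of labels flipped."""
    data = []
    for i in range(200):
        x = i * 0.05 + offset
        y = 1 if x > 5.0 else 0
        if rng.random() < noise:
            y = 1 - y
        data.append((x, y))
    return data

rng = random.Random(0)
train = make_data(0.0, rng)
test = make_data(0.025, rng)

full = build(train)                  # keeps splitting until every leaf is pure
shallow = build(train, max_depth=2)  # depth-limited tree

for name, tree in (("full", full), ("shallow", shallow)):
    print(f"{name}: leaves={count_leaves(tree)}, "
          f"train={accuracy(tree, train):.2f}, test={accuracy(tree, test):.2f}")
```

The unrestricted tree fits every training point, flipped labels included, so its training accuracy is perfect by construction. How much the depth-limited tree gains on the held-out points depends on the data and the noise level, which is why a separate test set is worth printing alongside the training score.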
