Decision Transformer: Reinforcement Learning via Sequence Modeling

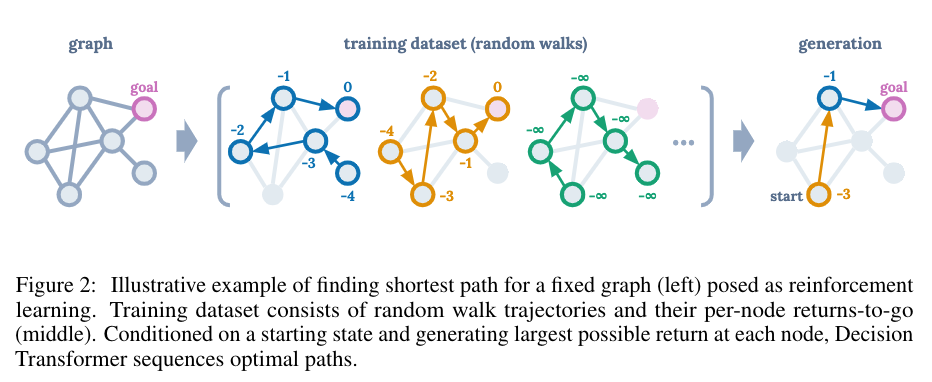

Decision Transformer: Reinforcement Learning via Sequence Modeling is a paper by Lili Chen and 8 other authors. It presents Decision Transformer, which models trajectories autoregressively with minimal modification to the transformer architecture, as summarized in the paper's Figure 1 and Algorithm 1.
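To make "models trajectories autoregressively" concrete, here is a minimal sketch of how a recorded trajectory could be turned into a token sequence. The text above does not spell out the layout, so the sketch assumes the one usually described for Decision Transformer: each timestep contributes a return-to-go (the undiscounted sum of rewards from that step onward), a state, and an action. The function names `returns_to_go` and `interleave` are hypothetical and are not taken from the authors' code.

```python
from typing import Any, List, Sequence, Tuple


def returns_to_go(rewards: Sequence[float]) -> List[float]:
    """Undiscounted suffix sums: rtg[t] = rewards[t] + rewards[t+1] + ..."""
    rtg = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each entry accumulates all later rewards.
    for t in range(len(rewards) - 1, -1, -1):
        running += rewards[t]
        rtg[t] = running
    return rtg


def interleave(
    rtg: Sequence[float], states: Sequence[Any], actions: Sequence[Any]
) -> List[Tuple[str, Any]]:
    """Flatten a trajectory into (kind, value) tokens, three per timestep.

    Assumed order per timestep: return-to-go, state, action.
    """
    tokens: List[Tuple[str, Any]] = []
    for r, s, a in zip(rtg, states, actions):
        tokens.append(("rtg", r))
        tokens.append(("state", s))
        tokens.append(("action", a))
    return tokens


# Toy trajectory with three timesteps (values are illustrative only).
rewards = [1.0, 0.0, 2.0]
states = ["s0", "s1", "s2"]
actions = ["a0", "a1", "a2"]

rtg = returns_to_go(rewards)
tokens = interleave(rtg, states, actions)
```

In this layout an autoregressive model trained to predict each action token sees the return-to-go and state of the current timestep plus everything earlier in the sequence, which is what lets a desired return steer the predicted action.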

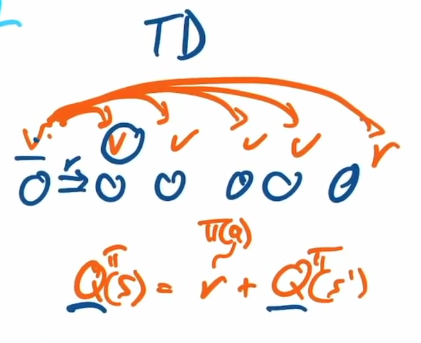

We introduce a framework that abstracts reinforcement learning (RL) as a sequence modeling problem. This allows us to draw upon the simplicity and scalability of the Transformer architecture, and associated advances in language modeling such as GPT-x and BERT. In particular, our primary points of comparison are model-free offline RL algorithms based on TD learning, since our Decision Transformer architecture is fundamentally model-free in nature as well.

One of the questions examined is Q6: does Decision Transformer perform well in sparse-reward settings? In summary, Decision Transformer is an effective model-free, supervised offline RL algorithm based on sequence modeling. It is trained without the TD learning used by the offline baselines it is compared against, and it is presented as mitigating the credit assignment and distribution shift problems seen in other RL algorithms. Through experiments spanning a diverse set of offline RL benchmarks including Atari, OpenAI Gym, and Key-to-Door, we show that our Decision Transformer model can learn to generate diverse behaviors by conditioning on desired returns.
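Conditioning on a desired return can be sketched as an evaluation loop: start from a target return, ask the model for an action, and subtract each received reward from the target before the next step. The sketch below is an illustration under stated assumptions, not the authors' implementation. `toy_step` is a made-up environment, `stub_policy` is a hand-written stand-in for a trained model, and `rollout` is a hypothetical helper name.

```python
from typing import Callable, List, Tuple

# A policy maps the history (returns-to-go, states, past actions) to an action.
Policy = Callable[[List[float], List[int], List[int]], int]
StepFn = Callable[[int, int], Tuple[int, float, bool]]


def toy_step(state: int, action: int) -> Tuple[int, float, bool]:
    """Made-up environment: action 1 pays reward 1.0; episode ends at state 3."""
    next_state = state + 1
    return next_state, float(action), next_state >= 3


def stub_policy(rtgs: List[float], states: List[int], actions: List[int]) -> int:
    """Stand-in for a trained model: collect reward only while some is still wanted."""
    return 1 if rtgs[-1] > 0 else 0


def rollout(
    policy: Policy, step: StepFn, initial_state: int,
    target_return: float, max_steps: int,
) -> Tuple[float, List[float]]:
    """Run one episode, decrementing the return-to-go by each observed reward."""
    rtgs = [target_return]
    states = [initial_state]
    actions: List[int] = []
    total = 0.0
    for _ in range(max_steps):
        action = policy(rtgs, states, actions)
        next_state, reward, done = step(states[-1], action)
        actions.append(action)
        total += reward
        if done:
            break
        # The remaining desired return shrinks by what was just earned.
        rtgs.append(rtgs[-1] - reward)
        states.append(next_state)
    return total, rtgs


total, rtgs = rollout(stub_policy, toy_step, 0, target_return=2.0, max_steps=10)
```

With the stub policy, changing `target_return` changes how much reward the episode collects, which mirrors the idea of generating different behaviors from different desired returns. A learned model is not guaranteed to hit the requested return exactly.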

