Data Level Parallelism In Microprocessors Pptx Programming

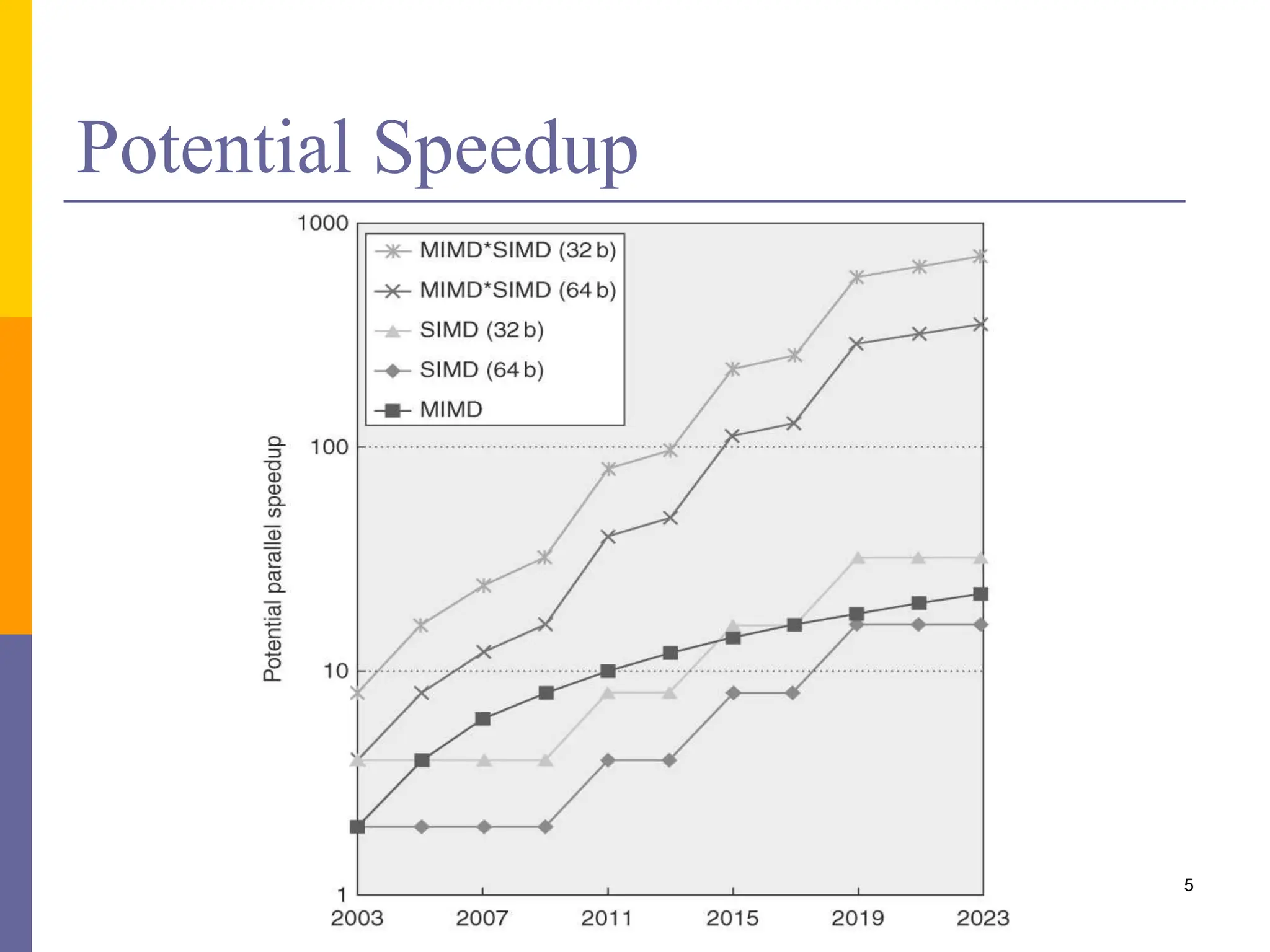

The document discusses data-level parallelism in computer architecture, focusing on vector architectures and SIMD (single instruction, multiple data) extensions. It covers the RV64V extension, the factors that determine vector execution time, and how to program vector architectures for performance. It also explains how SIMD extensions such as SSE exploit fine-grained data parallelism and how GPUs are optimized for data-parallel applications through a multithreaded SIMD execution model.
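As a rough illustration of what programming a vector architecture involves, the sketch below strip-mines a DAXPY loop (`y = a * x + y`) in plain C. The function name `daxpy_strip_mined` and the constant `MVL` (a maximum vector length of 8 elements) are assumptions made for this example and do not come from the document; real hardware reports its own vector length, and each strip would be executed by a few vector instructions rather than a scalar inner loop.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical maximum vector length, in elements per vector operation.
   The value 8 is an assumption for illustration only. */
#define MVL 8

/* Computes y[i] = a * x[i] + y[i] for i in [0, n),
   processing the arrays in strips of at most MVL elements. */
void daxpy_strip_mined(size_t n, double a, const double *x, double *y)
{
    assert(n == 0 || (x != NULL && y != NULL));

    for (size_t start = 0; start < n; start += MVL) {
        /* Strip length: MVL, or the remainder on the final strip. */
        size_t vl = (n - start < MVL) ? (n - start) : MVL;

        /* On a vector machine this inner loop would correspond to a
           vector load, a multiply-add and a vector store over vl elements. */
        for (size_t i = 0; i < vl; i++) {
            y[start + i] = a * x[start + i] + y[start + i];
        }
    }
}
```

Every element is updated independently of the others, which is the property that lets one instruction operate on a whole strip at once.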

This lecture discusses the main types of processors (CPU, GPU, FPGA) and how they differ in terms of parallelism. It covers instruction-level, thread-level, and data-level parallelism, and the role of hardware and software in finding and exploiting parallelism.

Data Level Parallelism, from Computation Structures (Electrical Engineering and Computer Science, MIT OpenCourseWare).

Instruction-level parallelism: within a single thread, unrelated instructions are allowed to execute in parallel. Thread-level parallelism: multiple threads run concurrently, for example on the multiple cores of a single machine. Data-level parallelism: the same operation is performed concurrently on multiple pieces of data (e.g., on GPUs).

The "end" of uniprocessor speedup leads to multiprocessors. The main challenges of parallelism are the fraction of a program that is parallelizable and the long latency to remote memory. The design trade-offs include centralized versus distributed memory (centralized for small multiprocessors; distributed for lower latency and larger bandwidth in larger multiprocessors), message passing versus a shared address space, uniform versus non-uniform memory access time, and snooping caches over a shared medium for smaller multiprocessors.
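The text lists the parallelizable fraction of a program as a key challenge but does not say how to quantify it. A standard way to do so is Amdahl's law, speedup = 1 / ((1 - p) + p / n), where p is the parallelizable fraction and n the number of processors. The helper below is a minimal sketch of that formula; the name `amdahl_speedup` is chosen for this example, and the model ignores communication and memory latency.

```c
#include <assert.h>

/* Amdahl's law: upper bound on speedup when a fraction
   parallel_fraction (0.0 to 1.0) of the work is spread over
   `processors` processors and the rest stays serial. */
double amdahl_speedup(double parallel_fraction, int processors)
{
    assert(parallel_fraction >= 0.0 && parallel_fraction <= 1.0);
    assert(processors >= 1);

    double serial_part = 1.0 - parallel_fraction;
    double parallel_part = parallel_fraction / processors;
    return 1.0 / (serial_part + parallel_part);
}
```

Even with 90% of the work parallelizable, 100 processors give a speedup of only about 9, because the remaining serial 10% dominates the run time.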

Microprocessor-dependent optimizations in this product are intended for use with Intel microprocessors. Certain optimizations not specific to Intel microarchitecture are reserved for Intel microprocessors.

Lecture 4: Parallel programming basics (the thought process of parallelizing a program in the data-parallel and shared-address-space models).

This chapter discusses parallel programming platforms, focusing on architectural innovations that address bottlenecks in processing rates. It covers implicit parallelism, microprocessor trends, pipelining, superscalar execution, and memory system limitations, providing insights into optimizing serial and parallel code for improved performance.

The programmer can write programs that are executed for every vertex as well as for every fragment. This allows fully customizable geometry and shading effects that go well beyond the generic look and feel of older 3D applications.
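The idea of a program that runs once per fragment can be sketched on the CPU in C. This is only an analogy under stated assumptions: the `Color` type, the `FragmentProgram` function-pointer type, `gradient_shader` and `shade_all` are all hypothetical names for this example and do not correspond to any real graphics API. A GPU would run the per-fragment function for many fragments at once, whereas this sketch visits them in a sequential loop.

```c
#include <assert.h>
#include <stddef.h>

typedef struct { float r, g, b; } Color;

/* Hypothetical "fragment program": computes one pixel's color from its
   normalized coordinates only, so each call is independent of the others. */
typedef Color (*FragmentProgram)(float u, float v);

/* Example program: red follows u, green follows v, blue is constant. */
Color gradient_shader(float u, float v)
{
    Color c = { u, v, 0.5f };
    return c;
}

/* Runs the given program once for every pixel of the framebuffer. */
void shade_all(Color *framebuffer, size_t width, size_t height,
               FragmentProgram program)
{
    assert(framebuffer != NULL && program != NULL);
    assert(width > 1 && height > 1);

    for (size_t y = 0; y < height; y++) {
        for (size_t x = 0; x < width; x++) {
            float u = (float)x / (float)(width - 1);
            float v = (float)y / (float)(height - 1);
            framebuffer[y * width + x] = program(u, v);
        }
    }
}
```

Because no pixel depends on another pixel's result, the two loops can be distributed across as many parallel lanes as the hardware provides.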

