Data Intensive Computing

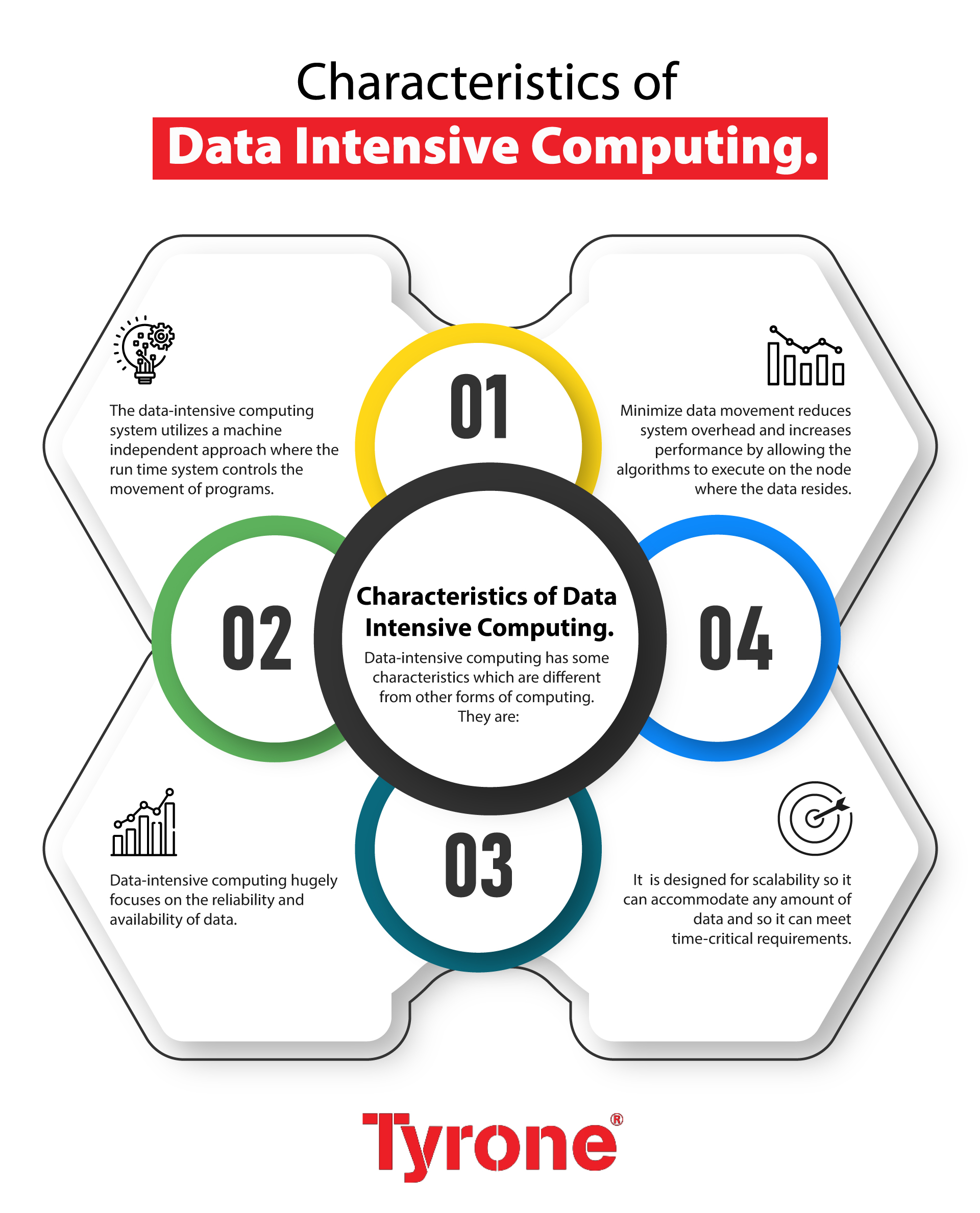

Data intensive computing is a class of parallel computing applications that use a data parallel approach to process large volumes of data, typically terabytes or petabytes in size and commonly referred to as big data. Data intensive applications are programs primarily focused on the manipulation of massive datasets. These applications are typically implemented as data parallel programs that exploit the distribution of data among nodes in a parallel computer for concurrent processing.
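The data parallel approach described above can be illustrated with a minimal sketch. It assumes a toy word-count workload and uses threads on a single machine as a stand-in for the nodes of a parallel computer. The function names (`partition`, `count_words`, `data_parallel_word_count`) are hypothetical and chosen only for illustration; a real system would distribute the partitions across a cluster.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def partition(records, n_parts):
    """Split records into n_parts chunks by round-robin assignment."""
    return [records[i::n_parts] for i in range(n_parts)]


def count_words(chunk):
    """Per-partition work: count words in one chunk, independently of the others."""
    counts = Counter()
    for line in chunk:
        counts.update(line.lower().split())
    return counts


def data_parallel_word_count(records, n_workers=4):
    """Apply the same operation to every partition concurrently, then merge."""
    chunks = partition(records, n_workers)
    # Threads stand in for cluster nodes here (an assumption of this sketch).
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(count_words, chunks))
    total = Counter()
    for partial in partials:
        total.update(partial)  # merge step: sum the per-partition counts
    return total


if __name__ == "__main__":
    lines = ["big data", "data parallel", "big big"]
    print(data_parallel_word_count(lines, n_workers=2))
```

The key property is that each partition is processed without reference to any other, so the work scales by adding workers; only the final merge step combines results.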

Data intensive computing is a field that deals with massive amounts of data and complex problems. Its topics include hardware architectures, data management, dimension reduction, classification, and visual analysis. A data intensive application is one that primarily deals with large volumes of data, complex processing, and distributed architectures. Through the development of new classes of software, algorithms, and hardware, data intensive applications can provide timely and meaningful analytical results in response to exponentially growing data complexity and associated analysis requirements. In short, data intensive computing refers to the use of a data parallel approach in parallel computing applications to process large amounts of data.

More broadly, data intensive computing refers to the use of computational resources and techniques to process, analyze, and extract insights from large volumes of data. It is a rapidly expanding field of technology that uses large clusters of computers to analyze, manage, store, and process large amounts of data in order to solve complex problems. One argument holds that data intensive computing would further cement semiconductor technology as a foundational technology with multidimensional pathways for growth. Data intensive computing also draws on techniques from other areas of research and develops them further for its own purposes. These include, in particular, artificial intelligence and high performance computing methods.
