Data Engineering With AWS, Azure Databricks, Apache Spark 3, and Kafka

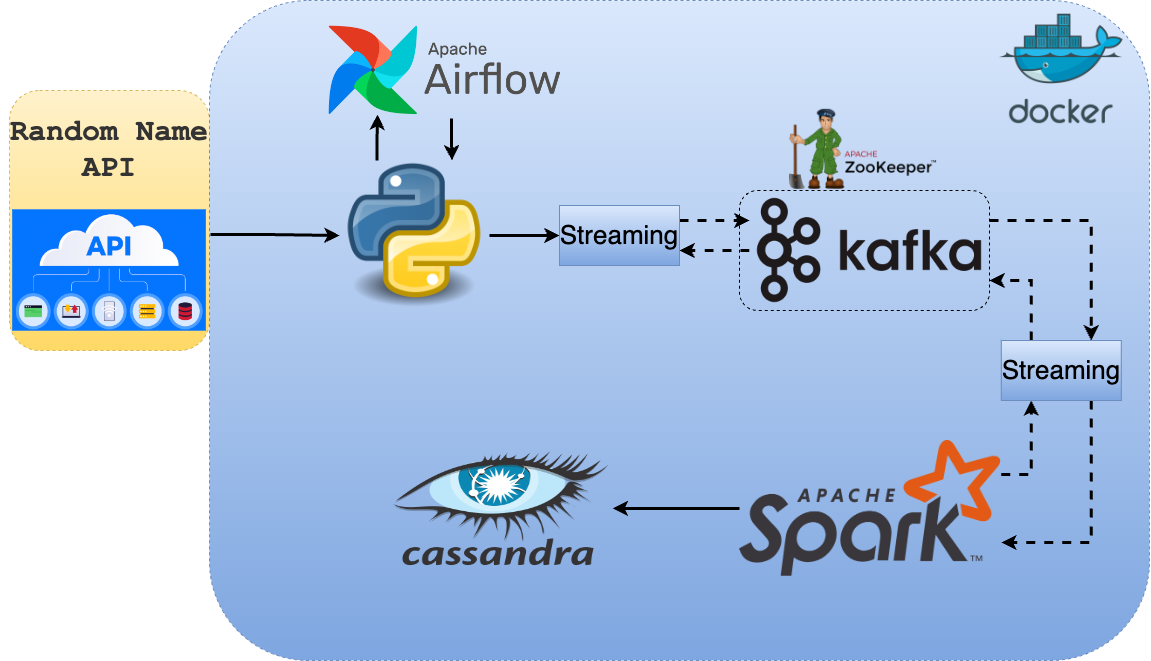

In this course I will talk about the open-source data processing technologies Spark and Kafka, which are among the most widely used and popular frameworks for batch and stream processing. You will learn Spark from level 100 to level 400 through real-life hands-on exercises and projects. The Databricks Runtime is a reliable, performance-optimized compute environment for running Spark workloads, including batch and streaming. It provides Photon, a high-performance, Databricks-native vectorized query engine, along with infrastructure optimizations such as autoscaling.
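
The difference between batch and stream processing can be illustrated with a minimal plain-Python sketch. This is not the Spark API: it only models the micro-batch approach, which is Spark Structured Streaming's default execution mode, where records are consumed in small batches and a running result is updated after each one. The function names and the word-count workload are illustrative assumptions.

```python
from typing import Dict, Iterable, Iterator, List


def batch_word_count(records: Iterable[str]) -> Dict[str, int]:
    # Batch: the whole bounded dataset is available up front and processed in one pass.
    counts: Dict[str, int] = {}
    for record in records:
        for word in record.split():
            counts[word] = counts.get(word, 0) + 1
    return counts


def micro_batches(source: Iterable[str], size: int) -> Iterator[List[str]]:
    # Stream: records arrive over time; group them into small batches as they come in.
    batch: List[str] = []
    for record in source:
        batch.append(record)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch  # flush the last, possibly partial, batch


def streaming_word_count(source: Iterable[str], size: int = 2) -> Iterator[Dict[str, int]]:
    # Keep running state across micro-batches and emit a snapshot of the totals after each one.
    state: Dict[str, int] = {}
    for batch in micro_batches(source, size):
        for word, n in batch_word_count(batch).items():
            state[word] = state.get(word, 0) + n
        yield dict(state)


events = ["spark kafka", "spark", "kafka spark databricks"]
for snapshot in streaming_word_count(events, size=2):
    print(snapshot)
# {'spark': 2, 'kafka': 1}
# {'spark': 3, 'kafka': 2, 'databricks': 1}
```

The final streaming snapshot equals the batch result over the same records, mirroring the Structured Streaming idea of treating a stream as a table that is continuously appended to and incrementally re-aggregated.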

Learn how to harness Apache Spark and powerful clusters running on the Azure Databricks platform to run large data engineering workloads in the cloud. This is a complete data engineering course covering big data with Hadoop and Spark. The course focuses on various aspects of big data frameworks such as Hadoop and Spark, along with cloud technologies such as the AWS data engineering stack and Databricks. We will be learning about many tools in the Hadoop ecosystem, such as Hive, Sqoop, Flume, Spark, and Kafka. You can run your Spark and Structured Streaming workloads on the Databricks Runtime by building your Spark programs as notebooks, JARs, or Python wheels. See Databricks Runtime for Apache Spark.
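
A Spark program packaged as a Python wheel typically exposes an entry-point function that the job runner calls, with task parameters passed as command-line arguments. The following is a minimal sketch of such an entry-point module, assuming a hypothetical job named `sales_etl` with hypothetical `--input`, `--output`, and `--mode` parameters. The Spark work is kept in `run()`, and PySpark is imported there so that argument parsing can be exercised without a Spark installation.

```python
"""Hypothetical entry-point module for a Spark job packaged as a Python wheel."""
import argparse
from typing import List, Optional


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    # Hypothetical job parameters; argv=None makes argparse read sys.argv[1:].
    parser = argparse.ArgumentParser(prog="sales_etl")
    parser.add_argument("--input", required=True, help="Source path, e.g. a cloud storage URI")
    parser.add_argument("--output", required=True, help="Destination path")
    parser.add_argument("--mode", choices=["overwrite", "append"], default="append")
    return parser.parse_args(argv)


def run(input_path: str, output_path: str, mode: str) -> None:
    # Imported lazily so the module can be loaded and tested without PySpark installed.
    from pyspark.sql import SparkSession

    # On a cluster this returns the existing session rather than creating a new one.
    spark = SparkSession.builder.appName("sales_etl").getOrCreate()
    df = spark.read.parquet(input_path)
    # Illustrative transformation: drop exact duplicate rows before writing.
    df.dropDuplicates().write.mode(mode).parquet(output_path)


def main(argv: Optional[List[str]] = None) -> int:
    # The function a wheel's entry point would reference.
    args = parse_args(argv)
    run(args.input, args.output, args.mode)
    return 0
```

To make `main` callable from the installed wheel, it would be declared as an entry point in the package metadata (for example under `[project.scripts]` in `pyproject.toml`); the exact job configuration depends on the platform and is not shown here.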

You'll start with big data and Hadoop foundations, master AWS (EC2, S3, RDS, IAM, CloudWatch) and Azure (ADLS, SQL Database, Databricks, Event Hubs), and dive deep into Apache Spark and PySpark for real-world data processing.

This project showcases a complete data engineering solution using Microsoft Azure, PySpark, and Databricks. It involves building a scalable ETL pipeline to process and transform data efficiently.

On Google Cloud, Databricks is a managed Spark cluster environment that uses compute resources from GCP. A Databricks-managed Spark cluster achieves higher parallelism by pairing a single driver with a dynamically sized set of worker nodes.

This repository serves as a one-stop reference for data engineers at all levels. It consolidates knowledge across the entire data engineering stack, including SQL, PySpark, Python, Snowflake, Databricks, AWS services, system design, and more. The materials are sourced from industry experts, personal interview experiences, and hands-on practice.
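
The driver/worker split behind a scalable ETL pipeline can be sketched in plain Python. This is a minimal model, not the Spark or Databricks API: it assumes a hypothetical CSV schema (`order_id,country,amount`), hypothetical function names, and in-memory stand-ins for storage. The "driver" partitions the input, "workers" parse and pre-aggregate each partition in parallel, and the driver merges the partial results.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, Iterable, List, Tuple


def extract() -> List[str]:
    # Stand-in for reading raw CSV lines from storage such as S3 or ADLS (hypothetical data).
    return [
        "o1,US,10.0",
        "o2,DE,5.5",
        "o3,US,2.5",
        "bad line",
        "o4,FR,7.0",
        "o5,DE,4.5",
    ]


def partition(lines: List[str], n: int) -> List[List[str]]:
    # Driver: split the input into n slices, one per parallel task.
    return [lines[i::n] for i in range(n)]


def transform(lines: Iterable[str]) -> Dict[str, float]:
    # Worker: parse rows, skip malformed ones, and pre-aggregate revenue per country.
    totals: Dict[str, float] = {}
    for line in lines:
        parts = line.split(",")
        if len(parts) != 3:
            continue  # skip malformed records
        _, country, amount = parts
        totals[country] = totals.get(country, 0.0) + float(amount)
    return totals


def merge(partials: Iterable[Dict[str, float]]) -> Dict[str, float]:
    # Driver: combine per-partition results into the final aggregate.
    result: Dict[str, float] = {}
    for part in partials:
        for country, amount in part.items():
            result[country] = result.get(country, 0.0) + amount
    return result


def load(result: Dict[str, float]) -> List[Tuple[str, float]]:
    # Stand-in for writing to a sink such as a table or database.
    return sorted(result.items())


def run_pipeline(num_workers: int = 3) -> List[Tuple[str, float]]:
    slices = partition(extract(), num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partials = list(pool.map(transform, slices))
    return load(merge(partials))


print(run_pipeline())
# [('DE', 10.0), ('FR', 7.0), ('US', 12.5)]
```

Threads stand in for executors on separate worker machines; because of Python's global interpreter lock they do not speed up CPU-bound work, so the sketch shows the structure of partitioned processing rather than real speedup. The result is the same for any worker count, which is what lets a cluster add or remove workers without changing the answer.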
