Data Engineering Simple And Complex Data Pipelines

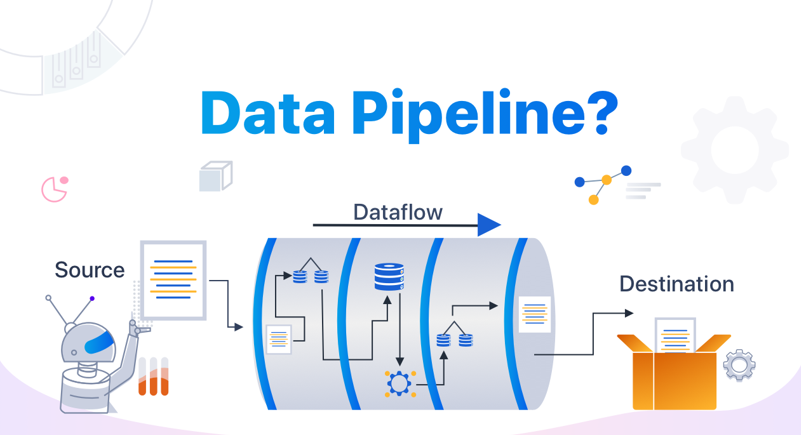

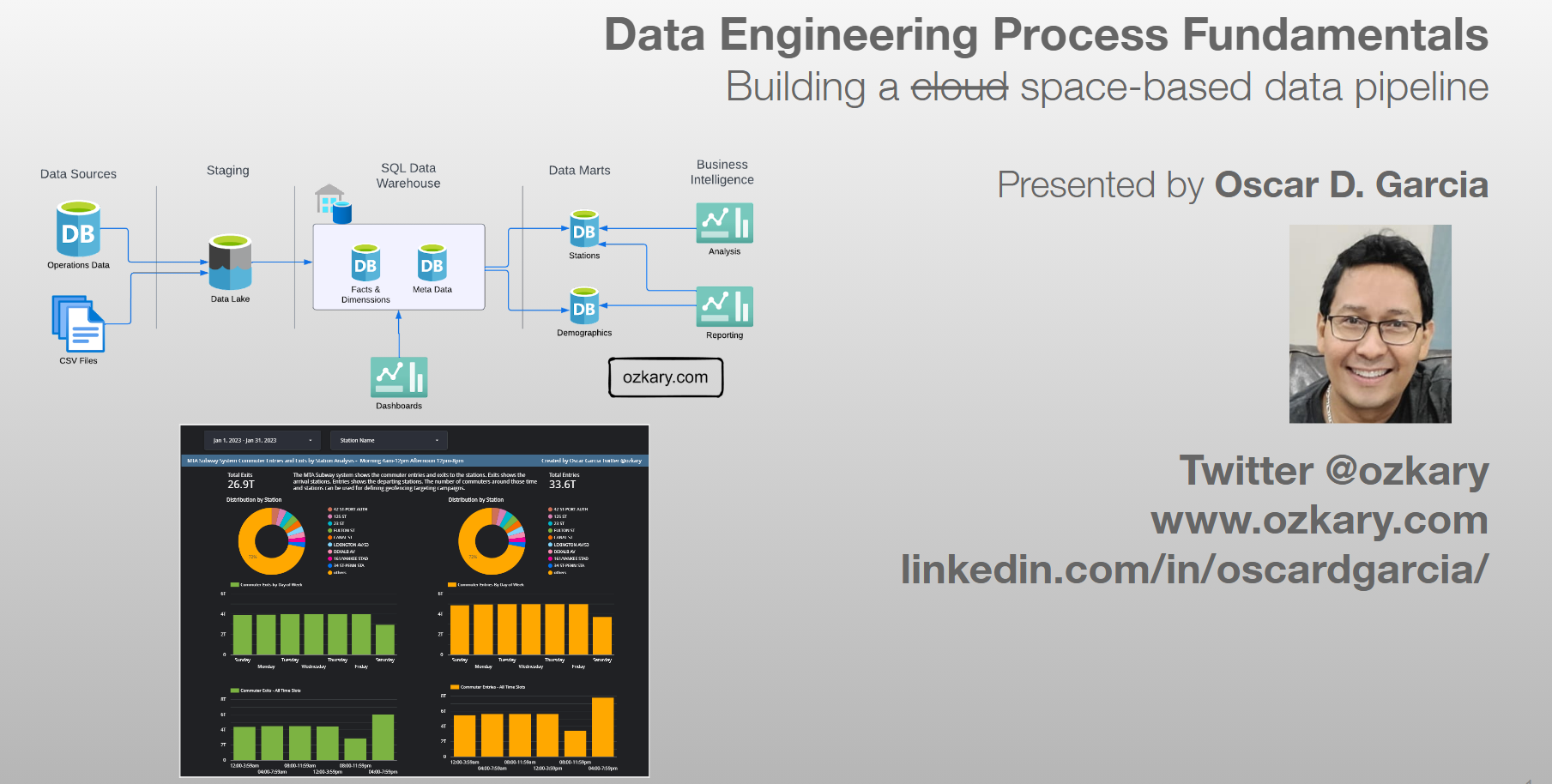

Powering Modern Data Pipelines Data Engineering With Python

A data pipeline is a set of tools and processes for collecting, processing, and delivering data from one or more sources to a destination where it can be analyzed and used. In this article, we'll provide a comprehensive introduction to data pipelines, covering their components, design principles, common tools and technologies, and a step-by-step example of building a simple data pipeline.
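To make the collect, process, and deliver stages concrete, here is a minimal sketch using only the Python standard library. The sample CSV text, the `orders` table, and the function names `collect`, `process`, `deliver`, and `run_pipeline` are all assumptions made for illustration; a real pipeline would read from actual sources and would likely use a dedicated framework.

```python
import csv
import io
import sqlite3

# Hypothetical sample input; a real source could be a file, an API, or a database.
RAW_CSV = "order_id,amount\n1,19.99\n2,5.00\n3,not_a_number\n"


def collect(raw_text):
    """Collect: read rows from the source as dictionaries."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def process(rows):
    """Process: convert types and drop rows that cannot be parsed."""
    cleaned = []
    for row in rows:
        try:
            cleaned.append((int(row["order_id"]), float(row["amount"])))
        except ValueError:
            continue  # this sketch simply skips malformed rows
    return cleaned


def deliver(records, connection):
    """Deliver: write the cleaned records to the destination table."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS orders (order_id INTEGER, amount REAL)"
    )
    connection.executemany("INSERT INTO orders VALUES (?, ?)", records)
    connection.commit()


def run_pipeline(raw_text, connection):
    """Run the three stages in order: collect, process, deliver."""
    deliver(process(collect(raw_text)), connection)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")  # in-memory destination for the demo
    run_pipeline(RAW_CSV, conn)
    print(conn.execute("SELECT COUNT(*) FROM orders").fetchone())
```

Keeping each stage in its own function means any one of them can be swapped out (for example, a different source or destination) without touching the others.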

Data Engineering 101 Best Practices For Building Scalable Data

Understanding data engineering pipeline concepts is essential for designing, building, and managing efficient and reliable data workflows. Here are the core concepts, components, and stages. In this guide, we'll break down the key concepts behind data pipelines, explore common use cases, and share best practices for designing and managing them effectively. Explore the details of data pipeline architecture, the need for one in your organization, and essential best practices, along with practical examples. Learn the principles of data pipeline architecture and common patterns with examples. We show how to build reliable and scalable pipelines for your use cases.

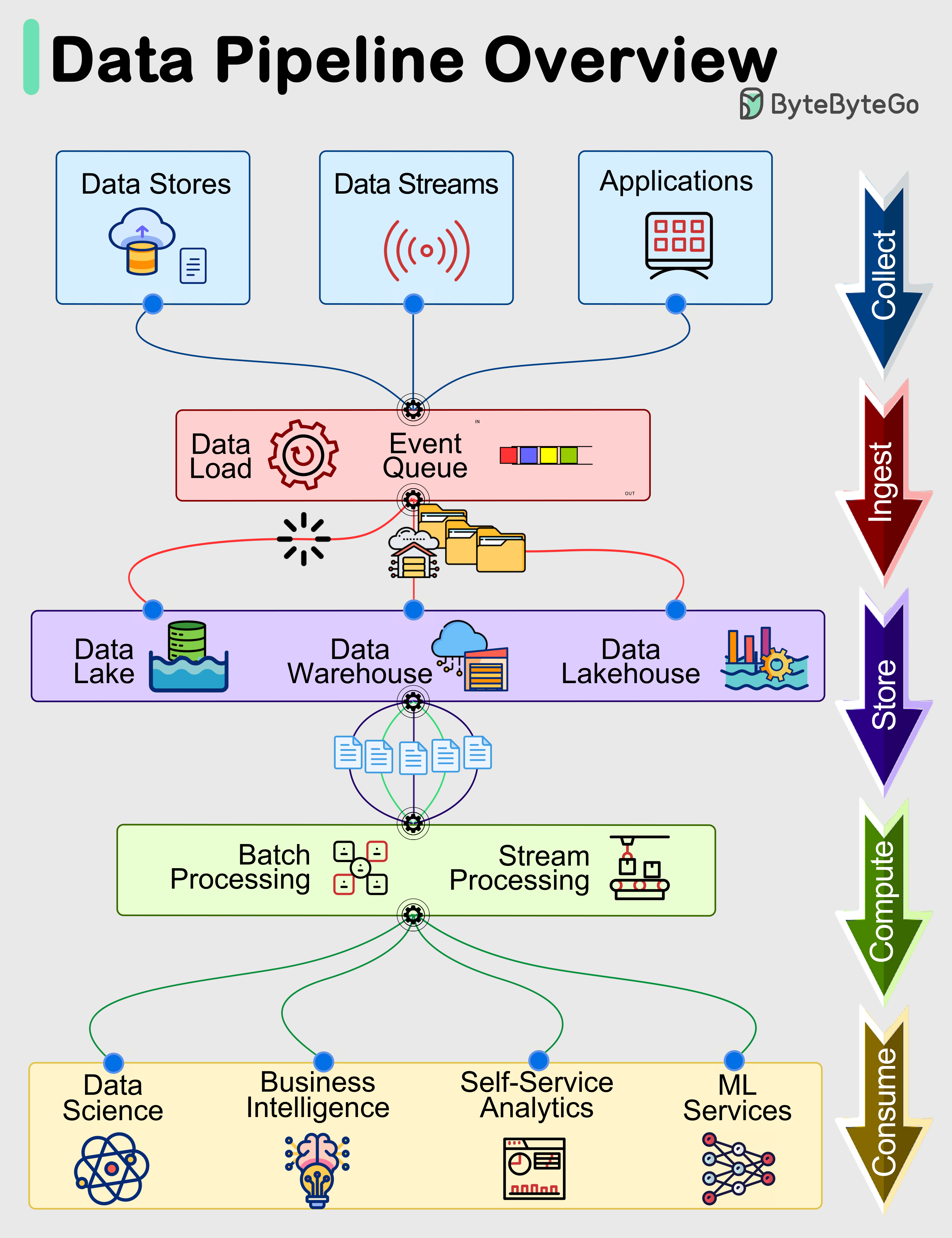

Bytebytego Data Pipelines Overview

A simple data pipeline might be created by copying data from source to target without any changes. A complex data pipeline might include multiple transformation steps, lookups, and updates, among other operations. Data pipeline frameworks have emerged as the backbone of modern data engineering, enabling seamless data flow and transformation processes that can handle massive data volumes. A complete guide to data pipelines: understand their architecture, explore key types, and discover use cases driving modern data operations.

Here's a step-by-step guide to help you create a data pipeline from scratch that's both efficient and scalable.

1. Define your objectives. Before diving in, get clear on what you want to achieve with your data pipeline.
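The difference between a simple and a complex pipeline described above can be illustrated with a short sketch. Everything here is hypothetical: the record fields (`id`, `email`, `country`), the `COUNTRY_NAMES` lookup table, and both function names are assumptions chosen for the example, and plain Python lists and dictionaries stand in for real source and target systems.

```python
# Hypothetical lookup table mapping country codes to display names.
COUNTRY_NAMES = {"US": "United States", "DE": "Germany"}


def simple_pipeline(source, target):
    """Simple pipeline: copy every record from source to target unchanged."""
    target.extend(dict(record) for record in source)
    return target


def complex_pipeline(source, target_by_id, lookup):
    """Complex pipeline: transform, enrich via lookup, then insert or update."""
    for record in source:
        row = {
            "id": record["id"],
            # Transformation step: normalize the email address.
            "email": record["email"].strip().lower(),
            # Lookup step: replace the country code with its name.
            "country": lookup.get(record["country"], "Unknown"),
        }
        # Update step: overwrite the row if the id exists, otherwise insert it.
        target_by_id[row["id"]] = row
    return target_by_id


if __name__ == "__main__":
    records = [
        {"id": 1, "email": " Ada@Example.com ", "country": "US"},
        {"id": 2, "email": "bob@example.com", "country": "DE"},
    ]
    print(simple_pipeline(records, []))
    print(complex_pipeline(records, {}, COUNTRY_NAMES))
```

Keying the target by `id` is one way to make the complex version safe to rerun: loading the same records twice updates existing rows instead of duplicating them.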

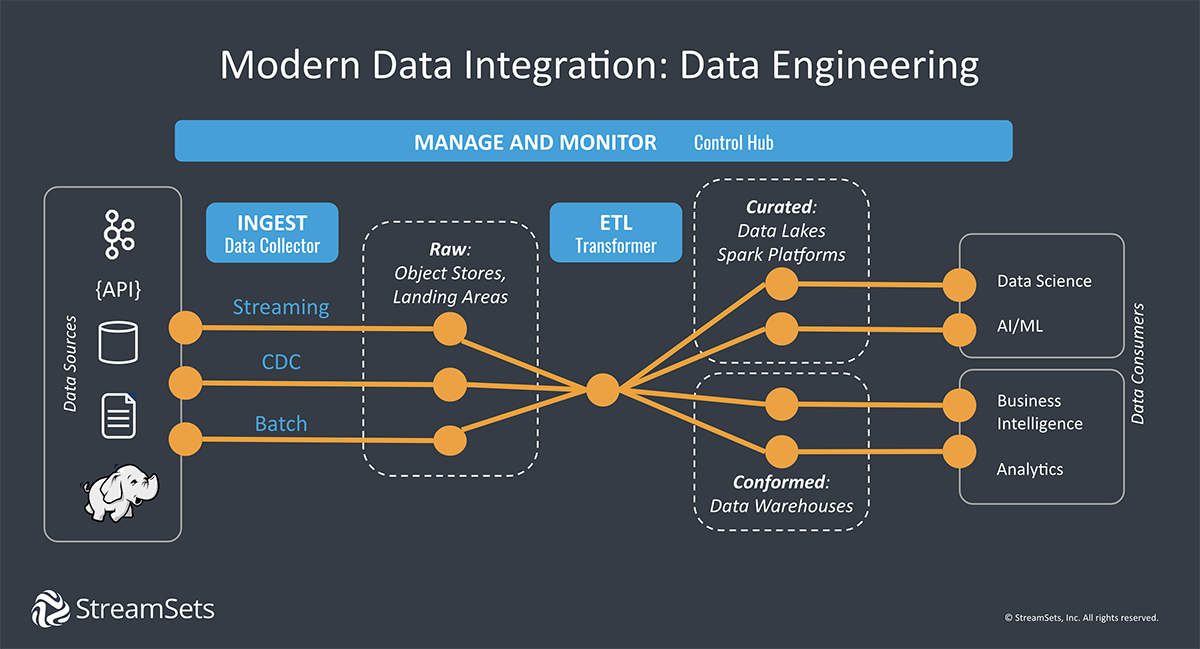

Smart Data Pipelines Architectures Tools Key Concepts Streamsets

Streamlining Data Flow Building Cloud Based Data Pipelines Data

Error Handling And Logging In Data Pipelines Ensuring Data Reliability
