Data Engineering Pipeline

A data pipeline is a set of tools and processes for collecting, processing, and delivering data from one or more sources to a destination where it can be analyzed and used. Explore the details of data pipeline architecture, the need for one in your organization, and essential best practices, along with practical examples.

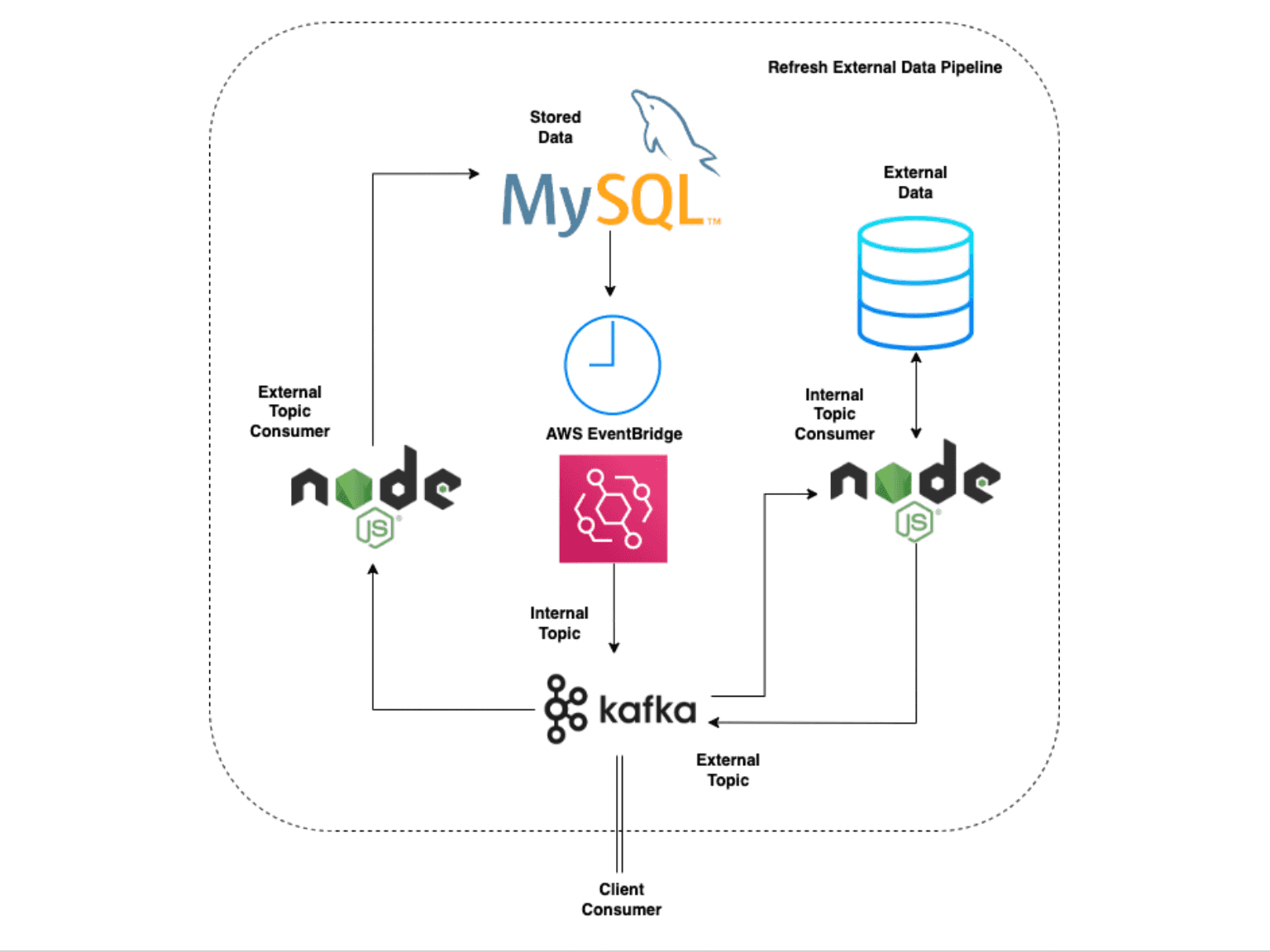

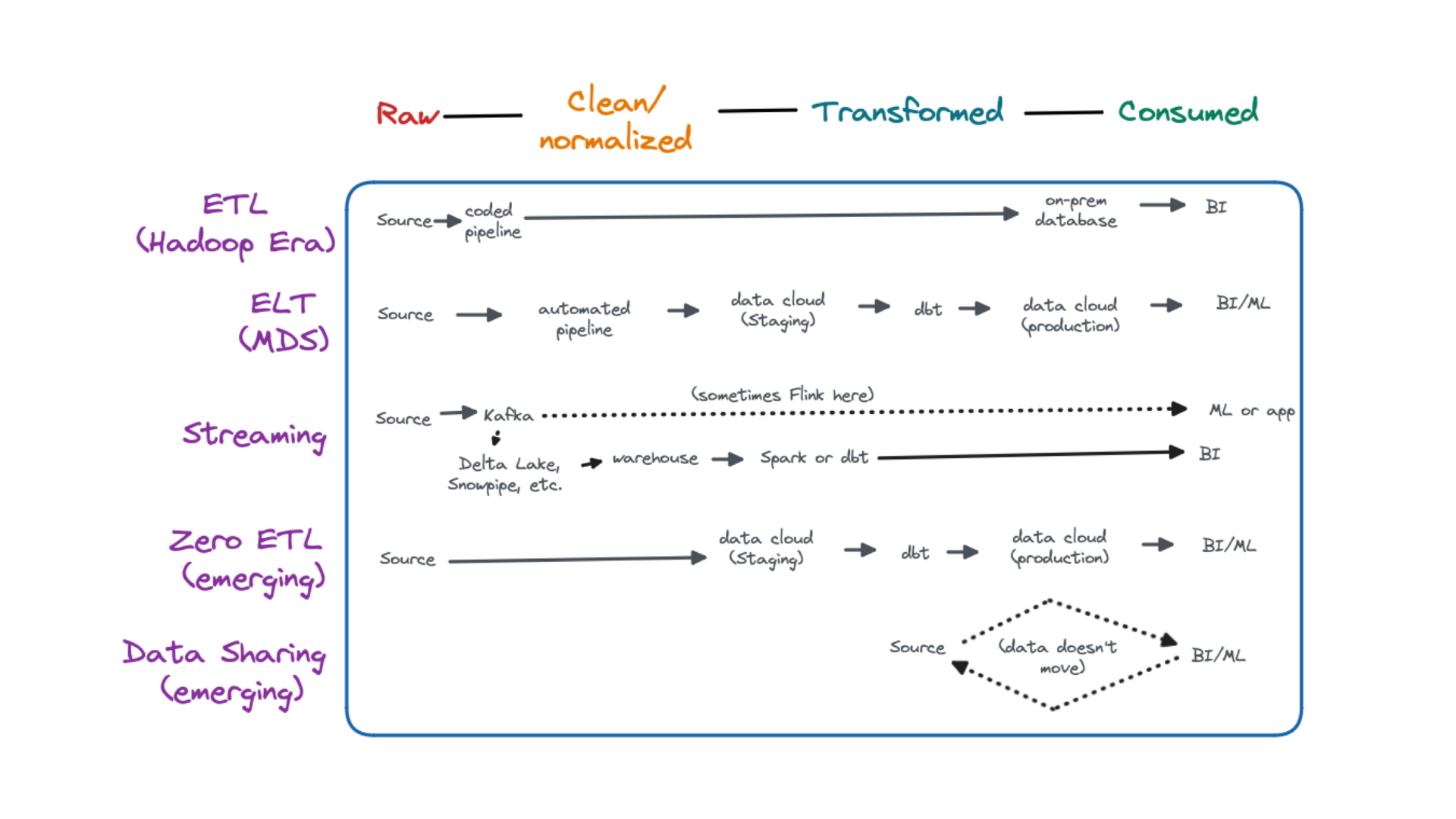

There are usually three key elements to any data pipeline: the source, the data processing steps, and the destination, or "sink." Data can be modified during the transfer process, and some pipelines may be used simply to transform data, with the source system and destination being the same. A data pipeline is a method in which raw data is ingested from various data sources, transformed, and then ported to a data store, such as a data lake or data warehouse, for analysis. Data pipelines move data from a source to a target system, often with some transformation along the way. It's important to understand that this movement is rarely linear, and is instead a series of ... This tutorial covers the basics of data pipelines and terminology for aspiring data professionals, including pipeline uses, common technology, and tips for pipeline building.
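The three elements described above (source, processing steps, sink) can be sketched in a few lines of Python. This is a minimal illustration rather than a reference design: `run_pipeline`, the two step functions, and the sample records are hypothetical names and data introduced here, and an in-memory list stands in for a real destination.

```python
from typing import Callable, Iterable, Iterator, Sequence

Record = dict
# A processing step takes a stream of records and returns a new stream.
Step = Callable[[Iterator[Record]], Iterator[Record]]


def run_pipeline(
    source: Iterable[Record],
    steps: Sequence[Step],
    sink: Callable[[Record], None],
) -> int:
    """Pull records from the source, apply each step in order, write to the sink."""
    stream: Iterator[Record] = iter(source)
    for step in steps:
        stream = step(stream)  # steps are chained lazily, nothing runs yet
    delivered = 0
    for record in stream:  # iterating here drives the whole chain
        sink(record)
        delivered += 1
    return delivered


def drop_missing_amount(records: Iterator[Record]) -> Iterator[Record]:
    # Hypothetical cleaning step: skip records that have no amount.
    return (r for r in records if r.get("amount") is not None)


def add_amount_cents(records: Iterator[Record]) -> Iterator[Record]:
    # Hypothetical enrichment step: derive an integer cents field.
    for r in records:
        yield {**r, "amount_cents": round(r["amount"] * 100)}


# Made-up sample data; a real source might be a database, API, or file.
source = [
    {"id": 1, "amount": 2.5},
    {"id": 2, "amount": None},
    {"id": 3, "amount": 4.0},
]
sink_store: list = []  # stand-in for a warehouse or data lake
delivered = run_pipeline(source, [drop_missing_amount, add_amount_cents], sink_store.append)
```

Because each step only consumes and produces an iterator, steps can be reordered or swapped without touching the source or the sink, which is one common reason to keep the three elements separate.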

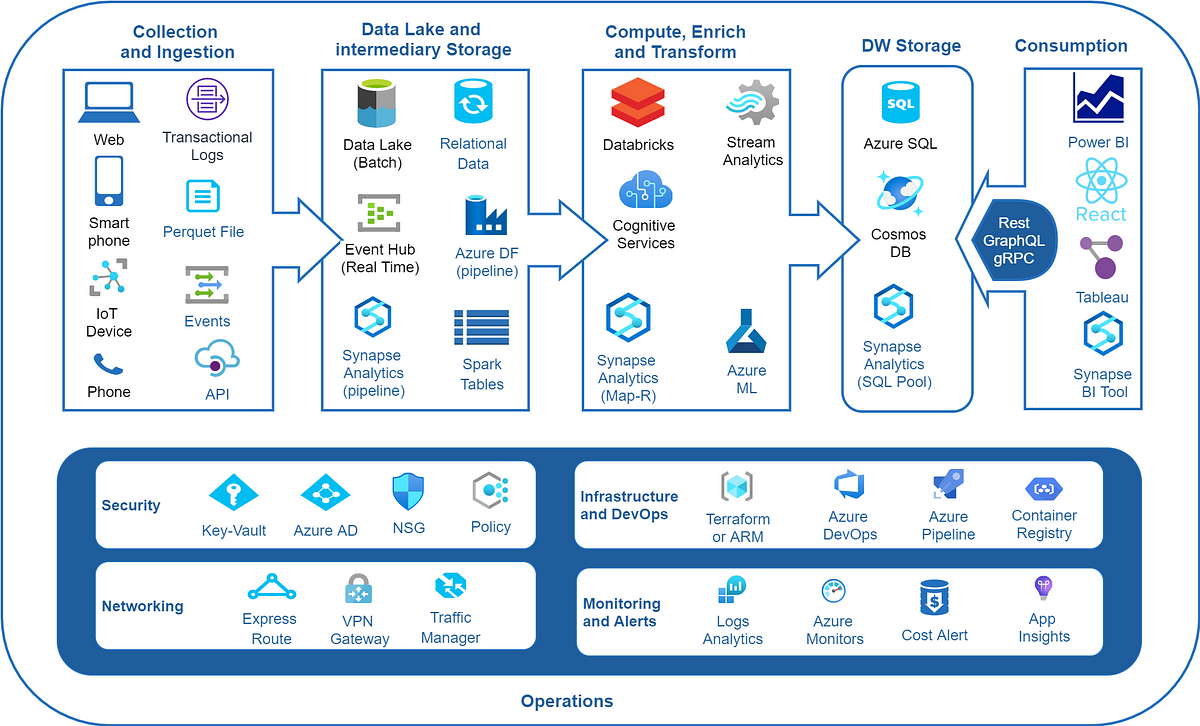

In this guide, we'll break down the key concepts behind data pipelines, explore common use cases, and share best practices for designing and managing them effectively. A data pipeline moves data from source systems to storage through three main stages: extraction, transformation, and loading. Common data sources include databases, APIs, flat files, and streaming platforms, while common destinations include data warehouses, data lakes, and cloud storage. For data engineers, good data pipeline architecture is critical to handling the five V's posed by big data: volume, velocity, veracity, variety, and value. A well-designed pipeline will meet use case requirements while being efficient from a maintenance and cost perspective.
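As a rough sketch of the extraction, transformation, and loading stages, the example below reads a small flat file and loads it into a table. Everything specific here is an assumption made for illustration: the CSV content, the `orders` table, and the function names are hypothetical, and an in-memory SQLite database stands in for a data warehouse.

```python
import csv
import io
import sqlite3

# Made-up flat-file content; a real pipeline would read from disk, an API, or a stream.
RAW_CSV = """order_id,customer,amount
1,alice,10.50
2,bob,
3,carol,7.25
"""


def extract(text: str) -> list:
    """Extraction: parse the raw CSV text into dictionaries keyed by column name."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list) -> list:
    """Transformation: drop rows without an amount and convert field types."""
    cleaned = []
    for row in rows:
        if not row["amount"]:
            continue  # skip incomplete records
        cleaned.append((int(row["order_id"]), row["customer"].title(), float(row["amount"])))
    return cleaned


def load(conn: sqlite3.Connection, rows: list) -> None:
    """Loading: write the cleaned rows into the destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    # INSERT OR REPLACE keeps the load idempotent if the same file is processed twice.
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform(extract(RAW_CSV)))
total = conn.execute("SELECT COUNT(*), SUM(amount) FROM orders").fetchone()
```

Keeping each stage as its own function makes it easier to test the stages in isolation and to replace one, for example swapping the CSV reader for an API client, without changing the others.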

