Data Architecture Semantic Scholar

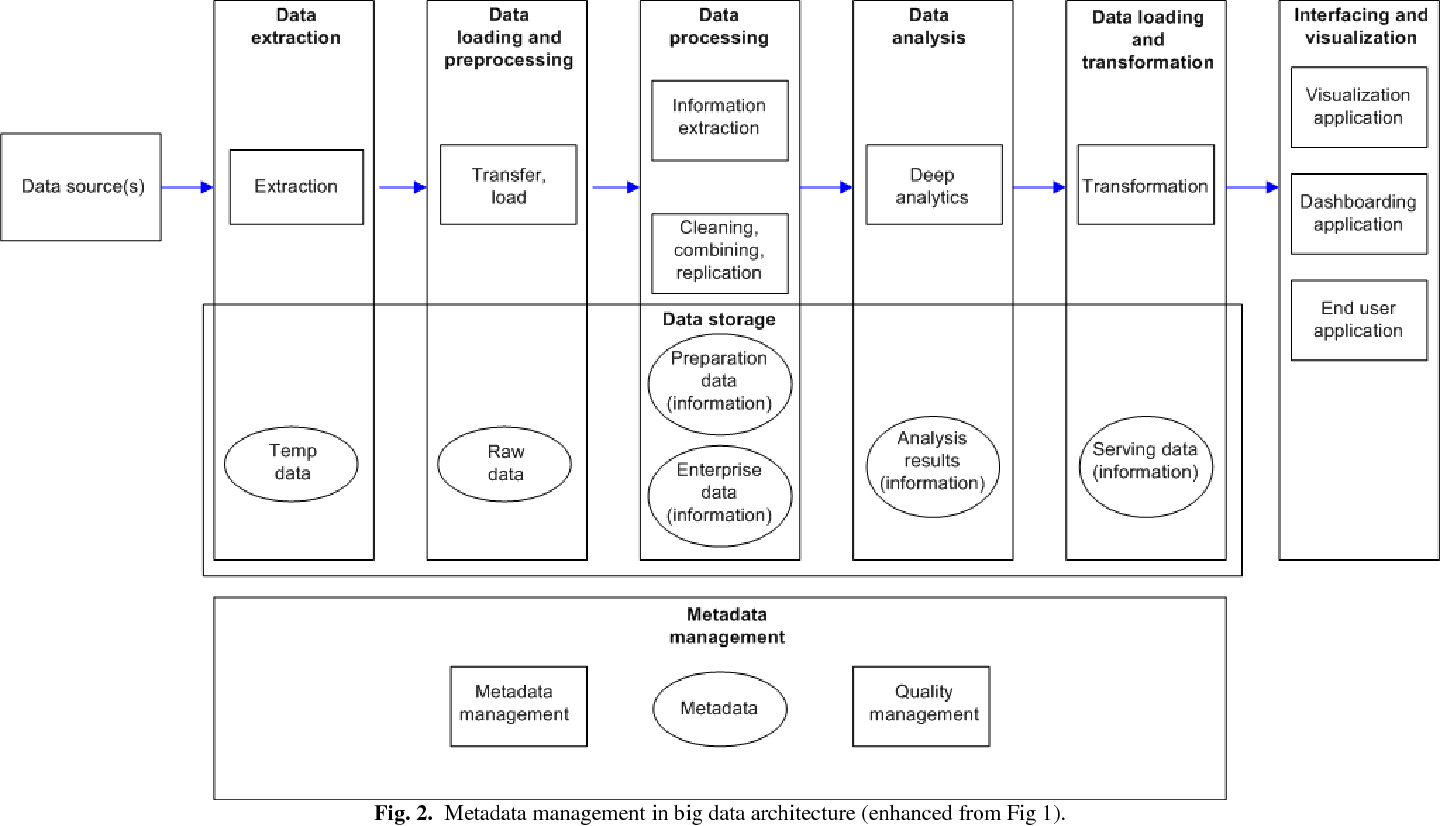

In information technology, data architecture is composed of the models, policies, rules, and standards that govern which data is collected and how it is stored, arranged, integrated, and put to use in data systems and in organizations. A data architect is a practitioner of data architecture, an information technology discipline concerned with designing, creating, deploying, and managing an organization's data architecture.

Semantic Scholar uses groundbreaking AI and engineering to understand the semantics of scientific literature and help scholars discover relevant research. Search across a wide variety of disciplines and sources: articles, theses, books, abstracts, and court opinions.

This study lays the foundations for a generic data lake architecture organized into areas, where semantics plays a central role in the data ingestion and access stages, thus reinforcing their value for the user.

In this paper, we describe the components of the S2 data processing pipeline and the associated APIs offered by the platform. We will periodically update this document to reflect improvements and new data offerings.
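As a rough illustration of what calling one of the platform's APIs might look like, the sketch below only builds a paper-search request URL and does not send it. The endpoint path and the `query`, `fields`, and `limit` parameters follow the public Academic Graph API as commonly documented, but they are not stated in the text above and should be checked against the current API reference. The helper name `build_search_url` and its defaults are hypothetical.

```python
from urllib.parse import urlencode

# Assumed base URL of the Academic Graph API paper-search endpoint;
# verify against the current API documentation before relying on it.
S2_SEARCH_URL = "https://api.semanticscholar.org/graph/v1/paper/search"


def build_search_url(query, fields=("title", "year"), limit=5):
    """Build a paper-search URL (hypothetical helper, illustrative defaults)."""
    params = {
        "query": query,              # free-text search terms
        "fields": ",".join(fields),  # comma-separated list of fields to return
        "limit": limit,              # maximum number of results requested
    }
    return f"{S2_SEARCH_URL}?{urlencode(params)}"


url = build_search_url("data lake architecture")
print(url)
```

The resulting URL can be passed to any HTTP client. Keeping URL construction separate from the network call makes the request easy to inspect and test.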

This guide covers what a semantic layer is, how its core components and design patterns work, how modern data architecture differs from traditional approaches, and, critically, how semantic layers now serve as the foundational infrastructure for large language models and AI-powered analytics.

CData Virtuality allows data engineers and architects to unify, integrate, and model both virtual and historical data directly within the semantic layer, reducing preparation time and enabling faster adaptation to new use cases.

This paper analyzes the adoption, usage, and impact of big data analytics on the business value of an enterprise, with the aim of improving its competitive advantage through technologies for large data sets such as Hadoop and MapReduce.

Contractual agreements with LLM providers help, but they cannot fully address risks such as prompt injection, unintended data exposure, or regulatory noncompliance. This paper presents a practical reference architecture for securing enterprise data at inference time, organized around three main layers.
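To make the semantic layer idea mentioned above more concrete, here is a minimal sketch in which business metrics are defined once and then compiled into SQL on request. It does not represent any particular product. The `Metric` class, the `METRICS` registry, the `compile_query` function, and the `orders` table with its `amount` and `region` columns are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A business metric defined once and reused by every consumer."""
    name: str        # business-facing metric name
    expression: str  # SQL aggregate that computes the metric
    table: str       # physical table the metric reads from


# Hypothetical metric registry: the "semantic" definitions live here,
# not in individual dashboards or prompts.
METRICS = {
    "total_revenue": Metric("total_revenue", "SUM(amount)", "orders"),
    "order_count": Metric("order_count", "COUNT(*)", "orders"),
}


def compile_query(metric_name, group_by=None):
    """Translate a metric name (and optional dimension) into a SQL string."""
    metric = METRICS[metric_name]
    select = f"{metric.expression} AS {metric.name}"
    if group_by:
        return (
            f"SELECT {group_by}, {select} "
            f"FROM {metric.table} GROUP BY {group_by}"
        )
    return f"SELECT {select} FROM {metric.table}"


sql = compile_query("total_revenue", group_by="region")
print(sql)
```

Because consumers ask for `total_revenue` by name rather than writing the aggregate themselves, a change to the metric's definition is made in one place. This is the property that makes such a layer useful as a shared foundation for analytics tools and language models.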

