Cypress Programming OpenMP HPC

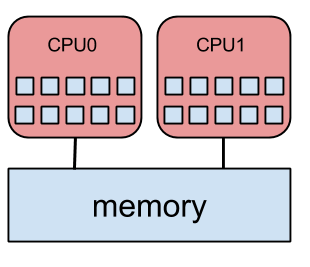

OpenMP is built around a shared memory space and spawns concurrent threads; this is how it achieves parallelism. CPUs that share memory are called "symmetric multiprocessors", or SMP for short. An application built with the hybrid model of parallel programming can run on a computer cluster using both OpenMP and the Message Passing Interface (MPI): OpenMP provides parallelism within a (multi-core) node, while MPI provides parallelism between nodes.
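
As a minimal sketch of the shared-memory idea, the C function below opens a parallel region in which every thread increments one counter that all threads can see. The function name `count_team_threads` is made up for this illustration and does not come from any tutorial material.

```c
/* Returns how many threads executed the parallel region.
   `counter` is declared outside the region, so it lives in
   memory shared by every thread in the team. */
int count_team_threads(void)
{
    int counter = 0;

    #pragma omp parallel
    {
        /* Every thread adds 1 to the same variable; `atomic`
           prevents two threads from losing each other's update. */
        #pragma omp atomic
        counter += 1;
    }
    /* Implicit barrier at the end of the region: all threads
       have finished before the value is read here. */
    return counter;
}
```

Built with OpenMP enabled (for example `gcc -fopenmp`), the return value is the size of the thread team. Built without it, the pragmas are ignored and the function returns 1.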

The tutorial explains how to use OpenMP tasking, how to synchronize tasks, how to apply cut-off strategies, and how an OpenMP runtime environment manages tasks in queues. OpenMP (Open Multi-Processing) is a shared-memory parallelization extension to C, C++, and Fortran that is built into supporting compilers. In contrast to MPI, OpenMP parallelizes across threads within a single process rather than across processes. Our "Advanced OpenMP Programming" tutorial explores the implications of possible OpenMP parallelization strategies, in terms of both correctness and performance.
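
To make tasking, synchronization, and cut-off strategies more concrete, here is a small sketch using the classic recursive Fibonacci computation. It is not taken from the tutorial itself: the function names and the cut-off value `FIB_CUTOFF` are assumptions chosen for illustration, and a sensible cut-off would have to be measured on the target machine.

```c
/* Hypothetical cut-off: below this size the work is assumed to be
   too small to justify the overhead of creating a task. */
#define FIB_CUTOFF 15

/* Plain serial recursion, used below the cut-off. */
static long fib_serial(int n)
{
    return n < 2 ? n : fib_serial(n - 1) + fib_serial(n - 2);
}

/* Task-parallel recursion: each call spawns two child tasks. */
static long fib_task(int n)
{
    long a, b;

    if (n < FIB_CUTOFF)
        return fib_serial(n);   /* cut-off: stop creating tasks */

    /* a and b must be shared so the child tasks write into the
       parent's variables; n is firstprivate by default. */
    #pragma omp task shared(a)
    a = fib_task(n - 1);
    #pragma omp task shared(b)
    b = fib_task(n - 2);

    /* Synchronize: wait until both child tasks have completed. */
    #pragma omp taskwait
    return a + b;
}

/* Entry point: one thread creates the root task, and the whole
   team picks up tasks from the runtime's queues. */
long fib_parallel(int n)
{
    long result = 0;

    #pragma omp parallel
    {
        #pragma omp single
        result = fib_task(n);
    }
    return result;
}
```

Calling `fib_parallel(25)` returns 75025 whether or not OpenMP is enabled. Without OpenMP the pragmas are ignored and the code runs as ordinary serial recursion.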

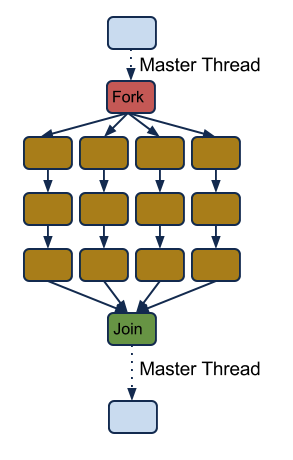

OpenMP is an abbreviation for Open Multi-Processing. It is an independent standard supported by several compiler vendors. Parallelization is expressed via so-called compiler pragmas, which compilers without OpenMP support can simply ignore. A small runtime library provides additional functionality.

In OpenMP, the scope of a variable refers to the set of threads that can access the variable in a parallel block. A variable that can be accessed by all the threads in the team has shared scope, while a variable that can only be accessed by a single thread has private scope.

OpenMP uses the fork-join model of parallel execution: a program begins as a single process, the master thread, which executes sequentially until the first parallel region construct is encountered.

OpenMP is a standardised API for programming shared-memory computers (and, more recently, GPUs) using threading as the programming paradigm. It supports both data-parallel shared-memory programming (typically for parallelising loops) and task parallelism.
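
The points above (pragmas, the runtime library, variable scope, fork-join, and loop parallelism) can be sketched together in a few lines of C. The helper names `max_threads` and `dot` are hypothetical. The `_OPENMP` macro, `omp.h`, and `omp_get_max_threads()` are part of the OpenMP standard.

```c
#ifdef _OPENMP
#include <omp.h>   /* runtime library, only available with OpenMP */
#endif

/* Upper bound on the team size for the next parallel region.
   Falls back to 1 when the compiler has no OpenMP support. */
int max_threads(void)
{
#ifdef _OPENMP
    return omp_get_max_threads();
#else
    return 1;
#endif
}

/* Data-parallel dot product of two vectors of length n. */
double dot(const double *x, const double *y, int n)
{
    double sum = 0.0;
    int i;

    /* Fork: the master thread creates a team at this construct.
       x, y, n : shared scope, visible to all threads (read only).
       i       : private scope, one copy per thread.
       sum     : reduction, a private partial sum per thread that
                 is combined with + when the loop ends.
       Join: the team synchronizes at the end of the loop and the
       master thread continues alone. */
    #pragma omp parallel for default(none) shared(x, y, n) private(i) reduction(+:sum)
    for (i = 0; i < n; i++)
        sum += x[i] * y[i];

    return sum;
}
```

The `default(none)` clause is a design choice rather than a requirement: it forces the scope of every variable to be stated explicitly, which makes accidental sharing easier to spot.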
