CVPR Poster: ViVid-1-to-3: Novel View Synthesis with Video Diffusion Models

This CVPR paper is the Open Access version, provided by the Computer Vision Foundation. Except for this watermark, it is identical to the accepted version; the final published version of the proceedings is available on IEEE Xplore. This repository is a reference implementation for ViVid-1-to-3. It combines video diffusion with novel view synthesis diffusion models for increased pose and appearance consistency.
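As a rough illustration of what combining the two models could look like at a single denoising step, here is a minimal sketch. It assumes the noise predictions of the two models are blended with a scalar weight; the function name `fuse_noise_predictions`, the weight `w_video`, and the array shapes are hypothetical and are not taken from the repository.

```python
import numpy as np


def fuse_noise_predictions(eps_view, eps_video, w_video=0.5):
    """Blend two noise estimates of identical shape with a convex weight.

    eps_view:  noise predicted by a view-conditioned model (assumed input)
    eps_video: noise predicted by a video model (assumed input)
    w_video:   hypothetical weight in [0, 1] given to the video model
    """
    eps_view = np.asarray(eps_view, dtype=float)
    eps_video = np.asarray(eps_video, dtype=float)
    if eps_view.shape != eps_video.shape:
        raise ValueError("noise predictions must have the same shape")
    if not 0.0 <= w_video <= 1.0:
        raise ValueError("w_video must lie in [0, 1]")
    # Convex combination: w_video = 0 keeps only the view-conditioned
    # estimate, w_video = 1 keeps only the video estimate.
    return (1.0 - w_video) * eps_view + w_video * eps_video


if __name__ == "__main__":
    # Toy stand-ins for model outputs: 4 frames of 2x2 single-channel latents.
    rng = np.random.default_rng(0)
    eps_a = rng.standard_normal((4, 1, 2, 2))
    eps_b = rng.standard_normal((4, 1, 2, 2))
    fused = fuse_noise_predictions(eps_a, eps_b, w_video=0.3)
    print(fused.shape)
```

In a real sampler the fused estimate would be passed to the scheduler update for the current timestep; how the weight is chosen or scheduled is left open here.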

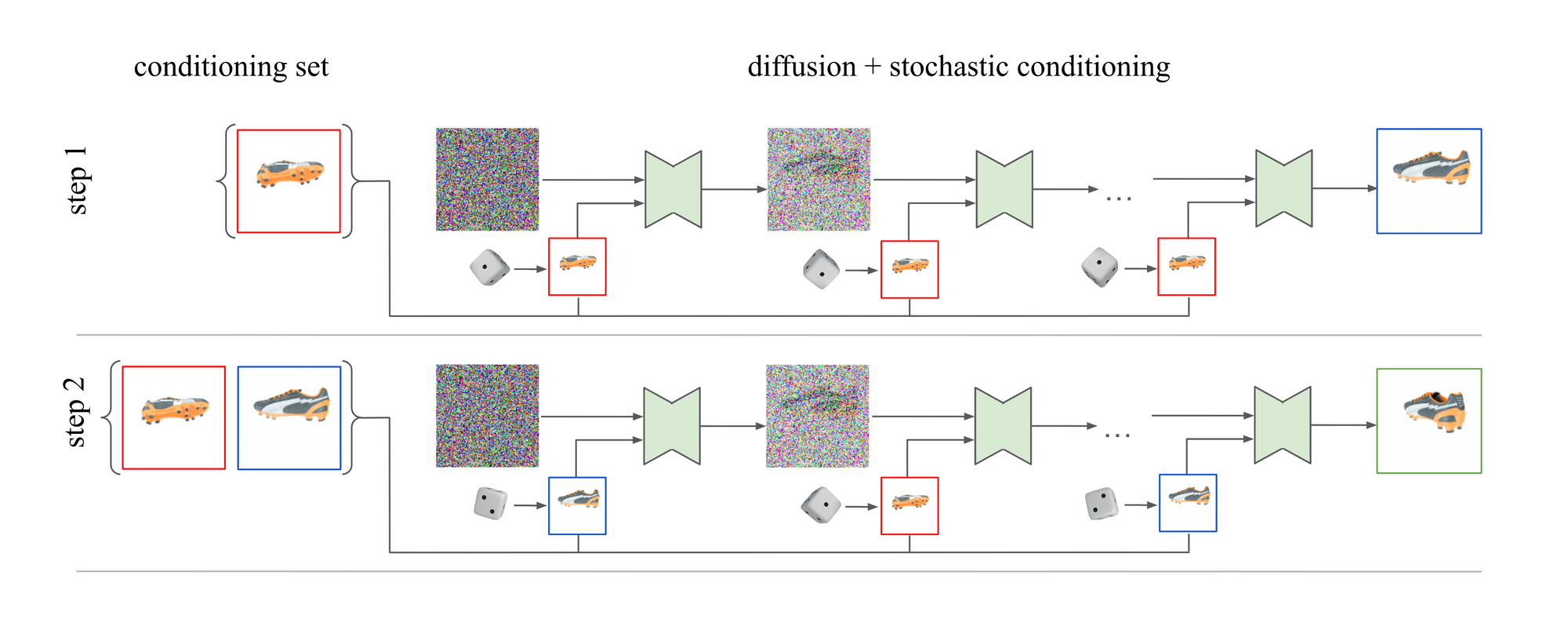

Generating novel views of an object from a single image is a challenging task. It requires an understanding of the underlying 3D structure of the object from an… In this work, we demonstrate a strikingly simple method, where we utilize a pre-trained video diffusion model to solve this problem. Thus, to perform novel view synthesis, we create a smooth camera trajectory to the target view that we wish to render, and denoise using both a view-conditioned diffusion model and a video diffusion model.
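The idea of a smooth camera trajectory toward the target view can be sketched as follows. This is only an illustration under stated assumptions: camera poses are taken to be spherical coordinates (azimuth in degrees, elevation in degrees, radius), and the path is a plain linear interpolation along the shortest azimuth direction. The function name `smooth_camera_trajectory` and the pose parameterization are hypothetical, not the paper's or the repository's definition.

```python
import numpy as np


def smooth_camera_trajectory(src, tgt, num_frames=8):
    """Interpolate camera poses from src to tgt.

    src, tgt: (azimuth_deg, elevation_deg, radius) tuples (assumed format)
    Returns an array of shape (num_frames, 3); the first row is src and
    the last row is tgt (azimuth wrapped into [0, 360)).
    """
    if num_frames < 2:
        raise ValueError("num_frames must be at least 2")
    src = np.asarray(src, dtype=float)
    tgt = np.asarray(tgt, dtype=float)
    # Signed azimuth difference in [-180, 180), so the camera takes the
    # shorter way around the object.
    d_az = (tgt[0] - src[0] + 180.0) % 360.0 - 180.0
    s = np.linspace(0.0, 1.0, num_frames)  # interpolation parameter per frame
    azimuth = (src[0] + s * d_az) % 360.0
    elevation = src[1] + s * (tgt[1] - src[1])
    radius = src[2] + s * (tgt[2] - src[2])
    return np.stack([azimuth, elevation, radius], axis=1)


if __name__ == "__main__":
    # Move from azimuth 350 to azimuth 10 (crossing 0) while raising the camera.
    for pose in smooth_camera_trajectory((350.0, 0.0, 1.5), (10.0, 30.0, 1.5), 5):
        print(pose)
```

Each row could then serve as the per-frame pose condition: the last frame corresponds to the requested target view, and the intermediate frames give a video model a coherent motion to denoise.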

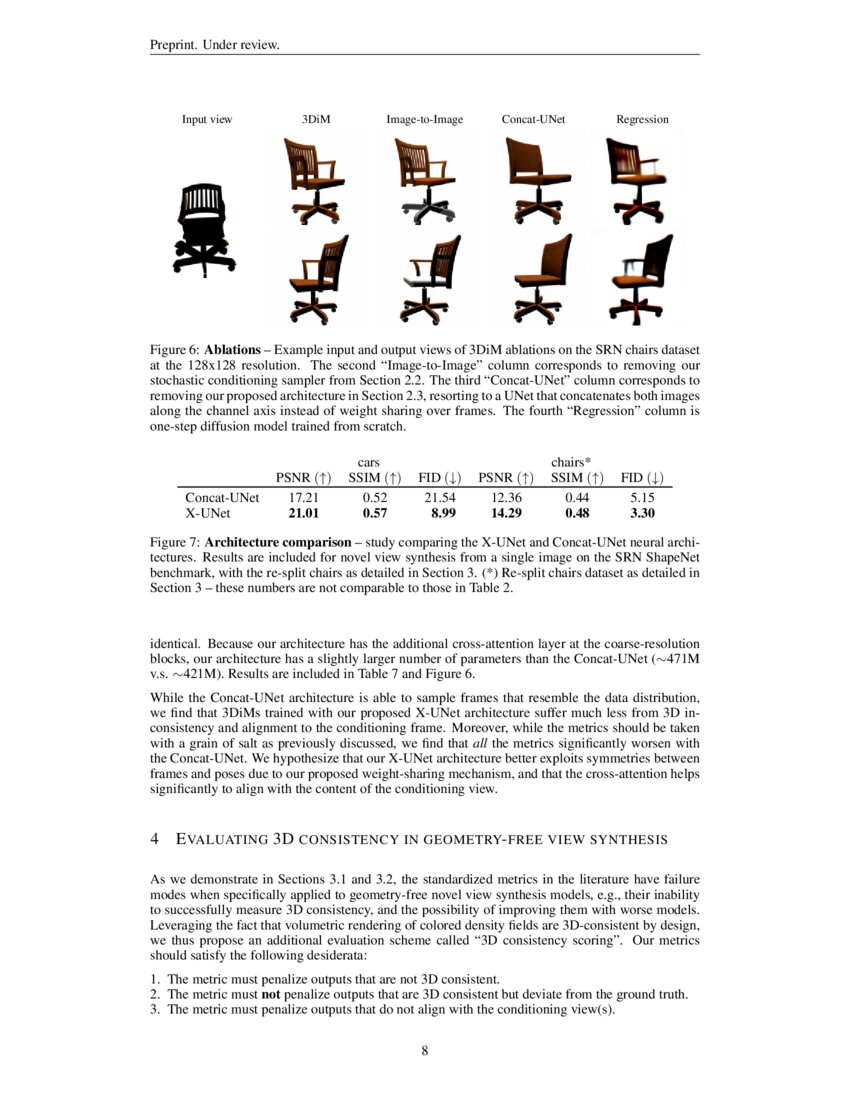

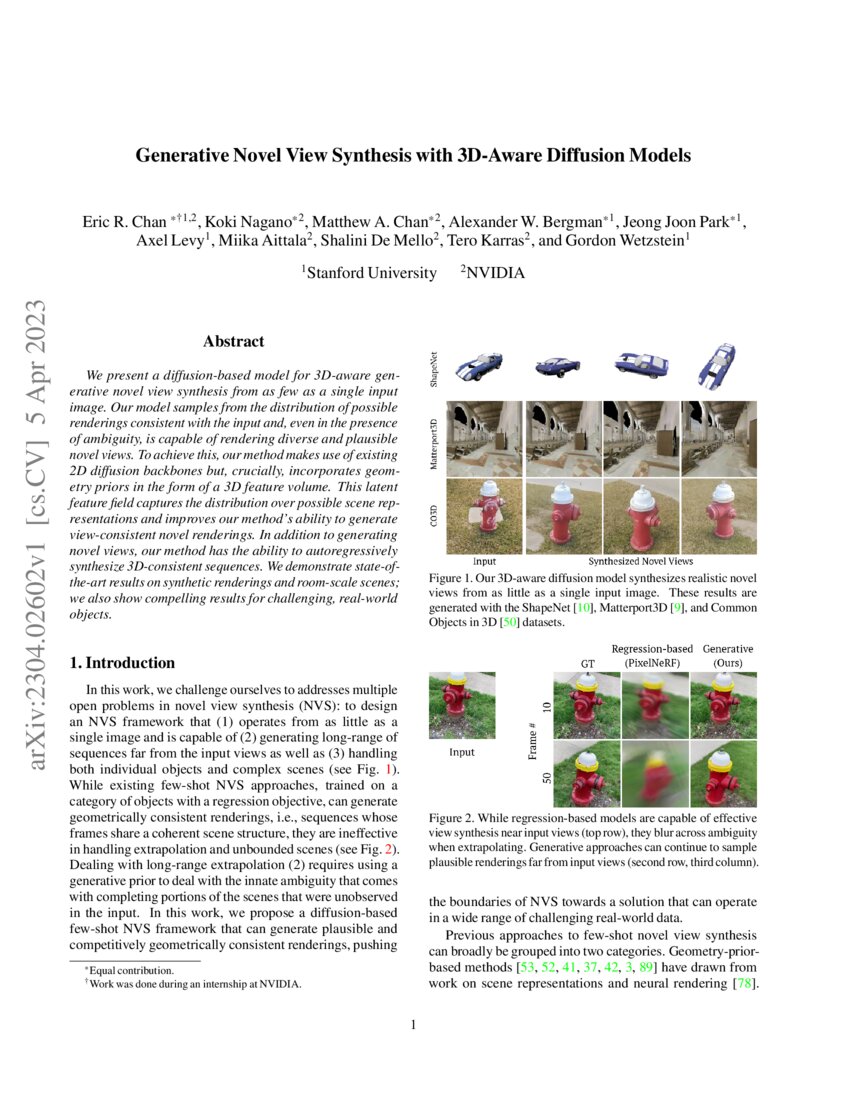

A related approach, SV3D, adapts an image-to-video diffusion model for novel multi-view synthesis and 3D generation, thereby leveraging the generalization and multi-view consistency of video models, while further adding explicit camera control for NVS.

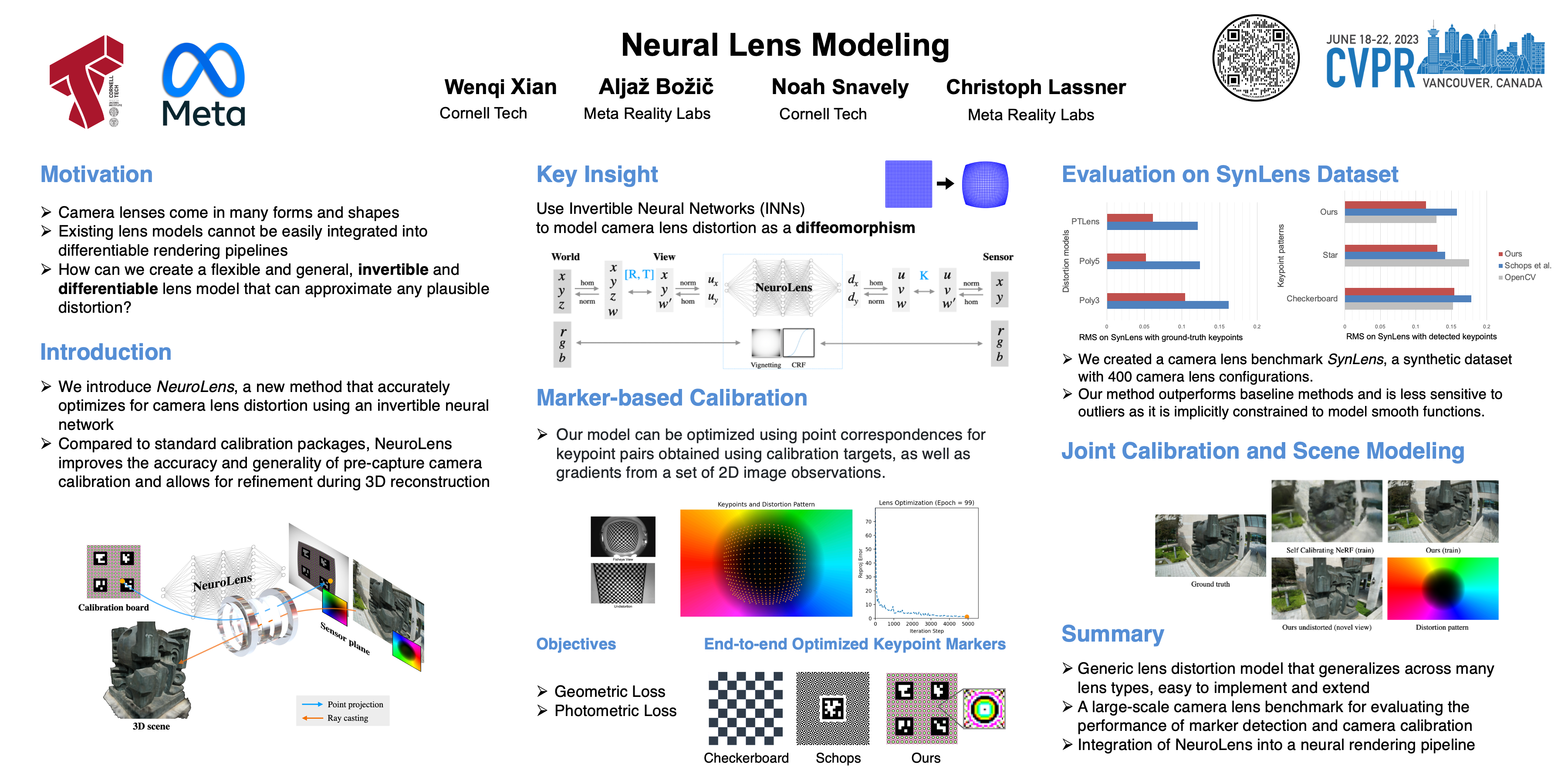

CVPR Poster: Neural Lens Modeling

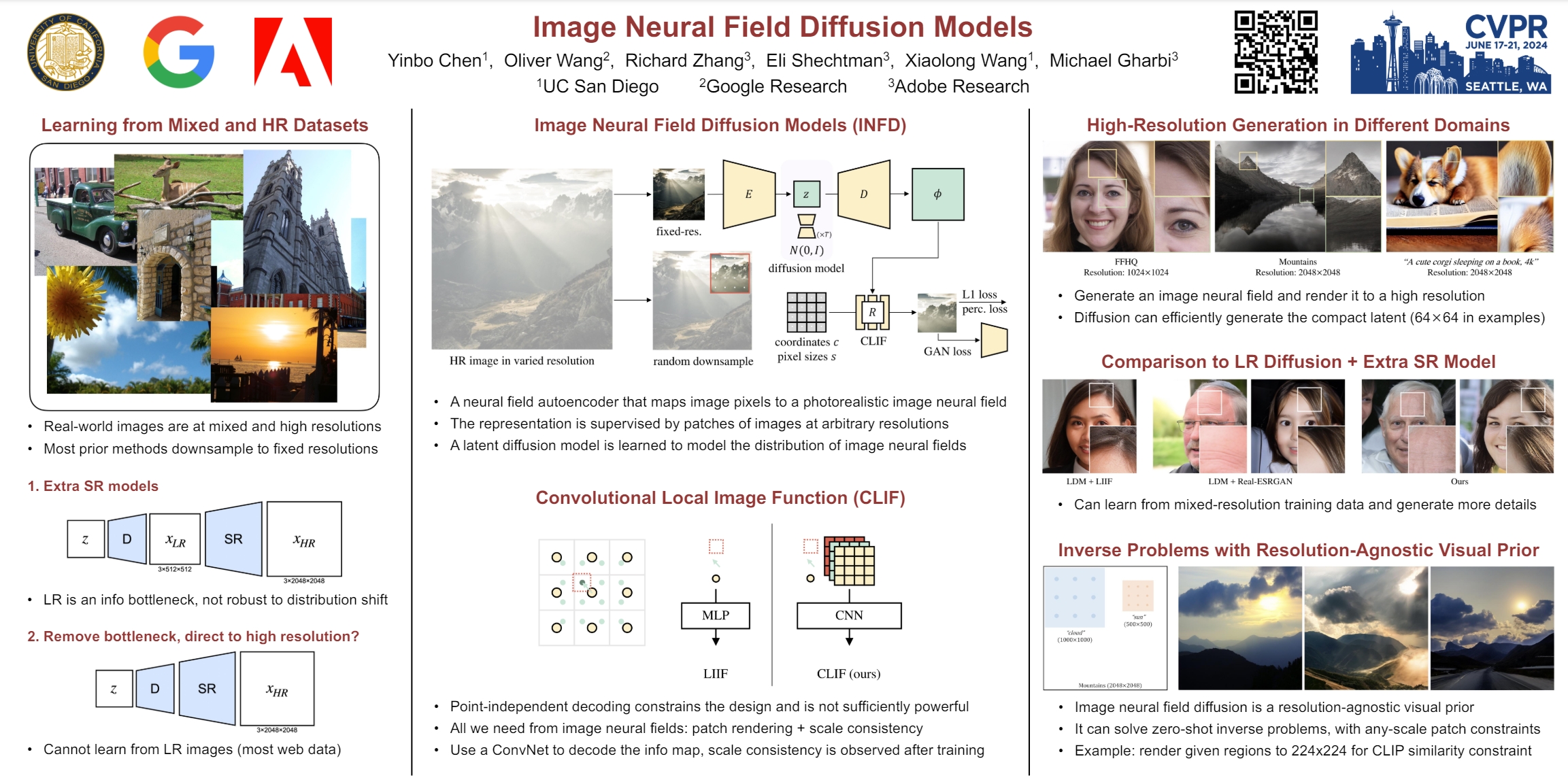

CVPR Poster: Image Neural Field Diffusion Models

CVPR Poster: One-Shot Structure-Aware Stylized Image Synthesis
