CVPR Poster: Rethinking Gradient Projection Continual Learning

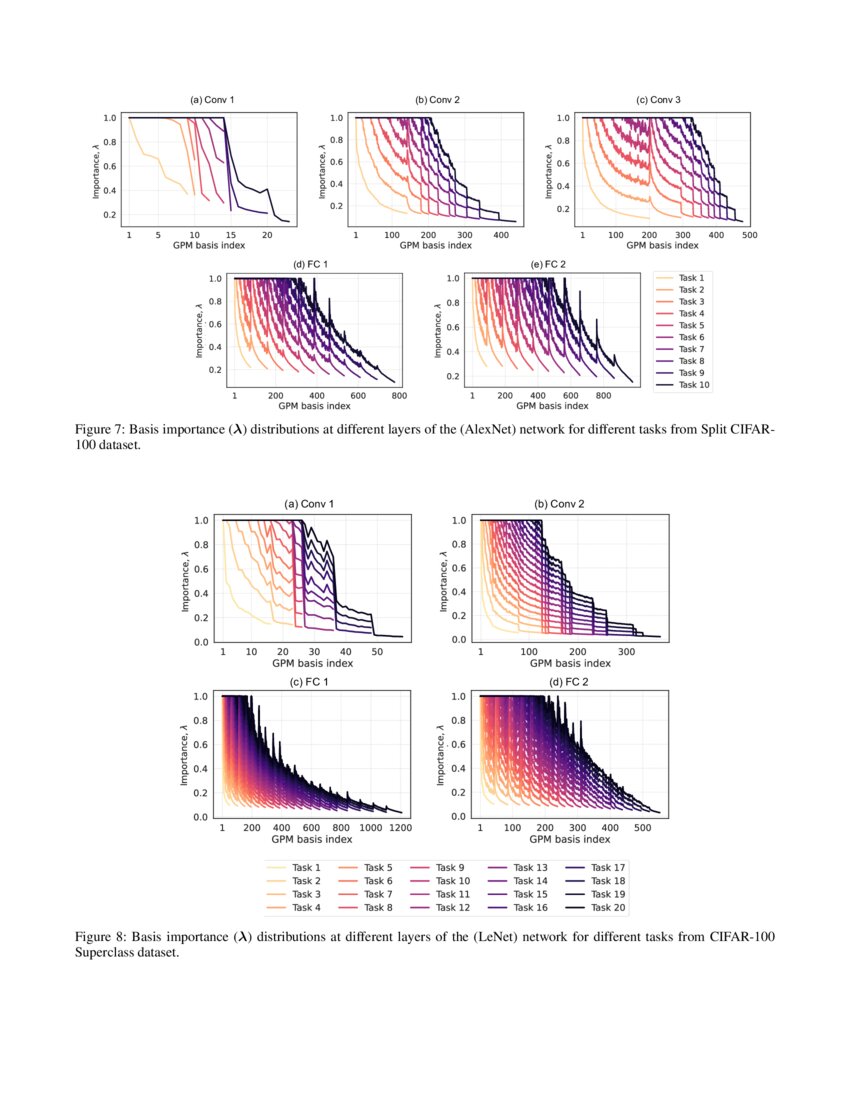

Continual learning aims to incrementally learn novel classes over time without forgetting previously learned knowledge. Recent studies have found that learning does not cause forgetting if the gradient update is orthogonal to the feature space.
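The orthogonality claim can be checked numerically for a single linear layer. The sketch below is an illustration under simplifying assumptions, not the method of any particular paper: it assumes the feature space is the span of the old-task inputs to the layer, and the names `old_inputs`, `basis`, and `project_orthogonal` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out, k = 8, 3, 4

# Assumption: old-task inputs lie in a k-dimensional subspace of R^{d_in}.
old_inputs = rng.normal(size=(20, k)) @ rng.normal(size=(k, d_in))

# Orthonormal basis of that subspace: right singular vectors whose
# singular values are not numerically zero.
_, s, vt = np.linalg.svd(old_inputs, full_matrices=False)
basis = vt[s > 1e-10 * s[0]].T  # shape (d_in, k)


def project_orthogonal(grad, basis):
    """Remove the component of each gradient row that lies in span(basis)."""
    return grad - grad @ basis @ basis.T


W = rng.normal(size=(d_out, d_in))     # layer weights after the old task
grad = rng.normal(size=(d_out, d_in))  # stand-in for a new-task gradient
lr = 0.1

old_out_before = old_inputs @ W.T

# A plain gradient step changes the outputs on old inputs.
drift_plain = np.abs(old_inputs @ (W - lr * grad).T - old_out_before).max()

# A projected step leaves them unchanged up to floating-point error.
W_new = W - lr * project_orthogonal(grad, basis)
drift_projected = np.abs(old_inputs @ W_new.T - old_out_before).max()

print(f"drift with plain step:     {drift_plain:.3e}")
print(f"drift with projected step: {drift_projected:.3e}")
```

Because every old input lies in the span of `basis`, the projected update maps each of them to zero, so the layer's responses to old data are preserved in this linear setting.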

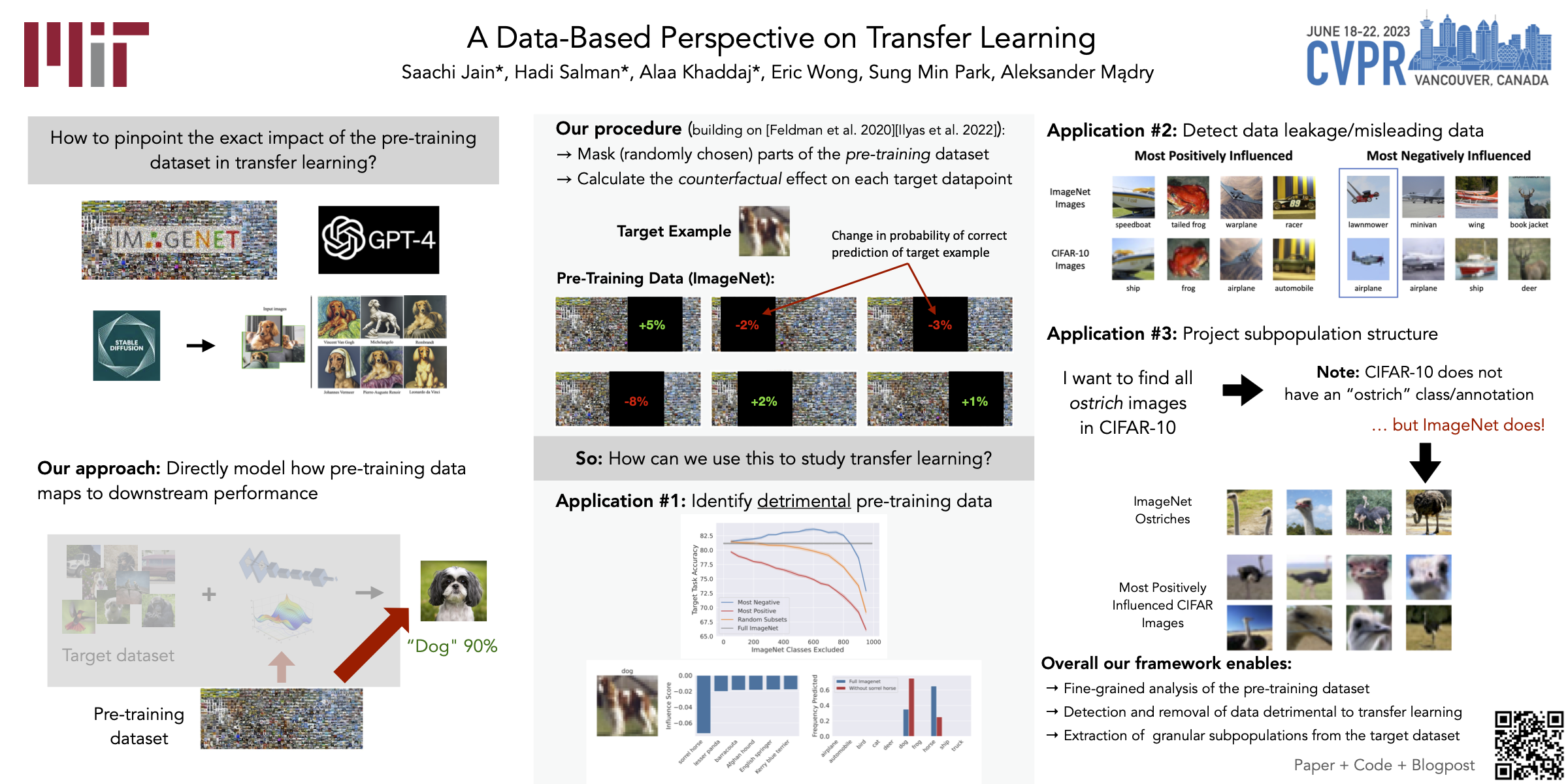

In this paper, we demonstrate the poor plasticity of recent gradient projection methods, which is caused by constraining the gradient to be fully orthogonal to the whole feature space.

Wang S, Li X, Sun J, et al. Training networks in null space of feature covariance for continual learning. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021: 184-193.
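The plasticity cost of full orthogonality can be illustrated with a small numerical experiment. This is a hedged sketch, not the analysis from the paper: it assumes a random stand-in gradient and nested random subspaces playing the role of a feature space that grows as tasks accumulate, and `retained_fraction` is a hypothetical helper.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out = 16, 4

grad = rng.normal(size=(d_out, d_in))  # stand-in for a new-task gradient

# Random orthonormal directions; the first k columns play the role of a
# k-dimensional feature space (an assumption made for illustration).
q, _ = np.linalg.qr(rng.normal(size=(d_in, d_in)))


def retained_fraction(grad, basis):
    """Fraction of squared gradient norm left after removing span(basis)."""
    projected = grad - grad @ basis @ basis.T
    return np.linalg.norm(projected) ** 2 / np.linalg.norm(grad) ** 2


dims = (0, 4, 8, 12, 16)
fractions = {k: retained_fraction(grad, q[:, :k]) for k in dims}

for k, frac in fractions.items():
    print(f"feature-space dimension {k:2d}: retained gradient energy {frac:.3f}")
```

As the constrained subspace grows, less of the gradient survives the projection, and once the subspace covers the whole input space no update is possible at all. That is the loss of plasticity the text describes.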

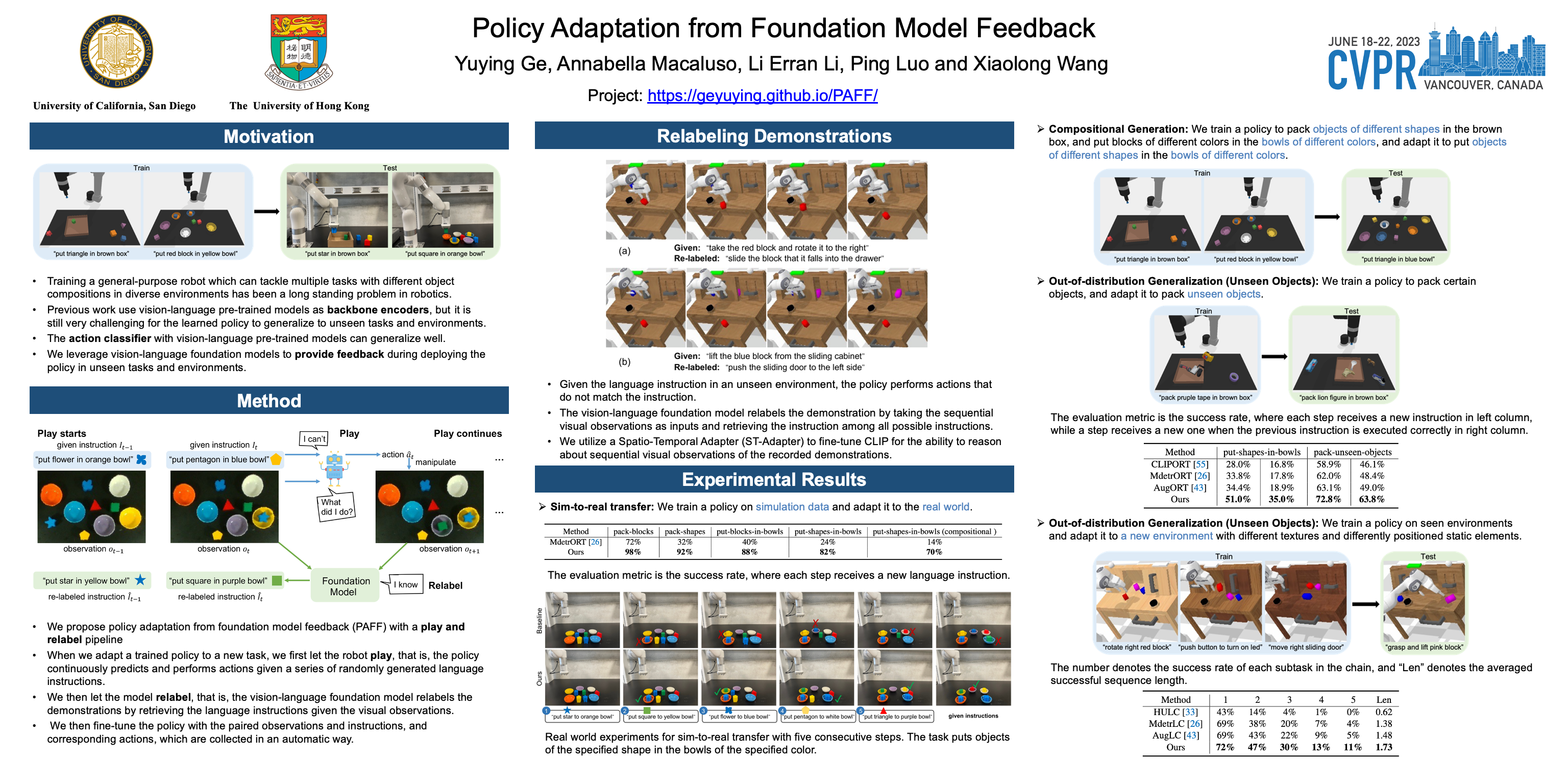

To solve this issue, we propose a novel approach in which a neural network learns new tasks by taking gradient steps in the direction orthogonal to the gradient subspaces deemed important for past tasks. We then give a proof of the applicability of the proposed feature space paradigm to gradient projection methods [1–4], present the implementation details of TRGP-SD and Adam-NSCL-SD, and finally provide additional data for our space decoupling (SD) algorithm.
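The text does not say how a subspace is "deemed important". One common choice, assumed here purely for illustration, is to keep the leading singular directions of a matrix of past-task representations until a chosen share of its energy is covered. The function `important_basis` and the parameter `energy_threshold` are hypothetical names, and the data is synthetic.

```python
import numpy as np


def important_basis(representations, energy_threshold=0.95):
    """Return an orthonormal basis (d, k) for the smallest leading singular
    subspace whose cumulative squared singular values reach the threshold."""
    _, s, vt = np.linalg.svd(representations, full_matrices=False)
    energy = np.cumsum(s ** 2) / np.sum(s ** 2)
    k = min(int(np.searchsorted(energy, energy_threshold)) + 1, len(s))
    return vt[:k].T


rng = np.random.default_rng(0)

# Synthetic past-task representations with two dominant directions
# and four weak ones (singular values chosen by hand).
left, _ = np.linalg.qr(rng.normal(size=(50, 6)))
right, _ = np.linalg.qr(rng.normal(size=(6, 6)))
singular_values = np.array([10.0, 10.0, 0.1, 0.1, 0.1, 0.1])
representations = (left * singular_values) @ right.T

basis = important_basis(representations, energy_threshold=0.95)

# Take a new-task gradient step only in directions orthogonal to the basis.
grad = rng.normal(size=(3, 6))
projected = grad - grad @ basis @ basis.T

print("kept directions:", basis.shape[1])
print("max overlap of projected gradient with basis:",
      np.abs(projected @ basis).max())
```

With these synthetic singular values, only the two dominant directions are protected, so the gradient stays free to move in the remaining four. Raising `energy_threshold` protects more directions at the cost of less room for new tasks.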

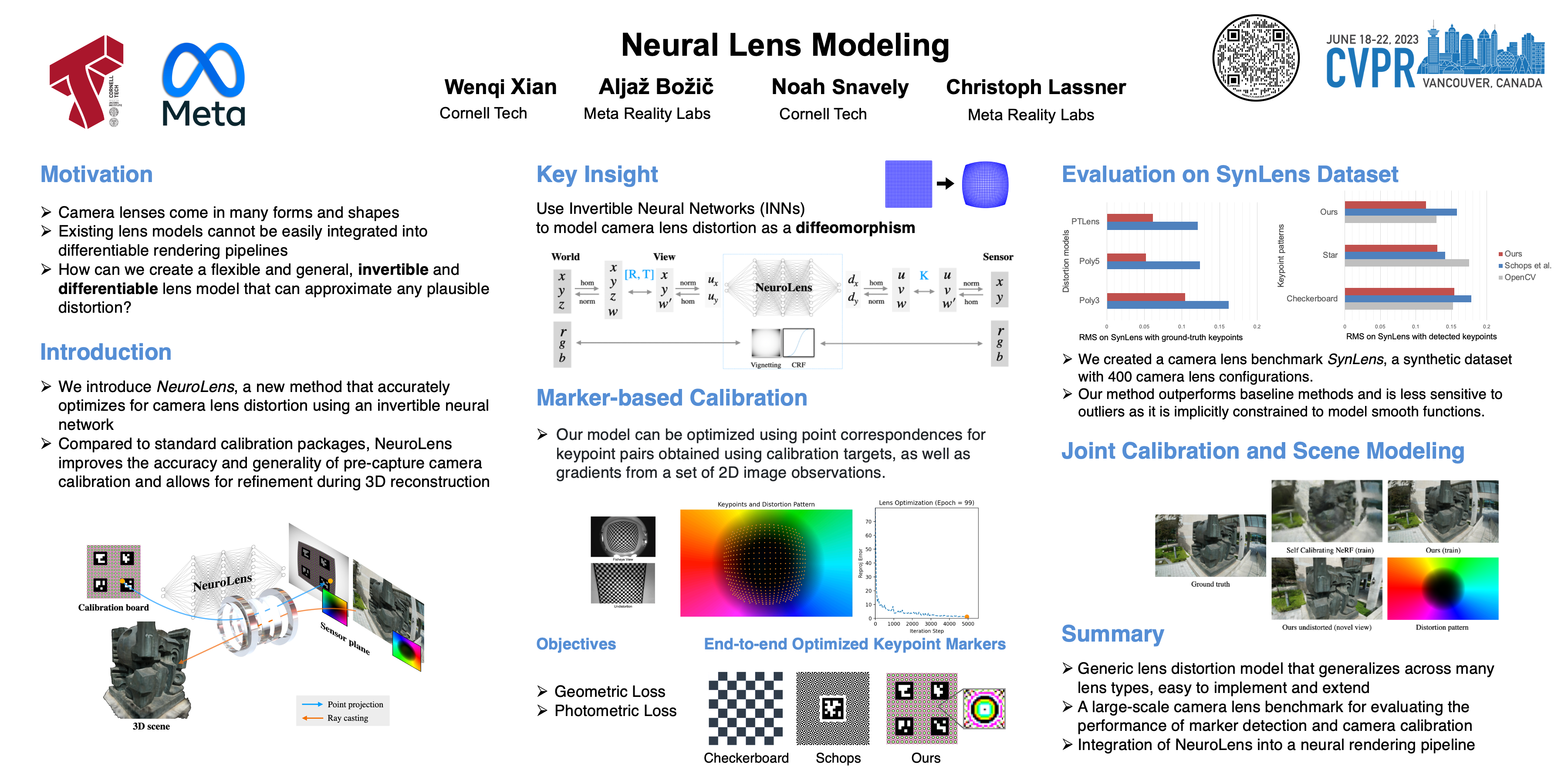

Cvpr Poster Neural Lens Modeling After that, we give a proof of the applicability of the proposed feature space paradigm to gradient projection methods [1–4]. after that, we present the implementation details of trgp sd and adam nscl sd. finally, we provide additional data of our space decoupling (sd) algorithm. To solve this issue, we propose a novel approach where a neural network learns new tasks by taking gradient steps in the orthogonal direction to the gradient subspaces deemed important for past tasks. In this paper, we demonstrate the poor plasticity of recent gradient projection methods which is caused by constraining the gradient to be fully orthogonal to the whole feature space. Continual learning aims to incrementally learn novel classes over time, while not forgetting the learned knowledge. recent studies have found that learning would not forget if the updated gradient is orthogonal to the feature space.

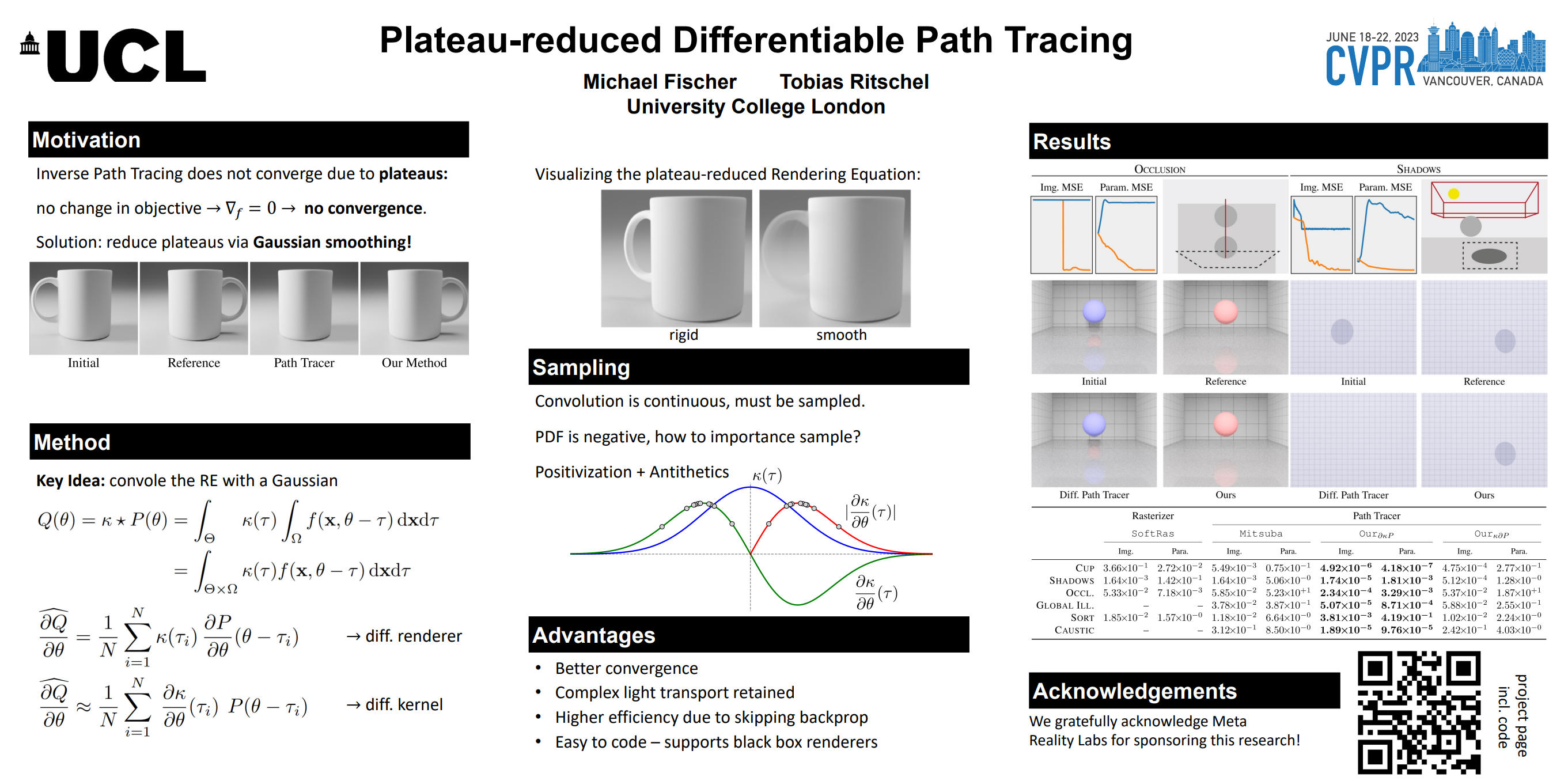

Cvpr Poster Plateau Reduced Differentiable Path Tracing In this paper, we demonstrate the poor plasticity of recent gradient projection methods which is caused by constraining the gradient to be fully orthogonal to the whole feature space. Continual learning aims to incrementally learn novel classes over time, while not forgetting the learned knowledge. recent studies have found that learning would not forget if the updated gradient is orthogonal to the feature space.