CVPR Poster: On the Scalability of Diffusion-Based Text-to-Image Generation

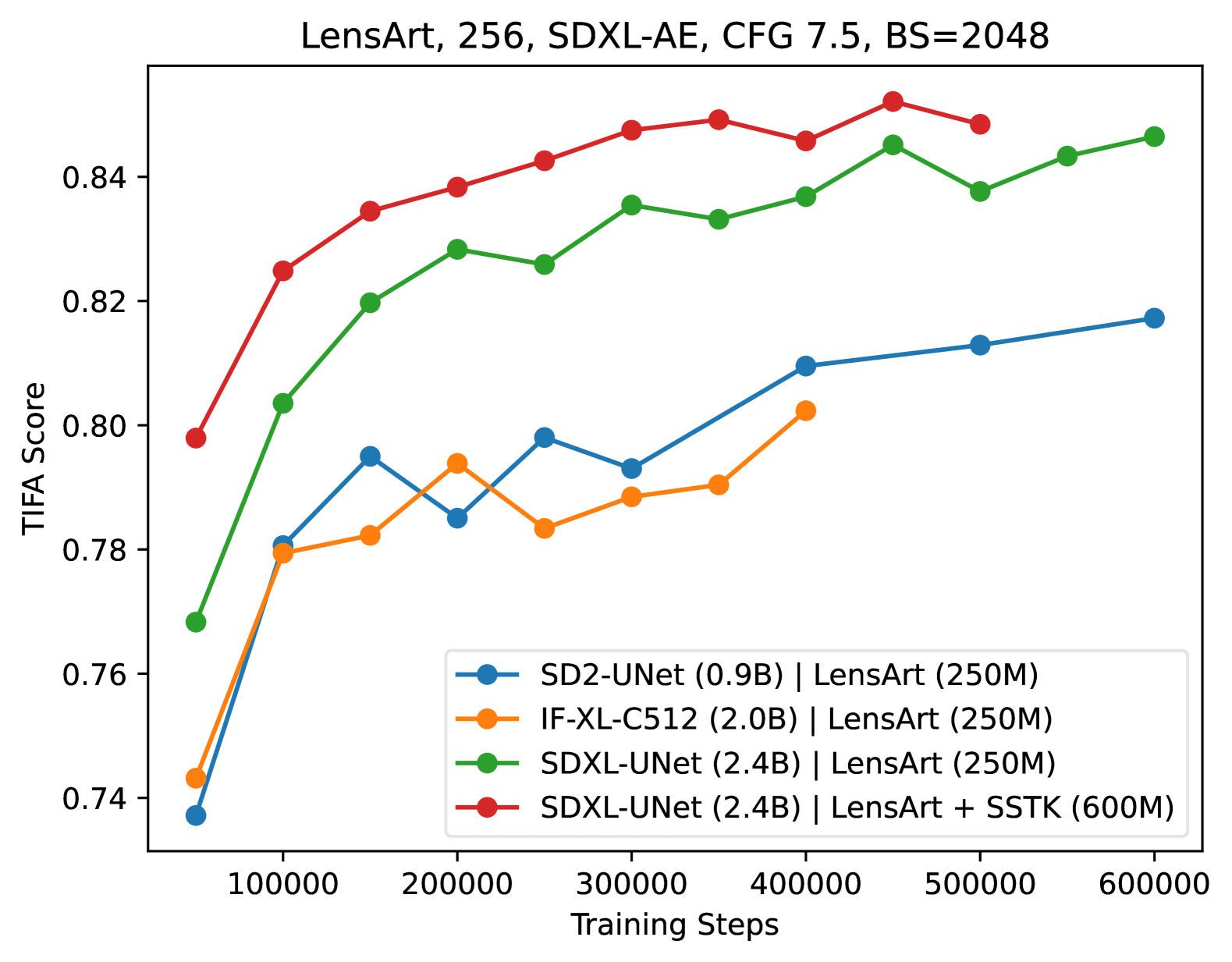

Scaling up model and data size has been highly successful in the evolution of large language models (LLMs). However, the scaling law for diffusion-based text-to-image (T2I) models has not been fully explored. It is also unclear how to scale these models efficiently, achieving better performance at reduced cost.
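The text above does not state what functional form a T2I scaling law takes. A common assumption in the scaling-law literature is a power law, loss ≈ a · C^(-b), where C is a budget such as training compute. Under that assumption, a and b can be estimated by ordinary least squares on log-transformed points, because log(loss) = log(a) - b · log(C) is a straight line. The helpers `fit_power_law` and `predict` below are hypothetical, and the data points are synthetic (generated from a = 5.0, b = 0.1), not measurements from the poster.

```python
import math


def fit_power_law(xs, ys):
    """Fit y = a * x**(-b) by least squares in log-log space; returns (a, b).

    Assumes every x and y is strictly positive (required for the logarithm).
    """
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired points")
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    sxx = sum((u - mean_x) ** 2 for u in lx)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((u - mean_x) * (v - mean_y) for u, v in zip(lx, ly))
    slope = sxy / sxx                     # slope of log y vs. log x, i.e. -b
    intercept = mean_y - slope * mean_x   # intercept, i.e. log a
    return math.exp(intercept), -slope


def predict(a, b, x):
    """Evaluate the assumed power law a * x**(-b)."""
    return a * x ** (-b)


# Synthetic, noise-free points (illustrative only, not real results).
budgets = [1e18, 1e19, 1e20, 1e21]
losses = [predict(5.0, 0.1, c) for c in budgets]

a_hat, b_hat = fit_power_law(budgets, losses)
print(round(a_hat, 3), round(b_hat, 3))

# Extrapolate to a 10x larger budget than any fitted point.
print(predict(a_hat, b_hat, 1e22))
```

Fitting in log space keeps the estimate a closed-form linear regression. With real, noisy measurements, the extrapolated value is only as reliable as the power-law assumption itself.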

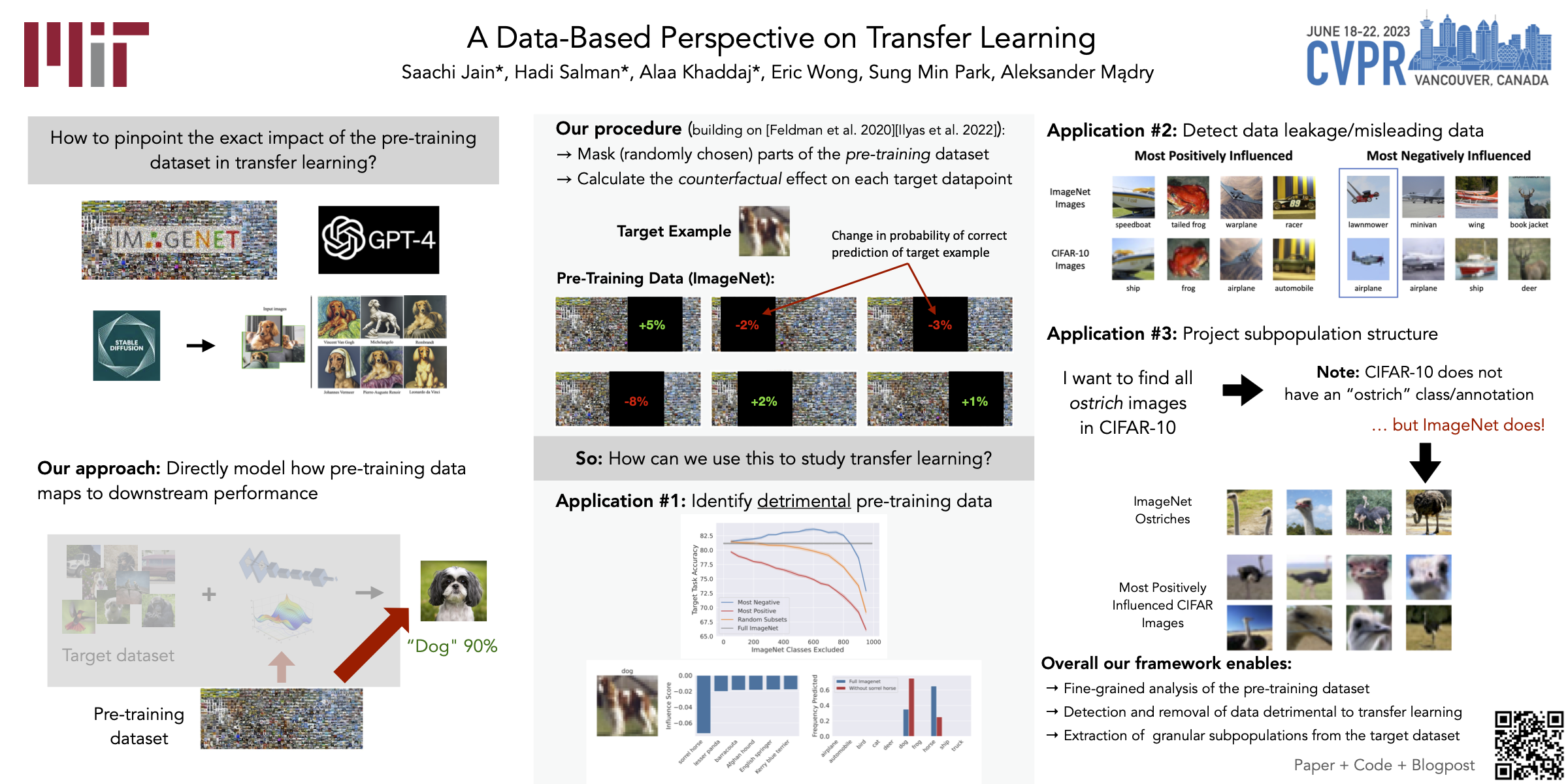

CVPR Poster: A Data-Based Perspective on Transfer Learning

Text encoders in diffusion models have evolved rapidly, moving from CLIP to T5-XXL. Although this evolution has significantly improved the models' ability to understand complex prompts and render text, it has also substantially increased the number of parameters. Imagen is a text-to-image diffusion model with an unprecedented degree of photorealism and a deep level of language understanding, and its authors find that human raters prefer Imagen over other models in side-by-side comparisons, in terms of both sample quality and image-text alignment. Diffusion-4K achieves strong performance in high-quality image synthesis and text-prompt adherence, especially when powered by modern large-scale diffusion models (e.g., SD3-2B and Flux-12B). Diffusion transformers have been widely adopted for text-to-image synthesis. While scaling these models up to billions of parameters shows promise, the effectiveness of scaling beyond current sizes remains…

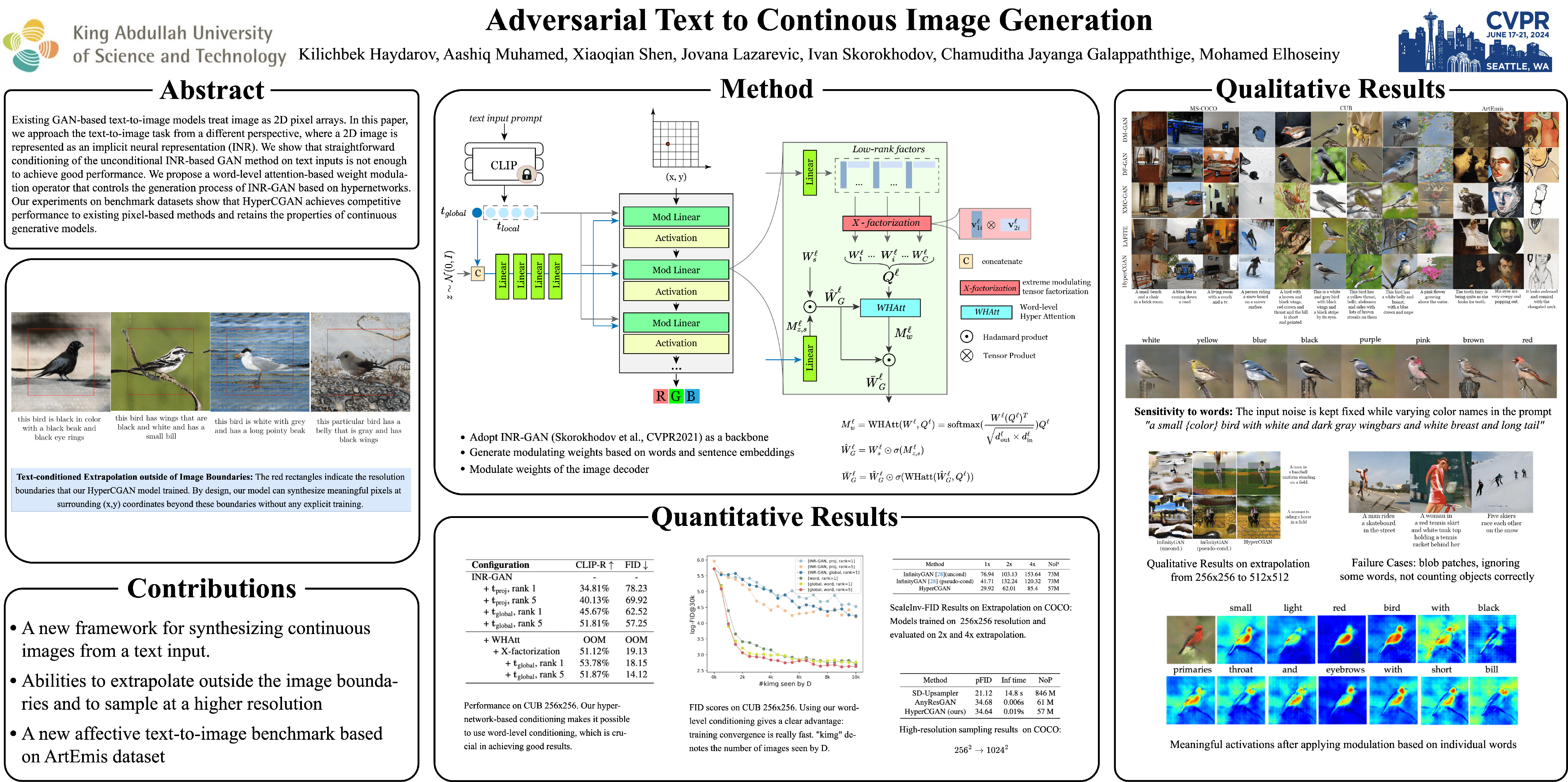

CVPR Poster: Adversarial Text to Continuous Image Generation

On the Scalability of Diffusion-Based Text-to-Image Generation examines how diffusion-based text-to-image models scale, exploring the challenges and trade-offs involved in scaling these systems up to handle more complex and diverse image-generation tasks.
