CS 152 NN-6: Regularization

Training Neural Networks: day 6 of the Harvey Mudd College neural networks class. Try out the concepts from this lecture in the neural network playground! In the last lecture we looked at how to make choices about our network, such as the number of layers, the number of neurons in each layer, and the learning rate.

How to set alpha? Shown is the same neural network with different levels of regularization. Which model has the largest value for alpha (i.e., the largest norm-penalty contribution)?

In the context of deep learning, regularization can be understood as the process of adding information or changing the objective function to prevent overfitting.

Early stopping can be used alone or in conjunction with other regularization strategies. It requires a validation data set (extra data not included with the training data).

PyTorch is tracking the operations in our network and how to calculate the gradient (more on that a bit later), but it hasn't calculated anything yet: we don't have a loss function and we haven't done a forward pass to calculate the loss, so there's nothing to backpropagate yet!

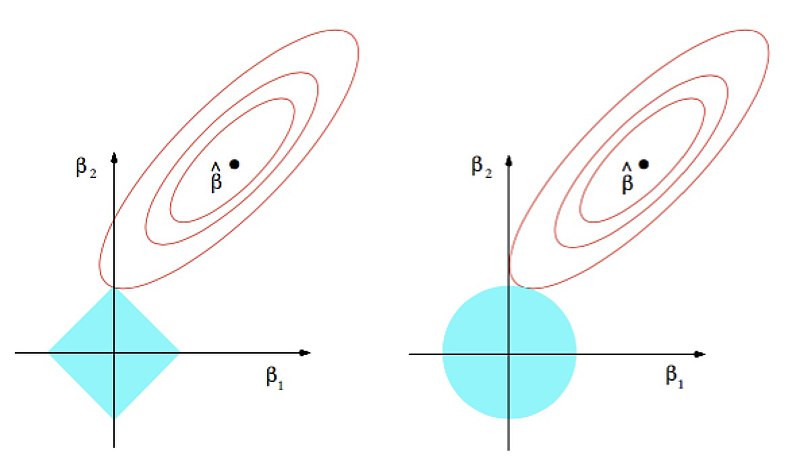

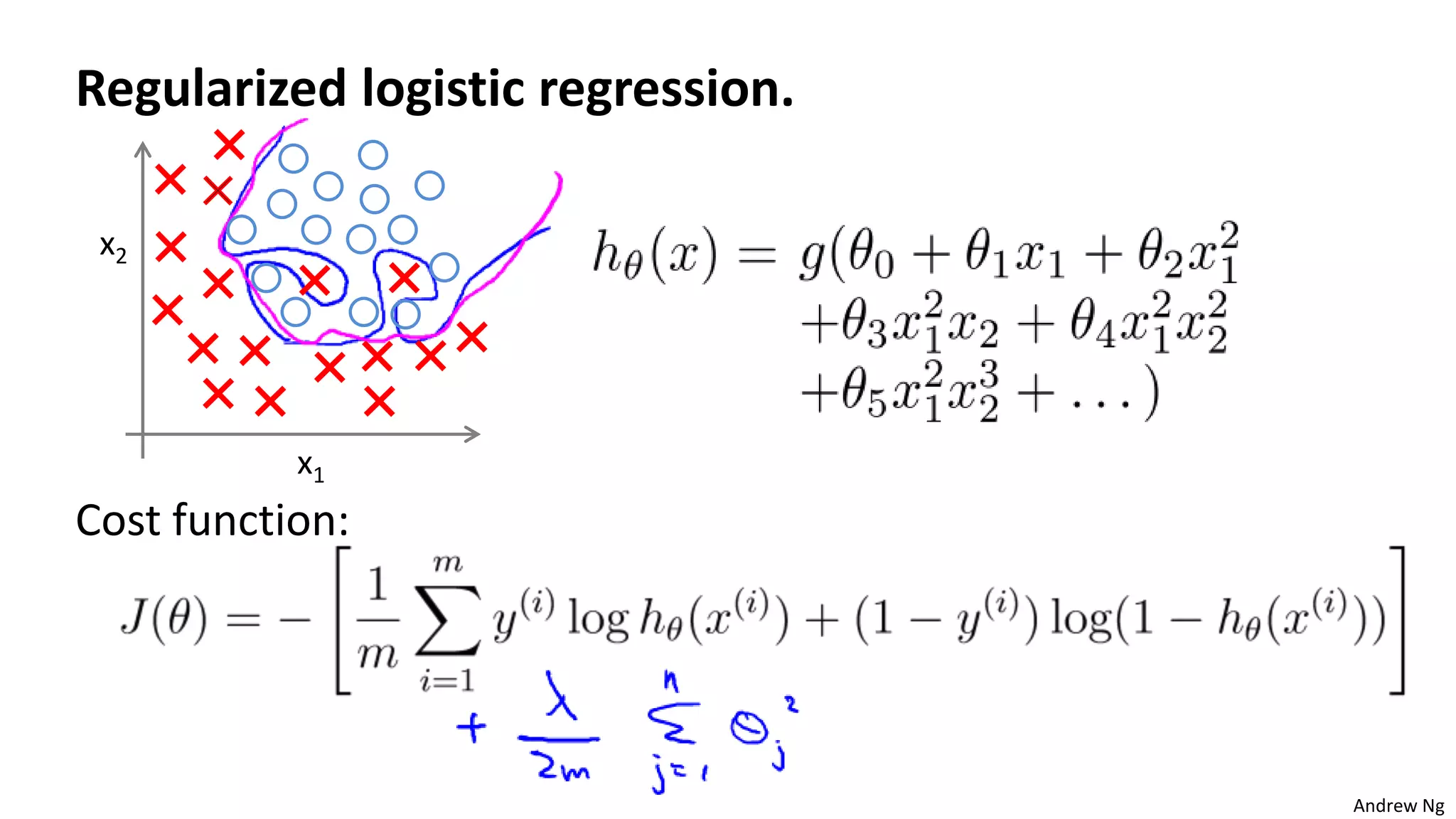
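The two ideas above, a norm penalty weighted by alpha and early stopping against a validation set, can be sketched in a few lines of plain Python. This is only an illustrative sketch: the function names `l2_penalized_loss` and `early_stopping_epoch`, the squared L2 norm as the penalty, the `patience` parameter, and the made-up loss values are all assumptions for the example, not something taken from the lecture.

```python
def l2_penalized_loss(data_loss, weights, alpha):
    """Objective = data loss + alpha * (sum of squared weights).

    A larger alpha makes the norm penalty a larger share of the objective.
    """
    return data_loss + alpha * sum(w * w for w in weights)


def early_stopping_epoch(val_losses, patience=3):
    """Return the epoch whose weights early stopping would keep.

    Scanning stops once the validation loss has gone `patience`
    epochs without improving on the best value seen so far.
    """
    best_epoch, best_loss = 0, float("inf")
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            break  # no improvement for `patience` epochs: stop training
    return best_epoch


# Same weights and data loss, three hypothetical values of alpha.
weights = [3.0, -4.0]
for alpha in (0.0, 0.01, 1.0):
    print(alpha, l2_penalized_loss(0.5, weights, alpha))

# Made-up validation losses: they bottom out at epoch 3, then rise.
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61, 0.40]
print(early_stopping_epoch(val_losses, patience=3))  # prints 3
```

Note that the final value of 0.40 is never reached: training stops at epoch 6, which is why early stopping needs held-out validation data and a deliberate choice of patience.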

This page accompanies Neil Rhodes's lecture segments "CS 152 NN-6: Regularization, Multi-Task Learning" and "CS 152 NN-6: Regularization, Adjust Loss Function".

In this course, we will introduce neural networks as a tool for machine learning and function approximation. We will start with the fundamentals of how to build and train a neural network from scratch.

For “easy” problems, regularization may be necessary to make the problem well defined, for example when applying logistic regression to a linearly separable dataset. Without a penalty, scaling the weights up always lowers the loss a little further, so no finite set of weights is optimal.
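The logistic regression case can be checked numerically. The sketch below is an assumed toy setup, not the lecture's own example: a one-dimensional, bias-free model, four hand-picked separable points, plain gradient descent, and a hypothetical helper `fit_logistic_1d` whose `alpha` multiplies a squared-weight penalty.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_logistic_1d(xs, ys, alpha, steps, lr=0.5):
    """Gradient descent on mean log loss + alpha * w**2 (no bias term)."""
    w = 0.0
    for _ in range(steps):
        # Gradient of the mean log loss with respect to w.
        grad = sum((sigmoid(w * x) - y) * x for x, y in zip(xs, ys)) / len(xs)
        # Gradient of the L2 penalty alpha * w**2.
        grad += 2.0 * alpha * w
        w -= lr * grad
    return w


# Linearly separable toy data: negative x -> class 0, positive x -> class 1.
xs = [-2.0, -1.0, 1.0, 2.0]
ys = [0, 0, 1, 1]

for steps in (100, 1000, 10000):
    w_plain = fit_logistic_1d(xs, ys, alpha=0.0, steps=steps)
    w_reg = fit_logistic_1d(xs, ys, alpha=0.1, steps=steps)
    print(steps, w_plain, w_reg)
```

With `alpha=0.0` the weight keeps creeping upward the longer training runs, while with `alpha=0.1` it settles at a fixed value, which is the sense in which the penalty makes the problem well defined.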

