Cross Entropy and KL Divergence

When we train machine learning models, especially for classification tasks, two fundamental concepts frequently arise: cross entropy and KL (Kullback–Leibler) divergence. Cross entropy is widely used in modern ML to compute the loss for classification tasks. This post is a brief overview of the math behind it and of the related concept of KL divergence.
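As a minimal sketch of how cross entropy serves as a classification loss, the plain-Python snippet below assumes a single example described by raw model scores (logits) and an integer class label; the function names are hypothetical. With a one-hot target, cross entropy reduces to the negative log-probability the model assigns to the true class, so the code applies a log-softmax to the logits and picks out that entry. Deep learning frameworks ship vectorized versions of this; the code here is only illustrative.

```python
import math

def log_softmax(logits):
    """Log-probabilities from raw scores, computed stably by subtracting the max logit."""
    m = max(logits)
    log_total = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_total for z in logits]

def cross_entropy_loss(logits, target_index):
    """Cross entropy against a one-hot target: -log q(true class)."""
    return -log_softmax(logits)[target_index]

def mean_cross_entropy(batch_logits, targets):
    """Average the per-example loss over a batch, as is typical during training."""
    losses = [cross_entropy_loss(z, y) for z, y in zip(batch_logits, targets)]
    return sum(losses) / len(losses)

# Hypothetical scores for three classes; the true class is 0.
logits = [2.0, 0.5, -1.0]
print(cross_entropy_loss(logits, 0))  # small loss: class 0 already has the highest score
print(cross_entropy_loss(logits, 2))  # larger loss: class 2 has the lowest score
```

Working in log space (rather than computing probabilities and then taking their logarithm) avoids overflow in `math.exp` and avoids taking the log of a probability that has underflowed to zero.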

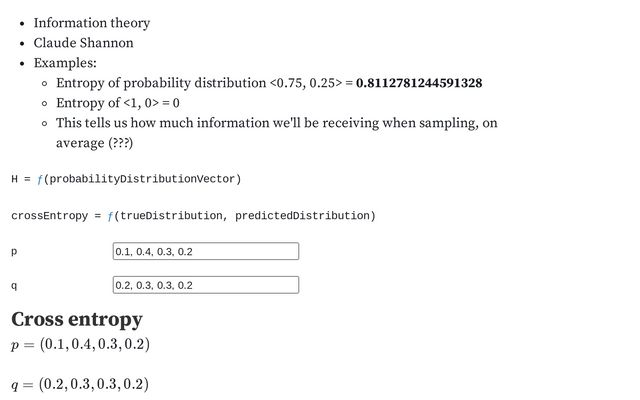

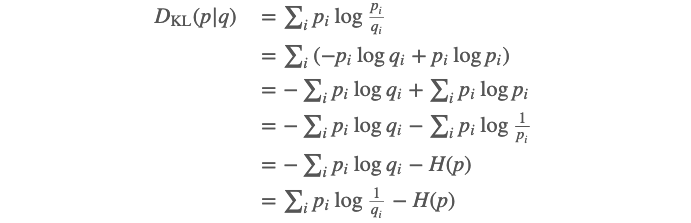

Before we dive into cross entropy and KL divergence, let's review a concept closely related to both: entropy. In information theory, entropy quantifies uncertainty, i.e., the average amount of information contained in the probability distribution of a random variable. For a discrete distribution $p$,

$$H(p) = -\sum_x p(x) \log p(x).$$

The cross entropy of distributions $p$ and $q$ is

$$H(p, q) = -\sum_x p(x) \log q(x),$$

and the Kullback–Leibler divergence is the difference between the cross entropy $H(p, q)$ and the true entropy $H(p)$:

$$D_{\mathrm{KL}}(p \,\|\, q) = H(p, q) - H(p) = \sum_x p(x) \log \frac{p(x)}{q(x)}.$$

Minimizing cross entropy is equivalent to minimizing KL divergence only under certain conditions. Consider classification problems that use cross entropy as the loss function: the target distribution $p$ comes from the data and does not depend on the model parameters, so $H(p)$ is a constant, and minimizing $H(p, q)$ over the model distribution $q$ is the same as minimizing $D_{\mathrm{KL}}(p \,\|\, q)$. With one-hot labels, $H(p) = 0$ and the two quantities are equal.
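The relationships described above can be checked numerically. The following is a minimal sketch for discrete distributions given as Python lists of probabilities; the example distributions are made-up illustrative values, and natural logarithms are used, so results are in nats (`math.log2` would give bits). It checks that KL divergence equals cross entropy minus entropy, and that for a fixed $p$ the two differ only by the constant $H(p)$.

```python
import math

def entropy(p):
    """H(p) = -sum_x p(x) log p(x), in nats; outcomes with p(x) = 0 contribute nothing."""
    return -sum(px * math.log(px) for px in p if px > 0)

def cross_entropy(p, q):
    """H(p, q) = -sum_x p(x) log q(x); assumes q(x) > 0 wherever p(x) > 0."""
    return -sum(px * math.log(qx) for px, qx in zip(p, q) if px > 0)

def kl_divergence(p, q):
    """D_KL(p || q) = sum_x p(x) log(p(x) / q(x)); assumes q(x) > 0 wherever p(x) > 0."""
    return sum(px * math.log(px / qx) for px, qx in zip(p, q) if px > 0)

# Illustrative distributions over three outcomes (made-up values).
p = [0.5, 0.25, 0.25]  # "true" distribution
q = [0.4, 0.4, 0.2]    # model distribution

# KL divergence is cross entropy minus entropy.
print(math.isclose(kl_divergence(p, q), cross_entropy(p, q) - entropy(p)))  # True

# For a fixed p, H(p, q) - D_KL(p || q) equals H(p) for every candidate q,
# so ranking candidate models by cross entropy or by KL divergence gives the same order.
for candidate in ([0.4, 0.4, 0.2], [0.5, 0.3, 0.2], [1 / 3, 1 / 3, 1 / 3]):
    print(cross_entropy(p, candidate) - kl_divergence(p, candidate), entropy(p))

# With a one-hot target (typical in classification), H(p) = 0,
# so the cross-entropy loss equals the KL divergence.
target = [0.0, 1.0, 0.0]
print(math.isclose(cross_entropy(target, q), kl_divergence(target, q)))  # True
```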

Cross entropy quantifies the overall surprise your model experiences when confronted with reality, directly reflecting the average cost of misprediction. In contrast, KL divergence isolates pure modeling error by subtracting the inherent uncertainty of the true distribution. Both compare two distributions $p$ and $q$: cross entropy $H(p, q)$ reaches its minimum value, $H(p)$, when $q = p$, while KL divergence measures how much $q$ diverges from $p$. KL divergence is not a true distance, however. It is never negative and is zero exactly when $p = q$, but it is not symmetric ($D_{\mathrm{KL}}(p \,\|\, q) \neq D_{\mathrm{KL}}(q \,\|\, p)$ in general) and does not satisfy the triangle inequality. The mathematical field behind these ideas is information theory, and entropy, cross entropy, and KL divergence are three of its most powerful concepts. In this article, we're going to derive cross entropy and KL divergence from first principles so that readers will understand them more deeply.
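To make the claims about surprise and asymmetry concrete, here is a small sketch in plain Python; the distributions are illustrative values and the helper names are hypothetical. It estimates cross entropy as the model's average surprise $-\log q(x)$ over samples drawn from $p$, and it shows that KL divergence is zero for identical distributions but generally changes when its arguments are swapped, which is why it is not a distance metric.

```python
import math
import random

def kl_divergence(p, q):
    """D_KL(p || q) = sum_x p(x) log(p(x) / q(x)), in nats."""
    return sum(px * math.log(px / qx) for px, qx in zip(p, q) if px > 0)

def cross_entropy(p, q):
    """H(p, q) = -sum_x p(x) log q(x): the expected surprise -log q(x) when x ~ p."""
    return -sum(px * math.log(qx) for px, qx in zip(p, q) if px > 0)

def average_surprise(p, q, n_samples=100_000, seed=0):
    """Monte Carlo estimate of H(p, q): draw x ~ p, average the model's surprise -log q(x)."""
    rng = random.Random(seed)
    xs = rng.choices(range(len(p)), weights=p, k=n_samples)
    return sum(-math.log(q[x]) for x in xs) / n_samples

# Illustrative distributions (made-up values).
p = [0.7, 0.2, 0.1]  # "reality"
q = [0.3, 0.4, 0.3]  # model

print(kl_divergence(p, q), kl_divergence(q, p))    # different values: KL is asymmetric
print(kl_divergence(p, p))                          # 0.0: no divergence from itself
print(cross_entropy(p, q), average_surprise(p, q))  # exact value vs. sampled estimate
```

With a fixed `seed` the estimate is reproducible; increasing `n_samples` brings it closer to the exact cross entropy.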

