Cracking The Memory Wall

There are no silver bullets here, but there are ways to shrink that gap. Ramin Farjadrad, CEO and co-founder of Eliyan, talks with Semiconductor Engineering about how to achieve higher bandwidth and faster data movement, and how that can help reduce the memory bottleneck.
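The roofline model is a standard way to see why higher bandwidth helps. A kernel's attainable throughput is the lower of two limits: peak compute, or memory bandwidth multiplied by the kernel's arithmetic intensity (FLOPs performed per byte moved). The sketch below is a minimal illustration under stated assumptions. The helper name `attainable_tflops` and the accelerator figures (1,000 TFLOP/s peak, 3 TB/s bandwidth) are hypothetical and do not come from Eliyan or any specific product.

```python
def attainable_tflops(peak_tflops: float, bandwidth_tb_s: float,
                      intensity_flop_per_byte: float) -> float:
    """Roofline model: throughput is capped either by peak compute or by
    memory bandwidth times arithmetic intensity (FLOPs per byte moved)."""
    # TB/s * FLOP/byte = TFLOP/s, so the units line up directly.
    return min(peak_tflops, bandwidth_tb_s * intensity_flop_per_byte)


# Hypothetical accelerator numbers, chosen for illustration only.
PEAK_TFLOPS = 1000.0   # assumed peak compute
BANDWIDTH_TB_S = 3.0   # assumed memory bandwidth

# Intensity at which a kernel stops being memory-bound (the "ridge point").
ridge = PEAK_TFLOPS / BANDWIDTH_TB_S
print(f"ridge point: {ridge:.1f} FLOP/byte")

for intensity in (2.0, 50.0, 500.0):
    base = attainable_tflops(PEAK_TFLOPS, BANDWIDTH_TB_S, intensity)
    doubled = attainable_tflops(PEAK_TFLOPS, 2 * BANDWIDTH_TB_S, intensity)
    print(f"intensity={intensity:>6.1f} FLOP/B  base={base:7.1f} TFLOP/s  "
          f"2x bandwidth={doubled:7.1f} TFLOP/s")
```

Below the ridge point (about 333 FLOP/byte with these assumed numbers), doubling bandwidth doubles attainable throughput. Above it, the kernel is compute-bound and extra bandwidth buys nothing.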

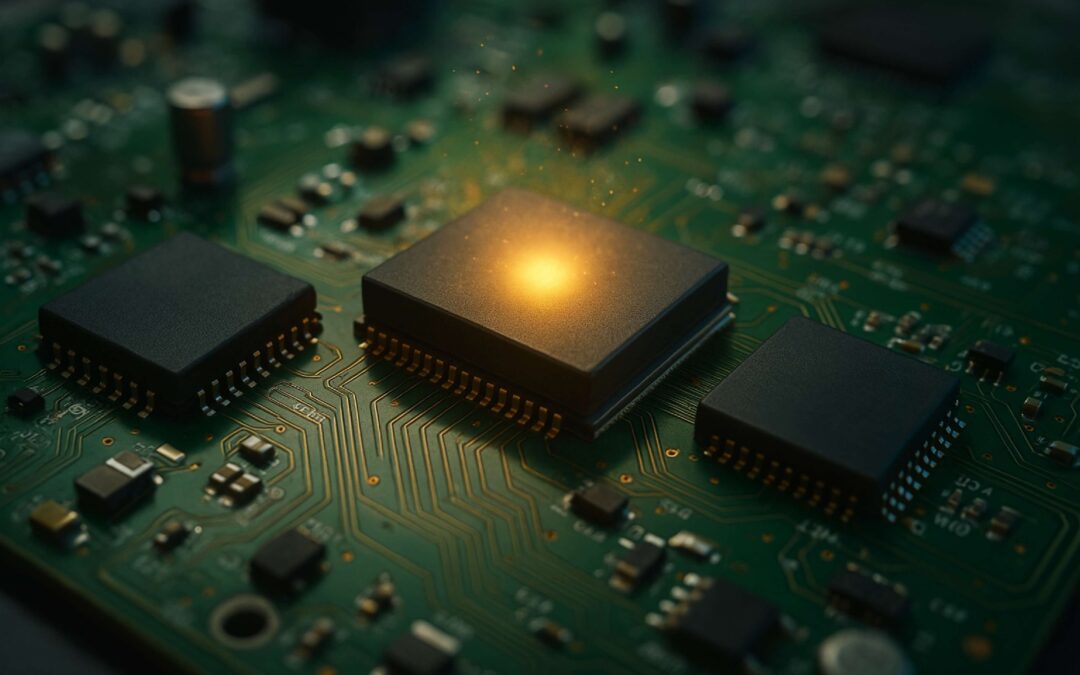

Over the last decade, GPU compute (TFLOPS) has scaled exponentially, but memory bandwidth has barely kept up. As context windows explode from 2K to 1M tokens, the memory required to remember that context grows with them.

One talk on the subject offers an architectural analysis of processing-in-memory technologies designed to break the memory wall and accelerate data-intensive computing workloads. It concludes by highlighting challenges and opportunities at the intersection of materials, devices, and system architecture, inviting the next generation of researchers to help truly crack the memory wall.

By Dr. Steven Woo, Fellow and Distinguished Inventor at Rambus. The term “memory wall” was coined in the 1990s to describe memory bandwidth bottlenecks that were holding back CPU performance.
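The context-window point can be made concrete with a minimal sketch. It assumes the memory in question is a transformer's key/value (KV) cache, which stores one key vector and one value vector per token, per KV head, per layer. The model shape (32 layers, 8 KV heads, head dimension 128, 16-bit values) and the helper `kv_cache_bytes` are hypothetical. "1M" is taken as 1,048,576 tokens to keep the numbers round.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2,
                   batch: int = 1) -> int:
    """Size of a transformer key/value cache: one K and one V vector
    per token, per KV head, per layer (bytes_per_value=2 assumes 16-bit)."""
    return (2 * num_layers * num_kv_heads * head_dim
            * context_tokens * bytes_per_value * batch)


# Hypothetical model shape (assumed, not taken from any specific model).
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

for tokens in (2_048, 1_048_576):
    gib = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens) / 2**30
    print(f"{tokens:>9,} tokens -> {gib:8.2f} GiB of KV cache per sequence")
```

The cache grows linearly with context length. A 512x longer context therefore needs 512x the memory: here 0.25 GiB versus 128 GiB per sequence. Each decoding step must also read that cache back through the memory interface.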

To address this bottleneck, various strategies such as enhancing memory speed, increasing cache sizes, and improving data retrieval techniques have been employed. The memory wall persists nonetheless, and it is getting increasingly expensive to circumvent.

Recent advances in deep learning have led to the widespread adoption of artificial intelligence (AI) in applications such as computer vision and natural language processing. As neural networks become deeper and larger, AI modeling demands outstrip the capabilities of conventional chip architectures: memory bandwidth falls behind processing power, and energy consumption becomes a dominant concern.

As the data demands of large language models (LLMs) continue to grow, challenges such as the memory wall have become increasingly prominent in modern AI accelerators. These limitations lead to constrained scalability, underutilized compute resources, and increased energy consumption.

Over the last four decades, the gap between CPU and memory performance has widened at a rate once estimated at about 50% per year, reaching more than 1,000x today. Memory latencies have also remained largely constant over the past 20 years, making latency a significant component of the performance bottleneck.
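Constant latency matters because of Little's law: the amount of data that must be in flight equals throughput times latency. This minimal sketch assumes a fixed 100 ns memory latency and 64-byte requests. Both figures are illustrative assumptions, as is the helper name `bytes_in_flight_needed`. It shows that when latency does not improve, each 10x increase in target bandwidth demands 10x more outstanding requests.

```python
def bytes_in_flight_needed(bandwidth_gb_s: float, latency_ns: float) -> float:
    """Little's law: data in flight = throughput x latency.
    GB/s x ns = bytes, since 1e9 * 1e-9 = 1."""
    return bandwidth_gb_s * latency_ns


LINE_BYTES = 64     # assumed size of each memory request (one cache line)
LATENCY_NS = 100.0  # assumed, roughly constant memory access latency

for bw in (50.0, 500.0, 5000.0):  # hypothetical target bandwidths in GB/s
    in_flight = bytes_in_flight_needed(bw, LATENCY_NS)
    print(f"{bw:>7.0f} GB/s needs {in_flight:>9.0f} bytes in flight "
          f"(~{in_flight / LINE_BYTES:.0f} outstanding {LINE_BYTES}-byte requests)")
```

Because latency sits in the product, the only ways to raise sustained bandwidth are to cut latency or to keep more requests in flight. Deeper queues, more memory channels, and wider interfaces all take the second route.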
