CPU Cache: How Caching Works

This document discusses computer memory and cache memory. It begins by explaining that cache memory is a small, fast memory located between the CPU and main memory that holds copies of frequently used instructions and data. A PDF titled "Cache Memory" was published on ResearchGate by Zeyad Ayman and others on October 10, 2020.
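To make the idea of a cache holding copies of recently used data concrete, here is a minimal Python sketch of a direct-mapped cache simulator. It is an illustration under assumed parameters, not a model of any real processor: the class name `DirectMappedCache`, the number of lines, and the 16-byte block size are hypothetical choices. Each address is split into a block number, an index that selects the one line the block may occupy, and a tag that records which block currently sits in that line.

```python
class DirectMappedCache:
    """Toy direct-mapped cache (hypothetical sketch): each block maps to exactly one line."""

    def __init__(self, num_lines: int, block_size: int) -> None:
        self.num_lines = num_lines      # number of cache lines (assumed parameter)
        self.block_size = block_size    # bytes per line (assumed parameter)
        self.tags = [None] * num_lines  # None marks an empty (invalid) line
        self.hits = 0
        self.misses = 0

    def access(self, addr: int) -> bool:
        """Return True on a hit; on a miss, copy the block in and return False."""
        block = addr // self.block_size  # memory block containing addr
        index = block % self.num_lines   # the only line this block may occupy
        tag = block // self.num_lines    # distinguishes blocks sharing that line
        if self.tags[index] == tag:
            self.hits += 1
            return True
        self.misses += 1
        self.tags[index] = tag           # fill the line, evicting any previous block
        return False


# Scan 64 consecutive bytes twice with 4 lines of 16 bytes each.
cache = DirectMappedCache(num_lines=4, block_size=16)
for _ in range(2):
    for addr in range(64):
        cache.access(addr)
print(cache.hits, cache.misses)  # 124 4
```

The first pass misses once per 16-byte block and then hits on the neighbouring bytes (spatial locality); the second pass hits on every access because all four blocks are still resident (temporal locality).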

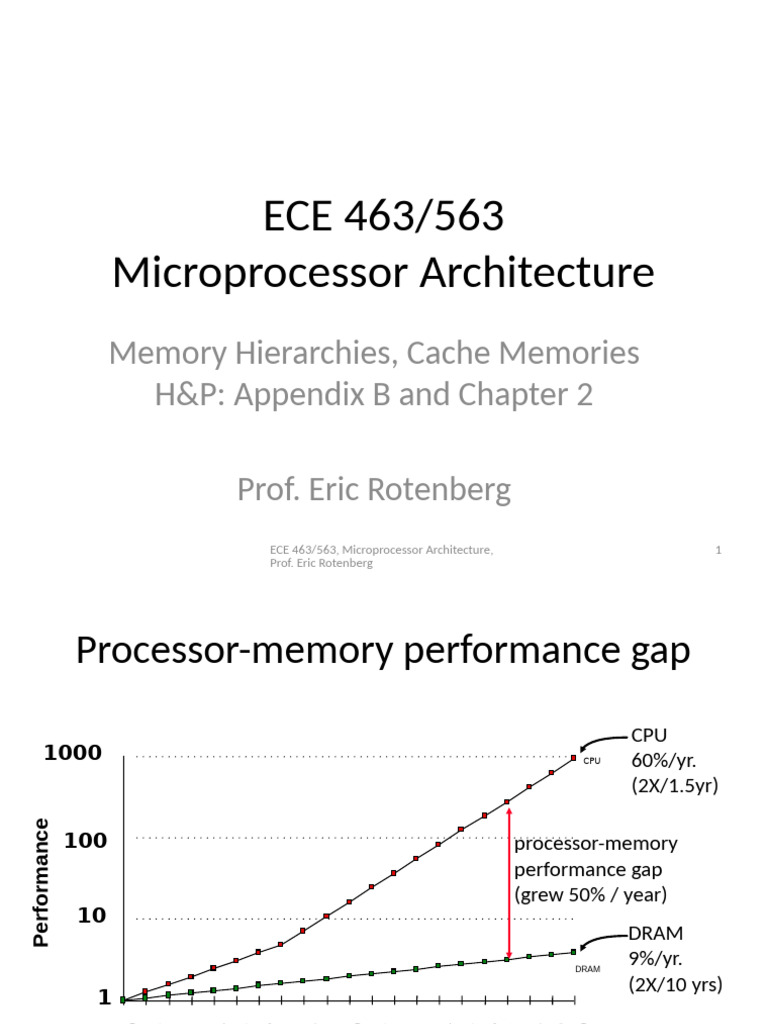

An n-way set-associative cache is like having n direct-mapped caches operating in parallel. The most important element of the on-chip memory system is the cache, which stores a subset of the memory space, together with the hierarchy of caches. In this section, we assume that the reader is familiar with the basics of caches and with the notion of virtual memory.

In computer architecture, almost everything is a cache:

- Registers: a cache on variables (software-managed)
- First-level cache: a cache on the second-level cache
- Second-level cache: a cache on memory (or on the L3 cache)
- Memory: a cache on the hard disk
- Branch target buffer: a cache on branch targets

Most processors today have three levels of cache. One major design constraint is the physical area that caches occupy on the CPU die; limited by this area, we cannot have arbitrarily many caches.
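The analogy between an n-way set-associative cache and n direct-mapped caches in parallel can be sketched by letting each set hold up to n tags and checking all of them on a lookup. The class `SetAssociativeCache` is hypothetical, and the least-recently-used (LRU) replacement policy is an assumption chosen for illustration; the text does not specify a replacement policy. Hardware compares the tags of all ways simultaneously, while this sketch simply uses a dictionary membership test.

```python
from collections import OrderedDict


class SetAssociativeCache:
    """Toy n-way set-associative cache with LRU replacement (hypothetical sketch)."""

    def __init__(self, num_sets: int, ways: int, block_size: int) -> None:
        self.num_sets = num_sets
        self.ways = ways
        self.block_size = block_size
        # One OrderedDict per set; tags are kept from least to most recently used.
        self.sets = [OrderedDict() for _ in range(num_sets)]
        self.hits = 0
        self.misses = 0

    def access(self, addr: int) -> bool:
        """Return True on a hit; on a miss, insert the block and return False."""
        block = addr // self.block_size
        index = block % self.num_sets  # selects the set
        tag = block // self.num_sets   # compared against every way in that set
        entries = self.sets[index]
        if tag in entries:
            entries.move_to_end(tag)   # mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        if len(entries) >= self.ways:
            entries.popitem(last=False)  # evict the least recently used tag
        entries[tag] = True
        return False


# Same total capacity (4 lines of 16 bytes), two organisations.
direct = SetAssociativeCache(num_sets=4, ways=1, block_size=16)  # 1-way = direct-mapped
two_way = SetAssociativeCache(num_sets=2, ways=2, block_size=16)
for addr in [0, 64, 0, 64]:  # two blocks that collide in the direct-mapped layout
    direct.access(addr)
    two_way.access(addr)
print(direct.misses, two_way.misses)  # 4 2
```

With one way per set, addresses 0 and 64 keep evicting each other; with two ways, both blocks fit in the same set, so the repeated accesses hit.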

A CPU cache is used by the processor to reduce the average time to access memory. The cache is a smaller, faster, and more expensive memory inside the CPU that stores copies of data from the most frequently used main-memory locations.

Cache design raises several policy questions. When writing to an item that is not currently in the cache, do we bring it into the cache? Which item do we evict when the cache runs out of space? Many algorithms exist for these decisions, and they matter both to designers of caches and to application developers who rely on caches.

As a general introduction to CPU caching and performance, the article covers fundamental cache concepts such as spatial and temporal locality, set associativity, how different types of applications use the cache, and the general layout a…

This lecture is about how memory is organized in a computer system. In particular, we will consider the role caches play in improving the processing speed of a processor. In our single-cycle instruction model, we assume that memory read operations are asynchronous, immediate, and single-cycle.
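The claim that a cache reduces the average memory access time can be quantified with the standard average memory access time (AMAT) formula, which the text itself does not state: for one level, AMAT = hit time + miss rate × miss penalty, and in a hierarchy the miss penalty of one level is the AMAT of the next level out. The helper `amat` is hypothetical, and all latencies and miss rates below are made-up values chosen only to show the arithmetic.

```python
def amat(levels: list[tuple[float, float]], memory_latency: float) -> float:
    """Average memory access time for a cache hierarchy (hypothetical helper).

    levels: (hit_time, miss_rate) pairs ordered from L1 outward.
    memory_latency: access time of main memory, paid only when every level misses.
    """
    time = memory_latency
    # Work inward: each level's miss penalty is the AMAT of the level behind it.
    for hit_time, miss_rate in reversed(levels):
        time = hit_time + miss_rate * time
    return time


# Hypothetical numbers in cycles: L1 hits in 1 cycle and misses 10% of the time,
# L2 hits in 10 cycles and misses 20% of the time, main memory takes 100 cycles.
print(round(amat([(1, 0.10)], 100), 2))              # 11.0
print(round(amat([(1, 0.10), (10, 0.20)], 100), 2))  # 4.0
```

Under these assumed numbers, adding a second-level cache lowers the average from 11 to 4 cycles even though main memory is no faster, which is why processors use several levels of cache.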



