Context Length in LLMs: How to Make the Most Out of It

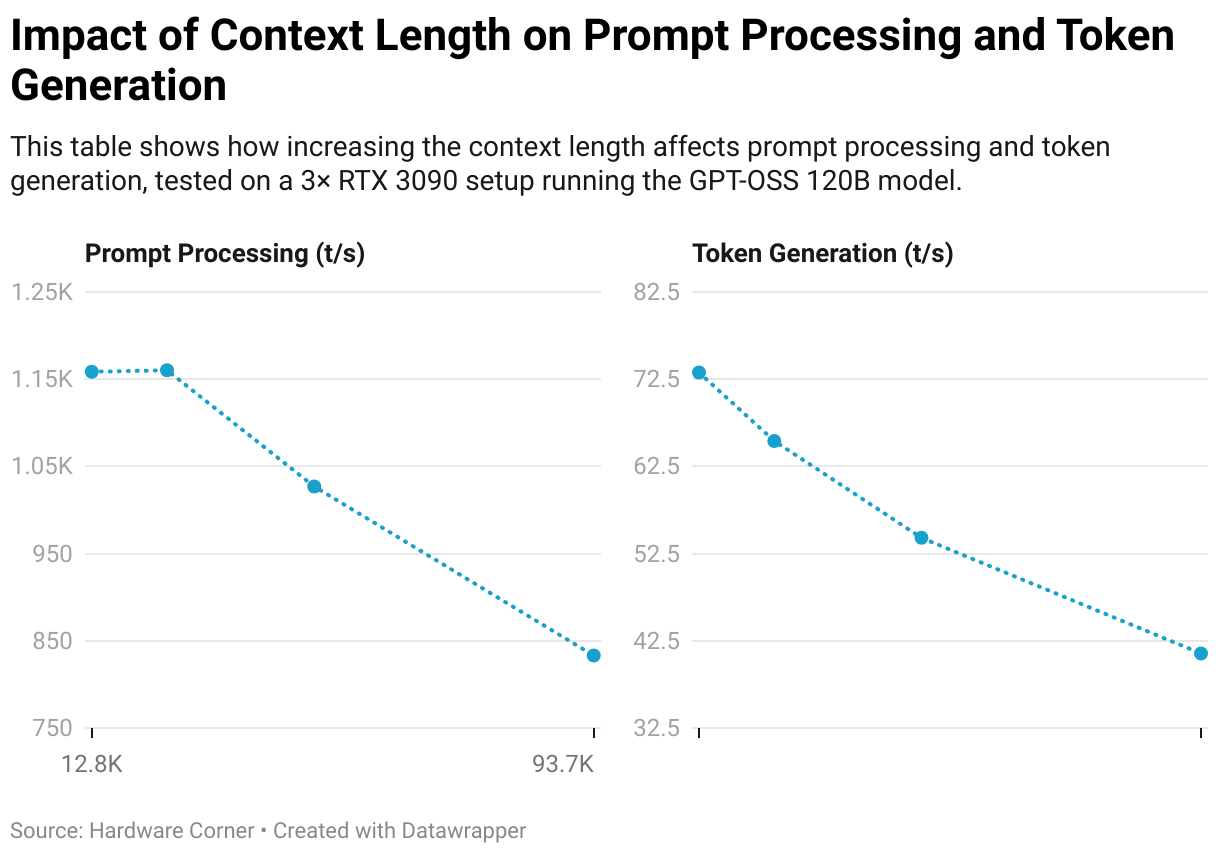

What Is Context Length in LLMs and How It Impacts Your VRAM and Speed

Overcome LLM token limits with six practical techniques. Learn how to use truncation, RAG, memory buffering, and compression to stay under the token limit and fit the LLM context window. This article explains the concept of LLM context length, how it can be extended, and the advantages and disadvantages of different context lengths. It also covers how supplying specific context to AI copilots can improve model performance.
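
As a rough illustration of the truncation technique mentioned above, the sketch below trims a text to a token budget. It assumes that one whitespace-separated word is about one token, which is only an approximation; a real application would count tokens with the model's own tokenizer. The function names `count_tokens` and `truncate_to_budget` are hypothetical and chosen for this example.

```python
def count_tokens(text: str) -> int:
    """Approximate token count: one whitespace-separated word = one token.

    This is an assumption for illustration, not a real tokenizer.
    """
    return len(text.split())


def truncate_to_budget(text: str, max_tokens: int, keep: str = "head") -> str:
    """Trim text to at most max_tokens approximate tokens.

    keep="head" keeps the beginning of the text, keep="tail" keeps the end.
    """
    if max_tokens < 0:
        raise ValueError("max_tokens must be non-negative")
    words = text.split()
    if len(words) <= max_tokens:
        return text  # already within budget, return unchanged
    if keep == "head":
        kept = words[:max_tokens]
    elif keep == "tail":
        kept = words[len(words) - max_tokens:]
    else:
        raise ValueError("keep must be 'head' or 'tail'")
    return " ".join(kept)


if __name__ == "__main__":
    prompt = "the quick brown fox jumps over the lazy dog"
    print(truncate_to_budget(prompt, 4))               # keeps the first 4 words
    print(truncate_to_budget(prompt, 4, keep="tail"))  # keeps the last 4 words
```

Keeping the tail tends to suit chat-style prompts where the most recent text matters most, while keeping the head suits documents whose key information comes first. Either way, truncation discards content outright, which is why it is usually combined with the other techniques.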

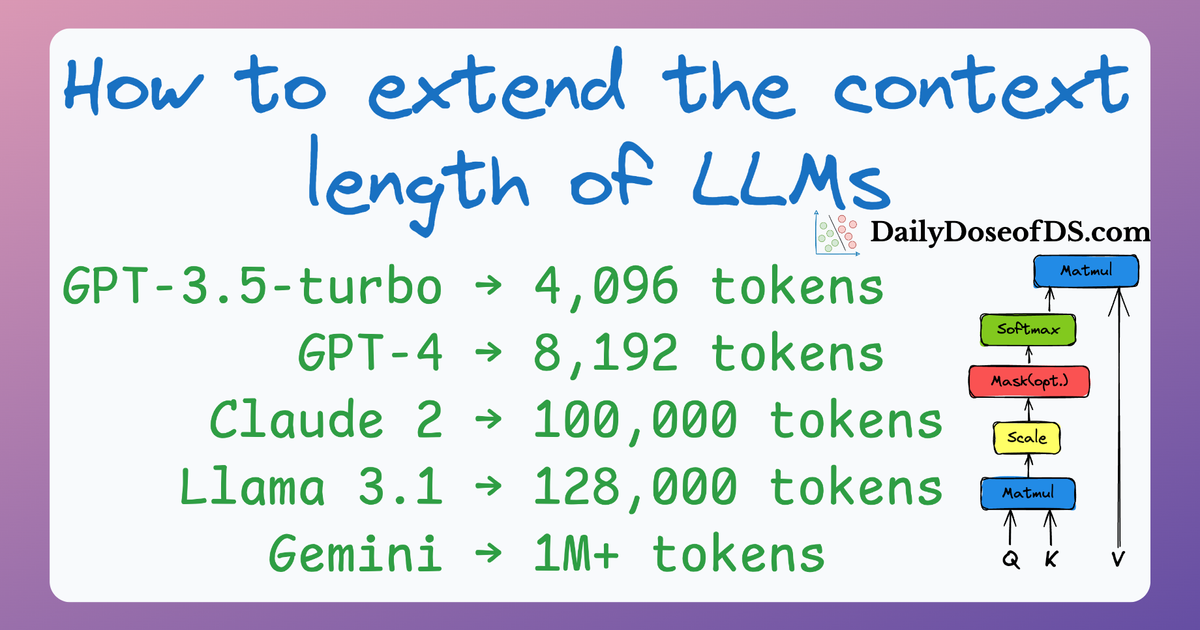

Techniques to Extend Context Length of LLMs

Learn practical strategies for handling long context windows in LLMs, including context management techniques. Discover how context length impacts AI performance, which LLMs handle the longest text, and why this matters when choosing a model. Understanding context windows, and how to work around their limitations, is essential for building practical AI applications. This guide explains what context windows are, compares limits across models, and provides strategies for processing documents that exceed those limits.

One of the most common myths about working with LLMs is that more context is always better. In reality, LLMs have architectural blind spots, so what you put in front of them and how you structure it matter far more than how much you include. The discipline of getting this right is called context engineering.
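
One common way to process a document that exceeds the context limit is to split it into overlapping chunks and handle each chunk separately. The sketch below is a minimal version of that idea, assuming word counts stand in for token counts; `chunk_words` and its parameters are hypothetical names used only for illustration.

```python
def chunk_words(text: str, chunk_size: int, overlap: int = 0) -> list[str]:
    """Split text into chunks of at most chunk_size words.

    Consecutive chunks share `overlap` words so that content cut at a
    boundary still appears with some surrounding context.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk reached the end of the text
    return chunks


if __name__ == "__main__":
    for chunk in chunk_words("a b c d e f g", chunk_size=3, overlap=1):
        print(chunk)
```

Each chunk can then be summarized, embedded for retrieval, or sent to the model on its own. The overlap is a trade-off: larger values preserve more context across boundaries but increase the total number of tokens processed.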

Guide to Context in LLMs (Symbl.ai)

Master AI context windows with this comprehensive 2025 guide. Learn token management, context optimization strategies, and practical techniques that help developers, researchers, and educators maximize AI performance.

Recent large language models (LLMs) claim to push the boundaries of context length, with some models reportedly capable of handling 1–2 million tokens of context. The NVIDIA NeMo Framework addresses the technical challenges of training LLMs with extended context lengths by providing techniques such as activation recomputation, context parallelism, and activation offloading, which improve memory management and reduce computational overhead.

…you’ve witnessed the consequences of mismanaging prompt length vs. context limits. Let’s break down this problem: how today’s LLMs remember, forget, truncate, compress, and respond based on prompt size.
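
One way to picture how a chat application "remembers" and "forgets" under a fixed context limit is a rolling message buffer that evicts the oldest turns once the budget is exceeded. The sketch below is a hypothetical illustration, not any particular library's API; it assumes word counts approximate token counts, and `RollingBuffer` is a name invented for this example.

```python
from collections import deque


class RollingBuffer:
    """Keep the most recent messages that fit in an approximate token budget."""

    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.messages = deque()  # items are (role, text, token_count)
        self.total = 0           # running approximate token total

    @staticmethod
    def _count(text: str) -> int:
        # Assumption: one whitespace-separated word is roughly one token.
        return len(text.split())

    def add(self, role: str, text: str) -> None:
        """Append a message, then evict the oldest ones until within budget."""
        n = self._count(text)
        self.messages.append((role, text, n))
        self.total += n
        # Always keep the newest message, even if it alone exceeds the budget.
        while self.total > self.max_tokens and len(self.messages) > 1:
            _, _, dropped = self.messages.popleft()
            self.total -= dropped

    def render(self) -> str:
        """Format the retained messages as a prompt-style transcript."""
        return "\n".join(f"{role}: {text}" for role, text, _ in self.messages)


if __name__ == "__main__":
    buf = RollingBuffer(max_tokens=5)
    buf.add("user", "one two three")
    buf.add("assistant", "four five")
    buf.add("user", "six seven")  # pushes the total over 5, oldest turn is dropped
    print(buf.render())
```

A buffer like this forgets old turns completely. A common refinement is to replace evicted turns with a short summary so that some of their content survives in compressed form.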
