Constrained Decision Transformer For Offline Safe Reinforcement

The inherent trade-offs between safety and task performance inspire us to propose the constrained decision transformer (CDT) approach, which can dynamically adjust these trade-offs during deployment.
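One way to picture how the trade-off can be adjusted at deployment time is through the conditioning signal. The sketch below assumes, in the style of return-conditioned decision transformers, that the policy is conditioned at each step on a target reward return and a remaining cost budget; the text above does not spell out this mechanism, so treat it as an illustration rather than the paper's implementation. The helper names (`to_go`, `conditioning_tokens`, `update_targets`) and the clamping of the budget at zero are hypothetical choices made for this example.

```python
from typing import List, Sequence, Tuple


def to_go(values: Sequence[float]) -> List[float]:
    """Return suffix sums: entry t is the sum of values[t:]."""
    out = [0.0] * len(values)
    running = 0.0
    # Walk backwards so each entry accumulates everything after it.
    for t in range(len(values) - 1, -1, -1):
        running += values[t]
        out[t] = running
    return out


def conditioning_tokens(
    rewards: Sequence[float], costs: Sequence[float]
) -> List[Tuple[float, float]]:
    """Pair reward-to-go with cost-to-go for every step of one offline trajectory."""
    return list(zip(to_go(rewards), to_go(costs)))


def update_targets(
    target_return: float, cost_budget: float, reward: float, cost: float
) -> Tuple[float, float]:
    """Shrink the deployment-time targets after observing one environment step.

    Clamping the budget at zero is an assumption of this sketch.
    """
    return target_return - reward, max(cost_budget - cost, 0.0)
```

Under this assumption, a user who wants more conservative behavior at deployment would start from a smaller `cost_budget`, and one who accepts more risk for higher reward would raise it, without retraining the model.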

GitHub Troddenspade Decision Transformer On Offline Reinforcement

Interesting approach: combining the constrained decision transformer (CDT) with constrained penalized Q-learning (CPQ) is not in itself especially novel, but it addresses key shortcomings in safe offline reinforcement learning by enhancing trajectory stitching while maintaining safety. The paper presents a constrained decision transformer that leverages multi-objective optimization to dynamically balance safety and performance in offline RL tasks.

Offline Inverse Constrained Reinforcement Learning For Safe Critical

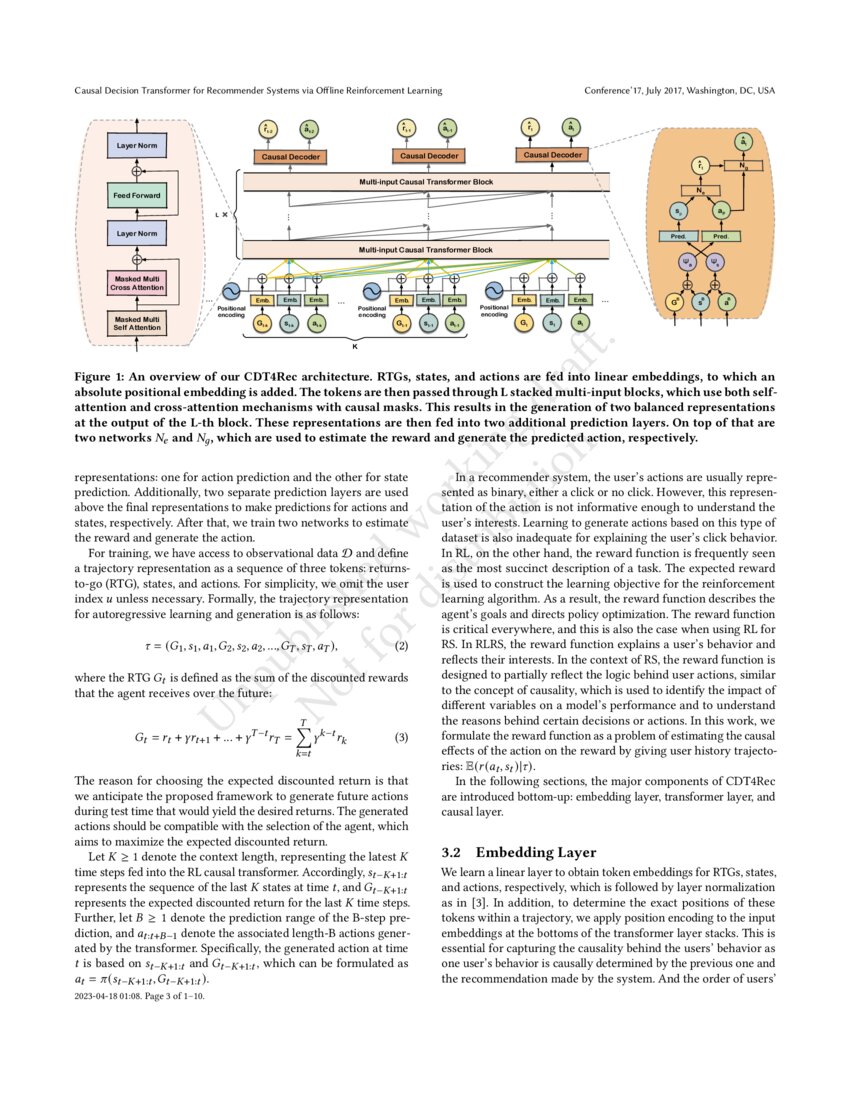

Causal Decision Transformer For Recommender Systems Via Offline
