Conceptualizing Next Generation Memory & Storage Optimized for AI Inference

AI Inference Memory System Tradeoffs

Conceptualizing Next Generation Memory & Storage Optimized for AI Inference: "Good afternoon. My name is Adnan Jam, lead of next generation memory and storage product."

Powered by the NVIDIA BlueField-4 processor, NVIDIA CMX establishes an optimized context memory tier that augments existing networked storage tiers by holding latency-sensitive, reusable inference context and prestaging it to increase GPU utilization.
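The idea of a context memory tier that holds reusable inference context and prestages it can be illustrated with a toy two-tier cache. This is only a sketch under stated assumptions: the class `TieredContextCache`, its methods, and the LRU eviction policy are hypothetical and are not NVIDIA CMX's API or design; plain Python dictionaries stand in for the fast tier and the networked storage tier.

```python
from collections import OrderedDict


class TieredContextCache:
    """Toy two-tier store (hypothetical): a small fast tier over a large slow tier."""

    def __init__(self, fast_capacity: int):
        if fast_capacity < 1:
            raise ValueError("fast_capacity must be at least 1")
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # stands in for the latency-sensitive context tier
        self.slow = {}             # stands in for networked storage

    def put(self, key, context):
        # New context lands in the slow tier; it is promoted only when prestaged.
        self.slow[key] = context

    def prestage(self, key) -> bool:
        """Copy a context into the fast tier before the request that needs it arrives."""
        if key in self.fast:
            self.fast.move_to_end(key)  # refresh recency
            return True
        if key not in self.slow:
            return False
        self.fast[key] = self.slow[key]
        if len(self.fast) > self.fast_capacity:
            self.fast.popitem(last=False)  # evict the least recently used entry
        return True

    def get(self, key):
        """Return (context, tier); tier is 'fast', 'slow', or 'miss'."""
        if key in self.fast:
            self.fast.move_to_end(key)
            return self.fast[key], "fast"
        if key in self.slow:
            return self.slow[key], "slow"
        return None, "miss"


cache = TieredContextCache(fast_capacity=2)
cache.put("session-a", "kv-blocks-a")
before = cache.get("session-a")   # served from the slow tier
cache.prestage("session-a")
after = cache.get("session-a")    # served from the fast tier
```

In this sketch a request that arrives after `prestage` is served from the fast tier, which is the mechanism by which prestaging would keep an accelerator from waiting on slower storage.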

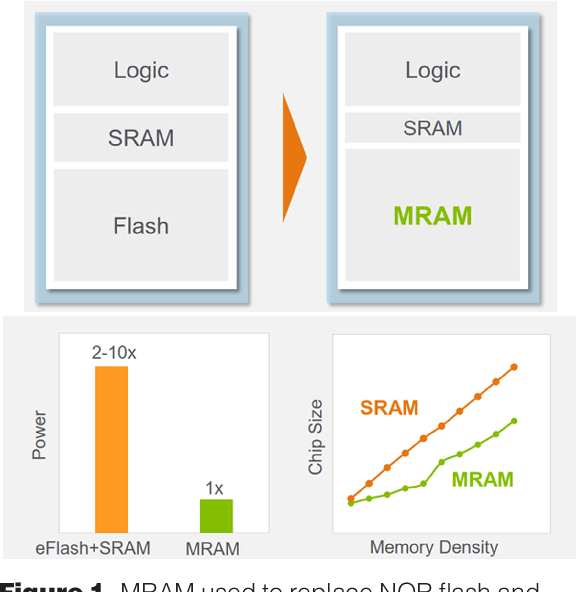

Figure 1 from AI Inference and Storage (Semantic Scholar)

Explore next generation memory and storage concepts optimized for AI inference, including processing-in-memory (PIM) technology and novel architectures for LLMs.

Can GPUs sustain enough parallelism to hide storage/memory latency and provide a tiered memory/storage pool for applications? Software and storage are the new bottleneck: GPUs and emerging workloads have enough parallelism to issue that many requests in flight [1], but the software stack and SSDs can't keep up.

[1] BaM: arxiv.org/abs/2203.04910

Simulations demonstrate that FengHuang achieves memory capacity reduction, 50% GPU compute savings, and 16× to 70× faster inter-GPU communication compared to conventional GPU scaling.
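The question of whether GPU parallelism can hide storage latency can be made concrete with Little's law, which states that the average number of requests in flight equals throughput multiplied by latency. The sketch below is illustrative only: the function name and the example figures (10 million reads per second, 100 µs latency) are assumptions chosen for the arithmetic, not numbers from the talk or from the BaM paper.

```python
def requests_in_flight(target_iops: int, latency_us: int) -> int:
    """Little's law: concurrency = throughput x latency.

    Integer arithmetic with latency in microseconds avoids
    floating-point rounding; the result is rounded up.
    """
    return (target_iops * latency_us + 999_999) // 1_000_000


# Hypothetical example values, not measurements.
target_iops = 10_000_000  # desired random reads per second
latency_us = 100          # assumed per-request storage latency in microseconds

needed = requests_in_flight(target_iops, latency_us)
print(needed)  # 1000
```

Under these assumed values, about 1,000 requests must be outstanding at all times to sustain the target rate, which is why the degree of parallelism the software stack and SSDs can accept matters as much as the parallelism the GPU can generate.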

Storage and Memory Enable Next Generation AI at the 2025 NVIDIA GTC

At the 2025 OCP Global Summit, SK hynix showcased its full-stack AI memory portfolio, including HBM4, AiM, DRAM, and eSSD products. Meanwhile, "Thomas" Wonha Choi of Next-Gen Memory & Storage presented a talk titled "Conceptualizing Next Generation Memory & Storage Optimized for AI Inference." His session proposed directions to meet performance and power needs in line with new market conditions and customer demand.

With the selection of the right interfaces and semantics, we look forward to continuing to conceptualize memory and storage concepts that can significantly improve energy efficiency (Conceptualizing Next Generation Memory & Storage Optimized for AI Inference, Open Compute Project).

VAST AI OS on NVIDIA BlueField-4 DPUs combines storage tiers for a shared KV cache, enabling reliable access for complex AI inference tasks.

