Computer System Architecture Cache Memory

Cache Memory: Definition, Types, Benefits

Cache memory is much faster than main memory (RAM). When the CPU needs data, it first checks the cache. If the data is there (a cache hit), the CPU can access it quickly. If not (a cache miss), it must fetch the data from the slower main memory. Cache is an extremely fast type of memory that acts as a buffer between RAM and the CPU. An N-way set-associative cache is like having N direct-mapped caches operating in parallel.
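The hit/miss check and the "N direct-mapped caches in parallel" picture can be sketched as a toy simulator. This is a minimal illustration, not a model of any real processor: the class name `SetAssociativeCache`, its parameters, the address trace, and the choice of least-recently-used (LRU) replacement are all assumptions made for the example. Only tags are tracked, not the cached data.

```python
from collections import OrderedDict


class SetAssociativeCache:
    """Toy N-way set-associative cache that tracks tags only (hypothetical)."""

    def __init__(self, num_sets, ways, block_size):
        self.num_sets = num_sets
        self.ways = ways
        self.block_size = block_size
        # One OrderedDict per set; key order records recency (oldest first).
        self.sets = [OrderedDict() for _ in range(num_sets)]
        self.hits = 0
        self.misses = 0

    def access(self, address):
        """Return True on a hit; on a miss, load the block and return False."""
        block_number = address // self.block_size
        index = block_number % self.num_sets   # which set to look in
        tag = block_number // self.num_sets    # identifies the block within the set
        lines = self.sets[index]
        if tag in lines:
            lines.move_to_end(tag)             # mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        if len(lines) >= self.ways:
            lines.popitem(last=False)          # evict the LRU line (assumed policy)
        lines[tag] = True
        return False


# Hypothetical trace: every address maps to set 0, so the ways compete.
trace = [0, 4, 64, 0, 128, 64]

two_way = SetAssociativeCache(num_sets=4, ways=2, block_size=16)
two_way_results = [two_way.access(a) for a in trace]

direct_mapped = SetAssociativeCache(num_sets=4, ways=1, block_size=16)
direct_results = [direct_mapped.access(a) for a in trace]

print("2-way hits:", two_way.hits, "direct-mapped hits:", direct_mapped.hits)
```

Setting `ways=1` gives a direct-mapped cache. On this trace the two-way configuration keeps two conflicting blocks resident at once, which is why it records more hits than the direct-mapped one.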

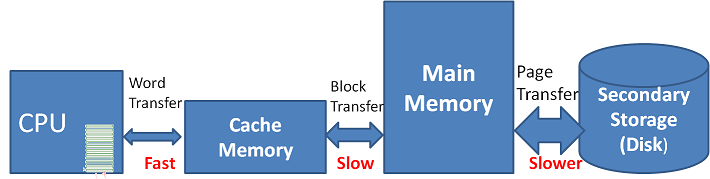

Cache Memory Computer Architecture

When virtual addresses are used, the system designer may choose to place the cache between the processor and the MMU or between the MMU and main memory. A logical cache (virtual cache) stores data using virtual addresses; the processor accesses the cache directly, without going through the MMU. A cache placed between the MMU and main memory is a physical cache, which stores data using physical addresses.

An efficient way to bridge the speed gap between processor and main memory is to use a fast cache memory, which essentially makes main memory appear faster to the processor than it really is. The cache is a smaller, faster memory that stores copies of the data from the most frequently used main memory locations.

Caches are everywhere in computer architecture; almost everything is a cache:

- Registers: a cache on variables (software managed)
- First-level cache: a cache on the second-level cache
- Second-level cache: a cache on memory
- Memory: a cache on disk (virtual memory)

This section explores fundamental concepts of cache memory, including basic terminology, cache controller responsibilities, and key performance factors. We'll examine how cache capacity, block size, associativity, and access time affect performance, and discuss strategies for optimizing cache design to balance speed, cost, and power consumption.
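To see how capacity, block size, and associativity interact, it can help to split an address into its tag, set index, and block offset. The sketch below assumes all three sizes are powers of two; the function name `split_address` and the 32 KiB / 64-byte / 8-way figures are hypothetical values chosen for illustration, not taken from any particular processor.

```python
def split_address(address, cache_bytes, block_bytes, ways):
    """Split an address into (tag, set_index, block_offset).

    Assumes cache_bytes, block_bytes, and ways are powers of two.
    """
    num_sets = cache_bytes // (block_bytes * ways)
    offset_bits = block_bytes.bit_length() - 1   # log2(block_bytes)
    index_bits = num_sets.bit_length() - 1       # log2(num_sets)
    offset = address & (block_bytes - 1)
    index = (address >> offset_bits) & (num_sets - 1)
    tag = address >> (offset_bits + index_bits)
    return tag, index, offset


address = 0x12345678  # hypothetical address

# 32 KiB cache with 64-byte blocks, at three associativities.
for ways in (1, 8, 512):  # direct mapped, 8-way, fully associative
    tag, index, offset = split_address(address, 32 * 1024, 64, ways)
    print(f"ways={ways:3d}  tag={tag:#x}  index={index}  offset={offset}")
```

With capacity and block size held fixed, raising associativity shrinks the number of sets, so fewer address bits select the set and more bits go into the tag. In the fully associative case there is a single set and the index is always 0.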

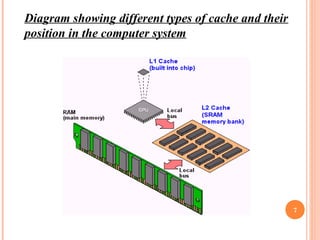

Computer Architecture Cache Memory Ppt

Cache memory is a small-capacity, fast-access memory that sits functionally between the CPU and main memory. It holds the subset of main memory contents that the CPU is most likely to need in the near future.

Cache hierarchy: computers normally have several layers of cache memory, called L1, L2, and L3. The L1 cache is the smallest and fastest, located closest to the CPU; the L2 and L3 caches are progressively larger and slower.

This guide explains cache memory concepts and looks at three types of cache structure: direct mapped, fully associative, and set associative. Processors need to access data that resides in memory, sometimes called main memory or RAM.

Faster access time: cache memory is designed to provide faster access to frequently accessed data. It stores a copy of frequently accessed data from main memory, allowing the CPU to retrieve it quickly. This reduces access latency and improves overall system performance.
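The effect of an L1/L2/L3 hierarchy on access latency is often summarized as the average memory access time (AMAT): each level contributes its hit time plus its miss rate times the cost of going one level further out. The sketch below applies that recurrence; the function name `average_access_time` and all cycle counts and miss rates are hypothetical numbers for illustration only.

```python
def average_access_time(levels, memory_latency):
    """Average memory access time for a cache hierarchy.

    levels: list of (hit_time, local_miss_rate), ordered from L1 outward.
    memory_latency: main memory access time, in the same units as hit_time.
    """
    amat = memory_latency
    # Work inward from the level closest to memory:
    # time(level) = hit_time + miss_rate * time(next level out)
    for hit_time, miss_rate in reversed(levels):
        amat = hit_time + miss_rate * amat
    return amat


# Hypothetical hierarchy, times in CPU cycles.
hierarchy = [
    (4, 0.10),   # L1: smallest and fastest
    (12, 0.40),  # L2: larger, slower (miss rate among accesses that missed L1)
    (40, 0.50),  # L3: largest, slowest cache
]
main_memory_cycles = 200

with_caches = average_access_time(hierarchy, main_memory_cycles)
without_caches = average_access_time([], main_memory_cycles)
print(f"with caches: {with_caches:.1f} cycles, without: {without_caches} cycles")
```

With these made-up figures the average access costs roughly 11 cycles instead of 200, which is the sense in which a cache makes main memory appear faster than it really is.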
