Codacy Guardrails Demo: Building a Secure Lightweight Java Server Using AI

Codacy Guardrails connects to your AI-assisted IDE (VS Code with Copilot, Cursor, Windsurf) and enforces code security and quality standards on each AI interaction while the code is being generated. Once your repository is connected to Codacy, you can go beyond traditional static analysis and start interacting with your codebase using natural language prompts.

This script automates the installation and configuration of Claude Code with Codacy integration, setting up a complete AI-powered code analysis workflow with guardrails for code quality and security.

Last month we teamed up with Microsoft to show how Codacy Guardrails helps Visual Studio Code agent mode produce secure code on every prompt. Codacy has released Guardrails, a new solution that secures AI-generated code directly in the IDE, so that vulnerabilities in code completions never reach Git. As AI coding assistants become increasingly embedded in software development workflows, teams face a new challenge: how to maintain trust in code that is generated at speed. With Guardrails, Codacy aims to set a new standard for GenAI code by making it secure from the source, at scale.

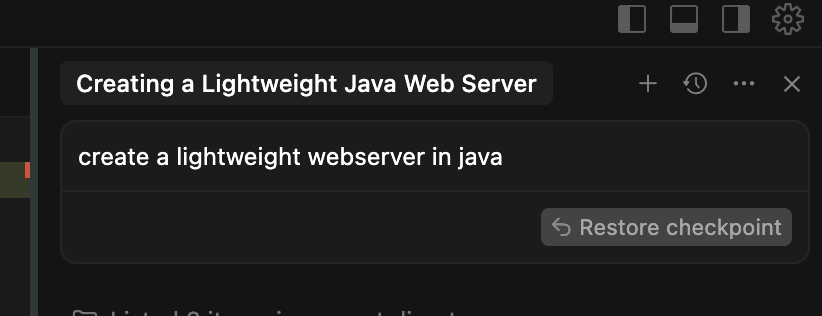

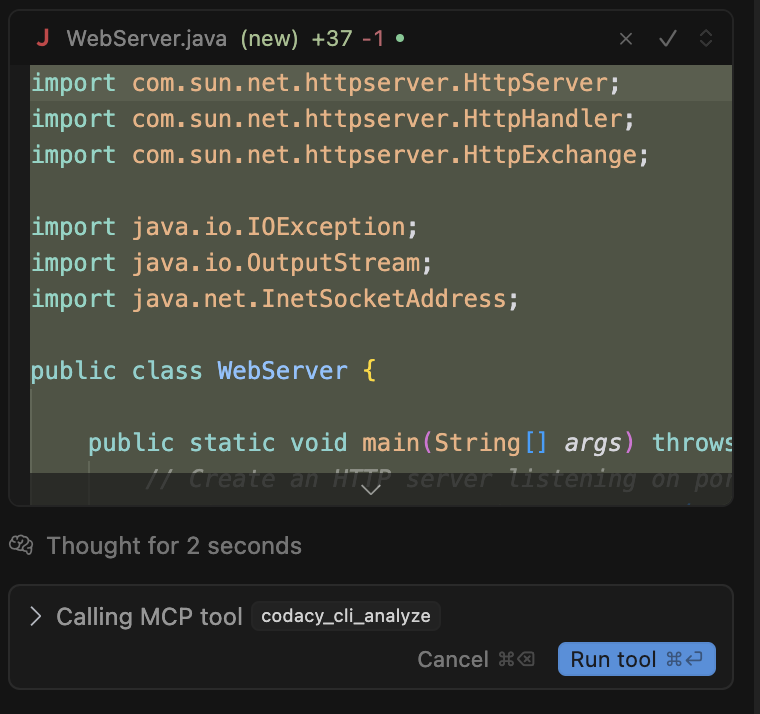

Guardrails helps developers ship safer, cleaner AI code by applying best practices and blocking insecure patterns while the code is being generated. Besides real-time AI code scanning, Guardrails users can now query all their Codacy findings through prompts, without leaving the AI chat panel inside their IDE. Codacy Guardrails pairs the Codacy MCP Server with the Codacy CLI, allowing your agents to write clean and secure code, fix issues, configure coding policies, and create quality and compliance reports, all from the chat panel.

Today, we're showcasing the first big milestone for Codacy Guardrails: the ability to fix security and quality issues as your AI generates code. AI-generated code isn't necessarily secure, compliant, or aligned with how your team writes software or how your company defines standards and quality. Define and enforce AI coding policies to catch AI-specific risks such as unapproved AI models, invisible prompt injections, and vulnerable libraries inherited from outdated training data. Track your security and compliance posture in real time, including SLA due dates and exportable SBOM reports.
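To make "blocking insecure patterns" concrete for a lightweight Java server, here is a minimal sketch of one pattern a security scanner would typically flag in a file-serving handler: building a file path directly from the request URL, which permits path traversal. This is not code from the Codacy demo; the class name `SafePaths` and the method `resolveInside` are hypothetical names chosen for illustration, and the sketch uses only the standard `java.nio.file` API.

```java
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Optional;

// Hypothetical helper (not part of Codacy or the demo): confines request
// paths to a base directory before a server handler reads any file.
public final class SafePaths {
    private SafePaths() {}

    /**
     * Resolves requestPath against baseDir and returns the result only if
     * the normalized path is still located inside baseDir.
     */
    public static Optional<Path> resolveInside(Path baseDir, String requestPath) {
        Path base = baseDir.toAbsolutePath().normalize();
        // Strip leading slashes so the request path is treated as relative.
        String relative = requestPath.replaceFirst("^/+", "");
        // normalize() collapses "." and ".." segments before the check.
        Path candidate = base.resolve(relative).normalize();
        // Path.startsWith compares whole path components, not raw prefixes.
        return candidate.startsWith(base) ? Optional.of(candidate) : Optional.empty();
    }

    public static void main(String[] args) {
        Path base = Paths.get("public");
        System.out.println(resolveInside(base, "/index.html").isPresent());     // prints true
        System.out.println(resolveInside(base, "/../secrets.txt").isPresent()); // prints false
    }
}
```

A handler would return a 404 (or 403) whenever the result is empty, instead of passing the raw request path to the file system. The check is lexical only, so a server that must also guard against symbolic links pointing outside the base directory would need an additional real-path comparison.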


