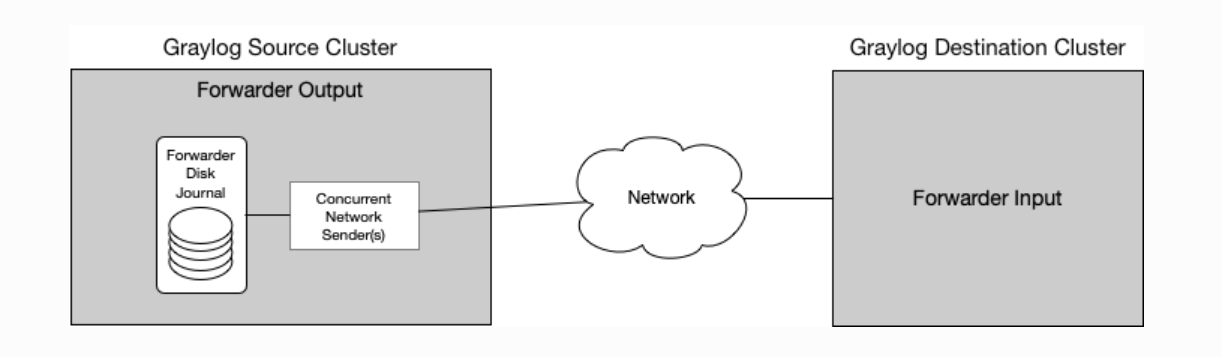

Cluster To Cluster Forwarder

The Cluster to Cluster Forwarder forwards messages from one Graylog cluster to another over HTTP/2. It collects messages from multiple distributed Graylog source clusters into one centralized destination cluster, which enables centralized alerting, reporting, and oversight.

To use forwarders to get data into clusters, you must perform two types of configuration: connect the forwarders to peer nodes, and configure the forwarders' data inputs. Before continuing, you must be familiar with forwarders and how to use them to get data into Splunk Enterprise.

You can use the Cluster Log Forwarder to send a copy of the application logs from specific projects to an external log aggregator. You can do this in addition to, or instead of, using the default Elasticsearch log store.

You can use a Kafka forwarder to forward messages between Kafka clusters that run different versions. However, you need to ensure that the Kafka client libraries are compatible with both the source and destination Kafka versions.

When using indexer discovery with multisite clustering, you can configure each forwarder to be site-aware, so that it forwards data to peer nodes only on a single specified site.

Log forwarding allows you to transmit log data from any number of sources to a centralized database cluster. You can do so by creating and managing log sinks for your database clusters via the control panel or the API. Kafka supports forwarding to OpenSearch, Elasticsearch, and rsyslog.
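As a rough illustration of the site-aware behaviour described above, the sketch below filters a list of discovered peers down to those on one site. It is a conceptual model only, not any product's configuration or API; the `Peer` type, the `peers_for_site` function, and the host and site names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Peer:
    """A discovered peer node (hypothetical model, not a real API)."""
    host: str
    site: str


def peers_for_site(peers, site):
    """Return only the peers that belong to the given site, in order."""
    return [p for p in peers if p.site == site]


# Hypothetical peers, as a discovery step might report them.
peers = [
    Peer("idx1.example:9997", "site1"),
    Peer("idx2.example:9997", "site2"),
    Peer("idx3.example:9997", "site1"),
]

# A forwarder assigned to site1 would send data only to these peers.
targets = peers_for_site(peers, "site1")
for peer in targets:
    print(peer.host)
```

A forwarder that is not site-aware would skip the filter and use the full peer list.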

I am aware of mirroring and streaming, but neither helps when forwarding data from one Kafka cluster to another when their topic names differ. MirrorMaker can only be used between two clusters, so you'd have to chain A -> B -> C.

We have a search head cluster and an indexer cluster in our current Splunk environment. Previously, data reached the indexer from multiple forwarders that were configured with the indexer's endpoint. Now that we have a multi-indexer architecture, we need a common point for the forwarders to send data to.

As an administrator of a Red Hat managed cluster, I want to use RBAC to keep my log forwarder configuration separate from customer admins, so they can take ownership of their log forwarder needs without being able to modify mine.

This step registers a cluster's credentials with Argo CD and is only necessary when deploying to an external cluster. When deploying internally (to the same cluster that Argo CD is running in), kubernetes.default.svc should be used as the application's Kubernetes API server address. First, list all cluster contexts in your current kubeconfig.
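For the Kafka case mentioned above, where topic names differ between the source and destination clusters, one possible approach is a small forwarder that renames topics as it relays records. The sketch below shows only the mapping logic, using in-memory stand-ins instead of a real consumer and producer. `TOPIC_MAP`, `forward`, `send_to_destination`, and the topic names are assumptions made for illustration, not part of Kafka or MirrorMaker.

```python
from collections import defaultdict

# Hypothetical mapping from source-cluster topics to destination-cluster topics.
TOPIC_MAP = {
    "orders.v1": "central.orders",
    "audit": "central.audit",
}


def forward(records, topic_map, send):
    """Relay (topic, key, value) records, renaming topics via topic_map.

    Records whose topic has no mapping are skipped.
    Returns the number of records forwarded.
    """
    forwarded = 0
    for topic, key, value in records:
        dest_topic = topic_map.get(topic)
        if dest_topic is None:
            continue  # no mapping: this topic is not forwarded
        send(dest_topic, key, value)
        forwarded += 1
    return forwarded


# In-memory stand-in for the destination cluster.
destination = defaultdict(list)


def send_to_destination(topic, key, value):
    destination[topic].append((key, value))


# In-memory stand-in for records consumed from the source cluster.
source_records = [
    ("orders.v1", b"k1", b"created"),
    ("metrics", b"k2", b"ignored"),
    ("audit", b"k3", b"login"),
]

count = forward(source_records, TOPIC_MAP, send_to_destination)
print(count, dict(destination))
```

In a real deployment, `source_records` would come from a consumer attached to the source cluster and `send` would wrap a producer for the destination cluster. Delivery guarantees, offsets, and error handling are left out of this sketch.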
