Classical Techniques 4 Ensemble Learning And Random Forests

Ensemble Learning And Random Forests

This is the fourth and final part of the classical techniques in machine learning: ensemble learning and random forests. A random forest is a machine learning algorithm that uses many decision trees to make better predictions. Each tree looks at a different random part of the data, and the trees' results are combined by voting for classification or by averaging for regression, which makes it an ensemble learning technique.
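
The combination step described above can be sketched in a few lines. This is only an illustration, assuming each tree has already produced a prediction; the helper names `majority_vote` and `average_prediction` are hypothetical and do not come from any library.

```python
from collections import Counter
from statistics import mean


def majority_vote(tree_predictions):
    """Classification: return the class label predicted by the most trees."""
    # most_common(1) returns [(label, count)]; on a tie, the label that
    # appeared first in the list wins.
    return Counter(tree_predictions).most_common(1)[0][0]


def average_prediction(tree_predictions):
    """Regression: return the mean of the trees' numeric predictions."""
    return mean(tree_predictions)


# Hypothetical outputs of five classification trees for one sample.
print(majority_vote(["spam", "ham", "spam", "spam", "ham"]))  # spam

# Hypothetical outputs of three regression trees for one sample.
print(average_prediction([2.0, 3.0, 4.0]))  # 3.0
```

Real implementations may instead average predicted class probabilities rather than count hard votes; the sketch shows only the simplest form of each rule.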

Ensemble Learning Techniques: A Walkthrough With Random Forests

This document explores three popular ensemble techniques: bagging, boosting, and random forests. These methods are widely used for reducing variance, improving accuracy, and limiting overfitting in predictive models. A random forest is an ensemble of decision trees, generally trained via the bagging method (or sometimes pasting), typically with `max_samples` set to the size of the training set.

Ensemble methods combine the predictions of several base estimators built with a given learning algorithm in order to improve generalizability and robustness over a single estimator. Two very famous examples of ensemble methods are gradient-boosted trees and random forests.

The solution is to learn multiple trees. That raises two questions: how do we ensure they don't all just learn the same thing, and what about cross-validation? With bagging, each tree is identically distributed (i.d., not i.i.d.), so bagged trees are correlated. The remaining question is how to decorrelate the trees generated for bagging.
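
To make the difference between bagging and pasting concrete, here is a minimal sketch of how each tree's training subset could be drawn. It uses only the Python standard library; the helper names `bagging_sample` and `pasting_sample` are hypothetical, and the ten-item "training set" is a stand-in for real rows.

```python
import random


def bagging_sample(data, n_samples, rng):
    """Bagging: draw n_samples items WITH replacement (a bootstrap sample)."""
    return [rng.choice(data) for _ in range(n_samples)]


def pasting_sample(data, n_samples, rng):
    """Pasting: draw n_samples items WITHOUT replacement."""
    return rng.sample(data, n_samples)


rng = random.Random(0)          # fixed seed so runs are repeatable
training_set = list(range(10))  # stand-in for ten training rows

# Bootstrap sample the same size as the training set: some rows repeat,
# others are left out entirely.
bag = bagging_sample(training_set, len(training_set), rng)

# Rows that never made it into this tree's bootstrap sample.
out_of_bag = sorted(set(training_set) - set(bag))

# Pasting a smaller subset: every drawn row is distinct.
paste = pasting_sample(training_set, 5, rng)

print("bagging sample:", bag)
print("out-of-bag rows:", out_of_bag)
print("pasting sample:", paste)
```

Because every tree sees a different sample, the trees differ from one another, but they are still drawn from the same data and so remain correlated, which is the issue raised above.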

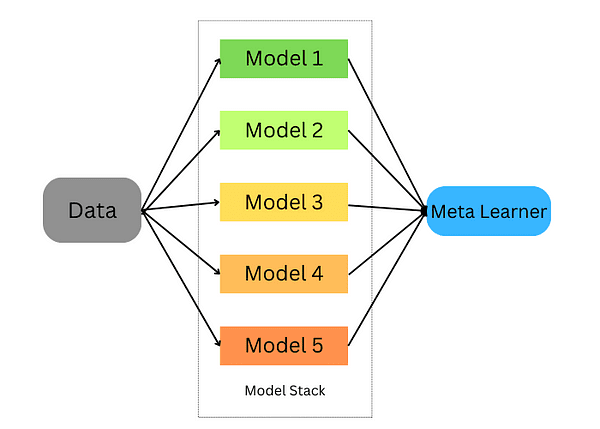

Exploring Random Forests: A Versatile Ensemble Learning Algorithm

We then look at random forests, an ensemble method for decision trees that takes advantage of bagging and the subspace sampling method to create a "forest" of decision trees that typically generalizes better than any single decision tree.

What is a random forest classifier? A random forest classifier is an ensemble of decision trees, generally trained with the bagging method. How does the random forest algorithm introduce extra randomness when growing trees? Instead of searching for the best feature among all features when splitting a node, it searches for the best feature among a random subset of features.

Ensemble learning and random forests, concepts and code: today marks days 15 and 16 of my public ML learning journey. The document discusses various ensemble techniques in machine learning, including bagging, boosting, stacking, and random forests. Ensemble methods combine multiple learning models to improve overall predictive performance.
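
The extra randomness that subspace sampling adds when growing trees can be sketched as follows: at each split, only a random subset of the features is considered as candidates. This is an illustration under stated assumptions, not any library's implementation; `candidate_features` is a hypothetical helper, and the default of the square root of the feature count is a common heuristic for classification rather than a fixed rule.

```python
import math
import random


def candidate_features(n_features, rng, max_features=None):
    """Return the feature indices one split is allowed to consider."""
    if max_features is None:
        # Assumption: sqrt(n_features) is a common default for classification.
        max_features = max(1, int(math.sqrt(n_features)))
    # Sample distinct feature indices without replacement.
    return sorted(rng.sample(range(n_features), max_features))


rng = random.Random(42)  # fixed seed so runs are repeatable

# Each split draws its own subset, so different trees (and different nodes
# within a tree) end up splitting on different features.
for split_number in range(3):
    print(f"split {split_number}: features {candidate_features(16, rng)}")
```

Restricting each split to a few features keeps one dominant feature from appearing at the top of every tree, which is one way to reduce the correlation between bagged trees.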
