Channel Coding Theorem

Shannon's theorem states that, given a noisy channel with channel capacity $C$ and information transmitted at a rate $R$, if $R < C$ then there exist codes that allow the probability of error at the receiver to be made arbitrarily small. For the binary symmetric channel, Shannon's theorem says precisely what the capacity is: $C = 1 - H(p)$, where $p$ is the crossover (bit-flip) probability and $H(p)$ is the binary entropy function, i.e., $H(p) = -p \log_2 p - (1 - p) \log_2 (1 - p)$.
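
The binary symmetric channel capacity formula can be checked numerically. The following is a minimal Python sketch; the function names `binary_entropy` and `bsc_capacity` are illustrative and do not come from any library.

```python
import math


def binary_entropy(p: float) -> float:
    """H(p) = -p log2 p - (1 - p) log2(1 - p), using the convention 0 log2 0 = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)


def bsc_capacity(p: float) -> float:
    """Capacity C = 1 - H(p) of a binary symmetric channel, in bits per channel use."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("crossover probability must lie in [0, 1]")
    return 1.0 - binary_entropy(p)


if __name__ == "__main__":
    for p in (0.0, 0.01, 0.11, 0.5):
        print(f"p = {p:<5} C = {bsc_capacity(p):.4f} bits/use")
```

At $p = 0.5$ the output is independent of the input and the capacity drops to zero. By symmetry, $p$ and $1 - p$ give the same capacity, since the receiver can simply invert every bit.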

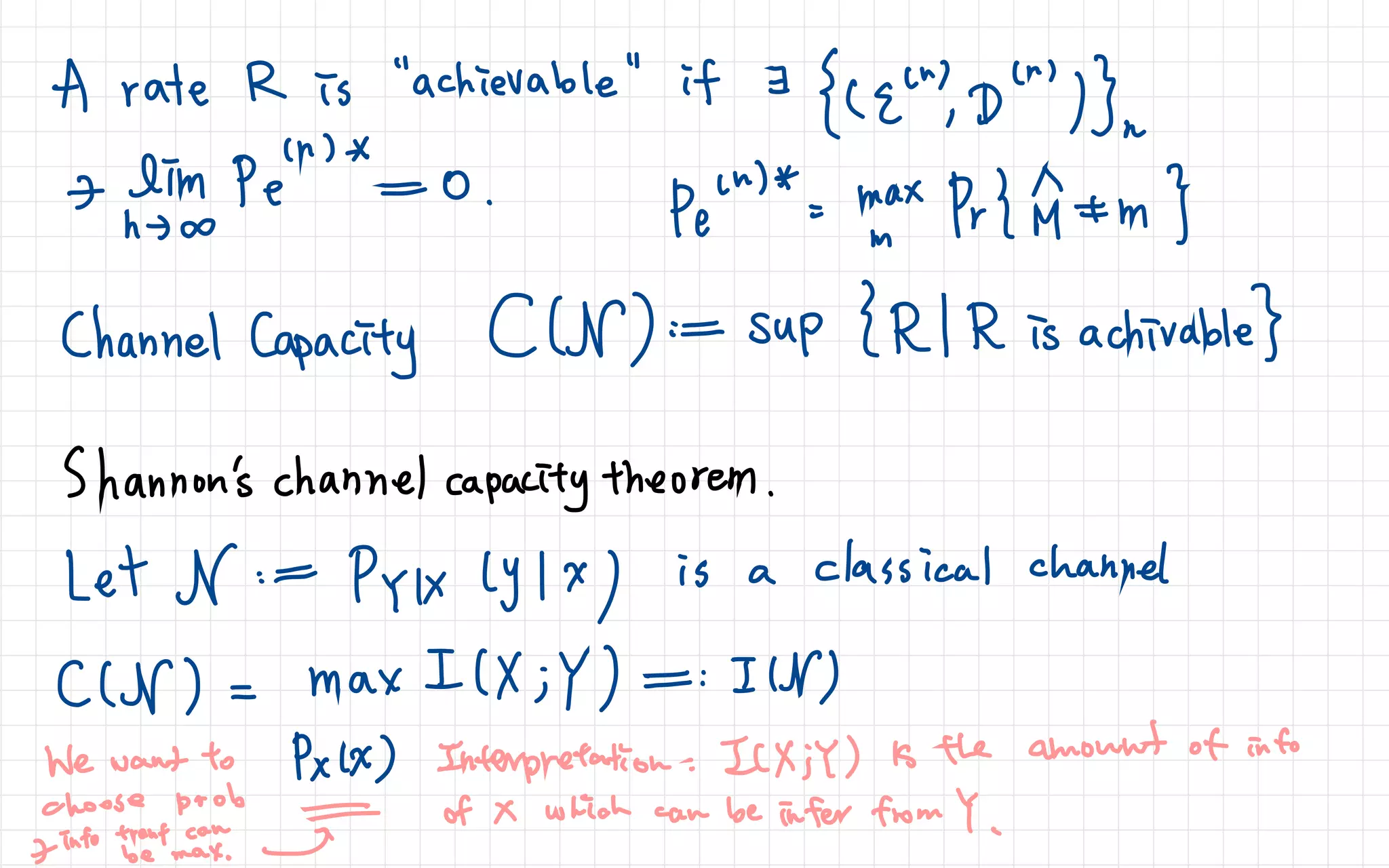

Shannon's channel coding theorem states that every channel has a fixed capacity $C$, and that reliable communication is possible at any rate below $C$. Binary symmetric channels and random binary linear codes are standard examples and applications. The noise present in a channel creates unwanted errors between the input and output sequences of a digital communication system; for reliable communication, the error probability should be very low, typically $10^{-6}$ or less.

For any discrete memoryless channel, the theorem establishes that the capacity is the maximum of the mutual information between input and output over all possible input distributions, $C = \max_{p(x)} I(X;Y)$.

In this lecture, we continue our discussion of channel coding theory. In the previous lecture, we proved the direct part of the theorem: if $R < C^{(I)}$, where $C^{(I)} = \max_{p(x)} I(X;Y)$ is the information capacity, then $R$ is achievable. Now we prove the converse: if $R > C^{(I)}$, then $R$ is not achievable.
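
The maximization over input distributions rarely has a closed form beyond symmetric channels. One standard numerical method is the Blahut-Arimoto algorithm, which is not described in the lecture itself and is used here only for illustration. The sketch below assumes the channel is given as a row-stochastic matrix `W[x][y] = P(y|x)`. All names (`blahut_arimoto`, `mutual_information`, `output_distribution`) are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Matrix = Sequence[Sequence[float]]  # W[x][y] = P(Y = y | X = x); each row sums to 1


def output_distribution(p_x: Sequence[float], W: Matrix) -> List[float]:
    """q(y) = sum_x p(x) W(y|x)."""
    return [sum(p_x[x] * W[x][y] for x in range(len(W))) for y in range(len(W[0]))]


def mutual_information(p_x: Sequence[float], W: Matrix) -> float:
    """I(X;Y) in bits; terms with p(x) = 0 or W(y|x) = 0 contribute nothing."""
    q = output_distribution(p_x, W)
    return sum(
        p_x[x] * W[x][y] * math.log2(W[x][y] / q[y])
        for x in range(len(W))
        for y in range(len(W[0]))
        if p_x[x] > 0 and W[x][y] > 0
    )


def blahut_arimoto(W: Matrix, tol: float = 1e-9, max_iter: int = 10_000) -> Tuple[float, List[float]]:
    """Approximate C = max_{p(x)} I(X;Y) for a discrete memoryless channel.

    Returns a lower bound on C (within tol of C on convergence) and the input distribution.
    """
    n_in = len(W)
    p_x = [1.0 / n_in] * n_in  # start from the uniform input distribution
    lower = 0.0
    for _ in range(max_iter):
        q = output_distribution(p_x, W)
        # d[x] = D(W[x] || q): relative entropy between row x and the current output law.
        # q[y] > 0 whenever W[x][y] > 0, because every p_x[x] stays strictly positive.
        d = [
            sum(w * math.log2(w / q[y]) for y, w in enumerate(W[x]) if w > 0)
            for x in range(n_in)
        ]
        z = sum(p_x[x] * 2.0 ** d[x] for x in range(n_in))
        lower, upper = math.log2(z), max(d)  # lower <= C <= upper
        if upper - lower < tol:
            break
        p_x = [p_x[x] * 2.0 ** d[x] / z for x in range(n_in)]  # multiplicative update
    return lower, p_x


if __name__ == "__main__":
    z_channel = [[1.0, 0.0], [0.5, 0.5]]  # input 1 is received as 0 with probability 1/2
    cap, p_opt = blahut_arimoto(z_channel)
    print(f"Z-channel: C ~ {cap:.4f} bits/use, p(x) ~ {[round(v, 3) for v in p_opt]}")
```

The loop keeps the bounds $\log_2 \sum_x p(x) 2^{D(x)} \le C \le \max_x D(x)$ and stops when they agree to within `tol`. For the Z-channel in the example, the capacity is $\log_2 1.25 \approx 0.322$ bits per use, achieved with $P(X = 1) = 0.4$.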

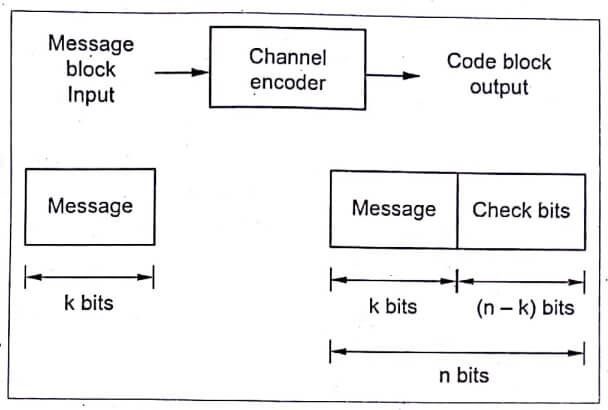

The input to the channel encoder is the binary sequence at the output of a source encoder, or the output of a source directly. The channel encoder introduces systematic redundancy into the data stream by adding bits to the message bits in a way that facilitates the detection and/or correction of bit errors. The channel decoder observes the received sequence and decides which of the $2^k$ possible binary message sequences of length $k$ was transmitted.

Lecture 14 of Dr. Yao Xie's ECE587 Information Theory course (Duke University) outlines the proof of the channel coding theorem. Achievability: when $R < C$, there exist codes whose probability of error tends to zero. Converse: any sequence of codes whose probability of error tends to zero must have $R \le C$. The direct part of the theorem is proved using the random coding method and joint typicality; the lecture provides the definitions, lemmas, and examples.

The channel coding theorem thus states that a given communication channel has a maximum rate, the channel capacity $C$, at which information can be transmitted with an arbitrarily low probability of error.
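
The encoder/decoder description can be made concrete with a small sketch. It uses a (7,4) Hamming code in systematic form, chosen for illustration rather than taken from the source. The encoder appends 3 parity bits to each $k = 4$-bit message, and the decoder picks, among all $2^k = 16$ candidate messages, the one whose codeword is nearest in Hamming distance to the received word. The names `encode`, `decode`, `bsc`, `CODEBOOK`, and the parity matrix `P` are hypothetical.

```python
import itertools
import random
from typing import Tuple

K, N = 4, 7  # message length k and codeword length n (rate k/n = 4/7)

# Parity part of a systematic generator matrix G = [I_4 | P] (hypothetical choice).
# Its rows are the four 3-bit vectors of weight >= 2, so the columns of the parity-check
# matrix H = [P^T | I_3] are the 7 distinct nonzero 3-bit vectors: a Hamming code, d_min = 3.
P = [
    [1, 1, 0],
    [1, 0, 1],
    [0, 1, 1],
    [1, 1, 1],
]


def encode(msg: Tuple[int, ...]) -> Tuple[int, ...]:
    """Systematic encoding: the message bits followed by N - K parity bits (mod-2 sums)."""
    parity = tuple(sum(m * P[i][j] for i, m in enumerate(msg)) % 2 for j in range(N - K))
    return tuple(msg) + parity


# Codebook: every one of the 2^K messages mapped to its codeword.
CODEBOOK = {msg: encode(msg) for msg in itertools.product((0, 1), repeat=K)}


def decode(received: Tuple[int, ...]) -> Tuple[int, ...]:
    """Decide which of the 2^K messages was sent by exhaustive minimum-distance search
    (maximum-likelihood decoding on a BSC with crossover probability below 1/2)."""
    return min(CODEBOOK, key=lambda m: sum(a != b for a, b in zip(CODEBOOK[m], received)))


def bsc(bits: Tuple[int, ...], p: float, rng: random.Random) -> Tuple[int, ...]:
    """Binary symmetric channel: flip each bit independently with probability p."""
    return tuple(b ^ (rng.random() < p) for b in bits)


if __name__ == "__main__":
    rng = random.Random(0)
    p, trials, block_errors = 0.01, 10_000, 0
    for _ in range(trials):
        msg = tuple(rng.randint(0, 1) for _ in range(K))
        if decode(bsc(CODEBOOK[msg], p, rng)) != msg:
            block_errors += 1
    print(f"rate {K}/{N}, block error rate ~ {block_errors / trials:.4f} at p = {p}")
```

This fixed short code corrects any single bit error per block, but it only illustrates structured redundancy and does not approach capacity. The achievability proof instead uses randomly generated codebooks with block length $n \to \infty$ and joint-typicality decoding.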
