CDC for Delta Live Tables: A Game Changer for Real-Time Data Pipelines

This guide demonstrates how to use change data capture (CDC) in Delta Live Tables (DLT) pipelines to identify new records and capture changes made to the dataset in your data lake. I implement CDC using Databricks’ Delta Live Tables, a declarative framework designed to simplify complex data engineering workflows.

A simple example is tracking changes to a customer table and propagating them to an order table using Python. Handling change data capture (CDC) in Delta Live Tables with `APPLY CHANGES` (`dlt.apply_changes()` in the Python API) offers a scalable, declarative approach to building real-time, auditable, history-aware data pipelines.

Implementing CDC with DLT for real-time data integration and analytics comes down to capturing the changes in the source data and applying them to the target tables in real time. A Delta CDC pipeline captures changes from source systems and writes them to Delta Lake tables. This keeps downstream tables synchronized with the source, can preserve the complete change history (for example, by storing the target as SCD type 2), and provides ACID transaction guarantees.
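To make the `APPLY CHANGES` semantics concrete without a Databricks workspace, here is a plain-Python model of SCD type 1 processing, the default mode. It is not the DLT API: in a real pipeline you would call `dlt.apply_changes()` with its `keys`, `sequence_by`, and `apply_as_deletes` arguments instead. The `ChangeEvent` record, its `operation` labels, and the `event_ts` ordering column are hypothetical. The sketch shows two behaviors: rows are upserted by key, and a late-arriving change with an older sequence value is ignored, even after the key has been deleted.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class ChangeEvent:
    """A hypothetical CDC record for a customer table."""
    customer_id: int        # primary key (the `keys` column in DLT terms)
    operation: str          # "INSERT", "UPDATE" or "DELETE" (assumed labels)
    event_ts: int           # ordering value (the `sequence_by` column in DLT terms)
    data: dict[str, Any]    # column values after the change


class Scd1Table:
    """Plain-Python model of an SCD type 1 target: only the latest state per key."""

    def __init__(self) -> None:
        self.rows: dict[int, dict[str, Any]] = {}   # current rows; deleted keys are absent
        self._applied_seq: dict[int, int] = {}      # latest sequence applied per key, kept after deletes

    def apply(self, events: list[ChangeEvent]) -> None:
        for ev in events:
            last = self._applied_seq.get(ev.customer_id)
            if last is not None and ev.event_ts <= last:
                continue  # stale or duplicate change: a newer one was already applied
            self._applied_seq[ev.customer_id] = ev.event_ts
            if ev.operation == "DELETE":
                self.rows.pop(ev.customer_id, None)
            else:
                self.rows[ev.customer_id] = dict(ev.data)  # upsert: insert or overwrite


events = [
    ChangeEvent(1, "INSERT", 1, {"name": "Ada", "city": "London"}),
    ChangeEvent(2, "INSERT", 2, {"name": "Alan", "city": "Wilmslow"}),
    ChangeEvent(1, "UPDATE", 5, {"name": "Ada", "city": "Paris"}),
    ChangeEvent(1, "UPDATE", 3, {"name": "Ada", "city": "Berlin"}),  # arrives late, older sequence
    ChangeEvent(2, "DELETE", 4, {}),
]
table = Scd1Table()
table.apply(events)
print(table.rows)  # {1: {'name': 'Ada', 'city': 'Paris'}}
```

Remembering the sequence value of deleted keys is what stops an older `UPDATE` from resurrecting a deleted row. DLT handles the same problem internally, so treat this as an illustration of the behavior rather than of how DLT implements it.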

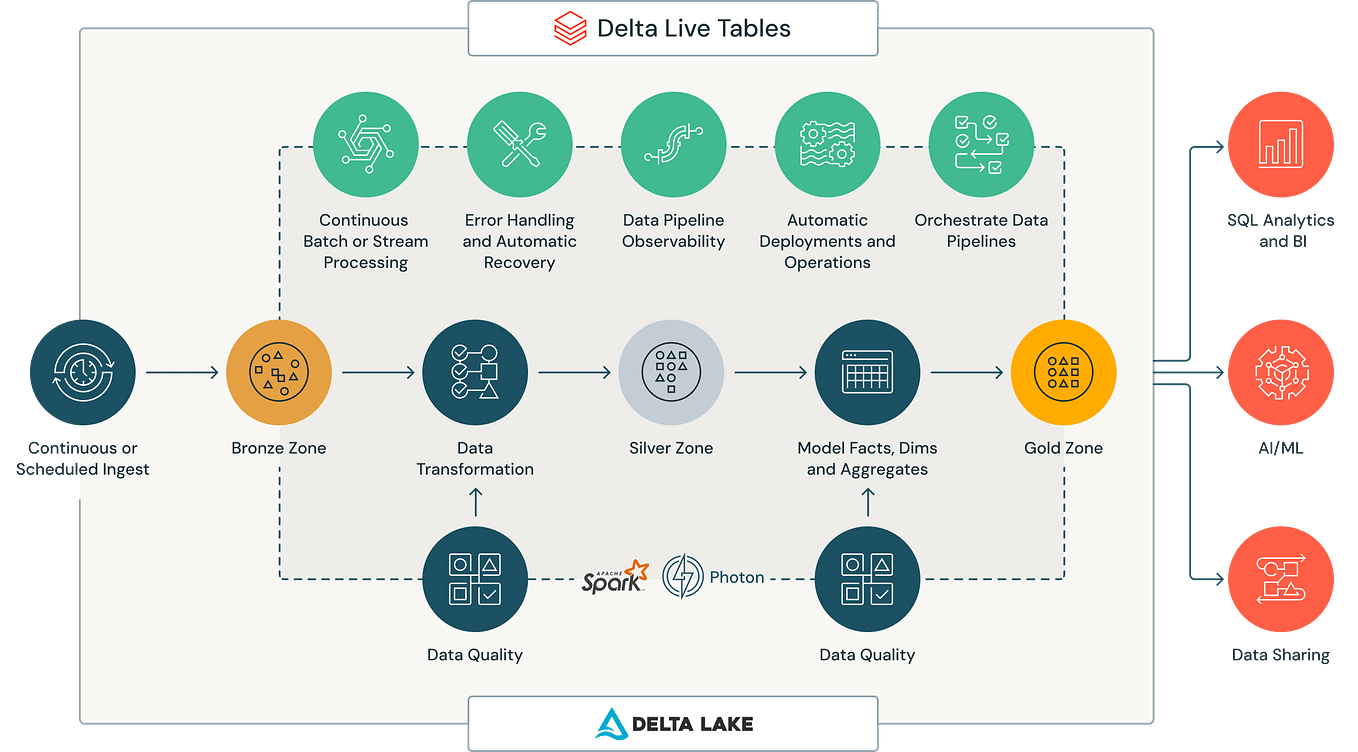
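Preserving the complete change history corresponds to storing the target as SCD type 2 (`stored_as_scd_type=2` in `dlt.apply_changes()`): each change closes the current version of a row and opens a new one, and DLT records each version's validity range in `__START_AT` and `__END_AT` columns. The plain-Python sketch below models that idea. The `Version` class and the tuple event format are hypothetical, and unlike DLT it only orders events within a single batch; it does not handle late arrivals across batches.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class Version:
    """One row version in a hypothetical SCD type 2 history table."""
    key: int
    data: dict[str, Any]
    start_at: int                 # sequence value that opened this version (like __START_AT)
    end_at: Optional[int] = None  # sequence value that closed it; None = current (like __END_AT)


def apply_changes_scd2(history: list[Version], events: list[tuple]) -> list[Version]:
    """Apply (key, operation, seq, data) events to `history` as SCD type 2.

    Events are sorted by seq within this batch only; late arrivals across
    batches are not handled by this sketch.
    """
    current = {v.key: v for v in history if v.end_at is None}
    for key, op, seq, data in sorted(events, key=lambda e: e[2]):
        open_version = current.pop(key, None)
        if open_version is not None:
            open_version.end_at = seq  # close the previous version instead of overwriting it
        if op != "DELETE":
            new_version = Version(key, dict(data), start_at=seq)
            history.append(new_version)
            current[key] = new_version
    return history


history: list[Version] = []
apply_changes_scd2(history, [
    (1, "INSERT", 1, {"city": "London"}),
    (1, "UPDATE", 5, {"city": "Paris"}),
    (2, "INSERT", 2, {"city": "Wilmslow"}),
    (2, "DELETE", 4, {}),
])
for v in history:
    print(v.key, v.data["city"], v.start_at, v.end_at)
# 1 London 1 5
# 2 Wilmslow 2 4
# 1 Paris 5 None
```

A delete closes the open version without opening a new one, so the deleted customer's history stays queryable while no current row remains.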

This project implements a Databricks Delta Live Tables (DLT) pipeline to ingest, transform, and serve data for analytics. It follows a medallion architecture with bronze → silver → gold layers, which supports data quality, lineage, and scalability. The pipeline is built step by step, from the basics to advanced features including data quality, orchestration, error handling, and CDC.

The focus here is on updating tables in your pipelines based on changes in source data. To record and query row-level change information for Delta tables instead, see "Use Delta Lake change data feed on Azure Databricks". I also describe how to load data from recurring full snapshots into a bronze table with Delta Live Tables, relatively simply and elegantly, without the amount of stored data exploding.
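One way to keep a bronze table from growing by a full copy with every snapshot (not necessarily the method this article uses) is to store only the difference between consecutive snapshots. The plain-Python sketch below diffs two snapshots keyed by primary key and emits `INSERT`, `UPDATE`, and `DELETE` records; unchanged rows produce nothing. The function name, record layout, and `version` field are assumptions for illustration. Recent DLT releases also provide `dlt.apply_changes_from_snapshot()` for applying snapshots directly; check the documentation for your runtime before relying on it.

```python
from typing import Any

Row = dict[str, Any]


def diff_snapshots(previous: dict[Any, Row], current: dict[Any, Row], version: int) -> list[dict[str, Any]]:
    """Return the change records that turn snapshot `previous` into `current`.

    Unchanged rows produce no record, so appending the result to a bronze
    table grows it with the volume of change rather than the snapshot size.
    """
    changes: list[dict[str, Any]] = []
    for key, row in current.items():
        if key not in previous:
            changes.append({"key": key, "op": "INSERT", "version": version, "data": row})
        elif previous[key] != row:
            changes.append({"key": key, "op": "UPDATE", "version": version, "data": row})
    for key in previous:  # iterate in insertion order for deterministic output
        if key not in current:
            changes.append({"key": key, "op": "DELETE", "version": version, "data": None})
    return changes


day1 = {1: {"city": "London"}, 2: {"city": "Wilmslow"}}
day2 = {1: {"city": "Paris"}, 2: {"city": "Wilmslow"}, 3: {"city": "Turin"}}
day3 = {1: {"city": "Paris"}, 3: {"city": "Turin"}}

bronze = diff_snapshots({}, day1, version=1)
bronze += diff_snapshots(day1, day2, version=2)
bronze += diff_snapshots(day2, day3, version=3)
for rec in bronze:
    print(rec["version"], rec["op"], rec["key"])
```

Across the three snapshots (seven rows in total), the bronze table receives five change records; with larger, mostly stable snapshots the savings grow accordingly, at the cost of comparing each new snapshot against the previous one.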

