Caching In Apache Spark Best Practices And Implementation By Chai

Caching is a powerful optimization technique in Apache Spark that can significantly improve the performance of your data processing tasks. This article explains what caching is, when to use it, how to implement it, and how to remove objects from the cache when they're no longer needed. It focuses on DataFrame caching in particular: why it matters, how to implement it in Spark, and best practices for getting the most out of it.

Spark performance techniques, broadly speaking, include caching data, altering how datasets are partitioned, selecting the optimal join strategy, and providing the optimizer with additional information it can use to build more efficient execution plans. Persistence boosts performance by storing RDDs and DataFrames in memory or on disk. This guide covers the practical use of Spark's cache() and persist() methods: when to cache, which strategy to use, and why unpersisting matters. Applied well, these techniques can lead to significantly faster job completion times and more efficient resource utilization.

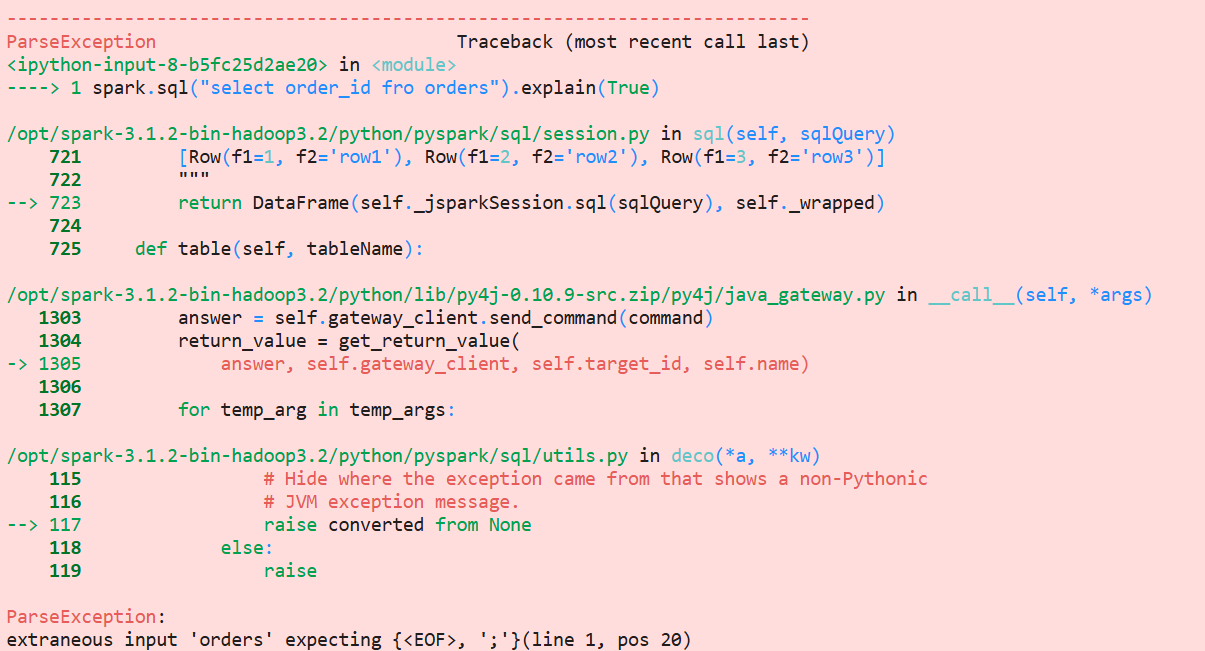

Effective cache management in Spark is more art than science. By carefully considering which datasets to cache and implementing proper cleanup strategies, you can significantly improve performance. Caching and persistence store intermediate or frequently used data in memory or on disk, reducing the need for recomputation.

By assigning the cached data to a new DataFrame, you can easily view the analyzed plan and confirm that it reads from the cache. If you do not assign the cached result to a new DataFrame, this is harder to see, as the example in the article shows, and you might think the cache is used when it is not.

When it comes to optimizing Apache Spark performance, two of the most powerful techniques are partitioning and caching. These strategies can significantly reduce processing time, memory usage, and cluster resource consumption, making your Spark jobs faster, more scalable, and more cost-efficient.

