Caching in a Nutshell Part 2 | System Design

In this video, I explain caching strategies in a large-scale system and touch on some cache eviction policies. For Part 1, see "Caching in a Nutshell Part 1 | System De…".

Caching means storing frequently accessed data in a location that is quick and easy to access. Its purpose is to improve a system's performance and efficiency by reducing the time it takes to retrieve that data.
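An eviction policy decides which entry to drop when a cache is full. The sketch below is a minimal illustration that assumes least-recently-used (LRU) eviction, which is one common policy; the source does not say which policies it covers. The `loader` function is a hypothetical stand-in for the slow original data source.

```python
from collections import OrderedDict
from typing import Any, Callable, Hashable


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used entry."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        # OrderedDict keeps keys ordered from least to most recently used.
        self._data: "OrderedDict[Hashable, Any]" = OrderedDict()

    def get(self, key: Hashable, loader: Callable[[Hashable], Any]) -> Any:
        """Return the cached value, or call loader(key) on a miss and cache it."""
        if key in self._data:
            self._data.move_to_end(key)  # hit: mark as most recently used
            return self._data[key]
        value = loader(key)  # miss: go to the original data source
        self._data[key] = value
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # evict the least recently used key
        return value


calls = []


def slow_lookup(key: str) -> str:
    """Hypothetical slow source; records every call so misses are visible."""
    calls.append(key)
    return key.upper()


cache = LRUCache(capacity=2)
cache.get("a", slow_lookup)  # miss
cache.get("b", slow_lookup)  # miss
cache.get("a", slow_lookup)  # hit; "a" becomes most recently used
cache.get("c", slow_lookup)  # miss; cache is over capacity, so "b" is evicted
print(calls)  # ['a', 'b', 'c']
```

Only three calls reach the slow source for four reads; the repeated read of "a" is served from memory.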

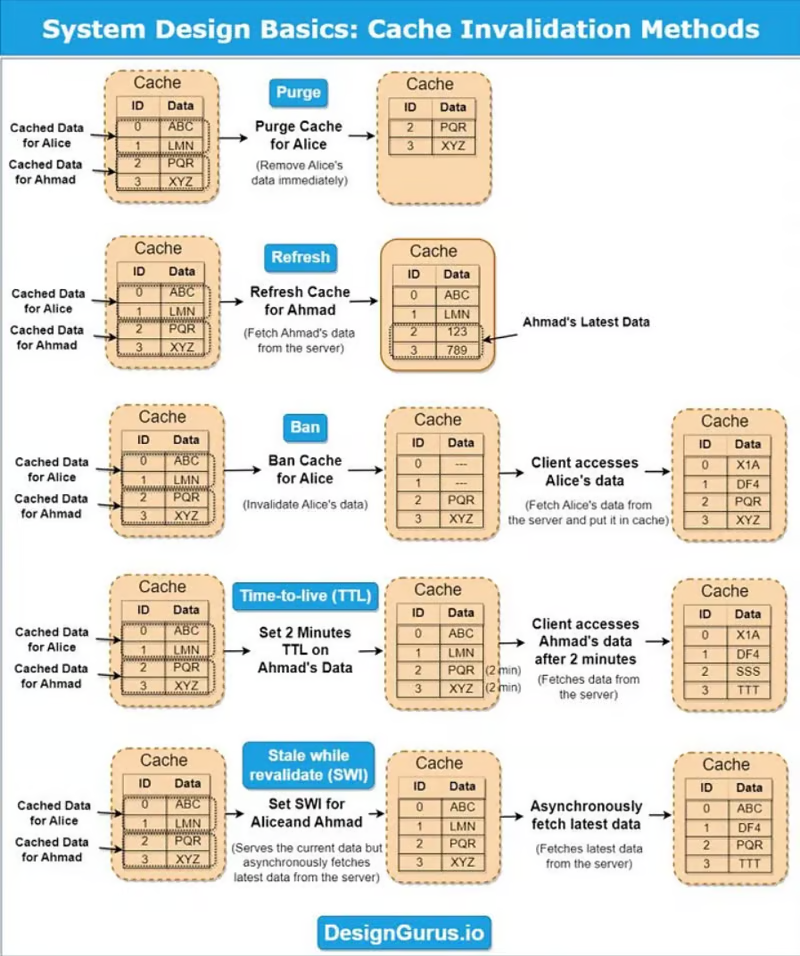

This blog takes a step-by-step approach to applying caching at different layers of a system to improve scalability, reliability, and availability. Caching is one of the most powerful strategies for improving performance and handling high load: by storing frequently used or expensive-to-fetch data in a faster storage layer, we can dramatically reduce latency and the load on backend systems.

System design is a crucial aspect of software engineering, but it can be challenging because of the complex terminology in the system design resources you typically find online. To design effective systems, it's essential to understand the tools and terms used to solve specific problems.

In this article, we focus on the techniques used to populate and maintain caches. Depending on the kind of data the system handles, we can ask the following questions when choosing a caching technique: How many times is the data read, and how many times is it written? How is the load split between reads and writes?
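The read/write questions above often lead to a choice between a few well-known population strategies. The sketch below assumes three common ones that the text does not name explicitly: cache-aside (lazy loading) for reads, write-through for writes, and invalidate-on-write as an alternative. `Database` and `CachedStore` are hypothetical classes; plain dictionaries stand in for a real database and cache.

```python
from typing import Dict, Optional


class Database:
    """Hypothetical slow backing store; counts reads so cache hits are visible."""

    def __init__(self) -> None:
        self.rows: Dict[str, str] = {}
        self.reads = 0

    def read(self, key: str) -> Optional[str]:
        self.reads += 1
        return self.rows.get(key)

    def write(self, key: str, value: str) -> None:
        self.rows[key] = value


class CachedStore:
    def __init__(self, db: Database) -> None:
        self.db = db
        self.cache: Dict[str, str] = {}

    def get(self, key: str) -> Optional[str]:
        # Cache-aside: check the cache first, fall back to the DB on a miss,
        # then populate the cache so later reads skip the DB.
        if key in self.cache:
            return self.cache[key]
        value = self.db.read(key)
        if value is not None:
            self.cache[key] = value
        return value

    def put_write_through(self, key: str, value: str) -> None:
        # Write-through: update the DB and the cache together.
        self.db.write(key, value)
        self.cache[key] = value

    def put_invalidate(self, key: str, value: str) -> None:
        # Invalidate-on-write: update the DB and drop the cached copy;
        # the next read repopulates it lazily.
        self.db.write(key, value)
        self.cache.pop(key, None)


db = Database()
store = CachedStore(db)
store.put_write_through("user:1", "Ada")
store.get("user:1")
store.get("user:1")
print(db.reads)  # 0, because write-through already populated the cache
store.put_invalidate("user:1", "Grace")
print(store.get("user:1"), db.reads)  # Grace 1
```

Roughly speaking, write-through keeps read-heavy data warm in the cache, while invalidate-on-write avoids caching values that may never be read again; which fits better depends on the read/write mix the questions above uncover.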

Learn about caching and when to use it in system design interviews. In these interviews, caching comes up almost every time you need to handle high read traffic: your database becomes the bottleneck, latency starts creeping up, and the interviewer is waiting for you to say the word "cache." Caching is a vital system design strategy that improves application performance, reduces latency, and minimizes the load on backend systems. Understanding different caching strategies, policies, and distributed caching solutions is essential when designing scalable, high-performance systems.

In Caching Part II, we go deeper into caching, the different types of caching, and how it works. Caching is a technique in which a system stores frequently accessed data in a temporary storage area, known as a cache, so that future requests for the same data can be served quickly. This temporary storage is typically faster than the original data source, which makes it well suited to improving system performance.
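Because a cache is temporary storage, entries are often given a time-to-live (TTL) so that stale data eventually gets reloaded from the source. The sketch below assumes a simple TTL policy (an illustration, not a method taken from the text); `TTLCache`, its `clock` parameter, and the `query` function are hypothetical names. The clock is injectable so expiry can be demonstrated without waiting.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Cache whose entries expire ttl_seconds after they are stored."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested deterministically
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: Hashable, loader: Callable[[Hashable], Any]) -> Any:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:  # present and not yet expired
            self.hits += 1
            return entry[1]
        # Missing or expired: reload from the original source and restamp.
        self.misses += 1
        value = loader(key)
        self._entries[key] = (now + self.ttl, value)
        return value


def query(key: Hashable) -> str:
    """Hypothetical stand-in for a slow database query."""
    return f"row for {key}"


fake_now = [0.0]
cache = TTLCache(ttl_seconds=60, clock=lambda: fake_now[0])
cache.get("q", query)  # miss: loads from the "database"
cache.get("q", query)  # hit: served from the cache
fake_now[0] = 61.0
cache.get("q", query)  # entry expired, so this is a miss again
print(cache.hits, cache.misses)  # 1 2
```

A shorter TTL keeps data fresher at the cost of more trips to the database; a longer one absorbs more read traffic but tolerates staler values.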
